tl;dr

There is a broad tendency to reject reality today. It can be seen across society and our institutions. One of the big reasons is that reality is unrelenting and brutal. Reality bites back. It has become fashionable to define reality according to the wishes of leadership, almost willing their desires into being. Often, the means of wishing this reality into being is a virtual system. The world is online, and the online image can be crafted to match those desires. In fact, the public engages so heavily online that it encourages this. The same is true in science, where virtual simulations or AI provide a compelling view that need not match actual reality. The problem with this approach is that it avoids the innovation and problem-solving needed for progress. The harsh feedback from reality is needed to adjust course and push back on wrong approaches. Too often today, we accept the wrong and reject the evidence of reality.

“Reality continues to ruin my life.” ― Bill Watterson

The Attraction of Virtual

“The real world is where the monsters are.” ― Rick Riordan

The escape from reality is driven by our increasingly online lives: social media, email, and the internet. This seems to have changed how leadership approaches dealing with reality. By dealing with it, I mean ignoring it. They increasingly look to shape the online narrative and worry about their lies, issues, and problems being exposed there. In the real world, all of this exists but can be hidden. They fear the capacity of the online world to amplify the signal of reality.

I have seen leadership work to shape the online narrative and avoid any subtlety or nuance of reality. This is a troubling trend that leads to a de facto ignorance of reality. They are systematically ignoring problems that exist. This is done simply by shaping the messaging so that problems are ignored instead of identified and confronted. We as a society need to overcome this, or we will be swallowed by this virtual world. Then the real world will bite back in a way that could be fatal.

I realize that my own writing online has pushed back against this trend. The reaction of my former workplace to my writing is evidence of how uncomfortable management is with the actual world. The result is an astonishing degree of hidden power and lack of transparency. In a sense, the lack of transparency is now worse in the online world than it was before. Today, it is clear that societal leadership is hiding a lot. The prime example is the behavior and actions of the “Epstein class”. There we see how the rich and powerful act behind closed doors, and it’s appalling. It’s criminal and unethical. The same kind of unethical and damning behavior is present to lesser degrees throughout the rest of society. All of this seems to be, in part, a consequence of the dominance of this online, virtual world.

Science Cannot Be Virtual

The conduct of science has been infected by this. One key symptom of the problem is the prevalence of uncertainty quantification (UQ) over verification and validation (V&V). UQ has become dominant lately, while V&V is fading from practice. The reason is simple. UQ only needs a virtual model to give voluminous results. V&V imposes a harsh reality on models. V&V finds problems and shortcomings that require plans to change. UQ just gives results galore. Why invite problems with V&V when UQ makes you look great?

The answer is that V&V is the scientific method, and UQ without V&V is not.

Behind this is a desire for a purely virtual world, one without any connection to reality. Verification and validation are both connections to reality: verification to an analytical, mathematical reality, and validation to experiment. Both are hard and unyielding. Both find problems and demand progress and improvement. More recently, machine learning, particularly AI, has joined UQ. Both strongly push toward virtual worlds that have no connection to reality (other than training data). They give results without the difficulties that come with reality, whether experimental or analytical.

All of this fits together into a flywheel. Without the notion of reality, the flywheel falls apart. Reality provides feedback on the efficacy of both our theory and our knowledge of the world. It also provides surprises and the necessary push to advance things. It tells us whether things work or don’t, and makes sure that advances in science are earnest and actually correct. What I’ve seen is that these notions are increasingly being rejected.

“If there’s a single lesson that life teaches us, it’s that wishing doesn’t make it so.” ― Lev Grossman

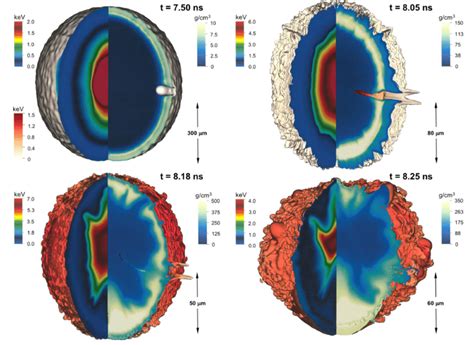

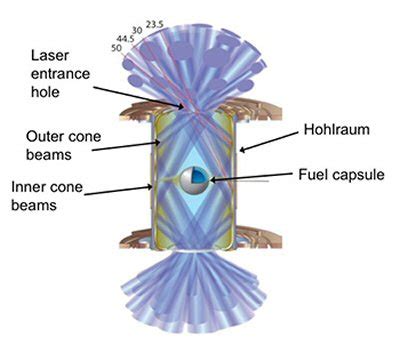

For example, in computational work, there is a seeming ubiquity and embrace of UQ as an activity unto itself. UQ should be a key part of validating a model. Instead, UQ is often untethered from any sort of reality, with no verification or validation applied to it. It simply exists by itself. It is the output of a virtual world, produced without the feedback of reality, and it will produce results that have absolutely no bearing on anything we see. There are good examples of this in the literature. Perhaps one of the most august comes from the National Ignition Facility (NIF). There, they did an extensive UQ study with their simulation code before they had even fired the lasers and attempted a fusion experiment. They produced a magnificent body of work showing the possibilities of what the laser would produce. This work yielded a probability density function (PDF) of the outcomes, with target yield ranging from 900 kJ to 9 MJ. Then they ran the actual experiment, and the result was a 300 kJ shot. This massive UQ study, with an incredible UQ framework and pipeline, supercomputers, and a cutting-edge simulation code, produced a PDF spanning an order of magnitude that did not even contain the real outcome. It showed how useless UQ is without V&V. Reality crushed the virtual.
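To make the point concrete, here is a minimal Python sketch of the most basic validation question one can ask of a UQ result: does the measured outcome fall inside the predicted range? It uses only the figures quoted above (a predicted yield spanning 900 kJ to 9 MJ and a measured 300 kJ shot). Treating the PDF as a simple interval is a simplifying assumption; a real validation study would also weigh experimental uncertainty and the shape of the distribution. The names `PredictedRange` and `validation_check` are hypothetical, chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PredictedRange:
    """Hypothetical container for the span of a UQ prediction, in kJ."""
    low_kj: float
    high_kj: float


def validation_check(pred: PredictedRange, measured_kj: float) -> dict:
    """Compare one measurement against a predicted range (simplified: no error bars)."""
    return {
        # Did reality land inside the predicted range at all?
        "inside": pred.low_kj <= measured_kj <= pred.high_kj,
        # How wide the prediction was, as the ratio of its bounds.
        "span_ratio": pred.high_kj / pred.low_kj,
        # Factor by which the measurement fell below the lower bound (1.0 if not below).
        "shortfall": pred.low_kj / measured_kj if measured_kj < pred.low_kj else 1.0,
    }


if __name__ == "__main__":
    # Figures quoted in the text: predicted 900 kJ to 9 MJ, measured 300 kJ.
    result = validation_check(PredictedRange(low_kj=900.0, high_kj=9000.0), 300.0)
    print(result)  # {'inside': False, 'span_ratio': 10.0, 'shortfall': 3.0}
```

Even a prediction spanning a factor of ten missed the measurement by a factor of three below its lower bound. No amount of sampling inside the model reveals that; only the comparison with the shot does.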

It would be years before they ultimately got the targets to actually perform within the original PDF. What this really said was that the initial study was deeply flawed. If you look at the study, there is no hint of verification work either. The code was never attached to the reality of interest. When it was, the results were damning! There was no check of whether the code actually reproduced the theory correctly, and validation work was either unavailable or not applied to keep the work tethered to reality. Only when the experiments were actually conducted did they discover important details. This entire troubling episode exists in the current world, where honest and hard-nosed peer review is in free fall. The virtual world much more readily gives people the answers they want instead of the answers they need. Without hard-nosed peer review providing feedback to the overall process, the entire enterprise risks heading in the wrong direction, lacking the adjustments needed to keep it in a position to affect actual reality.

In the case of the NIF study, it’s my belief that the modeling was done in a wholly optimistic way. This avoided a number of realities that should have been evident to the people conducting the study. First and foremost among these is the reality of turbulent mixing, which is ubiquitous in nature. Much of the history of fusion capsule design has worked from the premise that this mix could somehow be controlled and tamed. If it could, achieving the conditions for fusion would be far easier than it is in reality. Without validation data to tie them to reality, the modelers simply followed optimism to its usual outcome: results far more optimistic than any reality they would be able to visit. The hope was that the optimistic results would bring money raining down on the program at critical junctures when funding was threatened.

This problem is most acute with AI and the belief that AI can somehow produce massive returns in the scientific world. This perspective definitely needs to be leavened by a good dose of reality. It isn’t that AI can’t be a tool we use to advance science; rather, AI is still embedded within the scientific method and does not change its precepts. The scientific method is ultimately tied to observing and comparing against objective reality in two modes:

- The key mode is observation and experiment, where data from the real world is gathered and examined, often to test or inform theories. For computational modeling, this comparison is validation.

- These theories almost always take the form of mathematics. For computational modeling, comparison with the theory is verification.
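As a minimal sketch of what verification means in practice, assuming a toy problem chosen purely for illustration: solve du/dt = -u with u(0) = 1 using forward Euler, compare against the exact solution e^(-t), and check that the error shrinks at the rate the mathematics predicts (first order for forward Euler). The functions `forward_euler` and `observed_order` are hypothetical names. The technique itself, estimating the observed order of accuracy under grid refinement, is a standard code-verification check of the kind discussed by Oberkampf and Roy.

```python
import math


def forward_euler(decay_rate: float, t_end: float, n_steps: int) -> float:
    """Integrate du/dt = -decay_rate * u from u(0) = 1 to t_end with forward Euler."""
    h = t_end / n_steps
    u = 1.0
    for _ in range(n_steps):
        u += h * (-decay_rate * u)
    return u


def observed_order(n_coarse: int, refinement: int = 2,
                   decay_rate: float = 1.0, t_end: float = 1.0) -> float:
    """Observed order of accuracy from errors on a coarse and a refined grid.

    Compares both numerical answers against the exact solution exp(-decay_rate * t_end):
    p = log(err_coarse / err_fine) / log(refinement).
    """
    exact = math.exp(-decay_rate * t_end)
    err_coarse = abs(forward_euler(decay_rate, t_end, n_coarse) - exact)
    err_fine = abs(forward_euler(decay_rate, t_end, n_coarse * refinement) - exact)
    return math.log(err_coarse / err_fine) / math.log(refinement)


if __name__ == "__main__":
    p = observed_order(100)
    # Theory says forward Euler is first order, so p should be close to 1.
    print(f"observed order = {p:.3f} (theory: 1)")
```

If the observed order drifted away from 1, the code or the problem setup would be wrong in a way that no amount of UQ sampling would ever expose.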

A large part of this dynamic revolves around the focus on supercomputers. As I’ve said many times before, supercomputers are an unabashed good. The concern with them is their priority compared to all other activities. Computational science is always a balance between what computers offer and what the rest of science offers to the entire enterprise of computing results. The current focus on AI only amplifies these concerns, as AI is trained on real-world data but is not tethered to a theory. Thus, in simple terms, mistaking correlation for causation becomes an inevitable outcome. This must be countered by strong theoretical work that provides the feedback needed to make sure we actually understand what we are doing.

The same sort of bullshit optimism is present in programs that promote the extensive use of supercomputing as the ticket to modeling efficacy. We are seeing more of the same bullshit and optimism with AI, where computing is viewed as a one-size-fits-all cure. The actual issues are far deeper scientifically and require a much more balanced approach to get optimal solutions. In today’s world, it seems that if you want funding, you avoid reality. If you want progress and success, reality is something that needs to be wrestled with, like the brutal opponent that it actually is. Unfortunately, today, bullshit gets these institutions better funding than real progress and real success do. We should be wary, as AI is a bullshit factory the likes of which we have never seen before. We are vulnerable to it. What is notable when you look at the science programs associated with AI is that not one iota of V&V is present in the proposed work. It’s all stunts and showboating, with little to no actual science. The injection of V&V would bring the scientific method to bear on them.

“Reality is that which, when you stop believing in it, doesn’t go away.” ― Philip K. Dick

It Goes Past Science: The Trust Trap

“It’s funny how humans can wrap their mind around things and fit them into their version of reality.” ― Rick Riordan

One issue is the present-day appeal of virtual worlds compared to reality. Almost anyone you talk to says that reality sucks today, that it’s really terrible, and that everything feels out of control and doomed. The virtual world offers escape from all this. My concern is that, after years and years of this virtual world, we will no longer be able to deal effectively with reality. Eventually, there will be feedback from reality so brutal that it will undermine and destroy whatever trust remains. This could cause further damage and create a death spiral for the United States. This is true for science and other key institutions. Our government, corporations, and universities are all vulnerable to this.

One of the huge current developments is AI. It is receiving massive levels of investment across society. Science is one area of development where the issues are evident. AI also has incredible potential for business and corporate interests. In all cases, AI is both a huge opportunity and perhaps an even greater danger. We see a relatively uniform approach to improving AI via computing hardware, while the intellectual basis languishes. We are ignoring the high-risk, high-payoff routes to progress. The historical evidence points to mathematics and algorithms as the way to success. The real-world consequences of this approach endanger the health of the economy. The whole AI stack is well marbled with bullshit. The computing-forward approach is questionable as an effective path to improving AI. All the evidence points toward it being grossly inefficient. Thus, the massive investment will not yield an effective payoff. This reality may prove catastrophic, with shades of the dot-com crash and the 2008 economic meltdown.

“Thinking something does not make it true. Wanting something does not make it real.” ― Michelle Hodkin

The misguided focus on computing is a reflection of our societal trust deficit. A better path to AI requires a level of trust we cannot muster today. In reaction to the trust deficit, we follow banal paths that are easy to sell to the public. Lots of computing is simple and seems plausible to the naive layperson. Computers are the tangible and concrete objects associated with modeling or AI. This becomes the simple projection of the virtual onto the real world. The actual path to progress is esoteric and far harder to describe. Computing is a part of this, but it cannot succeed without other investments. This is true whether the scientific enterprise is modeling or developing AI. Today, our efforts completely lack any balance. This lack of balance will doom them both. We lack the trust necessary to succeed.

One of the key issues with corporate behavior is that it keeps driving trust lower. The brutal focus on maximizing shareholder value has powered social media to annihilate societal trust. AI is far more powerful, and the same forces will take trust even lower. We are risking a downward spiral that could be a catastrophe. It is time to turn away from this “doom loop”. We need to take actions to improve trust and defuse the damage from both social media and AI. Triggering an economic meltdown would be damaging both materially and psychologically. It would be real-world consequences reminding our virtual focus of reality’s power. It would be a worthy and brutal reminder of the need for appropriate focus.

The brutality of reality points to the desire of leaders to live in a virtual world they can shape. The virtual world can be steered to strongly confirm their biases. The leaders have a story of success, and the virtual world will confirm it. They work to make sure you aren’t getting new information that would question their truth. This creates a situation where the gap between perception and reality grows larger and larger. When reality finally intrudes, you see a rupture of trust. This has happened over and over during the current era. The consequence is the loss of trust in almost every institution our society relies upon. We all see it in our current politics. I saw it from inside the National Labs.

It also has a more pernicious impact. New information from reality is the source of innovation and progress. It is also the source of inspiration when plans need to change and adapt. Thus, the denial of reality creates stagnation. The difference between stagnation and decline is subtle. Stagnation can easily build up the conditions for decline and decay. The use of earned value to manage science is a clear sign of a lack of trust. In recent years, this concept has been demanded for managing work at the Labs. Earned value is a concept appropriate for construction projects: well-defined work you’ve done over and over again. It is completely inappropriate for anything like science or even cutting-edge engineering. This management model rejects reality and plays into the trap of virtually defined success.

We are constantly seeing the rejection of reality on the part of our leadership in all settings. I saw it regularly in how lab leadership would talk about what was going on internally. All programs were wildly successful. This works as long as they are talking about something you don’t know about personally. Then they would say the same about something you do know about. Suddenly, you are confronted with their bullshit. The correct conclusion is to question everything else they say. If they are bullshitting you about what you know, can they be trusted? The answer, increasingly, is that they can’t. This is the downward spiral of trust.

You see it with various corporations and how they talk about their own products. They are always looking to craft a message to maximize shareholder comfort. Then you see it with politicians who never take responsibility for anything and often outright lie and bullshit their way through everything. They are trying to spin every single event into a frame that they like. No one is confronting the objective reality. The actual truth becomes this game of “hot potato”. The virtual social media world becomes the vehicle for all of this bullshitting. Reality can be rejected through control, memes, and distraction.

This is perhaps most vividly shown in the spin and narrative around the two shootings of civilians in Minneapolis. In both cases, they were outright murders by paramilitary thugs. Instead, the government characterized both victims as terrorists, people who deserved to be executed and deserved their fate. This served the purposes of the administration through a rejection of reality. In all of this rejection of reality, we lose the ability to adjust and change our course, to modify our actions so that bad things stop happening. This is true at every single level. Whether it be the laboratories I worked at, corporations, or the policies of the nation itself, all need adjustment. Without the adjustment that reality provides, they are careening toward even bigger disasters.

“Either you deal with what is the reality, or you can be sure that the reality is going to deal with you.” ― Alex Haley

At the NNSA laboratories, there is the prospect of a renewed nuclear arms race now that the START Treaty has ended. The President has hinted at resuming nuclear testing. A resumption of nuclear testing and/or active development of nuclear weapons means harsh realities are coming. We cannot control or avoid them much longer. Ultimately, we will have to confront these realities in an area where reality has been rejected for a long time. The risk of bad outcomes has escalated to a dangerous level. Today, we have Schrödinger’s nuclear stockpile. By this, I mean it both works and doesn’t work as intended. Until we open that box, we won’t know the answer. We are about to open the box.

Are we ready for this? Not from what I observed. Reality is going to kick our ass. I pray it doesn’t kill us.

“Life is a series of natural and spontaneous changes. Don’t resist them; that only creates sorrow. Let reality be reality. Let things flow naturally forward in whatever way they like.” ― Lao Tzu

References

Oberkampf, William L., and Christopher J. Roy. Verification and Validation in Scientific Computing. Cambridge University Press, 2010.

Haan, S. W., J. D. Lindl, D. A. Callahan, D. S. Clark, J. D. Salmonson, B. A. Hammel, L. J. Atherton et al. “Point design targets, specifications, and requirements for the 2010 ignition campaign on the National Ignition Facility.” Physics of Plasmas 18, no. 5 (2011).