tl;dr

Brute-force computing power has become a one-size-fits-all plan for all computational progress. This is true for classical computational science and now for AI. Computers market themselves to politicians and the business community. I’ll freely submit that more computing power is always good. These computers are also enormously expensive. Plus, they are big and loud with lots of blinking lights, so idiots love them too. The approach for AI follows the path of the exascale program, which was declared to be wildly successful!

The key is seeing what is not there. What you do with a computer matters more than the computers themselves. That is true for AI and true for computational science. We need to see what was not done and not invested in while we focused on hardware: domain knowledge, experiments and tests, mathematics, algorithms, V&V, UQ, and applications. All of these have been diminished and made subservient to the hardware focus. The hardware focus will eventually bring ruin.

“The reasonable man adapts himself to the world: the unreasonable one persists in trying to adapt the world to himself. Therefore all progress depends on the unreasonable man.”

— George Bernard Shaw

What We Focus On

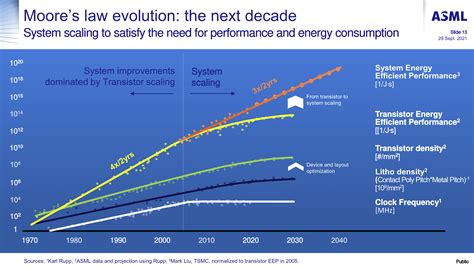

I’ve spoken out about our obsession with computers before. For most of my professional life, computing hardware has been the emphasis. This emphasis is short-sighted, especially now. The gains from hardware keep shrinking, with more effort needed for less benefit. This all stems from the death of Moore’s Law in all its forms. Moore’s Law was the exponential growth in computing power from the 1960s to about 2010. Our overemphasis on hardware was a dumb idea 30 years ago. It is even dumber now. The dumbness stems from a lack of balance. Computers are great and important. More computing power is always good. However, what you put on them matters even more. This priority has been lost as we mourn the “free” progress Moore’s Law once delivered.

This lack of balance has been present for a long time, and our deficit is huge. It has played a role in the setting sun of American scientific dominance. We have failed to make sensible investments in science for decades. Computing has been one of our biggest investments. This investment has been focused on fighting reality and physics. It is guilty of failing to recognize the breadth of the computing ecosystem. Thus, our idiotic strategy has been an accelerant to the decline and one of the key reasons for it. There is a fair amount of corporate welfare, too.

Computing folks always talk about computing as an ecosystem that includes software and hardware. It is like an ecosystem. Predator-prey balance matters there and can wax and wane. Our current system is like the deer population in much of the USA: unhealthy and doing damage. In this analogy, the wolf packs and cougars are missing. The predators are like algorithms, physics, and applications. They are difficult to deal with and strain human systems, but they are essential. Without the balance, the ecosystem is out of sync. Worse yet, we are adopting the same ideas for AI. The strategy there is hardware-focused. The rest of the ecosystem has been slaughtered.

My last blog post talked about part of what was missing: V&V. Now I’ll talk about what is in its place. Hardware. Software that the hardware demands for basic functionality. Algorithms that depend on the hardware. Everything else is missing. The further you move from the hardware toward its use, the less effort is invested. Yet closer to applications, the work gets harder and more failure-prone. This is key! Without trust, those failures cannot be accepted. They become proof that withdrawing trust was justified. Yet failures are the epitome of learning, and research is all about learning. This is why American science is faltering.

“Part of the inhumanity of the computer is that, once it is competently programmed and working smoothly, it is completely honest.”

— Isaac Asimov

Without failure, knowledge stagnates. This is exactly what has been wrought. We need to recognize the stagnation. Worryingly, we’ve made the same choices with AI. We need to take a different path. We need to admit how badly we’ve fucked this up. Instead, our leaders declare it all a massive “success.” It isn’t. We are wasting effort and money on hardware that could be better invested in other parts of the endeavor. We need to learn from this massive failure. This is not an argument against investing in hardware. That needs to happen. What we need is a better balance and care for the entire ecosystem. For decades we have fed the bottom of the ecosystem while slaughtering and starving the top.

“Computers are useless. They can only give you answers.”

— Pablo Picasso

Computers and Money

The emphasis on computing has been a constant feature during most of my professional life. We chose that path when nuclear testing stopped. Having stopped the real-world tests, we invested in the part of the virtual world farthest from testing. The sales pitch was that computers would replace the testing. It is not a rational or logical approach. The main reason is political. Back in the 1990s, our leaders needed to sell “no testing” to the Nation. Two things made hardware sell: politicians can see and touch computers, and computers are bought from companies. Computers are big, have lights, and make lots of noise. Politicians cannot see codes, algorithms, math, or physics. Moreover, companies have money, and our politics runs on money. Back then, software was intangible and the internet was barely a thing. So they sold the computers.

“Growth for the sake of growth is the ideology of the cancer cell.”

— Edward Abbey

Within a few years, scientists identified the gaps in this plan. The hardware was great, but the science was missing. To have a credible virtual deterrence, the science needed to be “world-class.” There was a recognition that what the computers computed mattered. It was not enough to simply have super-fast computers. A faster computer is not automatically better unless it also does things right. Furthermore, the reality check that kept simulations honest and faithful to the physics, the tests themselves, was gone. That gap could not be filled with bits and bytes. It could only be filled with physics, math, and refined practice. They added things to the program to fill the gap. Science actually had a moment in the sun.

It was a short-lived sunny moment.

This did not last. Monied interests became even more powerful. Science continued to fade and decline. The narrative of hardware as the path to success took hold again. Science is hard. Hardware also came with a message of crisis. Moore’s Law had held for decades, and progress in computing was sort of free. It just happened. Now that progress would take focused effort. Ultimately, physics would take over, and no amount of money would yield hardware progress. In the meantime, you just had to spend a shitload of money to buy more hardware. Computer companies love that! Their lobbyists were flush, too, and greased the skids for hardware.

“Remember the two benefits of failure. First, if you do fail, you learn what doesn’t work; and second, the failure gives you the opportunity to try a new approach.”

— Roger Von Oech

The Exascale Computing Project

This takes us to late 2014 and 2015. My blog was in its infancy. We got a new program to feed the Labs and sell computers: the push to make the first exascale computer. This would be a computer that can perform an exaflop, 10^18 floating-point operations per second. Let’s just submit that the quoted speed of computers is a big dose of bullshit on the face of it. Let me explain. We don’t get an exaflop on any application we give a fuck about.

The speed is measured with a benchmark called LINPACK, which solves a dense system of linear equations by LU factorization. It is almost always the best-case scenario for hardware, with lots of floating-point operations per memory reference. Thus the benchmark shows nearly any computer at its best. More importantly, it does not reflect what happens in the vast majority of applications, especially our most important ones. So the benchmark is basically marketing, not science. It has distorted our view of hardware for decades. Someone should have called bullshit on this long ago. It’s a fucking farce.
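To make the “floating-point operations per memory reference” point concrete, here is a rough Python sketch comparing idealized arithmetic intensity (flops per byte moved from memory) for dense LU factorization, the kernel LINPACK exercises, against a sparse matrix-vector product, a common building block in simulation codes. The counts are leading-order estimates under loudly simplifying assumptions: 8-byte doubles, CSR storage with 4-byte column indices, each matrix entry read from memory exactly once, and caches and vector traffic ignored. The function names are hypothetical, written just for this illustration.

```python
# Idealized arithmetic intensity (flops per byte moved from memory).
# Assumptions for illustration only: 8-byte doubles, each matrix entry read
# from memory exactly once, caches and vector traffic ignored.

BYTES_PER_DOUBLE = 8
BYTES_PER_INDEX = 4  # 32-bit column index in CSR storage (assumed)


def dense_lu_intensity(n: int) -> float:
    """LU factorization of an n x n dense matrix (the LINPACK-style kernel)."""
    flops = (2.0 / 3.0) * n**3                # leading-order flop count for LU
    bytes_moved = n * n * BYTES_PER_DOUBLE    # read the matrix once
    return flops / bytes_moved


def sparse_matvec_intensity(nnz: int) -> float:
    """Sparse matrix-vector product y = A x in CSR format with nnz nonzeros."""
    flops = 2.0 * nnz                         # one multiply + one add per nonzero
    bytes_moved = nnz * (BYTES_PER_DOUBLE + BYTES_PER_INDEX)
    return flops / bytes_moved


if __name__ == "__main__":
    for n in (1_000, 100_000):
        print(f"dense LU, n={n:>7}: {dense_lu_intensity(n):10.1f} flops/byte")
    print(f"sparse matvec:          {sparse_matvec_intensity(10**6):10.3f} flops/byte")
```

Under these assumptions the dense case grows like n/12 flops per byte, so bigger benchmark problems look ever better, while the sparse case stays near 1/6 flop per byte regardless of size. Real applications are more varied than this toy comparison, but the gap illustrates how LINPACK flatters hardware.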

Progress in the power of hardware had started to wane. The curve of progress that is Moore’s Law was bending downward. It was a crisis! We needed an exascale computer to fix it. Computers by themselves are the core of our scientific and military deterrence. Plus, science is even harder. The USA doesn’t do hard any more. Funding science doesn’t do shit for corporate interests, at least not for corporate interests in the next quarter. Science is only going to help the future, not a specific company. Science has no lobbyist. Neither does the future. So fuck science and the future.

The answer to the need to fuck science and the future is the Exascale Computing Project (ECP). It was a really huge waste of money. Of course, no one ever says this. No one ever admits what is really going on or why. This is simply the inevitable outcome. Sure, we got faster computers, but we also hollowed out the ecosystem. In the ecosystem analogy, it was a slaughter of apex predators. In addition, we encouraged the deer to breed like flies.

It makes sense to explain how such a “successful” program could be such an ensemble of bad ideas. The stupidity was baked in from the jump. The whole program was designed to be unbalanced. It starved the science. The science part was treated as already done; it was just a passthrough. The new computers would just magically make science better! No need for V&V either. All the calculations would magically be correct by the power of computers. No need to check the quality. V&V would just find problems, and no one wants that.

“We don’t really learn anything properly until there is a problem, until we are in pain, until something fails to go as we had hoped … We suffer, therefore we think.”

— Alain de Botton

It reflected a combination of naive and superficial views of what computational science is. It held misconceptions, and outright fabrications, about how to ensure quality. Alone, a faster computer will not produce quality results unless you do many things correctly. ECP just assumed all of that was in hand. Further, it assumed that the faster computer was all that was needed. The basic premise was that faster computers would naturally improve everything. It only funded things that were necessary to use the new computers. The applications, rather than being the focus of the work, were simply marketing. They were examples of all the awesome shit the faster computer could do. Better science would just happen by osmosis. No domain science was really needed.

ECP is done. It was declared a “success.” So why belabor these points at all? Because we are taking the same approach with AI.

Fervor About AI

“The question of whether a computer can think is no more interesting than the question of whether a submarine can swim.”

— Edsger W. Dijkstra

It’s hard to separate this trend from the general sense that the United States is overly focused on the industrial side of things. These computers have increasingly become big-ticket purchases for industry and government. You might have noticed that computers and “data centers” have recently become a huge focus for AI. NVIDIA has become the richest company in the world by selling computer chips. It is clear that the funded path for improving AI is faster (bigger, more expensive) computers. There is almost nothing else on the map. ECP paved this road, and it’s an even dumber road for AI.

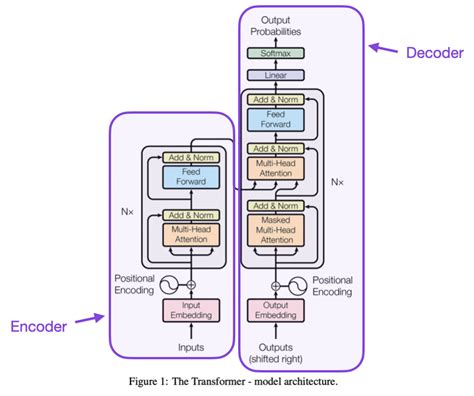

This is true to a far greater degree than it was for ECP. While the value gained per unit of computing power scaled terribly in ECP, it scales even worse for AI. AI improves far more slowly with computing power than scientific applications do. For ECP, the most optimistic strategy needed more than an order of magnitude more computing to double accuracy. For AI, the computing needed to do the same is 100-1000X. All of this with Moore’s Law dead. AI is running out of data, too. It has already eaten the entire internet. In both cases there is a stunning lack of recognition of what drives progress: algorithms and domain science. This entire moment is driven by an algorithmic breakthrough, the Transformer. Computing had reached critical mass to take advantage of it, but it was not the reason for the phase change.
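The arithmetic behind ratios like these can be sketched with two simple models, both assumptions for illustration rather than a derivation from this post. For a mesh-based simulation in d dimensions with explicit time stepping and convergence order p, error shrinks like h^p while cost grows like h^-(d+1), so halving the error costs 2^((d+1)/p) times more compute. For AI, if error follows a power law in compute C, error ∝ C^-α, halving it costs 2^(1/α). The parameter values below (first-order convergence in 3D, typical of problems with shocks and discontinuities; α of 0.15 and 0.10) are hypothetical choices, not measured values, and the function names are invented for this sketch.

```python
# Compute multiplier needed to halve the error under two simple scaling models.
# Both models and all parameter values are illustrative assumptions.


def simulation_cost_factor(dims: int, order: float) -> float:
    """Mesh-based simulation with explicit time stepping (hypothetical model).

    Refining the mesh spacing h also shrinks the stable time step, so cost
    grows like h**-(dims + 1) while error shrinks like h**order. Halving the
    error therefore multiplies cost by 2**((dims + 1) / order).
    """
    return 2.0 ** ((dims + 1) / order)


def power_law_cost_factor(alpha: float) -> float:
    """Error ~ C**(-alpha) in compute C (hypothetical model).

    Halving the error multiplies compute by 2**(1 / alpha).
    """
    return 2.0 ** (1.0 / alpha)


if __name__ == "__main__":
    print(f"3D simulation, first order: {simulation_cost_factor(3, 1.0):6.0f}x")
    for alpha in (0.15, 0.10):
        print(f"power law, alpha = {alpha:.2f}: {power_law_cost_factor(alpha):6.0f}x")
```

Under these assumptions, first-order convergence in 3D costs 16x per halving of error. Exponents of 0.15 and 0.10 give roughly 100x and 1000x, bracketing the range quoted above; smaller exponents would be worse still.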

AI also needs domain knowledge, especially for science. We also have a profound lack of understanding of where answers come from. Mistaking correlation for causation is a pathology central to AI. False positives are a very real danger. Indeed, this could partly explain hallucinations. Model training and bias probably contribute too, along with the models’ tendency to bullshit. All of this demands V&V. Approaching AI with profound doubt and questioning its results would simply be common sense. There is a huge need for deep applied mathematics to help us comprehend AI better. Applied math has been declining for decades. That is a horrible trend for computational science. It may be a fatal trend for AI.

With the major DOE program, Genesis, none of the healthy balance is seen. It simply amplifies the imbalances present under exascale. The whole program is a bunch of stunt projects. Core science, algorithms, applied math, and V&V are all absent. The whole thing smells just like ECP. The aroma is a stench.

“Each day we take another step to hell,

Descending through the stench, unhorrified”

— Charles Baudelaire

We Have Failed in Advance

Earlier, I talked about failure. It is essential. We are engaged in systemic failure that provides no lessons. If one learns and improves on the basis of failure, success is the usual outcome. ECP is really just failure. Full stop. We are structuring our AI future just like ECP. It is a failure by construction. Yet within our current incentive and value system, both are a success. This is simply because of money. These projects get funded and make people rich. Success is not knowledge; it is just funding. It is a value system that leverages funding without investing in the future. The deer are breeding like crazy, and we are driving the predators to extinction.

“Someone’s sitting in the shade today because someone planted a tree a long time ago.”

– Warren Buffett

The future has no constituency. The only future we seem to care about is the next quarter. We’ve seen this with how climate change is being ignored. There, inconvenient facts are simply brushed aside. The same is happening with all this computational stuff. The terrible scaling laws mentioned above were ignored in ECP. The even worse scaling laws for AI are being ignored now. They are such a bummer. The difficult work needed to advance algorithms, understand technology, and find problems is all ignored. It is too hard. Progress would likely be uneven. We cannot manage hard things that pay off in decades instead of months. We have failed in the worst way possible. We have failed at the starting line.

“Between stimulus and response there is a space. In that space is our power to choose our response. In our response lies our growth and our freedom.”

— Viktor E. Frankl