tl;dr

There is a sense of stagnation in progress for numerical methods for hyperbolic systems (gas dynamics). There are reasons for the relative stasis. To some extent, these methods are a victim of their own success. There are some really deep, conflicting priorities for methods in places I used to work. On the positive side, the conditions for nuclear fusion are exceedingly challenging to compute. You want to avoid shock waves. Shock waves are also impossible to avoid. With shock waves, conservation is essential unless the shock is explicitly tracked. For fusion conditions, conservation is generally disregarded because flows are desired to be adiabatic, thus shockless and smooth. Work to satisfy both priorities simultaneously is completely lacking. It would seemingly be important to pursue, but it’s not. We have simply surrendered to this as a challenge.

So let’s peel this onion.

“Knowledge has to be improved, challenged, and increased constantly, or it vanishes.” ― Peter Drucker

Conflicting Priorities

The national labs I used to work at solve a host of extremely challenging problems. These are high-energy-density problems with some really challenging conditions to consider. These involve exotic conditions and difficult processes to engineer. Canonically, explosions and shock waves are ever-present. Amongst the most difficult things to produce are the conditions for nuclear fusion. Computational tools are necessary and ubiquitous for the design and analysis of these technologies. As I’ve noted before, these labs are the origin of the technology known as computational fluid dynamics (CFD). The past still rules choices today, and the labs are a bit of a scientific cul-de-sac. The cultures resist outside ideas. Security and other conditions are increasingly isolating the labs from the World.

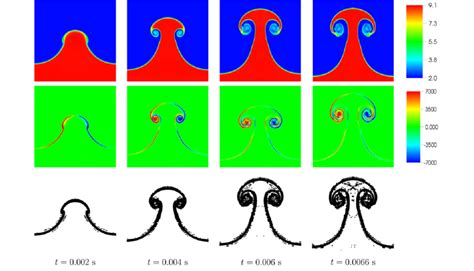

The two big priorities for the labs above are seemingly in conflict with one another. Not seemingly, they are in conflict! To compute shock waves, there are two approaches that have been successful. One is shock tracking. In this approach, the evolution of the shock wave is explicitly computed and updated. This was the first approach taken at the labs in computations done in World War 2. It is exceedingly complex, especially in more than one dimension. Shock capturing and artificial viscosity were invented as an alternative. Shock capturing dominates because of its generality and relative simplicity. It allows the examination of complex engineered systems.

The methods developed initially for shock capturing were in the Lagrangian frame of reference. These methods were quite successful, but have some limits. These methods are not generally conservative of energy. The internal energy equation is evolved. One mantra I have to repeat over and over is that the internal energy equation is not a conservation law. It is an evolution equation. To get a conservation law, the total energy must be conserved. In the Lagrangian frame, the conservation errors are small, being close to negligible. As soon as you leave the Lagrangian frame, these errors become large and have negative consequences. You get the wrong speed of propagation for shocks unless you are intentional.

“Don’t handicap your children by making their lives easy.” ― Robert A. Heinlein

It has been shown that conservation is essential for computing shock waves properly. This is the Lax-Wendroff theorem. Shocks are weak solutions of the equations, and conservation is essential to getting a weak solution. There is an additional caveat: proper dissipation is needed to obtain the correct weak solution. Weak solutions are not unique, and one must select the physical one. For most of CFD, this conservation is essential. Most computations for systems with shocks are conservative. Outside the lab,s developments adhere to its dictum. Inside the NNSA labs, not so much. The Lax-Wendroff theorem was developed at Los Alamos. For decades, it was just ignored there. That is part of the story here, and the challenge is to stop ignoring it.

The simple explanation of why conservation is ignored is available. In brief, the Labs are interested in a wide range of very high-Mach-number flows. If the Mach number is very high, the flow’s energy is dominated by kinetic energy. If one evolves total energy, the internal energy is found via a subtraction of two large numbers, e = E_t – K, where K = \frac{1}{2}\sum_i u_i^2. This can be error-prone and produce errors that can be quite important for fusion conditions. This gets to the resistance of the Labs for conservation form. For successful fusion designs, these errors are intolerable. (wordpress math isn’t working today)

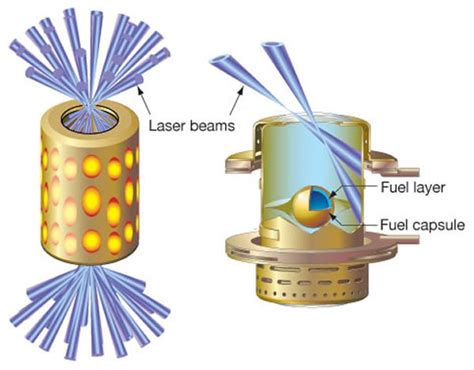

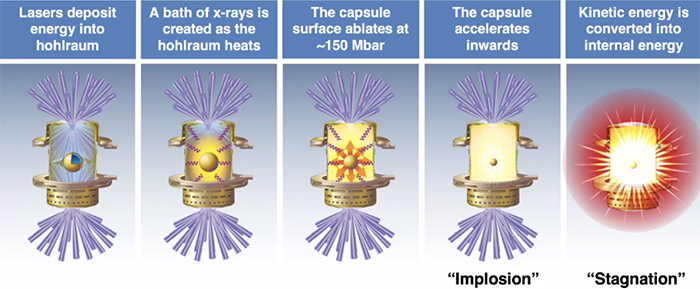

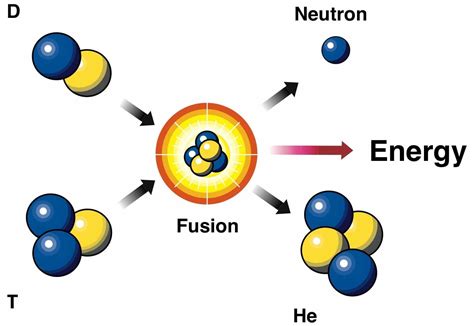

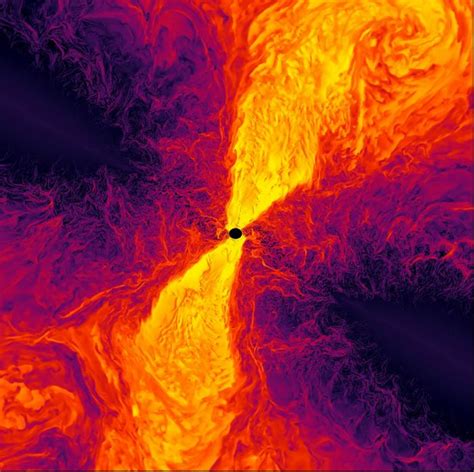

Fusion happens where the proper materials (isotopes of hydrogen) are put into very dense and very hot conditions. Fusion happens in very exciting stars with more than hydrogen, BTW! Getting these materials into dense hot conditions is quite challenging. Using shock waves to do this does not work well. The amount of density and energy growth with shocks is quite inefficient. One of the explanations is the growth of entropy with shocks. A shock wave always raises the entropy of the material. This makes the additional shocks more difficult to improve the conditions for fusion. The trick is to compress the material adiabatically with no increase in entropy. These conditions are quite difficult to engineer. Compressible fluid dynamics shocks readily. They require careful tuning and the creation of very high Mach number flows that are carefully balanced to avoid shocks.

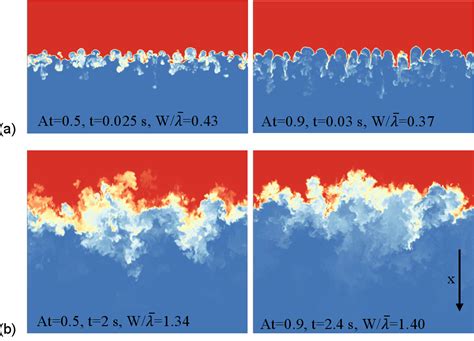

The original methods derived by von Neumann (and Richtmyer) are great for computing these kinds of flows. Conservation form methods solving total energy generally suck at it. Thus at the Labs the original non-conservative methods are favored. At Livermore, this favor is almost pathological (definitely cultural). For the optimistic view of technology, the original methods are great. All is well in the Lagrangian frame. The issue is that mixing and turbulence demand leaving the Lagrangian frame. To deal with the pessimistic side of technology and shock waves the original methods are problematic. Both turbulence and shocks are ubiquitous.

Something needs to give. Why can’t we have both? Its been 70 fucking years for Christs sake.

“The mind, once stretched by a new idea, never returns to its original dimensions.” ― Ralph Waldo Emerson

Are the Priorities Incompatible?

Even today the mantra demands one to make a choice. You either use a method that computes adiabatic evolution properly, but fucks up shocks, or one that computes shocks right and fucks up adiabats. At Livermore where fusion is king, the first choice wins all the time. Livermore has a big wake and this choice dominates computation at the Labs. Thus for the other labs too, shocks are hosed. Any serious code uses remap, and most problems eventually become Eulerian even if they start Lagrangian. Thus they start to lose conservation of energy. With this loss of energy makes incorrect shock wave evolution inevitable. Testing confirms that this happens as a matter of course.

The fix for this is simple, but not currently taken. This is go to conservation form. Instead there is a fix to return the evolutionequations to conservation was invented by DeBar in the 1970’s. Basically the kinetic energy deficit (or surplus) is added back to get conservation back. One of the issues is that this is not in conservation form. Thus, Lax-Wendroff does not apply as a theoretical backstop. The DeBar approach has been shown itself to be effective in getting correct solutions. It is also incredibly fragile. It generally does not function reliably on complex problems. It falls prey to the basic subtraction issue mentioned above.

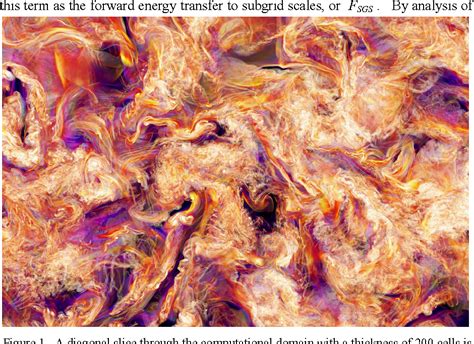

The main community outside the Labs facing similar problems are the astrophysical scientists. In the 1980’s they used codes based on technology from Livermore (written by a group headed by Mike Norman). This code has been supplanted by conservative methods based on the piecewise parabolic method (PPM). Thus today the astrophysical calculations are done using modern conservative methods. Most commonly PPM is the basis. The author of PPM is Paul Woodward who worked at Livermore in the 1970’s and 1980’s. Paul also worked with Bram Van Leer on sabbatical influencing his approach. Astrophysicists are also interested in adiabatic compression and fusion conditions. The difference is degree. Fusion like that in ICF is far more extreme than astrophysical flows.

Those familiar with my writing might recognize that I really favor PPM as a method and basic framework. I also believe that conservation is essential. It should not be disregarded as the Labs do. Correct shock wave propagation is too important to throw away.

“I am sufficiently proud of my knowing something to be modest about my not knowing all.” ― Vladimir Nabokov

Having Your Cake and…

This gets to the big challenge I am thinking about. Can we have methods that compute adiabats properly and have conservation? I firmly believe the answer is yes, and it is bemusing that we haven’t done this yet. The issue is that the adiabatic evolution is incredibly demanding. I would counter that the errors and faults with shock solutions are obvious too, and far larger. In dealing with the technologies the Labs are responsible for, both are essential. I see three possibilities that are most likely to succeed at both. None of these are likely to get the effort they deserve today as all eyes are on AI.

1. A robust DeBar (kinetic energy fix).

The simplest approach might be to make the kinetic energy fix in remap work. The issues are the fragility of the approach. The way forward seems to be to enforce conservation over time and carry a “bank” of the difference along until it can safely be used. That said, the method still won’t match the Lax-Wendroff theorem. There is no reason to believe that results are safe for complex shocked flows. I think the lessons of this fix is that kinetic energy is the core of the problem to be solved. A big part of this is entropy conditions and consistency.

2. A really careful and well constructed PPM scheme.

The PPM method is a cell-centered version of a method Van Leer created (scheme 5 from his 1977 paper). It is a practical version of the active flux method developed recently. I do think the active flux method could be another path forward. One of the things about adiabatic flows are their smoothness and analytical structure. The sense is that the method could produce the analytical behavior to the level of truncation error. It is possible that using even higher order methods could reduce the errors to the point of being neglgible. Work in the literature shows that very careful mathematics and integrations can produce better results. PPM is an amazing conservative method. It also computes a host of complex flows very well. The sort of adiabatic compression needed for successful fusion is a bigger challenge. Perhaps some ideas from the kinetic energy fix could apply here as well.

3. Building on the conservative Lagrangian codes from France

The next idea is using the advances in Lagrangian methods that are conservative by construction. Do these methods work sufficiently well a priori for these adiabatic flows? It seems so, The issues with remap do not change, the Lagrangian frame must be discarded with mix. The basic mathematics of the subtraction of large numbers is retained. In my experience one needs to be careful and intentional to get robust answers. This applies to the spatial reconstruction step and the Riemann solution. Nonetheless, the adiabatic compression (expansion) challenge is huge. The use of the generalized Riemann problem is also potentially essential.

There may be other ideas to consider to break the impasse. These seem the best bet. In many cases each idea can produce ideas that need to be blended together. It is pretty clear that the detailed treatment of kinetic energy is the key. There is an additional challenge I’ve identified that feels very related.

“Life is about accepting the challenges along the way, choosing to keep moving forward, and savoring the journey.” ― Roy T. Bennett

Other Issues to Consider

I’ve written about issues with very strong rarefaction waves before. This topic is probably adjacent to the issues with adiabatic evolutions. I have noted that classical methods of all sorts fail for very strong rarefaction problems. These are problems that start of approach the expansion of material into vacuum. A rarefaction is an adiabatic structure. Thus the failure of conservative or remap methods on this class of problem may be related.

I have noted that it seems that methods based on the Generalized Riemann Problem (GRP) seems to do better. This is based on work from China and also alluded to by Maire for Kidder’s problem. The GRP approach is the epitome of being super careful in the construction of a method. It seems reasonable that combining some or all these ideas could provide the solutions. There is a possible solution that would solve the full spectrum of key problems in a unified manner.

This would allow us to have our cake and eat it too.

I can suggest that a successful method would have certain characteristics. I believe strict conservation form is essential. The method should be high-order and maintain strict control of dissipation of all forms. The evolution equations should consider a GRP method as well. The question is how to square the canonical problems with high Mach number adiabatic flows together. I might suggest that a separate internal energy equation be evolved to allow better solutions. In adiabatic evolution this equation should be used for better behavior. We may also need a separate kinetic energy equation as well. The key would be how to evolve any gain or loss then synchronize with the conserved variables. One would want to do this in conjunction with entropy satisfaction. The second law is an important inequality to adhere to. The discrepency should be allowed to disappear once the flow becomes dissipative via mixing or shocks.

The questions is whether anyone gives a fuck? All the attention is on AI. The reality is that AI won’t solve this problem, but could help if used properly.

“If you want something new, you have to stop doing something old” ― Peter Drucker

References

Caramana, E. J., D. E. Burton, Mikhail J. Shashkov, and P. P. Whalen. “The construction of compatible hydrodynamics algorithms utilizing conservation of total energy.” Journal of Computational Physics 146, no. 1 (1998): 227-262.

Maire, Pierre-Henri, Rémi Abgrall, Jérôme Breil, and Jean Ovadia. “A cell-centered Lagrangian scheme for two-dimensional compressible flow problems.” SIAM Journal on Scientific Computing 29, no. 4 (2007): 1781-1824.

Maire, Pierre-Henri. Contribution to the numerical modeling of inertial confinement fusion. No. CEA-R–6260. Bordeaux-1 Univ., 33 (France), 2011.

Qian, Jianzhen, Jiequan Li, and Shuanghu Wang. “The generalized Riemann problems for compressible fluid flows: Towards high order.” Journal of Computational Physics 259 (2014): 358-389.

Loubère, Raphaël, Pierre‐Henri Maire, and Pavel Váchal. “3D staggered Lagrangian hydrodynamics scheme with cell‐centered Riemann solver‐based artificial viscosity.” International Journal for Numerical Methods in Fluids 72, no. 1 (2013): 22-42.

Colella, Phillip, and Paul R. Woodward. “The piecewise parabolic method (PPM) for gas-dynamical simulations.” Journal of computational physics 54, no. 1 (1984): 174-201.

Woodward, Paul, and Phillip Colella. “The numerical simulation of two-dimensional fluid flow with strong shocks.” Journal of computational physics 54, no. 1 (1984): 115-173.

Rider, William J., Jeffrey A. Greenough, and James R. Kamm. “Accurate monotonicity-and extrema-preserving methods through adaptive nonlinear hybridizations.” Journal of Computational Physics 225, no. 2 (2007): 1827-1848.

Blondin, John M., and Eric A. Lufkin. “The piecewise-parabolic method in curvilinear coordinates.” Astrophysical Journal Supplement Series (ISSN, 0067-0049), vol. 88, no. 2, Oct. 1993, p. 589-594. 88 (1993): 589-594.

DeBar, Roger B. Fundamentals of the KRAKEN code.[Eulerian hydrodynamics code for compressible nonviscous flow of several fluids in two-dimensional (axially symmetric) region]. No. UCID-17366. California Univ., Livermore (USA). Lawrence Livermore Lab., 1974.

Burton, Donald E., Nathaniel R. Morgan, Marc Robert Joseph Charest, Mark A. Kenamond, and Jimmy Fung. “Compatible, energy conserving, bounds preserving remap of hydrodynamic fields for an extended ALE scheme.” Journal of Computational Physics 355 (2018): 492-533.

Hawley, John F., Larry L. Smarr, and James R. Wilson. “A numerical study of nonspherical black hole accretion. I Equations and test problems.” Astrophysical Journal, Part 1 (ISSN 0004-637X), vol. 277, Feb. 1, 1984, p. 296-311. Research supported by the US Department of Energy. 277 (1984): 296-311.

Stone, James M., and Michael L. Norman. “ZEUS-2D: a radiation magnetohydrodynamics code for astrophysical flows in two space dimensions. I-The hydrodynamic algorithms and tests.” Astrophysical Journal Supplement Series (ISSN 0067-0049), vol. 80, no. 2, June 1992, p. 753-790. Research supported by University of Illinois. 80 (1992): 753-790.

Stone, James M., Thomas A. Gardiner, Peter Teuben, John F. Hawley, and Jacob B. Simon. “Athena: a new code for astrophysical MHD.” The Astrophysical Journal Supplement Series 178, no. 1 (2008): 137-177.