The news is full of stories and outrage at Hillary Clinton’s e-mail scandal. I don’t feel that anyone has remotely the right perspective on how this happened, and why it makes perfect sense in the current system. It epitomizes a system that is prone to complete breakdown because of the deep neglect of information systems, both unclassified and classified, within the federal system. We just don’t pay IT professionals enough to get good service. The issue also gets to the heart of the overall treatment of classified information by the United States, which is completely out of control. The tendency to classify things is running amok far beyond anything that is in the actual best interests of society. To compound things further, it highlights the utter and complete disparity in how laws and rules do not apply to the rich and powerful. All of this explains what happened, and why; yet it doesn’t make what she did right or justified. Instead it points out why this sort of thing is both inevitable and much more widespread (e.g., John Deutch, Condoleezza Rice, Colin Powell, and what is surely a much longer list of violations of the same thing Clinton did).

Management cares about only one thing. Paperwork. They will forgive almost anything else – cost overruns, gross incompetence, criminal indictments – as long as the paperwork’s filled out properly. And in on time.

― Connie Willis

Last week I had to take some new training at work. It was utter torture. The DoE managed to find the worst person possible to train me, then managed to drain him of all personality and treat him with sedatives. I already take a massive amount of training, most of which is utterly and completely useless. The training is largely compliance based, and generically a waste of time. Still, even by these already appalling standards, the new training was horrible. It is the Hillary-induced e-mail classification training, through which I now have the authority to mark my own classified e-mails as an “E-mail derivative classifier”. We are constantly taking reactive action via training that only undermines the viability and productivity of my workplace. Like most of my training, this current training is completely useless, and only serves the “cover your ass” purpose that most training serves. Taken as a whole our environment is corrosive and undermines any and all motivation to give a single fuck about work.

Let’s get to the point: why was Hillary compelled to use a private e-mail system in the first place? Why did classified information appear there? Why do people in positions of power feel they don’t have to follow the rules?

Most people watching the news have little or no idea about the classified computing or e-mail systems. So let’s explain a few things about the classified systems people work on that will get to the point of why all of this is so fucking stupid. For starters, the classified computing systems are absolutely awful to use. Anyone trying to get real work done on these systems is confronted with the utter horror they are to use. No one interested in productively doing work would tolerate them. In many government places the unclassified computing systems are only marginally better. The biggest reasons are a lack of appropriately skilled IT professionals and a lack of investment in infrastructure. Fundamentally we don’t pay the IT professionals enough to get first-rate service, and anyone who is good enough to get a better private sector job does. Moreover, these professionals work on old hardware with software restrictions that serve outlandish and obscene security regulations, which in many cases are actually counter-productive. So, if Hillary were interested in getting anything done she would be quite compelled to leave the federal network for greener, more productive pastures.

The more you leave out, the more you highlight what you leave in.

Where one might think that the government would give classified work the highest priority, the environment for working there is the worst. Keep in mind that it is worse than the already shitty and atrocious unclassified environment. The seeming purpose of everything is not my or anyone’s actual productivity, but rather the protection of information, or at least the appearance of protection. Our approach to everything is administrative compliance with directives. Actual performance on anything is completely secondary to the appearance of performance. The result of this pathetic approach to providing the taxpayer with benefit for money expended is a dysfunctional system that provides little in return. It is primed for mistakes and outright systematic failures. Nothing stresses the system more than a high-ranking person hell-bent on doing their job. The sort of people who ascend to high positions like Hillary Clinton find the sort of compliance demanded by the system awful (because it is), and have the power to ignore it.

Of course I’ve seen this abuse of power live and in the flesh. Take the former Los Alamos Lab Director, Admiral Pete Nanos, who famously shut the Lab down and denounced the staff as “Butthead Cowboys!” He blurted out classified information in an unclassified meeting in front of hundreds if not thousands of people. If he had taken his training and been compliant, he would have known better. Instead of being issued a security infraction like any of the butthead cowboys in attendance would have gotten, he got a pass. The powers that be simply declassified the material and let him slide by. Why? Power comes with privileges. When you’re in a position of power you find the rules are different. This is a maxim repeated over and over in our World. Some of this looks like white privilege, or rich white privilege, where you can get away with smoking pot, or raping unconscious girls, with no penalty or lightened penalties. If you’re not white or not rich you pay a much stiffer penalty, including prison time.

I learned the lesson again at Los Alamos in another episode that will remain slightly vague in this post. I went to a meeting that honored a Lab scientist’s career. During the course of the meeting another Lab director read an account of this person’s work, noting their monumental accomplishments and contributions to national security. All of the account was good, true and correct, except that its content was classified. I took the written text to the classification office at the Lab and noted its issues. They agreed that it was indeed classified. Because both the person who wrote the account (a very high-ranking DoE person) and the person who read it were so high ranking, they would not touch this with the proverbial ten-foot pole. They knew a violation had occurred, but their experience also told them that it was foolish to pursue it. Pursuing it would only hurt those who pointed out the problem; those committing the violations were immune.

Let me ask you, dear reader, how do you think someone would treat the Secretary of State of the United States? How much more untouchable would they be? It is certainly wrong in a perfect world, but we live in a very imperfect world.

A secret’s worth depends on the people from whom it must be kept.

― Carlos Ruiz Zafón

The core philosophy in all of this is that we have lots of secrets to protect because we are the biggest and baddest country on Earth. It was certainly true at one time, but every day I grow less sure that we still are, and more assured that we are not. So we have created a system that is predicated on our lead in science and technology, but completely and utterly undermines our ability to keep that lead. We have a system that is completely devoted to undermining our productivity at every turn in the service of protecting information that loses its real value every day. To put it differently, our current approach and policy is utter and complete fucking madness!

I also want to be clear that classification of a lot of material is absolutely necessary. It is essential to the safety and security of the Nation and the World. The cavalier and abusive way that classification is applied today runs utterly counter to this. By classifying everything in sight, we reduce the value and importance of the things that must be classified. By using classification of documents to cover everything with a blanket, the real need and purpose of classification is obscured and harmed deeply. All of this said, I have not discussed the most widely abused version of classification, “Official Use Only,” which is applied in an almost entirely unregulated manner. It is abused widely and casually. Among the areas regulated by this awful policy is Export Controlled Information, which is easily one of the worst laws I’ve ever come in contact with. It is, simply put, stupid and incompetent. It probably does much more harm than good to the national security of the nation.

Power does not corrupt. Fear corrupts… perhaps the fear of a loss of power.

― John Steinbeck

Republican presidential candidate, businessman Donald Trump stands during the Fox Business Network Republican presidential debate at the North Charleston Coliseum, Thursday, Jan. 14, 2016, in North Charleston, S.C. (AP Photo/Chuck Burton)

Let’s be clear about the Country and World we live in. The rich and powerful are corrupt. The rich and powerful are governed by entirely different rules than everyone else. Mistakes, violations of the law, and morality itself are fundamentally different for the rich and powerful than for the common man. So to be clear, Hillary Clinton committed abuses of power. Donald Trump has committed abuses of power too. Barack Obama has as well. Either Hillary or Trump will continue to do so if elected President. Until the basic attitudes toward power and money change we should expect this to continue. The same set of abuses of power happens across the spectrum of society in every organization and business. The larger the organization or business, the worse the abuse of power can be expected to be. As long as it is tolerated it can be expected to continue.

A man who has never gone to school may steal a freight car; but if he has a university education, he may steal the whole railroad.

― Theodore Roosevelt

Our societal approach to classification of documents is simply a tool of this sort of rampant abuse of power. We see any sense of a viable “whistleblower” protection to be complete and utter bullshit. People who have highlighted huge systematic abuses of power involving murder and vast violation of constitutional law are thrown to the proverbial wolves. There is no protection; it is viewed as treason and these people are treated as harshly as possible (Snowden, Assange, and Manning come to mind). As I’ve noted above, people in positions of authority can violate the law with utter impunity. At the same time classification is completely out of control. More and more is being classified with less and less control. Such classification often only serves to hide information and serve the needs of the status quo power structure.

In the end, Hillary had really good reasons to do what she did, and to believe that she had the right to do so. Everything in the system provided her with evidence that the rules for everyone else do not apply to her. Hillary wasn’t correct, but we have created an incompetent, unproductive computing system that virtually compelled her to choose the path she took. We have created a culture where the most powerful people do not have to follow the rules that the regular guy does. The system has been structured by fear and lack of trust without any regard for productivity. If we want to remain the most powerful country, we need to change our priorities on productivity, secrecy and the corruption of power.

The whole issue of runaway classification, classified e-mails and our inability to produce a productive work environment in National Security is at the nexus of incompetence, lack of trust, and corruption, resulting in a systematic devotion to societal mediocrity.

Arguing that you don’t care about the right to privacy because you have nothing to hide is no different than saying you don’t care about free speech because you have nothing to say.

– Edward Snowden

I’ll just say up front that my contention is that there is precious little excellence to be found today in many fields. Modeling & simulation is no different. I will also contend that excellence is relatively easy to obtain, or at the very least a key change in mindset will move us in that direction. This change in mindset is relatively small, but essential. It deals with the general setting of satisfaction with the current state and whether restlessness exists that ends up allowing progress to be sought. Too often there seems to be an innate satisfaction with too much of the “ecosystem” for modeling & simulation, and not enough agitation for progress. We should continually seek the opportunity and need for progress in the full spectrum of work. Our obsession with planning, and micromanagement of research ends up choking the success from everything it touches by short-circuiting the entire natural process of progress, discovery and serendipity.

In my view the desire for continual progress is the essence of excellence. When I see the broad field of modeling & simulation the need for progress seems pervasive and deep. When I hear our leaders talk, such needs are muted and progress seems to depend only on a few simple areas of focus. Such a focus is always warranted if there is an opportunity to be taken advantage of. Instead we seem to be in an age where the technological opportunity being sought, computer hardware, is arrayed against progress. In the process of trying to force progress where it is less available, the true engines of progress are being shut down. This represents mismanagement of epic proportions and needs to be met with calls for sanity and intelligence in our future.

The way toward excellence, innovation and improvement is to figure out how to break what you have. Always push your code to its breaking point; always know what reasonable (or even unreasonable) problems you can’t successfully solve. Lack of success can be defined in multiple ways including complete failure of a code, lack of convergence, lack of quality, or lack of accuracy. Generally people test their code where it works, and if they are good code developers they continue to test the code all the time to make sure it still works. If you want to get better you push at the places where the code doesn’t work, or doesn’t work well. You make the problems where it didn’t work part of the ones that do work. This is the simple and straightforward way to progress, and it is stunning how few efforts follow this simple and obvious path. It is the golden path that we deny ourselves today.
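To make the idea of pushing a code to its breaking point concrete, here is a minimal sketch in Python. It is an illustration under my own assumptions, not something drawn from any production code: the trapezoid rule stands in for "the code", the two integrands stand in for a test suite, and the names `observed_order`, `PROBLEMS` and `find_breaking_points` are hypothetical. The point is to measure the convergence rate on every problem and flag the ones where the method falls short of its design order, instead of only testing where it works.

```python
import math


def trapezoid(f, a, b, n):
    """Composite trapezoid rule on [a, b] with n equal intervals."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def observed_order(f, exact, a=0.0, b=1.0, n=64):
    """Estimate the convergence rate from the errors at n and 2n intervals."""
    err_coarse = abs(trapezoid(f, a, b, n) - exact)
    err_fine = abs(trapezoid(f, a, b, 2 * n) - exact)
    return math.log(err_coarse / err_fine, 2)


# Hypothetical test suite: name -> (integrand, exact integral over [0, 1]).
PROBLEMS = {
    "smooth_exp": (math.exp, math.e - 1.0),
    # sqrt has an unbounded derivative at x = 0, which stresses the method.
    "sqrt_endpoint": (math.sqrt, 2.0 / 3.0),
}


def find_breaking_points(problems, design_order=2.0, tolerance=0.2):
    """Return the problems whose observed order falls short of the design order."""
    failures = {}
    for name, (f, exact) in problems.items():
        order = observed_order(f, exact)
        if order < design_order - tolerance:
            failures[name] = order
    return failures


if __name__ == "__main__":
    for name, order in find_breaking_points(PROBLEMS).items():
        print(f"{name}: observed order {order:.2f}, below design order")
```

In this sketch the smooth problem should pass at close to second order, while the square-root problem should be flagged with an observed order well below two. Once a method is improved enough to handle a flagged problem, that problem moves into the set that is expected to work, and the search for the next breaking point begins.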

n the success of modeling & simulation to date. We are too satisfied that the state of the art is fine and good enough. We lack a general sense that improvements and progress are always possible. Instead of a continual striving to improve, the approach of focused and planned breakthroughs has beset the field. We have a distinct management approach that provides distinctly oriented improvements while ignoring important swaths of the technical basis for modeling & simulation excellence. The result of this ignorance is an increasingly stagnant status quo that embraces “good enough” implicitly through a lack of support for “better”.

Modeling & simulation arose to utility in support of real things. It owes much of its prominence to the support of national defense during the Cold War. Everything from fighter planes to nuclear weapons to bullets and bombs utilized modeling & simulation to strive toward the best possible weapon. Similarly, modeling & simulation moved into the world of manufacturing, aiding in the design and analysis of cars, planes and consumer products across the spectrum of the economy. The problem is that we have lost sight of the necessity of these real world products as the engine of improvement in modeling & simulation. Instead we have allowed computer hardware to become an end unto itself rather than simply a tool. Even in computing, hardware has little centrality to the field. In computing today, the “app” is king and holds the keys to the market; hardware is simply a necessary detail.

To address the proverbial “elephant in the room”, the national exascale program is neither a good goal, nor bold in any way. It is the actual antithesis of what we need for excellence. The entire program will only power the continued decline in achievement in the field. It is a big project that is being managed the same way bridges are built. Nothing of any excellence will come of it. It is not inspirational or aspirational either. It is stale. It is following the same path that we have been on for the past 20 years: improvement in modeling & simulation by hardware. There are tremendous places where we might harness modeling & simulation to help produce and even enable great outcomes. None of these greater societal goods is in the frame with exascale. It is a program lacking a soul.

The title is a bit misleading so that it could be concise. A more precise one would be “Progress is mostly incremental; then progress can be (often serendipitously) massive”. Without accepting incremental progress as the usual, typical outcome, the massive leap forward is impossible. If incremental progress is not sought as the natural outcome of working with excellence, progress dies completely. The gist of my argument is that attitude and orientation are the key to making things better. Innovation and improvement are the result of having the right attitude and orientation rather than having a plan for it. You cannot schedule breakthroughs, but you can create an environment and work with an attitude that makes them possible, if not likely. The maddening thing about breakthroughs is their seemingly random nature: you cannot plan for them, they just happen, and most of the time they don’t.

Modern management seems to think that innovation is something to be managed for and everything can be planned. Like most things where you just try too damn hard, this management approach has exactly the opposite effect. We are unintentionally but actively destroying the environment that allows progress, innovation and breakthroughs to happen. The fastidious planning does the same thing. It is a different thing than having a broad goal and charter that pushes toward a better tomorrow. Today we are expected to plan our research like we are building a goddamn bridge! It is not even remotely the same! The result is the opposite and we are getting less for every research dollar than ever before.

A large part of the problem with our environment is an obsession with measuring performance by the achievement of goals or milestones. Instead of working to create a super productive and empowering workplace where people work exceptionally by intrinsic motivation, we simply set “lofty” goals and measure their achievement. The issue is the mindset implicit in the goal setting and measuring: a lack of trust in those doing the work. Instead of creating an environment and work processes that enable the best performance, we define everything in terms of milestones. These milestones and the attitudes that surround them sow the seeds of destruction, not because goals are wrong or bad, but because the behavior driven by achieving management goals is so corrosively destructive.

So the difference is really simple and clear. You must be expanding the state of the art, or defining what it means to be world class. Simply being at the state of the art or world class is not enough. Progress depends on being committed and working actively at improving upon and defining state of the art and world-class work. Little improvements can lead to the massive breakthroughs everyone aspires toward, and really are the only way to get them. Generally all these things are serendipitous and depend entirely on a culture that creates positive change and prizes excellence. One never really knows where the tipping point is and getting to the breakthrough depends mostly on the faith that it is out there waiting to be discovered.

Computing the solution to flows containing shock waves used to be exceedingly difficult, and for a lot of reasons it is now modestly difficult. Solutions for many problems may now be considered routine, but numerous pathologies exist, and the limit of what is possible still means research progress is vital. Unfortunately there seems to be little interest in making such progress from those funding research; it goes in the pile of solved problems. Worse yet, there are numerous preconceptions about results, and standard practices about how results are presented, that tend to inhibit progress. Here, I will outline places where progress is needed and how people discuss research results in a way that furthers the inhibitions.

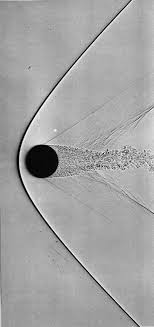

To do the best job means making some hard choices that often fly in the face of ideal circumstances. By making these hard choices you can produce far better methods for practical use. It often means sacrificing things that might be nice in an ideal linear world for the brutal reality of a nonlinear world. I would rather have something powerful and functional in reality than something of purely theoretical interest. The published literature seems to be opposed to this point of view, with a focus on many issues of little practical importance.

upon this example. Worse yet, the difficulty of extending Lax’s work is monumental. Moving into high dimensions invariably leads to instability and flow that begins to become turbulent, and turbulence is poorly understood. Unfortunately we are a long way from recreating Lax’s legacy in other fields (see e.g.,

where it does not. Progress in turbulence is stagnant and clearly lacks key conceptual advances necessary to chart a more productive path. It is vital to do far more than simply turn codes loose on turbulent problems and let great solutions come out, because they won’t. Nonetheless, it is the path we are on. When you add shocks and compressibility to the mix, everything gets so much worse. Even the most benign turbulence is poorly understood, much less anything complicated. It is high time to inject some new ideas into the study rather than continue to hammer away at the failed old ones. In closing this vignette, I’ll offer up a different idea: perhaps the essence of turbulence is compressible and associated with shocks rather than being largely divorced from these physics. Instead of building on the basis of the decisively unphysical aspects of incompressibility, turbulence might be better built upon a physical foundation of compressible (thermodynamic) flows with dissipative discontinuities (shocks) that fundamental observations call for and current theories cannot explain.

Let’s get to one of the biggest issues that confounds the computation of shocked flows: accuracy, convergence and order of accuracy. For computing shock waves, the order of accuracy is limited to first-order for everything emanating from any discontinuity (Majda & Osher 1977). Furthermore, nonlinear systems of equations will invariably and inevitably create discontinuities spontaneously (Lax 1973). In spite of these realities the accuracy of solutions with shocks still matters, yet no one ever measures it. The reasons why it matters are far more subtle and refined, and the advantage of accuracy is less sweeping. When a flow is smooth enough to allow high-order convergence, the accuracy of the solution with high-order methods is unambiguously superior. With smooth solutions the highest order method is the most efficient if you are solving for equivalent accuracy. When convergence is limited to first-order, the high-order methods effectively only lower the constant in front of the error term, which is a far less efficient route to a given accuracy. One then has the situation where the gains with high-order must be balanced with the cost of achieving high-order. In very many cases this balance is not achieved.
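One hedged way to put numbers on this balance is a simple error and cost model. The symbols are my own shorthand, not taken from the cited papers: $E$ is the error, $h$ the mesh spacing, $d$ the number of space dimensions, $C_{\mathrm{lo}}$ and $C_{\mathrm{hi}}$ the error constants of a low-order and a high-order method, and $\kappa$ the cost per cell per time step of the high-order method relative to the low-order one. I assume explicit time stepping with the time step proportional to $h$, and first-order convergence for both methods because a shock is present.

$$
E_{\mathrm{lo}} \approx C_{\mathrm{lo}}\,h, \qquad
E_{\mathrm{hi}} \approx C_{\mathrm{hi}}\,h, \qquad
C_{\mathrm{hi}} < C_{\mathrm{lo}}, \qquad
W \propto \kappa\,h^{-(d+1)}
$$

Matching the errors lets the high-order method use a coarser mesh, $h_{\mathrm{hi}} = (C_{\mathrm{lo}}/C_{\mathrm{hi}})\,h_{\mathrm{lo}}$, so the ratio of work is

$$
\frac{W_{\mathrm{hi}}}{W_{\mathrm{lo}}}
= \kappa \left(\frac{h_{\mathrm{hi}}}{h_{\mathrm{lo}}}\right)^{-(d+1)}
= \kappa \left(\frac{C_{\mathrm{hi}}}{C_{\mathrm{lo}}}\right)^{d+1},
\qquad\text{which is below one only if}\qquad
\kappa < \left(\frac{C_{\mathrm{lo}}}{C_{\mathrm{hi}}}\right)^{d+1}.
$$

Under these assumptions, in one dimension ($d = 1$) a method that halves the error constant breaks even at $\kappa = 4$; anything more expensive per cell loses.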

Everyone knows that the order of accuracy cannot be maintained with a shock or discontinuity, and no one measures the solution accuracy or convergence. The problem is that these details still matter! You need convergent methods, and you have an interest in the magnitude of the numerical error. Moreover there are still significant differences in these results on the basis of methodological differences. To up the ante, the methodological differences carry significant changes in the cost of solution. What one finds typically is a great deal of cost to achieve formal order of accuracy that provides very little benefit with shocked flows (see Greenough & Rider 2005, Rider, Greenough & Kamm 2007). This community, in the open or behind closed doors, rarely confronts the implications of this reality. The result is a damper on all progress.
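Measuring convergence is not hard, which makes skipping it all the more frustrating. Here is a minimal sketch, not anyone's production code: first-order upwind for linear advection on a periodic unit interval, run on two meshes, with the observed order computed from the L1 errors. The function names, the CFL number, the final time and the two test profiles are all assumptions I picked for illustration. Linear advection carries a contact-like discontinuity rather than a true nonlinear shock, so this only illustrates the measurement itself.

```python
import math


def smooth(x):
    """Smooth periodic test profile (illustrative choice)."""
    return math.sin(2.0 * math.pi * x)


def step(x):
    """Square pulse with two discontinuities (illustrative choice)."""
    return 1.0 if 0.25 <= x < 0.75 else 0.0


def upwind_l1_error(n_cells, profile, cfl=0.5, t_final=0.25):
    """Advect `profile` at unit speed with first-order upwind; return the L1 error."""
    h = 1.0 / n_cells
    dt = cfl * h
    n_steps = round(t_final / dt)
    x = [(i + 0.5) * h for i in range(n_cells)]
    u = [profile(xi) for xi in x]
    for _ in range(n_steps):
        # u[i - 1] at i = 0 wraps to the last cell, which gives periodicity.
        u = [u[i] - cfl * (u[i] - u[i - 1]) for i in range(n_cells)]
    t = n_steps * dt
    exact = [profile((xi - t) % 1.0) for xi in x]
    return h * sum(abs(a - b) for a, b in zip(u, exact))


def observed_order(err_coarse, err_fine, refinement=2.0):
    """Observed convergence rate from errors on two meshes."""
    return math.log(err_coarse / err_fine) / math.log(refinement)


if __name__ == "__main__":
    for name, profile in (("smooth", smooth), ("step", step)):
        e1 = upwind_l1_error(100, profile)
        e2 = upwind_l1_error(200, profile)
        print(name, e1, e2, observed_order(e1, e2))
```

With these settings the smooth profile should converge at close to first order, while the discontinuous one should come in at roughly half that. The point is that the number is cheap to produce and says something the eyeball norm does not.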

assessment of error and we ignore its utility, perhaps only using it for plotting. What a massive waste! More importantly it masks problems that need attention.

In the end shocks are a well-trod field with a great deal of theoretical support for a host of issues of broader application. If one is solving problems in any sort of real setting, the behavior of solutions is similar. In other words you cannot expect high-order accuracy; almost every solution is converging at first-order (at best). By systematically ignoring this issue, we are hurting progress toward better, more effective solutions. What we see over and over again is utility with high-order methods, but only to a degree. Rarely does the fully rigorous achievement of high-order accuracy pay off with better accuracy per unit computational effort. On the other hand, methods which are only first-order accurate formally are complete disasters and virtually useless practically. Is the sweet spot second-order accuracy (Margolin and Rider 2002)? Or just second-order accuracy for nonlinear parts of the solution with a limited degree of high-order as applied to the linear aspects of the solution? I think so.

When one is solving problems involving a flow of some sort, conservation principles are quite attractive since these principles follow nature’s “true” laws (true to the extent we know things are conserved!). With flows involving shocks and discontinuities, the conservation brings even greater benefits as the Lax-Wendroff theorem demonstrates (

variables don’t). The beauty of primitive variables is that they trivially generalize to multiple dimensions in ways that characteristic variables do not. The other advantages are equally clear, specifically the ability to extend the physics of the problem in a natural and simple manner. This sort of extension usually causes the characteristic approach to either collapse or at least become increasingly unwieldy. A key aspect to keep in mind at all times is that one returns to the conservation variables for the final approximation and update of the equations. Keeping the conservation form for the accounting of the complete solution is essential.
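The round trip described above can be sketched for the one-dimensional Euler equations with an ideal gas. Everything specific here is an assumption for illustration: the value of `GAMMA`, the tuple layouts `(density, momentum, total energy)` and `(density, velocity, pressure)`, the minmod slope, and the helper name `right_face_state`. The pattern is what matters: reconstruct in primitive variables, then go back to conserved variables before anything is fluxed or updated.

```python
GAMMA = 1.4  # assumed ideal-gas ratio of specific heats (illustrative value)


def cons_to_prim(cons, gamma=GAMMA):
    """(density, momentum, total energy) -> (density, velocity, pressure)."""
    rho, mom, ener = cons
    vel = mom / rho
    pres = (gamma - 1.0) * (ener - 0.5 * rho * vel * vel)
    return (rho, vel, pres)


def prim_to_cons(prim, gamma=GAMMA):
    """(density, velocity, pressure) -> (density, momentum, total energy)."""
    rho, vel, pres = prim
    return (rho, rho * vel, pres / (gamma - 1.0) + 0.5 * rho * vel * vel)


def minmod(a, b):
    """Zero at extrema, otherwise the argument of smaller magnitude."""
    if a * b <= 0.0:
        return 0.0
    return a if abs(a) < abs(b) else b


def right_face_state(left, center, right, gamma=GAMMA):
    """Limited linear reconstruction done in primitive variables.

    Takes three neighboring cells in conserved form and returns the state at
    the right face of the center cell, converted back to conserved form.
    """
    w_l, w_c, w_r = (cons_to_prim(c, gamma) for c in (left, center, right))
    face = tuple(
        wc + 0.5 * minmod(wc - wl, wr - wc)
        for wl, wc, wr in zip(w_l, w_c, w_r)
    )
    return prim_to_cons(face, gamma)
```

Limiting density, velocity and pressure separately is simple to write and extends to more dimensions by adding velocity components, which is the appeal; the final conversion keeps the bookkeeping in conservation form.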

The use of the “primitive variables” came from a number of different directions. Perhaps the earliest use of the term “primitive” came from meteorology in terms of the work of Bjerknes (1921), whose primitive equations formed the basis of early work in computing weather in an effort led by Jule Charney (1955). Another field to use this concept is the solution of incompressible flows. The primitive variables are the velocities and pressure, a choice distinguished from the vorticity-streamfunction approach (Roache 1972). In two dimensions the vorticity-streamfunction solution is more efficient, but lacks a simple connection to measurable quantities. The same sort of notion separates the conserved variables from the primitive variables in compressible flow. The use of primitive variables as an effective approach computationally may have begun in the computational physics work at Livermore in the 1970s (see e.g., Debar). The connection of the primitive variables to classical analysis of compressible flows and simple physical interpretation also plays a role.

Now we can elucidate how to move between these two forms, and even use the primitive variables for the analysis of the conserved form directly. Using Huynh’s paper as a guide and repeating the main results one defines a matrix of partial derivatives of the conserved variables,


So before getting to all the things fucking everything up, let’s talk about why the job should be so incredibly fucking awesome. I get to be a scientist! I get to solve problems, and do math and work with incredible phenomena (some of which I’ve tattooed on my body). I get to invent things like new ways of solving problems. I get to learn and grow and develop new skills, hone old skills and work with a bunch of super smart people who love to share their wealth of knowledge. I get to write papers that other people read and build on, I get to read papers written by a bunch of people who are way smarter than me, and if I understand them I learn something. I get to speak at conferences (which can be in nice places to visit) and listen at them too on interesting topics, and get involved in deep debates over the boundaries of knowledge. I get to contribute to solving important problems for mankind, or my nation, or simply for the joy of solving them. I work with incredible technology that is literally at the very bleeding edge of what we know. I get to do all of this and provide a reasonably comfortable living for my loved ones.

Part of these systematic control mechanisms at play is the growth of the management culture in all these institutions. Instead of valuing the top scientists and engineers who produce discovery, innovation and progress, we now value the management class above all else. The managers manage people, money and projects that have come to define everything. This is true at the Labs as it is at universities, where the actual mission of both has been sacrificed to money and power. Neither the Labs nor Universities are producing what they were designed to create (weapons, students, knowledge). Instead they have become money-laundering operations whose primary service is the careers of managers. All one has to do is see who are the headline grabbers from any of these places; it’s the managers (who by and large show no leadership). These managers are measured in dollars and people, not any actual achievements. All of this is enabled by control, and control enables people to feel safe and in control. As long as reality doesn’t intrude we will go down this deep death spiral.


recognize is the relative simplicity of running mediocre operations without any drive for excellence. It’s great work if you can get it! If your standards are complete shit, almost anything goes, and you avoid the need for conflict almost entirely. In fact the only source of conflict becomes the need to drive away any sense of excellence. Any hint of quality or excellence has the potential to overturn this entire operation and the sweet deal of running it. So any quality ideas are attacked and driven out as surely as the immune system attacks a virus. While this might be a tad hyperbolic, it’s not too far off at all, and the actual bull’s-eye for an ever-growing swath of our modern world.

We see a large body of people in society who are completely governed by fear above all else. The fear is driving people to make horrendous and destructive decisions politically. The fear is driving the workplace into the same set of horrendous and destructive decisions. It’s not clear whether we will turn away from this mass fear before things get even worse. I worry that both work and politics will be governed by these fears until they push us over the precipice to disaster. Put differently, the shit show we see in public through politics mirrors the private shit show in our workplaces. The shit is likely to get much worse before it gets better.


Stability is essential for computation to succeed. Better stability principles can pave the way for greater computational success. We are in dire need of new, expanded concepts for stability that provide paths forward toward uncharted vistas of simulation.

nstability at Los Alamos during World War 2. This method is still the gold standard for analysis today in spite of rather profound limitations in applicability. In the early 1950s Lax came up with the equivalence theorem (interestingly both Von Neumann and Lax worked with Robert Richtmyer,

methodology using Mathematica,

Hyperbolic PDEs have always been at the leading edge of computation because they are important to applications and difficult, and this has attracted a lot of real unambiguous genius to solve them. I’ve mentioned a cadre of genius who blazed the trails 60 to 70 years ago (Von Neumann, Lax, Richtmyer

The desired outcome is to use the high-order solution as much as possible, but without inducing the dangerous oscillations. The key is to build upon the foundation of the very stable, but low-accuracy, dissipative method. The theory that can be utilized makes the dissipative structure of the solution a nonlinear relationship. This produces a test of the local structure of the solution, which tells us when it is safe to be high-order, and when the solution is so discontinuous that the low-order solution must be used. The result is a solution that is high-order as much as possible, and inherits the stability of the low-order solution, gaining purchase on its essential properties (asymptotic dissipation and entropy principles). These methods are so stable and powerful that one might utilize a completely unstable method as one of the options with very little negative consequence. This class of methods revolutionized computational fluid dynamics, and allowed relative confidence in the use of methods to solve practical problems.
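A small sketch makes the idea concrete. This is one textbook instance of the class, not the specific method of any paper mentioned here: linear advection at unit speed on a periodic mesh, with first-order upwind as the dissipative base, Lax-Wendroff as the high-order option, and a minmod slope as the nonlinear test of local structure. The function names and the `limited` switch are assumptions for illustration.

```python
def minmod(a, b):
    """Zero where neighboring differences disagree in sign (an extremum or
    oscillation), otherwise the difference of smaller magnitude."""
    if a * b <= 0.0:
        return 0.0
    return a if abs(a) < abs(b) else b


def advect(u, cfl, n_steps, limited=True):
    """Advance u_t + u_x = 0 on a periodic mesh.

    limited=True  : minmod-limited second-order flux (falls back to upwind
                    wherever the local data are not monotone).
    limited=False : unlimited slope, which reproduces Lax-Wendroff.
    """
    n = len(u)
    u = list(u)
    for _ in range(n_steps):
        flux = []
        for i in range(n):
            fwd = u[(i + 1) % n] - u[i]
            bwd = u[i] - u[i - 1]  # index -1 wraps around (periodic)
            slope = minmod(bwd, fwd) if limited else fwd
            # Upwind value plus a high-order correction scaled by the slope.
            flux.append(u[i] + 0.5 * (1.0 - cfl) * slope)
        u = [u[i] - cfl * (flux[i] - flux[i - 1]) for i in range(n)]
    return u


if __name__ == "__main__":
    pulse = [1.0 if 25 <= i < 75 else 0.0 for i in range(100)]
    safe = advect(pulse, cfl=0.5, n_steps=40, limited=True)
    wild = advect(pulse, cfl=0.5, n_steps=40, limited=False)
    print("limited   min/max:", min(safe), max(safe))
    print("unlimited min/max:", min(wild), max(wild))
```

The limited run stays inside the bounds of the initial data while the unlimited one rings at the jumps, and both conserve the total because the update is still written as a difference of fluxes.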

need is far less obvious than for hyperbolic PDEs. The truth is that we have made rather stunning progress in both areas and the breakthroughs have put forth the illusion that the methods today are good enough. We need to recognize that this is awesome for those who developed the status quo, but a very bad thing if there are other breakthroughs ripe for the taking. In my view we are at such a point and missing the opportunity to make the “good enough,” “great” or even “awesome”. Nonlinear stability is deeply associated with adaptivity and ultimately more optimal and appropriate approximations for the problem at hand.

Further extensions of nonlinear stability would be useful for parabolic PDEs. Generally parabolic equations are fantastically forgiving, so doing anything more complicated is not prized unless it produces better accuracy at the same time. Accuracy is eminently achievable because parabolic equations generate smooth solutions. Nonetheless these accurate solutions can still produce unphysical effects that violate other principles. Positivity is rarely threatened, although this would be a reasonable property to demand. It is more likely that the solutions will violate some sort of entropy inequality in a mild manner. Instead of producing something demonstrably unphysical, the solution would simply not have enough entropy generated to be physical. As such we can see solutions approaching the right solution, but in a sense from the wrong direction, which threatens to produce physically inadmissible solutions. One potential way to think about this might be in an application to heat conduction. One can examine whether or not the flow of heat matches the proper direction of heat flow locally, and if a high-order approximation does not, either choose another high-order approximation that does, or limit to a lower-order method with unambiguous satisfaction of the proper direction. The intrinsically nonfatal impact of these flaws means they are not really addressed.
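The heat-conduction check can be sketched in a few lines. This is a hypothetical illustration of the idea, not an established scheme: I take "the proper direction locally" to mean the sign given by the two cells adjacent to the face (heat flows from the hotter to the colder), use a standard four-point fourth-order gradient as the high-order candidate, and fall back to the two-point flux when the signs disagree. The function name and argument layout are assumptions.

```python
def face_heat_flux(t_ll, t_l, t_r, t_rr, h, k=1.0):
    """Heat flux q = -k dT/dx at the face between cells `l` and `r`.

    t_ll, t_l, t_r, t_rr are temperatures at four consecutive cell centers
    with uniform spacing h; k is the conductivity.
    """
    # Two-point (second-order) gradient: its sign is unambiguous.
    grad_low = (t_r - t_l) / h
    # Four-point (fourth-order) gradient at the face midpoint.
    grad_high = (t_ll - 27.0 * t_l + 27.0 * t_r - t_rr) / (24.0 * h)

    q_low = -k * grad_low
    q_high = -k * grad_high

    # Accept the high-order flux only when it sends heat the same way as the
    # local two-point flux; otherwise limit to the low-order value.
    if q_high * q_low <= 0.0:
        return q_low
    return q_high


if __name__ == "__main__":
    # Smooth linear profile: both gradients agree, high-order flux is kept.
    print(face_heat_flux(0.0, 1.0, 2.0, 3.0, h=1.0))
    # Steep far neighbor flips the sign of the four-point gradient.
    print(face_heat_flux(0.0, 0.0, 0.01, 1.0, h=1.0))
```

The second call is the interesting case: the wide stencil would push heat from the colder cell to the hotter one, and the check quietly replaces it with the two-point flux.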

As surely as the sun rises in the East, peer review, that treasured and vital process for the health of science, is dying or dead. In many cases we still try to conduct meaningful peer review, but increasingly it is simply an animated zombie form of peer review. The zombie peer review of today is a mere shadow of the living soul of science it once was. Its death is merely a manifestation of bigger, broader societal trends such as those unraveling the political processes, or transforming our economies. We have allowed the quality of the work being done to become an assumption that we do not actively interrogate through a critical process (e.g., peer review). Instead, if we examine how money is spent in science and engineering, everything but the quality of the technical work is focused on and subject to demands. There is an inherent assumption that the quality of the technical work is excellent, and the focus is organizational or institutional. With sufficient time this lack of emphasis is eroding the quality presumptions to the point where they no longer hold sway.

rk, wellspring of ideas and vital communication mechanism. Whether in the service of publishing cutting-edge research, or providing quality checks for Laboratory research or engineering design, its primal function is the same: quality, defensibility and clarity are derived through its proper application. In each of its forms, peer review has an irreplaceable core of community wisdom, culture and self-policing. With its demise, each of these is at risk of dying too. Rebuilding everything we are tearing down is going to be expensive, time-consuming and painful.

Doing peer review isn’t given much weight professionally, whether you’re a professor or working in a private or government lab. Peer review won’t give you tenure, or pay raises or other benefits; it is simply a moral act as part of the community. This character as an unrewarded moral act gets to the issue at the heart of things. Moral acts and “doing the right thing” are not valued today, nor are there definable norms of behavior that drive things. Peer review simply takes the form of an unregulated professional tax, pro bono work. The way to fix this is to change the system to value and reward good peer review (and by the same token punish bad review in some way). This would be a positive side of modern technology, and a welcome one to see, since the demise of peer review is driven to some extent by negative aspects of modernity, as I will discuss at the end of this essay.

As a result reviewers rarely do a complete or good job of reviewing things, as they understand what the expected result is. Thus the review gets hollowed out from its foundation because the recipient of the review expects to get more than just a passing grade; they expect to get a giant pat on the back. If they don’t get their expected results, the reaction is often swift and punishing to those finding the problems. Often those looking over the shoulder are equally unaccepting of problems being found. Those overseeing work are highly political and worried about appearances or potential scandal. The reviewers know this too, and they know that a bad review won’t result in better work; it will just be trouble for those being reviewed. The end result is that peer review is broken: the review itself is hollow, and the reviewers go easy on the work because of the explicit expectations, the implicit punishments and the lack of follow-through on any problems that might be found.

We then get to the level above those being reviewed and closer to the source of the problem, the political system. Our political systems are poisonous to everything and everyone. We do not have a political system, perhaps anywhere in the world, that is functioning to govern. The result is a collective inability to deal with issues, problems and challenges at a massive scale. We see nothing but stagnation and blockage. We have a complete lack of will to deal with anything that is imperfect. Politics is always present and important because science and engineering are still intrinsically human activities, and humans need politics. The problem is that truth and reality must play some normative role in decisions. The rejection of effective peer review is a rejection of reality as being germane and important in decisions. This rejection is ultimately unstable and unsustainable. The only question is when and how reality will impose itself, but it will happen, and in all likelihood through some sort of calamity.

To get to a better state vis-à-vis peer review, trust and honesty need to become a priority. This is a piece of a broader rubric for progress toward a system that values work that is high in quality. We are not talking about excellence as superficially declared by the branding exercise peer review has become, but the actual achievement of unambiguous excellence. The combination of honesty, trust and the search for excellence and achievement is needed to begin to fix our system. Much of the basic structure of our modern society is arrayed against this sort of change. We need to recognize the stakes in this struggle and prepare ourselves for difficult times. Producing a system that supports something that looks like peer review will be a monumental struggle. We have become accustomed to a system that feeds on false excellence and achievement and celebrates scandal as an opiate for the masses.

simply lie to ourselves about how good everything is and how excellent all of us are.


of decay ultimately ends the ability to conduct a peer review at all. Moreover, the culture that is arising in science acts as a further inhibition to effective review by removing the attitudes necessary for success from the basic repertoire of behaviors.

It has been a long time since I wrote a list post, and it seemed a good time to do one. They’re always really popular online, and it’s a good way to survey something. Looking forward into the future is always a nice thing when you need to be cheered up. There are lots of important things to do, and lots of massive opportunities. Maybe if we can muster our courage and vision we can solve some important problems and make a better world. I will cover science in general, and hedge the conversation toward computational science, because that’s what I do and know the most about.

Fixing the research environment and encouraging risk taking, innovation and tolerance for failure. I put this first because it impacts everything else so deeply. There are many wonderful things that the future holds for all of us, but the overall research environment is holding us back from the future we could be having. The environment for conducting good, innovative, game-changing research is terrible, and needs serious attention. We live in a time where all risk is shunned and any failure is punished. As a result innovation is crippled before it has a chance to breathe. The truth is that it is a symptom of a host of larger societal issues revolving around our collective governance and capacity for change and progress.

Somehow we have gotten the idea that research can be managed like a construction project, and that such management is a mark of quality. Science absolutely needs great management, but the current brand of scheduled breakthroughs, milestones and micromanagement is choking the science away. We have lost the capacity to recognize that current management is only good for leeching money out of the economy for personal enrichment, and terrible for the organizations being managed, whether it’s a business, laboratory or university. These current fads are oozing their way into every crevice of research, including higher education where so much research happens.
The result is a headlong march toward mediocrity and the destruction of the most fertile sources of innovation in the society. We are living off the basic research results of 30-50 years past, and creating an environment that will assure a less prosperous future. This plague is the biggest problem to solve but is truly reflective of a broader cultural milieu and may simply need to run its disastrous course.

Over the weekend I read about the difference between first- and second-level thinking. First-level thinking looks for the obvious and superficial as a way of examining problems, issues and potential solutions. It is dealing wit