You can only exceed your limits if you’ve discovered them.

― Roel van Sleeuwen

About 15 years ago I wrote a simple paper with Len Margolin about options for modifying limiters used in high-resolution schemes. Like so much else, the paper generally didn’t get the attention it deserved. It was studied by folks at Brown University and repurposed as a general positivity limiter. I will grant that the researchers at Brown did some really good work with the limiter, especially in proving that it preserves accuracy (the positivity-preserving limiter also preserves the design accuracy of the original reconstruction). I returned to this form in one of my recent papers, “Reconsidering Remap Methods,” published earlier this year and the topic of a number of blog posts. Last week I realized that even more can be done with it, and that the form is really convenient for expressing a wide variety of nonlinear stability mechanisms in a more uniform manner.

The moment that crystallized my thinking was a re-reading and analysis of a limiter devised by Phil Colella and Michael Sekora for the PPM algorithm. This limiter was a “competitor” to an entirely different “limiting” strategy that I worked on with Jeff Greenough and Jim Kamm. I realized that the two limiters could be written in very nearly identical ways. Moreover, both of these ideas can be viewed as rather direct descendants of work by H. T. Huynh and A. Suresh from the mid-to-late 1990s. Each method attempts to extend nonlinear stability ideas beyond simply preserving monotonicity, to preserving valid extrema in solutions. Monotonicity preservation has the problem of seriously damping extrema with the limiter. All of these limiters can be expressed as different choices for a common form, allowing direct comparison and letting ideas be mixed and matched effectively.


So, what is this common form?

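Given a polynomial (or perhaps more general function) representation of the solution in a computational cell, $P_j(\theta)$ with $\theta \in [-1/2, 1/2]$, where

$$\bar u_j = \int_{-1/2}^{1/2} P_j(\theta)\,d\theta,$$

the limiter is implemented as

$$\tilde P_j(\theta) = \bar u_j + \phi_j\left(P_j(\theta) - \bar u_j\right),$$

where $\phi_j$ is the general limiter and $0 \le \phi_j \le 1$. Because $P_j - \bar u_j$ integrates to zero over the cell, the limited reconstruction keeps the same cell average for any $\phi_j$, so conservation is untouched. The limiter has a general form,

$$\phi_j = \min\left(1, \frac{\text{Standard}}{\text{Reconstruction Choice}}\right).$$

The “Standard” is the test that defines the nonlinear control of the solution while the “Reconstruction Choice” is the feature being controlled. I will give some examples below. It is important to note that $\phi_j = 0$ is the reconstruction that produces an upwind scheme, the canonical linear monotonicity-preserving (positive) method, but only first-order accurate. Higher-order polynomials can be defined for higher accuracy, but without the limiter they are invariably oscillatory.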
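A minimal Python sketch of the general form may help fix ideas. It assumes the reconstruction has already been sampled at a few points in the cell; `general_limiter` and `limit_reconstruction` are hypothetical names, and returning $\phi_j = 1$ when the reconstruction choice is zero is my own convention for the case where there is nothing to limit.

```python
def general_limiter(standard, choice):
    """phi = min(1, Standard / Reconstruction Choice).

    Assumes the ratio is non-negative, as it is for every choice in this post.
    A zero reconstruction choice means there is nothing to limit, so phi = 1
    (a convention assumed here, not taken from a specific paper).
    """
    if choice == 0.0:
        return 1.0
    return min(1.0, standard / choice)


def limit_reconstruction(p_values, ubar, phi):
    """Pull sampled reconstruction values toward the cell average: ubar + phi*(P - ubar)."""
    return [ubar + phi * (p - ubar) for p in p_values]


# phi = 0 collapses to the piecewise-constant (first-order upwind) reconstruction,
# phi = 1 leaves the high-order reconstruction untouched.
samples = [0.5, 1.0, 1.5]
print(limit_reconstruction(samples, 1.0, 0.0))  # [1.0, 1.0, 1.0]
print(limit_reconstruction(samples, 1.0, 1.0))  # [0.5, 1.0, 1.5]
```

Every sampled value is pulled toward the average by the same factor, so all of the interesting behavior lives in how $\phi_j$ is chosen.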

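The most common choice is the definition of a positivity-preserving limiter. In this case

$$\text{Standard} = \bar u_j - u_{\min},$$

where $u_{\min}$ is the lowest value of $u$ that will be tolerated for physical reasons. Next our reconstruction is interrogated,

$$\text{Reconstruction Choice} = \bar u_j - \min_{\theta} P_j(\theta).$$

Whenever the limiter is active, the minimum of $\tilde P_j$ is then exactly $u_{\min}$ (assuming the cell average itself satisfies $\bar u_j \ge u_{\min}$).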
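Here is a sketch of the positivity-preserving choice for a parabola written in the cell coordinate as $P_j(\theta) = a_0 + a_1\theta + a_2\theta^2$, $\theta \in [-1/2, 1/2]$. The coefficient representation, the exact minimum search and the function names are assumptions for illustration; as I understand it, the Brown papers evaluate the minimum at a set of quadrature points rather than exactly.

```python
def parabola_min(a0, a1, a2):
    """Exact minimum of P(t) = a0 + a1*t + a2*t**2 over t in [-1/2, 1/2]."""
    candidates = [a0 - 0.5 * a1 + 0.25 * a2, a0 + 0.5 * a1 + 0.25 * a2]
    if a2 > 0.0:  # an interior minimum is only possible for an upward-opening parabola
        t_star = -a1 / (2.0 * a2)
        if -0.5 < t_star < 0.5:
            candidates.append(a0 + a1 * t_star + a2 * t_star * t_star)
    return min(candidates)


def positivity_phi(a0, a1, a2, u_min):
    """phi = min(1, (ubar - u_min) / (ubar - min P)); assumes ubar >= u_min."""
    ubar = a0 + a2 / 12.0  # cell average of the parabola over [-1/2, 1/2]
    standard = ubar - u_min
    choice = ubar - parabola_min(a0, a1, a2)
    if choice <= 0.0:  # constant reconstruction: nothing to limit
        return 1.0
    return min(1.0, standard / choice)


def limit_parabola(a0, a1, a2, phi):
    """Coefficients of ubar + phi*(P - ubar); the cell average is unchanged."""
    ubar = a0 + a2 / 12.0
    return ubar + phi * (a0 - ubar), phi * a1, phi * a2


# A steep linear profile whose left edge dips below zero.
coeffs = (0.1, 1.0, 0.0)
phi = positivity_phi(*coeffs, u_min=0.0)
print(phi)  # approximately 0.2
print(parabola_min(*limit_parabola(*coeffs, phi)))  # approximately 0.0
```

The limited profile touches $u_{\min}$ but does not cross it, and only the cells that actually violate the bound are modified.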

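The same can be done for monotonicity-preserving limiters. Here the most common application is “slope-limiting,” where the reconstruction is linear, $P_j(\theta) = \bar u_j + s_j\theta$, and a common choice is

$$\text{Standard} = \operatorname{minmod}\left(s_j,\; 2\left(\bar u_j - \bar u_{j-1}\right),\; 2\left(\bar u_{j+1} - \bar u_j\right)\right);$$

here $\operatorname{minmod}$ is the famous minimum modulus function that returns the smallest-magnitude argument if the arguments all have the same sign, and zero otherwise. The reconstruction choice is

$$\text{Reconstruction Choice} = s_j,$$

where $s_j$ is the “high-order” slope that you desire to use and control the stability of.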
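A sketch of the slope-limiting choice, assuming unit cell spacing so that differences of cell averages act as slopes; `minmod` follows the definition above and `slope_phi` is a hypothetical name. The factor of 2 on the one-sided differences is the common monotonized-central bound, which I am assuming here.

```python
def minmod(*args):
    """Smallest-magnitude argument if all arguments share a sign, otherwise zero."""
    if all(a > 0.0 for a in args):
        return min(args)
    if all(a < 0.0 for a in args):
        return max(args)
    return 0.0


def slope_phi(u_left, u_center, u_right, s_high):
    """phi = min(1, minmod(s, 2*D-, 2*D+) / s) for a high-order slope s."""
    if s_high == 0.0:
        return 1.0
    standard = minmod(s_high, 2.0 * (u_center - u_left), 2.0 * (u_right - u_center))
    return min(1.0, standard / s_high)


# Smooth monotone data keeps the high-order slope; a local maximum flattens it.
print(slope_phi(0.0, 1.0, 2.0, 1.0))  # 1.0
print(slope_phi(0.0, 1.0, 0.0, 0.5))  # 0.0
```

The second call shows the damping problem directly: at any local maximum of the cell averages the slope is set to zero, whether or not the extremum is a valid, smooth one.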

For extrema preservation I will provide a couple of choices, one from my own work and one from Colella & Sekora. In both cases the extrema preservation will only be applied if a local extremum is detected by the scheme. Generally speaking, a monotonicity-preserving limiter will help do this by setting $\phi_j = 0$ there, which flattens the reconstruction at the extremum.
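The extremum test is not spelled out above, so the sketch below assumes the usual one: a cell is flagged when the one-sided differences of the cell averages change sign (or one of them vanishes, so flat data is flagged too). The helper names are hypothetical, and `select_phi` simply switches between a monotonicity-preserving value and an extremum-preserving one.

```python
def is_local_extremum(u_left, u_center, u_right):
    """True when the one-sided differences around the cell do not share a strict sign."""
    return (u_right - u_center) * (u_center - u_left) <= 0.0


def select_phi(u_left, u_center, u_right, phi_monotone, phi_extremum):
    """Use the extremum-preserving limiter only where an extremum is detected."""
    if is_local_extremum(u_left, u_center, u_right):
        return phi_extremum
    return phi_monotone


print(is_local_extremum(0.0, 1.0, 0.0))  # True
print(is_local_extremum(0.0, 1.0, 2.0))  # False
```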

The idea that I worked on had three key concepts: don’t give up on the original high-order scheme yet; at an extremum an ENO or WENO scheme might be useful; and the monotonicity-preserving method has good nonlinear stability properties. My limiter worked on edge values that then helped define the reconstruction polynomial. It turns out that the general limiter can work here too,

$$u_{j+1/2} = u^{MP}_{j+1/2} + \phi_{j+1/2}\left(u^{HO}_{j+1/2} - u^{MP}_{j+1/2}\right),$$

and everything else follows. The parts of the limiter are

$$\text{Standard} = \operatorname{minmod}\left(u^{HO}_{j+1/2} - u^{MP}_{j+1/2},\; u^{WENO}_{j+1/2} - u^{MP}_{j+1/2}\right),$$

with $u^{MP}_{j+1/2}$ being the monotonicity-preserving edge value, $u^{HO}_{j+1/2}$ the high-order edge value and $u^{WENO}_{j+1/2}$ the WENO edge value. Next, the limiter is completed with

$$\text{Reconstruction Choice} = u^{HO}_{j+1/2} - u^{MP}_{j+1/2}.$$

The result is the median of the three edge values. Part of the key idea is to use two complementary nonlinear stability properties, from monotonicity preservation and from WENO, to assure the stability of the method should the high-order method be chosen. In this case the high-order method is bounded by two nonlinearly stable approximation choices. I thought it actually worked pretty well.
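A sketch of this edge-value limiter, assuming the three candidate edge values have already been computed by whatever MP, high-order and WENO formulas you prefer; the function names are hypothetical. Written this way the limiter returns the median of the three values, which is one concrete reading of “bounded by two nonlinearly stable approximation choices.”

```python
def minmod2(a, b):
    """Two-argument minmod: the smaller magnitude if the signs agree, else zero."""
    if a > 0.0 and b > 0.0:
        return min(a, b)
    if a < 0.0 and b < 0.0:
        return max(a, b)
    return 0.0


def hybrid_edge_value(u_ho, u_mp, u_weno):
    """Edge value u_mp + phi*(u_ho - u_mp) with phi from the general limiter."""
    choice = u_ho - u_mp  # Reconstruction Choice
    if choice == 0.0:
        return u_ho
    standard = minmod2(u_ho - u_mp, u_weno - u_mp)  # Standard
    phi = min(1.0, standard / choice)
    return u_mp + phi * choice


# High-order value lies between the MP and WENO values: it is kept.
print(hybrid_edge_value(1.0, 0.0, 2.0))  # 1.0
# High-order value falls outside both on the same side: fall back to the MP value.
print(hybrid_edge_value(-1.0, 0.0, 2.0))  # 0.0
```

When the high-order value overshoots past the WENO value, $\phi_{j+1/2}$ drops below one and the result lands on the WENO value instead, which is how a smooth extremum can survive.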

Colella and Sekora had a different approach based on looking at the local curvature (second derivative) of the parabolic reconstruction used in PPM. If the second derivatives of the reconstruction and of its neighborhood all have the same sign and roughly the same magnitude, the extremum is viewed as valid and worth propagating undistorted by the sort of damping monotonicity-preserving methods produce. This approach can be implemented simply with the general limiter I discuss. First we need to define the second derivative of the polynomial reconstruction, $P_j''$; for the PPM parabola in the cell coordinate this is $P_j'' = 6\left(u_{j-1/2} - 2\bar u_j + u_{j+1/2}\right)$. In this case the specifics of the limiter are

$$\text{Standard} = \operatorname{minmod}\left(C\,\Delta^2\bar u_{j-1},\; C\,\Delta^2\bar u_j,\; C\,\Delta^2\bar u_{j+1},\; P_j''\right),$$

where

$$\Delta^2\bar u_k = \bar u_{k-1} - 2\bar u_k + \bar u_{k+1}$$

is the classical second derivative on the mesh and $C$ is a constant a bit larger than one. The choice of method to define the denominator in the limiter is

$$\text{Reconstruction Choice} = P_j''.$$

The resulting limiter can either be applied to the edge values or equivalently to the reconstructing polynomial.
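A simplified sketch of the curvature-based choice for one cell, built only from the ingredients above: cell averages on a uniform mesh, PPM edge values for cell $j$, and $c = 1.25$, the value I recall from Colella and Sekora, treated here as a tunable assumption. The published algorithm contains additional steps not reproduced here, and the function names are hypothetical.

```python
def minmod(*args):
    """Smallest-magnitude argument if all arguments share a sign, otherwise zero."""
    if all(a > 0.0 for a in args):
        return min(args)
    if all(a < 0.0 for a in args):
        return max(args)
    return 0.0


def colella_sekora_phi(u, j, u_left_edge, u_right_edge, c=1.25):
    """Curvature-based phi for cell j; u holds cell averages with j-2..j+2 valid."""
    # Classical mesh second differences in cells j-1, j, j+1.
    d2_mesh = [u[k - 1] - 2.0 * u[k] + u[k + 1] for k in (j - 1, j, j + 1)]
    # Second derivative of the PPM parabola in the unit cell coordinate.
    d2_poly = 6.0 * (u_left_edge - 2.0 * u[j] + u_right_edge)
    if d2_poly == 0.0:
        return 1.0
    standard = minmod(*(c * d for d in d2_mesh), d2_poly)
    return min(1.0, standard / d2_poly)


def limit_edges(ubar, u_left_edge, u_right_edge, phi):
    """Apply phi to the edge values; this rescales the PPM parabola about ubar."""
    return ubar + phi * (u_left_edge - ubar), ubar + phi * (u_right_edge - ubar)


# Cell averages of -x**2 on unit cells: a smooth maximum at cell 2 is left alone.
u = [-(4 + 1 / 12), -(1 + 1 / 12), -1 / 12, -(1 + 1 / 12), -(4 + 1 / 12)]
print(colella_sekora_phi(u, 2, -0.25, -0.25))  # 1.0
# An odd-even oscillation has mixed-sign curvature and is flattened.
print(colella_sekora_phi([0.0, 1.0, 0.0, 1.0, 0.0], 2, 0.5, 0.5))  # 0.0
```

Scaling both edge deviations by $\phi_j$ scales the whole PPM parabola's deviation from $\bar u_j$ by the same factor, which is why applying the limiter to the edges or to the polynomial gives the same result.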

In my next post I will talk about how edge values can be used productively to improve the robustness, resolution and stability of these methods.

Set Goals, not Limits.

― Manoj Vaz

Rider, William J., and Len G. Margolin. “Simple modifications of monotonicity-preserving limiters.” Journal of Computational Physics 174.1 (2001): 473-488.

Qiu, Jianxian, and Chi-Wang Shu. “A Comparison of Troubled-Cell Indicators for Runge–Kutta Discontinuous Galerkin Methods Using Weighted Essentially Nonoscillatory Limiters.” SIAM Journal on Scientific Computing 27.3 (2005): 995-1013.

Zhang, Xiangxiong, and Chi-Wang Shu. “Maximum-principle-satisfying and positivity-preserving high-order schemes for conservation laws: survey and new developments.” Proceedings of the Royal Society of London A: Mathematical, Physical and Engineering Sciences. The Royal Society, 2011.

Zhang, Xiangxiong, and Chi-Wang Shu. “On maximum-principle-satisfying high order schemes for scalar conservation laws.” Journal of Computational Physics 229.9 (2010): 3091-3120.

Colella, Phillip, and Michael D. Sekora. “A limiter for PPM that preserves accuracy at smooth extrema.” Journal of Computational Physics 227.15 (2008): 7069-7076.

Suresh, A., and H. T. Huynh. “Accurate monotonicity-preserving schemes with Runge–Kutta time stepping.” Journal of Computational Physics 136.1 (1997): 83-99.

Huynh, Hung T. “Accurate upwind methods for the Euler equations.” SIAM Journal on Numerical Analysis 32.5 (1995): 1565-1619.

Rider, William J., Jeffrey A. Greenough, and James R. Kamm. “Accurate monotonicity- and extrema-preserving methods through adaptive nonlinear hybridizations.” Journal of Computational Physics 225.2 (2007): 1827-1848.

wheelhouse (along with all the hijinks that the child movie viewers will enjoy).


methods in CFD codes. Methods that were introduced at that time remain at the core of CFD codes today. The reason was the development of new methods that were so unambiguously better than the previous alternatives that the change was a fait accompli. Codes produced results with the new methods that were impossible to achieve with previous methods. At that time a broad and important class of physical problems in fluid dynamics was suddenly open to successful simulation. Simulation results were more realistic and physically appealing, and the artificial and unphysical results of the past were no longer a limitation.



virtually any conceivable standard. In addition, the new methods were neither overly complex nor expensive to use. The principles associated with their approach to solving the equations combined the best, most appealing aspects of previous methods in a novel fashion. They became the standard method almost overnight. This was accomplished because the methods were nonlinear even for linear equations, meaning that the domain of dependence for the approximation is a function of the solution itself. Earlier methods were linear, meaning that the approximation was the same without regard for the solution. Before the high-resolution methods you had two choices: either a low-order method that would wash out the solution, or a high-order method that would produce unphysical solutions. Theoretically the low-order solution is superior in a sense because the solution could be guaranteed to be physical. This happened because the solution was found using a great deal of numerical or artificial viscosity. The solutions were effectively laminar (meaning viscously dominated), thus lacking the energetic structures that make fluid dynamics so exciting, useful and beautiful.


safe to do so), and only use the lower-accuracy, dissipative method when absolutely necessary. Making these choices on the fly is the core of the magic of these methods. The new methods alleviated the bulk of this viscosity, but did not entirely remove it. This is good and important because some viscosity in the solution is essential to connect the results to the real world. Real-world flows all have some amount of viscous dissipation. This fact is essential for success in computing shock waves, where having dissipation allows the selection of the correct solution. In the case of simple hyperbolic conservation laws that define the inertial part of fluid dynamics, the low-order methods solve an equation with classical viscous terms that match those seen in reality, although the magnitude of the viscosity is generally much larger than in the real world. Thus these methods produce laminar (syrupy) flows as a matter of course. This makes these methods unsuitable for simulating most conditions of interest to engineering and science. It also makes these methods very safe to use, virtually guaranteeing a physically reasonable (if inaccurate) solution.

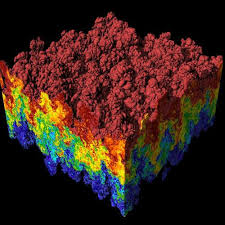


The new methods get rid of these large viscous terms and replace them with a smaller viscosity that depends on the structure of the solution. The results with the new methods are stunningly different and produce the sort of rich nonlinear structures found in nature (or something closely related). Suddenly codes produced solutions that matched reality far more closely. It was a night-and-day difference in method performance; once you tried the new methods there was no going back.

The publisher is the American Mathematical Society (AMS) and the book is a wonderfully technical and personal account of the fascinating and influential life of Peter Lax. Hersh’s account goes far beyond the obvious public and professional impact of Lax into his personal life and family, although these are colored heavily by the greatest events of the 20th Century. Lax also has a deep connection to three themes in my own life: scientific computing, hyperbolic conservation laws and Los Alamos. He was a contributing member of the Manhattan Project despite being a corporal in the US Army and only 18 years old! Los Alamos, and John von Neumann in particular, had an immense influence on his life’s work, with the fingerprints of that influence all over his greatest professional achievements.


me ten years ago, I’d have thought aliens delivered the technology to humans.


In a sense the modern trajectory of supercomputing is quintessentially American: bigger and faster is better by fiat. Excess and waste are virtues rather than flaws. Except the modern supercomputer is not better, and not just because it doesn’t hold a candle to the old Crays. These computers just suck in so many ways; they are soulless and devoid of character. Moreover they are already a massive pain in the ass to use, and plans are afoot to make them even worse. The unrelenting priority of speed over utility is crushing. Terrible is the only path to speed, and terrible is coming with a tremendous cost too. When a colleague recently quipped that she would like to see us get a computer we actually wanted to use, I’m convinced that she had the older generation of Crays firmly in mind.


We have to go back to the mid-1990s and the combination of computing and geopolitical issues that existed then. The path taken by the classic Cray supercomputers appeared to be running out of steam in terms of improving performance. The attack of the killer micros was defined as the path to continued growth in performance. Overall hardware functionality was effectively abandoned in favor of pure performance. The pure performance was only achieved in the case of benchmark problems that had little in common with actual applications. Performance on real applications took a nosedive, a nosedive that the benchmarks conveniently covered up. We still haven’t woken up to the reality.

are also prone to failures where ideas simply don’t pan out. Without the failures you don’t have the breakthroughs, hence the fatal nature of risk aversion. Integrated over decades of timid, low-risk behavior, we have the makings of a crisis. Our low-risk behavior has already created a vast, immeasurable gulf between what we can do today and what we should be doing today.
