“‘You’re overthinking it.’ ‘I have anxiety. I have no other type of thinking available.’” ― Matt Haig, The Midnight Library

tl;dr

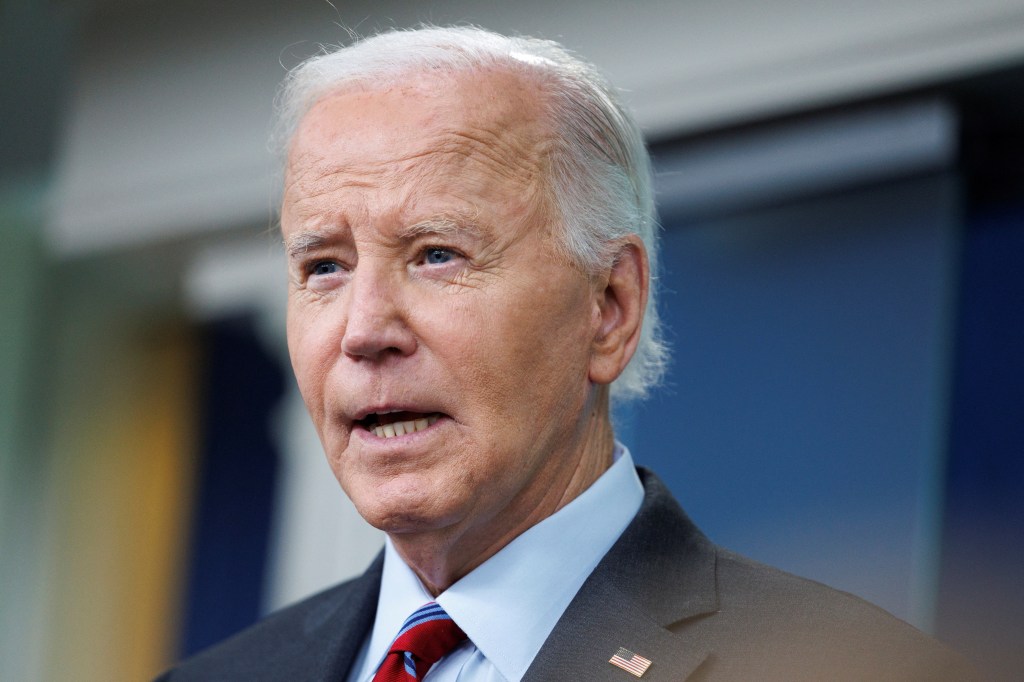

Anxiety is sky-high everywhere you look. The uncertainty is huge and palpable. The upcoming election feels like either doom or a massive relief, no matter who you support. In the meantime, everything seems perpetually frozen. We are all waiting to see what kind of World will greet us in 2025. Will we have hope, or enter an apocalyptic hellscape? The likely outcome will be something in between, no matter who wins.

“Maturity, one discovers, has everything to do with the acceptance of ‘not knowing.’” ― Mark Z. Danielewski, House of Leaves

What I see?

The Nation seems to be in purgatory. You feel this way no matter who you support. Both sides are running on fear of the other. The stakes of the election seem impossibly high, and this is paralyzing everyone. All decisions and actions stemming from our governance have ground to a halt. I see it at work, where nothing is happening. Everything seems to be frozen in place, waiting for the resolution. That resolution could be swift on November 5th, and that would be kind and merciful. Or it could take all the way into December and even to January 6th. This would be brutal, and the freeze would only deepen.

“Our anxiety does not empty tomorrow of its sorrows, but only empties today of its strengths.” ― C. H. Spurgeon

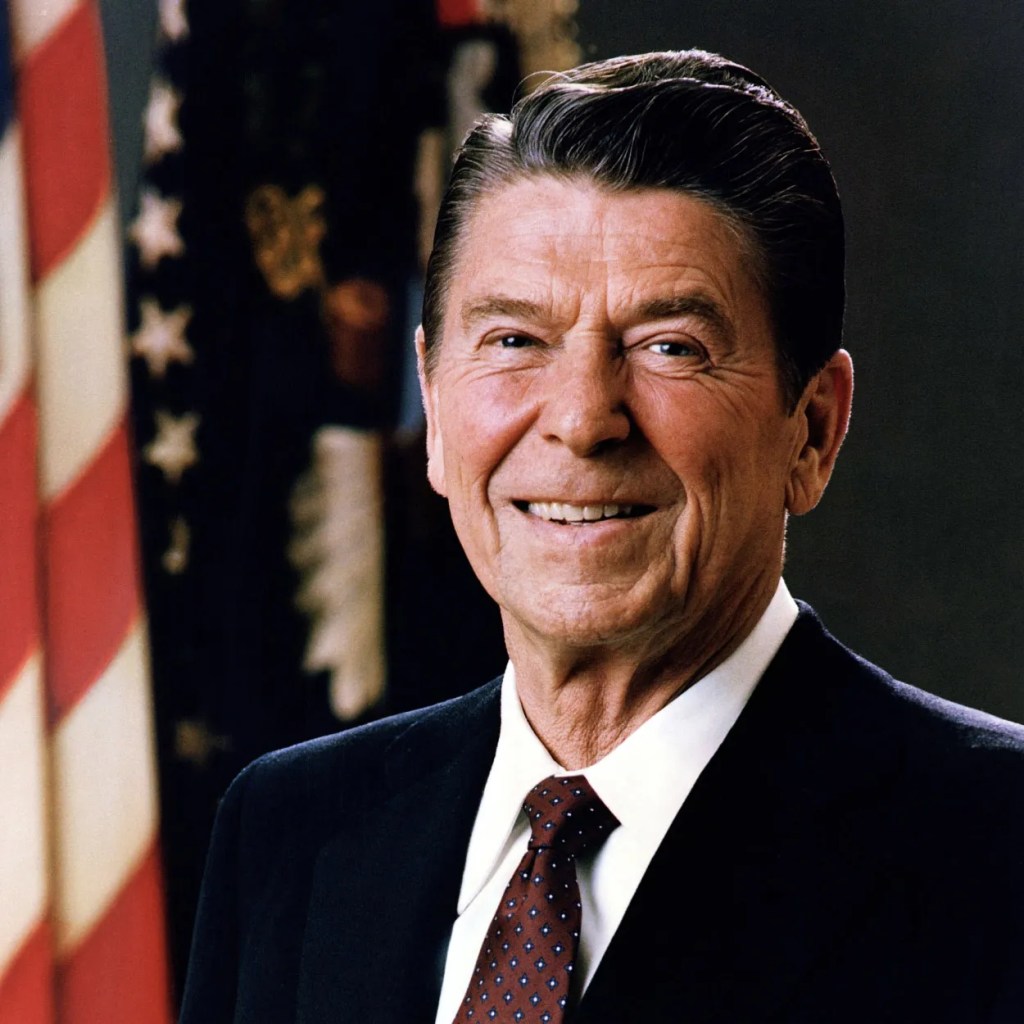

On the one hand, the society we know is being described in terms of doom and horror. For some people this feels true, and they crave change. It seems to me that they simply want to elect a destroyer who will sweep aside the reality that isn’t working for them. They care little about the nature of the destruction. The system we have today is not working for them. This is not entirely true of course. Others (Elon Musk, Peter Thiel, …) see a system that stands in the way of their greed and domination. In Trump, they see their savior, or their ally, or their dupe, and the path toward annihilation of society’s order. I see the problems too, but want someone to fix them.

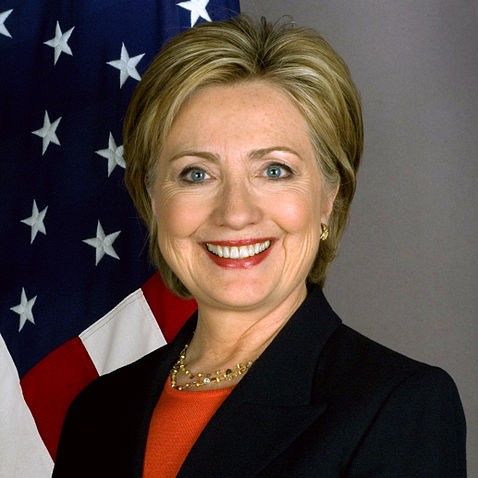

On the other side, we have normalcy. Ironically, this normalcy is both the problem and the strength. Part of the normal is the multiple factions comprising the Democratic party. There are many entrenched interests. We have the people who want social progress and acceptance for women and LGBTQ people. The biggest bloc of people is the educated and succeeding part of America. These people are generally okay and doing alright in the current system. They see tearing the current system apart as dangerous. They don’t like the system and often see imperfections, but don’t want to destroy it. They would get on board to fix it. The key is that many people benefit from the current system.

“The only thing that makes life possible is permanent, intolerable uncertainty: not knowing what comes next.” ― Ursula K. Le Guin, The Left Hand of Darkness

What is the reality?

Somewhere between the nihilism of the Trump faction and the normies is truth. Our system is a fucking mess. We have profoundly great inequality in society. We see those who are losing, the poor and blue-collar folks, teaming up with the ultra-rich to take on the educated and reasonably well-off. Social and work life is incredibly uncomfortable. This is due to political, social, and sexual dynamics that are a powder keg. We all walk on eggshells almost everywhere. The homeless population is exploding. They are the sign that many are falling off the edge of society. We are not taking care of our citizens; we are throwing them to the wolves. The government over-regulates and is incredibly inefficient. Everything is getting worse and nothing is getting fixed (systems, roads, etc.). From where I sit, I can see multiple National security programs floundering under the weight of all of this.

At the forefront of our woes as a society are young men. Current society is not working for them. I see it in the young men I know personally and at work. Many of them are flocking toward Trump. His fake masculinity and toughness appeal to them. He puts on an MMA/WWE version of masculinity that is cartoonish. The problem for the Democrats is a lack of response. Tim Walz is part of the reaction. He represents a better, more modern form of masculinity, but his impact is dimming. The whole thing has taken gender politics to new dysfunctional highs. Women are under siege from the right, and the type of man the right promotes is truly toxic. The problem is that the Democrats do not offer something in return. They support movements that seemingly oppose men. This may cost them the election.

“Be the change that you wish to see in the world.” ― Mahatma Gandhi

What I fear?

So you, the reader, might be wondering why, with all the problems I see, I would support the normie point of view. I really don’t. The issue is that Trump and MAGA won’t fix any problems. They only destroy and only work to make our problems worse. Trump will surely make the inequality worse and do nothing for the common man. He will give his supporters “red meat” by attacking their enemies and doing various cruel things. At the same time, he will enable people like Elon Musk to get even richer. They will continue to exist in a world that 99.99% of Americans can’t fathom. Trump won’t make political corruption go away. He will weaponize it and redirect it to benefit himself. Putting a criminal and corrupt man in charge will only supercharge the problem.

“I must not fear. Fear is the mind-killer. Fear is the little-death that brings total obliteration. I will face my fear. I will permit it to pass over me and through me. And when it has gone past I will turn the inner eye to see its path. Where the fear has gone there will be nothing. Only I will remain.” ― Frank Herbert

My real fear is that none of our problems as a Nation will be addressed for many more years. All the problems will just get worse while Americans continue to be divided into warring tribes. I fear bigotry and hatred will be legitimized and supercharged. Progress for women and LGBTQ people will be erased. The American evangelical movement will rule like an American Taliban, imposing its morality on everyone. The homeless population will grow and be criminalized rather than helped. Regulation will be destroyed and greed will be pursued absent any morality or ethics. Those with money will escape law, morality, and justice with even greater ease. If you support Trump you are not necessarily a bigot, but you are okay with being ruled by one.

The worst thing I can imagine is myself dying with an epitaph: “born into a democracy; died under a dictator.” America will be swept aside and cast into the dustbin. Worse yet, we could descend into war or simply be auctioned off. If the level of incompetence is allowed to continue unabated our Nation cannot survive. We will fall into incompetence and corruption fueled purely by greed and malice.

Were it the malice of a foreign invader, it wouldn’t hurt so much. This is the worst case, but there is little doubt that Trump 2.0 would be a giant shit show. Trump 1.0 was a shit show, but at least some adults with actual ethics were there to limit it. The adults are all gone now.

“An abnormal reaction to an abnormal situation is normal behavior.” ― Viktor Frankl

How to Cope?

The thing to remember most of all is that none of us can control what is going to happen. This is the result of forces and events beyond any of our control. We are taking part, but only in the smallest way. We are for the most part observers. We will react to the events and our lives will be shaped by them. The shape of the future will be drawn by what is about to occur. This is a big deal. Not knowing what this future holds is the source of the anxiety.

I think the first thing to wrap your arms around is that things will be bad no matter what. It is a matter of degree. History in the long run is on the side of all of this shit working out. The USA has survived many horrible events and eras. We have continued to exist and even thrive through it all. We will most likely muddle our way through this disaster. In a sense, this is the answer of tragic optimism. Nonetheless, this is a moment of peril for the USA not experienced since the Civil War. Even the fascism of World War II didn’t pose this level of threat. Now the fascist threat is inside our Nation. About half the voters seem okay with being led by that fascist.

“Forces beyond your control can take away everything you possess except one thing, your freedom to choose how you will respond to the situation.” ― Viktor Frankl

Nonetheless, we should probably be OK, eventually. We’ve been alright before and weathered storms. The biggest question in that statement is how much blood will be shed to get us there. Can we navigate this crisis without killing a lot of our fellow citizens? Can we break the fever and start solving our very real problems in a rational, constructive way? The alternative is a rampant destruction of our institutions and governance followed by a reconstruction. At best, this will be a near-death experience. It will be a truly shitty way to exist.

Americans are fond of saying they hate the government. The thing is that our government is us, and not some separate entity. The lesson is that we hate ourselves. The choice is ours, but I’m not confident we have wisdom. It would be far better to rectify problems and create a government we can love and be proud of. A government that reflects the best of our people and our legacy. In about a week, the future will begin to show itself.

“Two things are infinite: the universe and human stupidity; and I’m not sure about the universe.” ― Albert Einstein