We are losing the ability to understand anything that’s even vaguely complex.

― Chuck Klosterman

I get asked, “what do you do?” quite often in conversation, and I realize the truth needs to be packaged carefully for most people. One of my issues is that I advertise what I do on my body with some incredibly nerdy tattoos, including an equation that describes one form of the second law of thermodynamics. What I do is complex and highly technical, full of incredible subtlety. Even when talking with someone from a nearby technical background, the subtlety of approximating physical laws numerically in a manner suitable for computing can be daunting. For someone without a technical background it is positively alien. This character comes into play rather acutely in the design and construction of research programs, where complex, technical and subtle does not sell. This is especially true in today’s world, where expertise and knowledge are regarded as suspicious, dangerous and threatening by so many. One of the biggest insults to hurl at someone today is to accuse them of being one of the “elite”. Increasingly it is clear that this isn’t just an American issue, but worldwide in its scope. It is a clear and present threat to a better future.

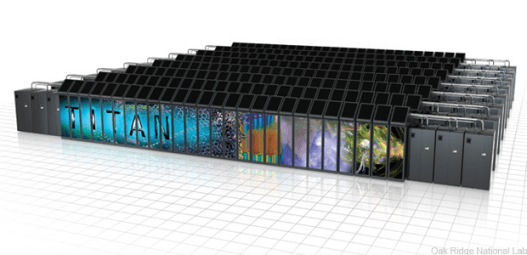

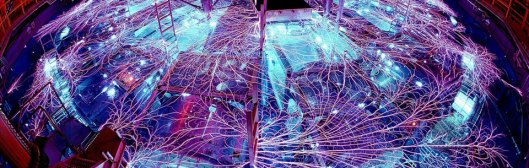

I’ve written often about the sorry state of high performance computing. Our computing programs are blunt and naïve, constructed to squeeze money out of funding agencies and legislatures rather than to get the job done. The brutal simplicity of the arguments used to support funding is breathtaking. Rather than construct programs to be effective and efficient, getting the best from every dollar spent, we construct programs to be marketed to the lowest common denominator. For this reason something subtle, complex and technical like numerical approximation gets no play. In today’s world subtlety is utterly objectionable and a complete buzz kill. We don’t care that it’s the right thing to do, or that its return is massively greater than that of simply building giant monstrosities of computing. It would take an expert from the numerical elite to explain it, and those people are untrustworthy nerds, so we will simply get the money to waste on the monstrosities instead. So here I am, an expert and one of the elite, using my knowledge and experience to make recommendations on how to be more effective and efficient. You’ve been warned.

Truth is much too complicated to allow anything but approximations.

— John Von Neumann

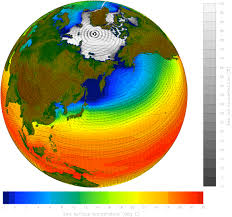

If we want to succeed at remaining a high performance computing superpower, we need to change our approach, and fast. Part of what is needed is a greater focus on numerical approximation. This is part of a deep need to refocus on the more valuable aspects of the scientific computing ecosystem. The first thing to recognize is that our current hardware-first focus is oriented toward the least valuable part of the ecosystem, the computer itself. A computer is necessary, but horribly insufficient for high performance computing supremacy. The real value for scientific computing lies at the opposite end of the spectrum, where work is grounded in physics, engineering and applied mathematics.

Although this may seem a paradox, all exact science is dominated by the idea of approximation.

— Bertrand Russell

I’ve made this argument before and it is instructive to unpack it. The model solved via simulation is the single most important aspect of the simulation. If the model is flawed, no amount of raw computer speed, numerical accuracy, or efficient computer code can rescue the solution and make it better. The model must be changed, improved, or corrected to produce better answers. If a model is correct, the accuracy, robustness, fidelity and efficiency of its numerical solution are essential. Everything upstream of the numerical solution, toward the computer hardware, is less important. We can move down the chain of activities, all of which are necessary, and see the same effect: the further you get from the model of reality, the less efficient the measures are. This whole thing is referred to as an ecosystem these days, and every bit of it needs to be in place. What also needs to be in place is a sense of the value of each activity, with priority placed on those that have the greatest impact or the greatest opportunity. Instead of doing this today, we are focused on the thing with the least impact, farthest from reality, and starving the most valuable parts of the ecosystem. One might argue that the hardware is a subject of opportunity, but the truth is the opposite. The environment for improving the performance of hardware is at a historical nadir; Moore’s law is dead, dead, dead. Our focus on hardware is throwing money at an opportunity that has passed into history.

I’m a physicist, and we have something called Moore’s Law, which says computer power doubles every 18 months. So every Christmas, we more or less assume that our toys and appliances are more or less twice as powerful as the previous Christmas.

— Michio Kaku

At some point, Moore’s law will break down.

— Seth Lloyd

There is one word to describe this strategy: stupid!

At the core of the argument is a strategy that favors brute force over subtleties understood mainly by experts (or the elite!). Today the brute force argument always takes the lead over anything that might require some level of explanation. In modeling and simulation, the esoteric activities such as the actual modeling and its numerical solution are quite subtle and technical in detail compared to raw computing power, which the layperson can understand with ease. This is the reason computing power gets the lead in the program, not its efficacy in improving the bottom line. As a result our high performance computing world is dominated by meaningless discussions of computing power defined by a meaningless benchmark. The political dynamic is basically a modern-day “missile gap” like the one we had during the Cold War. It has exactly as much virtue as the original “missile gap”; it is a pure marketing and political tool with absolutely no technical or strategic validity aside from its ability to free up funding.

Each piece, or part, of the whole of nature is always merely an approximation to the complete truth, or the complete truth so far as we know it. In fact, everything we know is only some kind of approximation because we know that we do not know all the laws as yet.

— Richard P. Feynman

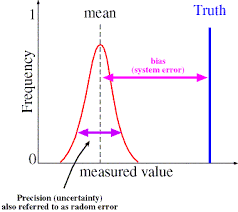

Once you have an entire program founded on bullshit arguments, it is hard to work your way back to technical brilliance. It is easier to double down on the bullshit and simply define everything in terms of the original fallacies. A big part of the problem is the way modern verification and validation are applied to the process. Both verification and validation are modern practices for accumulating evidence on the accuracy, correctness and fidelity of computational simulations. Validation is the comparison of simulation with experiments, and in this comparison the relative correctness of models is determined. Verification determines the correctness and accuracy of the numerical solution of the model. Together the two activities should help energize high quality work. In reality most programs consider them to be nuisances and box-checking exercises to be finished and ignored as soon as possible. Programs like to say they are doing V&V, but don’t want to emphasize it or pay for doing it well. V&V is a mark of quality, but the programs want its approval rather than to attend to its results. Even worse, if the results are poor or indicate problems, they are likely to be ignored or dismissed as being inconvenient. Programs get away with this because the practice of V&V is technical and subtle, and in the modern world highly susceptible to bullshit.
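One concrete piece of verification evidence is the observed order of convergence. The sketch below is a minimal illustration, not a method taken from any particular program: it assumes the same quantity has been computed on three meshes refined by a constant ratio, and that the error behaves like a single power of the mesh spacing. The function and argument names are hypothetical.

```python
import math


def observed_order(f_coarse, f_medium, f_fine, refinement_ratio):
    """Estimate the observed order of convergence from three mesh levels.

    Assumes f(h) ~ f_exact + C * h**p and a constant refinement ratio r > 1
    between successive meshes (h, h/r, h/r**2). No exact solution is needed.
    """
    coarse_change = f_coarse - f_medium
    fine_change = f_medium - f_fine
    if fine_change == 0 or coarse_change / fine_change <= 0:
        # Oscillatory or stalled sequences do not fit the single-power model.
        raise ValueError("solutions are not converging monotonically")
    return math.log(coarse_change / fine_change) / math.log(refinement_ratio)


# Manufactured data that behaves exactly like a second-order method.
def fake_result(h):
    return 1.0 + 3.0 * h**2


p = observed_order(fake_result(0.1), fake_result(0.05), fake_result(0.025), 2.0)
print(f"observed order: {p:.3f}")
```

If the observed order falls well below the formal order of the method, that is exactly the kind of inconvenient result that should be investigated rather than dismissed.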

Far better an approximate answer to the right question, which is often vague, than an exact answer to the wrong question, which can always be made precise.

— John W. Tukey

Numerical methods for solving models are even more technical and subtle. As such they are the focus of suspicion and ignorance. For high performance computing today they are considered to be yesterday’s work, a largely finished product that now simply needs a bigger computer to do better. In a sense this notion is correct: the bigger computer will produce a better result. The issue is that using computer power as the route to improvement is inefficient under the best of circumstances. We are not living under the best of circumstances! Things are far from efficient, as we have been losing the share of computer power advances useful for modeling and simulation for decades now. Let us be clear: we receive an ever-smaller proportion of the maximum computing power as each year passes. Thirty years ago we would commonly get 10, 20 or even 50 percent of the peak performance of the cutting edge supercomputers. Today even one percent of the peak performance is exceptional, and most codes doing real application work achieve significantly less than that. Worse yet, this dismal performance is getting worse with every passing year. This is one element of the autopsy of Moore’s law that we have been avoiding while its corpse rots before us.

So we are prioritizing improvement in an area where the payoffs are fleeting and suboptimal. Even these improvements are harder and harder to achieve as computers become ever more parallel and memory access costs become ever more extreme. Simultaneously we are starving the more efficient means of improvement of both resources and emphasis. Numerical methods and algorithms are two key areas not getting any significant attention or priority. Moreover, support for these areas is actually diminishing so that support for the inefficient hardware path can be increased. Let’s not mince words; we are emphasizing a crude, naïve and inefficient route to improvement at the cost of a complex and subtle route that is far more efficient and effective.

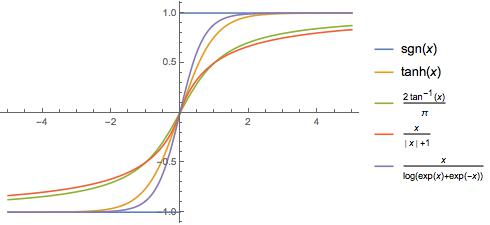

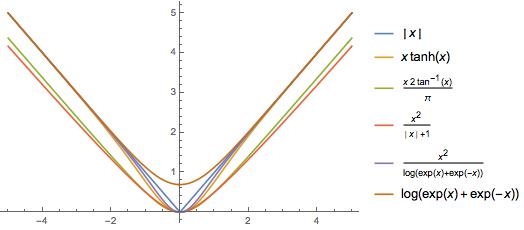

Numerical approximations and algorithms are complex and highly technical things, poorly understood by non-experts even if they are scientists. The relative merits of one method or algorithm compared to another are difficult to articulate; the comparison is highly technical and subtle. Since progress comes from creating new methods and algorithms, improvements are hard to explain to non-experts. In some cases new methods and algorithms produce breakthrough results and huge speed-ups. These cases are easy to explain. More generally a new method or algorithm produces subtle improvements, such as more robustness, flexibility or accuracy than the older options. Most of these changes are not obvious, but making this progress over time leads to enormous improvements that swamp the progress made by faster computers.

An expert is someone who knows some of the worst mistakes that can be made in his subject, and how to avoid them.

― Werner Heisenberg

The huge breakthroughs are few and far between, but they provide much greater value than any hardware over similar periods of time. Getting these huge breakthroughs requires continual investment in research for extended periods of time. For much of the time the research is mostly a failure, producing small or non-existent improvements, until suddenly it isn’t. Without the continual investment, the failure, and the expertise that failure produces, the breakthroughs will not happen. They are mostly serendipitous and the end product of many unsuccessful ideas. Today the failures and lack of progress are not supported; we exist in a system where insufficient trust exists to support the sort of failure needed for progress. The result is the addiction to Moore’s law and its seemingly guaranteed payoff, because it frees us from subtlety.

Often a sign of expertise is noticing what doesn’t happen.

― Malcolm Gladwell

A huge aspect of expertise is the taste for subtlety. Expertise is built upon mistakes and failure just as basic learning is. Without the trust to allow people to gloriously make professional mistakes and fail in the pursuit of knowledge, we cannot develop expertise or progress. All of this lands heavily on the most effective and difficult aspects of scientific computing: the modeling and the numerical solution of the models. Progress on these aspects is both highly rewarding in terms of improvement and very risky, being prone to failure. To compound matters, progress is often highly subjective, itself needing great expertise to explain and be understood. In an environment where the elite are suspect and expertise is not trusted, such work is unsupported. This is exactly what we see: the most important and effective aspects of high performance computing are being starved in favor of brutish and naïve aspects, which sell well. The price we pay for our lack of trust is an enormous waste of time, money and effort.

Wise people understand the need to consult experts; only fools are confident they know everything.

― Ken Poirot

Again, I’ll note that we still have so much to do. Numerical approximations for existing models are inadequate and desperately in need of improvement. We are burdened by theory that is insufficient and heavily challenged by our models. Our models are all flawed and the proper conduct of science should energize them to improve.

…all models are approximations. Essentially, all models are wrong, but some are useful. However, the approximate nature of the model must always be borne in mind… [Co-author with Norman R. Draper]

— George E.P. Box

…the solution of models via numerical approximations. The fact that numerical approximation is the key to unlocking its potential seems largely lost in the modern perspective, and is engaged in an increasingly naïve manner. For example, much of the dialog around high performance computing is predicated on the notion of convergence. In principle, the more computing power one applies to solving a problem, the better the solution. This is applied axiomatically and relies upon a deep mathematical result in numerical approximation. This heritage and emphasis are not considered in the conversation, to the detriment of its intellectual depth.

…systematically ignored by the dialog. The impact of this willful ignorance is felt across the modeling and simulation world; a general lack of progress and emphasis on numerical approximation is evident. We have produced a situation where the most valuable aspect of numerical modeling is not getting focused attention. People are behaving as if the major problems are all solved and not worthy of attention or resources. The nature of the numerical approximation is the second most important and impactful aspect of modeling and simulation work. Virtually all the emphasis today is on the computers themselves, based on the assumption of their utility in producing better answers. The most important aspect is the modeling itself; the nature and fidelity of the models define the power of the whole process. Once a model has been defined, the numerical solution of the model is the second most important aspect. The nature of this numerical solution depends most on the approximation methodology rather than the power of the computer.

So why are we so hell bent on investing in a more inefficient manner of progressing? Our mindless addiction to Moore’s law, which has provided improvements in computing power over the last fifty years that have in effect been free for the modeling and simulation community.

Our modeling and simulation programs are addicted to Moore’s law as surely as a crackhead is addicted to crack. Moore’s law has provided a means to progress without planning or intervention for decades; time passes and capability grows almost as if by magic. The problem we have is that Moore’s law is dead, and rather than moving on, the modeling and simulation community is attempting to raise the dead. By this analogy, the exascale program is basically designed to create zombie computers that completely suck to use. They are not built to get results or do science; they are built to get exascale performance on some sort of bullshit benchmark.

…approximations is risky and highly prone to failure. You can invest in improving numerical approximations for a very long time without any seeming progress until one gets a quantum leap in performance. The issue in the modern world is the lack of predictability of such improvements. Breakthroughs cannot be predicted and cannot be relied upon to happen on a regular schedule. A breakthrough requires innovative thinking and a lot of trial and error. The ultimate quantum leap in performance is founded on many failures and false starts. If these failures are engaged in a mode where we continually learn and adapt our approach, we eventually solve problems. The problem is that it must be approached as an article of faith, and cannot be planned. Today’s management environment is completely intolerant of such things, and demands continual results. The result is squalid incrementalism and an utter lack of innovative leaps forward.

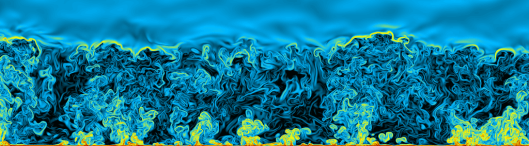

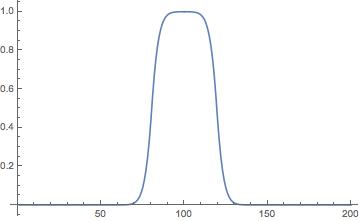

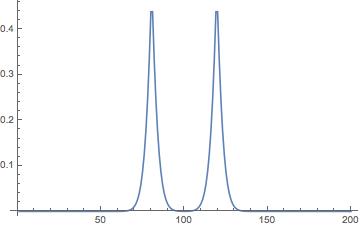

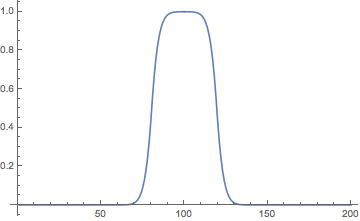

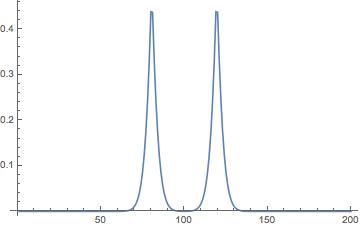

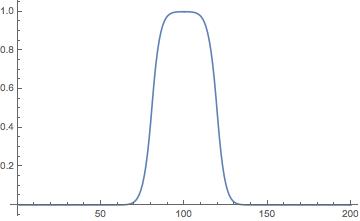

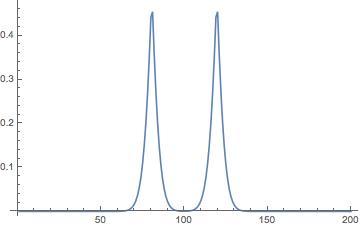

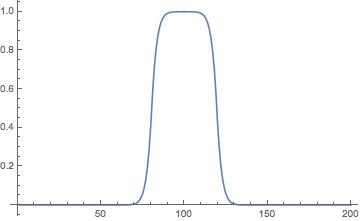

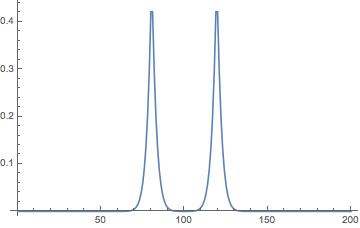

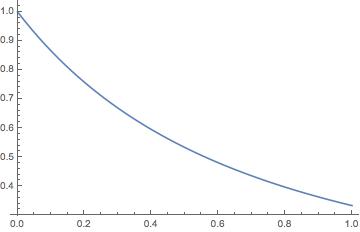

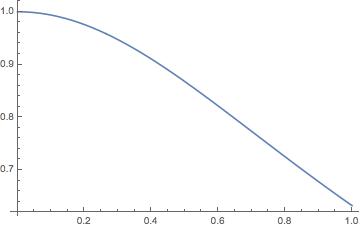

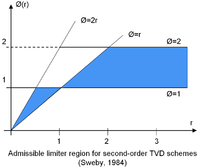

…payoff is far more extreme than these simple arguments. The archetype of this extreme payoff is the difference between first- and second-order monotone schemes. For general fluid flows, second-order monotone schemes produce results that are almost infinitely more accurate than first-order ones. The reason for this stunning claim is that the acute differences in the results come from the form of the truncation error, expressed via the modified equations (the equations solved more accurately by the numerical methods). For first-order methods there is a large viscous effect that makes all flows laminar. Second-order methods are necessary for simulating high Reynolds number turbulent flows because their dissipation doesn’t interfere directly with the fundamental physics.
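To make the modified-equation argument concrete, here is a sketch for the simplest case I can think of. It assumes the linear advection equation $u_t + a u_x = 0$ with $a > 0$ and first-order upwind differencing, which is my choice of illustration rather than a scheme singled out in the text. With mesh spacing $\Delta x$, time step $\Delta t$ and CFL number $C = a\,\Delta t/\Delta x$, a Taylor expansion of the scheme shows the equation it approximates to second order:

$$
u_t + a\,u_x = \frac{a\,\Delta x}{2}\,(1 - C)\,u_{xx} + O(\Delta x^2).
$$

The right-hand side acts like a physical viscosity of size $\mu_{\mathrm{num}} = \tfrac{1}{2}\,a\,\Delta x\,(1 - C)$. On a domain of length $L$ with $N = L/\Delta x$ cells, the effective Reynolds number of the computation is then roughly

$$
\mathrm{Re}_{\mathrm{num}} = \frac{a\,L}{\mu_{\mathrm{num}}} = \frac{2N}{1 - C},
$$

which is of the order of the number of cells across the domain, far too small for turbulence on any affordable mesh. A second-order method removes the $O(\Delta x)$ viscous term, so its dissipation shrinks much faster under refinement.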

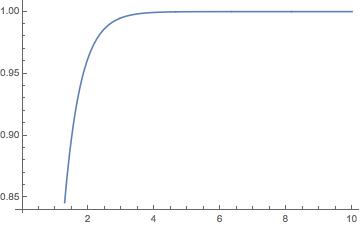

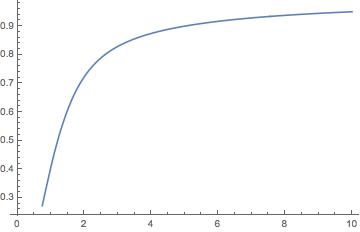

We don’t generally have good tools for numerical error approximation in non-standard (or unresolved) cases. A treatment of one of the key problems is found in Banks, Aslam, Rider, where sub-first-order convergence is described and analyzed for solutions of a discontinuous problem for the one-way wave equation. The key result in this paper is the nature of mesh convergence for discontinuous or non-differentiable solutions. In this case we see sub-linear fractional order convergence. The key result is a general relationship between the convergence rate and the formal order of accuracy for the method.
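As I recall the form commonly quoted from that paper, a method of formal order $p$ converges in the $L_1$ norm at rate $p/(p+1)$ on a linear discontinuity, so a first-order method should show a rate near 1/2; treat that formula as an assumption to check against the paper. The sketch below measures the rate directly for first-order upwind differencing on a square wave advected by the one-way wave equation. The domain, CFL number, final time and function names are all illustrative choices of mine.

```python
import numpy as np


def upwind_l1_error(n_cells, cfl=0.5, t_final=0.5):
    """L1 error of first-order upwind for u_t + u_x = 0 on a periodic unit domain."""
    dx = 1.0 / n_cells
    x = (np.arange(n_cells) + 0.5) * dx  # cell centers
    # Square wave; the jumps sit on cell edges when n_cells is a multiple of 4.
    u0 = np.where((x >= 0.25) & (x < 0.75), 1.0, 0.0)

    n_steps = int(round(t_final / (cfl * dx)))
    u = u0.copy()
    for _ in range(n_steps):
        # np.roll(u, 1)[i] is u[i-1], the upwind neighbor for positive wave speed.
        u = u - cfl * (u - np.roll(u, 1))

    # With unit wave speed the exact solution is the initial data shifted right.
    shift = int(round(t_final / dx))
    exact = np.roll(u0, shift)
    return dx * np.sum(np.abs(u - exact))


def observed_rates(errors):
    """Convergence rate between successive meshes, assuming each doubles the resolution."""
    e = np.asarray(errors)
    return np.log2(e[:-1] / e[1:])


grids = [128, 256, 512, 1024]
errors = [upwind_l1_error(n) for n in grids]
rates = observed_rates(errors)
for n, e in zip(grids, errors):
    print(f"N = {n:5d}   L1 error = {e:.5f}")
print("observed rates:", np.round(rates, 3))
```

The measured rates should come out close to one half rather than one: doubling the mesh buys only about a 30 percent reduction in error, which is the kind of poor return on raw computing power the argument is about.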

The much less well-appreciated aspect comes with the practice of direct numerical simulation (DNS) of turbulence, or really of anything. One might think that having a DNS would mean that the solution is completely resolved and highly accurate. It is not! Indeed, such solutions are not highly convergent even for integral measures. Generally speaking, one gets first-order accuracy or less under mesh refinement. The problem is the highly sensitive nature of the solutions and the scaling of the mesh with the Kolmogorov scale, which is a mean squared measure of the turbulence scale. Clearly there are effects that come from scales much smaller than the Kolmogorov scale, associated with highly intermittent behavior. To fully resolve such flows would require the scale of turbulence to be described by the maximum norm of the velocity gradient instead of the RMS.
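For reference, the standard definitions behind this argument can be written down compactly. The symbols are notation I am introducing here ($\nu$ for kinematic viscosity, $\varepsilon$ for the mean dissipation rate, $S_{ij}$ for the strain-rate tensor, $L$ for the large-scale length, $\mathrm{Re}$ for the Reynolds number), and the final expression is only a dimensional sketch of the max-norm idea, not an established definition.

$$
\eta = \left(\frac{\nu^{3}}{\varepsilon}\right)^{1/4},
\qquad
\varepsilon = 2\,\nu\,\langle S_{ij} S_{ij} \rangle,
\qquad
\frac{L}{\eta} \sim \mathrm{Re}^{3/4}.
$$

Because $\varepsilon$ is an average of squared velocity gradients, $\eta$ is an RMS-type scale, $\eta \sim \left(\nu / |\nabla u|_{\mathrm{rms}}\right)^{1/2}$. Swapping the RMS for the maximum norm gives, up to constants,

$$
\eta_{\infty} \sim \left(\frac{\nu}{\lVert \nabla u \rVert_{\infty}}\right)^{1/2} \lesssim \eta,
$$

so a mesh sized to $\eta$ can still under-resolve the rare, intense events where the local gradient far exceeds its RMS value.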

When we get to the real foundational aspects of numerical error and limitations, we come to the fundamental theorem of numerical analysis. For PDEs it only applies to linear equations and basically states that consistency and stability together are equivalent to convergence. Everything is tied to this. Consistency means you are solving the equations with a valid and correct approximation; stability means getting a result that doesn’t blow up. What is missing is the theoretical application to more general nonlinear equations, along with deeper relationships to accuracy, consistency and stability. This theorem was derived back in the early 1950s and we probably need something more, but there is no effort or emphasis on this today. We need great effort and immensely talented people to progress. While I’m convinced that we have no limit on talent today, we lack effort and perhaps don’t develop or encourage the talent to develop appropriately.
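The theorem referred to here is usually called the Lax equivalence theorem (Lax and Richtmyer). A compact statement, in notation that is mine rather than the post's: write a linear one-step scheme as $u^{n+1} = S_{\Delta t}\,u^{n}$, approximating a well-posed linear initial value problem with exact solution $u(t)$ on $0 \le t \le T$.

$$
\begin{aligned}
\text{consistency:} \quad & \left\lVert \frac{u(t + \Delta t) - S_{\Delta t}\,u(t)}{\Delta t} \right\rVert \to 0 \quad \text{as } \Delta t \to 0, \\
\text{stability:} \quad & \lVert S_{\Delta t}^{\,n} \rVert \le K \quad \text{for all } n\,\Delta t \le T, \\
\text{convergence:} \quad & \lVert S_{\Delta t}^{\,n}\,u_{0} - u(n\,\Delta t) \rVert \to 0 \quad \text{as } \Delta t \to 0 .
\end{aligned}
$$

For a consistent scheme, stability holds if and only if convergence holds. Note that the statement says nothing about the rate of convergence, and nothing at all once the problem is nonlinear, which is where most of the interesting applications live.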

When we get to the real foundational aspects of numerical error and limitations, we come to the fundamental theorem of numerical analysis. For PDEs it only applies to linear equations and basically states that consistency and stability together are equivalent to convergence. Everything is tied to this. Consistency means you are solving the equations with a valid and correct approximation; stability means getting a result that doesn’t blow up. What is missing is the theoretical application to more general nonlinear equations, along with deeper relationships among accuracy, consistency and stability. This theorem was derived back in the early 1950’s and we probably need something more, but there is no effort or emphasis on this today. We need great effort and immensely talented people to progress. While I’m convinced that we have no limit on talent today, we lack effort and perhaps don’t develop or encourage the talent appropriately.
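The theorem described here is usually called the Lax equivalence theorem. A small illustration, under assumed parameters, of why consistency alone is not enough: both schemes below are consistent discretizations of the linear advection equation u_t + u_x = 0 on a periodic mesh, but only first-order upwind is stable at a CFL number of 0.5. Forward-time, centered-space (FTCS) differencing has an amplification factor with magnitude above one for every nonzero wavenumber, so its solution grows without bound. The function name `max_amplitude`, the mesh size and the step count are hypothetical choices for this sketch.

```python
import math


def max_amplitude(n, steps, cfl, scheme):
    """Advance a sine wave on n periodic cells; return the final max |u|."""
    h = 1.0 / n
    u = [math.sin(2.0 * math.pi * (i + 0.5) * h) for i in range(n)]
    for _ in range(steps):
        if scheme == "upwind":
            # consistent and stable for 0 <= cfl <= 1
            u = [u[i] - cfl * (u[i] - u[i - 1]) for i in range(n)]
        elif scheme == "ftcs":
            # consistent but unstable for any cfl > 0
            u = [u[i] - 0.5 * cfl * (u[(i + 1) % n] - u[i - 1])
                 for i in range(n)]
        else:
            raise ValueError("unknown scheme: " + scheme)
    return max(abs(v) for v in u)


if __name__ == "__main__":
    for name in ("upwind", "ftcs"):
        print(name, max_amplitude(50, 2000, 0.5, name))
```

The upwind amplitude never exceeds its initial value of one, while the FTCS amplitude grows by orders of magnitude: a consistent scheme that fails to converge because it is not stable.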

Beyond the issues with hardware emphasis, today’s focus on software is almost equally harmful to progress. Our programs are working steadfastly on maintaining large volumes of source code full of the ideas of the past. Instead of building on the theory, methods, algorithms and ideas of the past, we are simply worshiping them. This is the construction of a false ideology. We would pay far greater homage to the work of the past if we were building on that work. The theory is not done by a long shot. Our current attitudes toward high performance computing are a travesty, embodied in a national program that makes the situation worse only to serve the interests of the willfully naive. We are undermining the very foundation upon which the utility of computing is built. We are going to end up wasting a lot of money and getting very little value for it.

On Saturday I participated in the March for Science in downtown Albuquerque along with many other marches across the World. This was advertised as a non-partisan event, but to anyone there it was clearly and completely partisan and biased. Two things united the people at the march: a philosophy of progressivism and liberalism, and opposition to conservatism and Donald Trump. The election of a wealthy paragon of vulgarity and ignorance has done wonders for uniting the left wing of politics. Of course, the left wing in the United States is really a moderate wing, made to seem liberal by the extreme views of the right. The left wing is among the greater proponents of science as an engine of knowledge and progress. The reason for this dichotomy is the right wing’s embrace of ignorance, fear and bigotry as its electoral tools. The right is really the party of money and the rich, with fear, bigotry and ignorance wielded as tools to “inspire” enough of the people to vote against their best (long-term) interests. Part of this embrace is a logical opposition to virtually every principle science holds dear.

The premise that a march for science should be non-partisan is utterly wrong on the face of it; science is and has always been a completely political thing. The reasoning for this is simple and persuasive. Politics is the way human beings settle their affairs, assign priorities and make decisions. Politics is an essential human endeavor. Science is equally human in its composition, being a structured vehicle for societal curiosity leading to the creation of understanding and knowledge. When the political dynamic is arrayed in the manner we see today, science is absolutely and utterly political. We have two opposing views of the future, one consistent with science, favoring knowledge and progress, and the other inconsistent with science, favoring fear and ignorance. In such an environment science is completely partisan and political. To expect things to be different is foolish and naïve.

One of the key things to understand is that science has always been a political thing, although the contrast has been turned up in recent years. The thing driving the political context is the rightward movement of the Republican Party, which has led to its embrace of extreme views including religiosity, ignorance and bigotry. Of course, these extreme views are not really the core of the GOP’s soul, money is, but the cult of ignorance and anti-science is useful in propelling its political interests. The Republican Party has embraced extremism in a virulent form because it pushes its supporters to unthinking devotion and obedience. They will support their party without regard for their own best interests. The Republican voter base hurts its own economic standing in favor of policies that empower its hatreds and bigotry while calming its fear. All forms of fact and truth have become utterly unimportant unless they support their world-view. The upshot is the rule of a political class hell-bent on establishing a ruling class in the United States composed of the wealthy. Most of the people voting for the Republican candidates are simply duped by their support of extreme fear, hate and bigotry. The Democratic Party is only marginally better since they have been seduced by the same money, leaving voters with no one to work for them. The rejection of science by the right will ultimately be the undoing of the Nation as other nations will eventually usurp the United States militarily and economically.

values of progress, love and knowledge are viewed as weakness. In this lens it is no wonder that science is rejected.

…id this problem is to kill the knowledge before it is produced. We can find example after example of science being silenced because it is likely to produce results that do not match their view of the world. Among the key engines of the ignorance of conservatism is its alliance with extreme religious views. Historically religion and science are frequently at odds because faith and truth are often incompatible. This isn’t necessarily all religious faith, but rather that stemming from a fundamentalist approach, which is usually grounded in old and antiquated notions (i.e., classically conservative and opposing anything looking like progress). Fervent religious belief cannot deal with truths that do not align with its dictums. The best way to avoid this problem is to get rid of the truth. When the government is controlled by extremists this translates to reducing and controlling…

…not support deeper research that forms the foundation allowing us to develop technology. As a result our ability to be the best at killing people is at risk in the long run. Eventually the foundation of science used to create all our weapons will run out, and we will no longer be the top dogs. The basic research used for weapons work today is largely a relic of the 1960’s and 1970’s. The wholesale diminishment in societal support for research during the 1980’s and onward will start to hurt us more obviously. In addition, we have poisoned the research environment in a fairly bipartisan way, leading to a huge drop in the effectiveness and efficiency of the fewer research dollars spent.

…they hate so much. The right wing has been engaged in an all-out assault on universities, in part because they view them as the center of left-wing views. Attacking and contracting science is part of this assault. In addition to a systematic attack on universities, there is an increasing categorization of certain research as unlawful because its results will almost certainly oppose right-wing views. Examples of this include drug research (marijuana in particular), anything sexual, climate research, health effects of firearms, evolution, and the list grows. The deepest wounds to science are more subtle. They have created an environment that poisons intellectual approaches and undermines the education of the population because educated intellectual people naturally oppose their ideas.

…upset the status quo, hurting the ability of traditional industry to make money (with the exception of geological work associated with energy and mining). Much of the ecological research has informed us how human activity is damaging the environment. Industry does not want to adopt practices that preserve the environment, primarily due to greed. Moreover, religious extremism opposes ecological research because it contradicts the dictums of their faith as chosen people who may exploit the Earth to the limits of their desire. Climate change is the single greatest research threat to the conservative worldview. The fact that mankind is a threat to the Earth is blasphemous to the right-wing extremists, impacting either their greed or their religious conviction. The denial of climate change is based primarily on religious faith and greed, the pillars of modern right-wing extremism.

…enable the implicit bigotry in how laws are enforced and people are imprisoned, ignoring the damage to society at large.

…punishment. This works to destroy people’s potential for economic advancement and burden the World with poor, unwanted children. Sex education for children is another example where ignorance is promoted as the societal response. Science could make the World far better and more prosperous, and the right wing stands in the way. It has everything to do with sex and nothing to do with reproduction.

…ach even though it is utterly and completely ineffective and actually damages people. Those children are then spared the knowledge of their sexuality and their reproductive rights through an educational system that suppresses information. Rather than teach our children about sex in a way that honors their intelligence and arms them to deal with life, we send them out ignorant. This ignorance is yet another denial of reality and science by the right. The right wing does not want to seem like they are endorsing basic human instinct around reproduction and sex for pleasure. The result is to create more problems, more STDs, more unwanted children, and more abortions. It gets worse because we also assure that a new generation of people will reach sexual maturity without the knowledge that could make their lives better. We know how to teach people to take charge of their reproductive decisions, sexual health and pleasure. Our government denies them this knowledge, largely driven by antiquated moral and religious ideals, which only serve to give the right voters.

For a lot of people working at a National Lab there are two divergent paths for work: the research path that leads to lots of publishing, deep technical work and strong external connection, or the mission path that leads to internal focus and technical shallowness. The research path is for the more talented and intellectual people who can compete in this difficult world. For the less talented, creative or intelligent people, the mission world offers greater security at the price of intellectual impoverishment. Those who fail at the research focus can fall back onto the mission work and be employed comfortably after such failure. This perspective is a cynical truth for those who work at the Labs and represents a false dichotomy. If properly harnessed, the mission focus can empower and energize better research, but it must be mindfully approached.

…much better for engaging innovation and producing breakthrough results. It also means a great amount of risk and lots of failure. Pure research can chase unique results, but the utility of those results is often highly suspect. This sort of research entails less risk and less failure as well. If the results are necessarily impactful on the mission, the utility is obvious. The difficulty is noting the broader aspects of research applicability that mission application might hide.

Ubiquitous aspects of modernity such as the Internet, cell phones and GPS all owe their existence to Cold War research focused on some completely different mission. All of these technologies were created through steadfast focus on utility that drove innovation as a mode of problem solving. This model for creating value has fallen into disrepair due to its uncertainty and risk. Risk is something we have lost the capacity to withstand; as a result, the failure necessary to learn and succeed with research never happens.

…and the mission will suffer as a result. This is both shortsighted and foolhardy. The truth is vastly different from this fear-based reaction, and the only thing that suffers from shying away from research in mission-based work is the quality of the mission-based work. Doing research causes people to work with deep knowledge and understanding of their area of endeavor. Research is basically the process of learning taken to the extreme of discovery. In the process of getting to discovery one becomes an expert in what is known and capable of doing exceptional work. Today too much mission-focused work is technically shallow and risk averse. It is over-managed and under-led in the pursuit of the false belief that risk and failure are bad things.

In my own experience the drive to connect mission and research can provide powerful incentives for personal enrichment. For much of my early career the topic of turbulence was utterly terrifying, and I avoided it like the plague. It seemed like a deep, complex and ultimately unsolvable problem that I was afraid of. As I began to become deeply engaged with a mission organization at Los Alamos it became clear to me that I had to understand it. Turbulence is ubiquitous in highly energetic systems governed by the equations of fluid dynamics. The modeling of turbulence is almost always done using dissipative techniques, which end up destroying most of the fidelity in numerical methods used to compute the underlying, ostensibly non-turbulent flow. These high-fidelity numerical methods were my focus at the time. Of course these energy-rich flows are naturally turbulent. I came to the conclusion that I had to tackle understanding turbulence.

…ty to work on my computer codes over the break (those were the days!). So I went back to my office (those were the days!) and grabbed seven books on turbulence that had been languishing on my bookshelves unread due to my overwhelming fear of the topic. I started to read these books cover to cover, one by one, and learn about turbulence. I’ve included some of these references below for your edification. The best and most eye-opening was Uriel Frisch’s “Turbulence: the Legacy of A. N. Kolmogorov”. In the end, the mist began to clear and turbulence began to lose its fearful nature. Like most things one fears, the lack of knowledge of a thing gives it power, and turbulence was no different. Turbulence is actually kind of a sad thing; it’s not understood and very little progress is being made.

The main point is that the mission focus energized me to attack the topic despite my fear of it. The result was a deeply rewarding and successful research path resulting in many highly cited papers and a book. All of a sudden the topic that had terrified me was understood and I could actually conduct research in it. All of this happened because I took contributing work to the mission as an imperative. I did not have the option of turning my back on the topic because of my discomfort over it. I also learned a valuable lesson about fearsome technical topics: most of them are fearsome because we don’t know what we are doing and over-elaborate the theory. Today the best things we know about turbulence are simple and old, discovered by Kolmogorov as he evaded the Nazis in 1941.

…results in software. Producing software in the conduct of applied mathematics used to be a necessary side activity instead of the core of value and work. Today software is the main thing produced and actual mathematics is often virtually absent. Actual mathematical research is difficult, failure-prone and hard to measure. Software on the other hand is tangible and managed. It is still hard to do, but ultimately software is only as valuable as what it contains, and increasingly our software is full of someone else’s old ideas. We are collectively stewarding other people’s old intellectual content, and not producing our own, nor progressing in our knowledge.

…of the problem rather than the problem to the research. The result of this model is the tendency to confront difficult, thorny issues rather than shirk them. At the same time this form of research can also lead to failure and risk manifesting itself. This tendency is the rub, and leads to people shying away from it. We are societally incapable of supporting failure as a viable outcome. The result is the utter and complete inability to do anything hard. This all stems from a false sense of the connection between risk, failure and achievement.

Research is about learning at a fundamental, deep level, and learning is powered by failure. Without failure you cannot effectively learn, and without learning you cannot do research. Failure is one of the core attributes of risk. Without the risk of failing there is a certainty of achieving less. This lower achievement has become the socially acceptable norm for work. Acting in a risky way is a sure path to being punished, and we are being conditioned to not risk and not fail. For this reason mission-focused research is shunned. The sort of conditions that mission-focused research produces are no longer acceptable, and our effective social contract with the rest of society has destroyed it.

Research is about learning at a fundamental, deep level, and learning is powered by failure. Without failure you cannot effectively learn, and without learning you cannot do research. Failure is one of the core attributes of risk. Without the risk of failing there is a certainty of achieving less. This lower achievement has become the socially acceptable norm for work. Acting in a risky way is a sure path to being punished, and we are being conditioned to not risk and not fail. For this reason mission-focused research is shunned. The sort of conditions that mission-focused research produces are no longer acceptable, and our effective social contract with the rest of society has destroyed it.

tics and deep physical principle daily. All of these things are very complex and require immense amounts of training, experience and effort. For most people the things I do, think about, or work on are difficult to understand or put into context. None of this is the hardest thing I do every day. The thing that we trip up on, and fail at more than anything, is simple: communication. Scientists fail to communicate effectively with each other in a myriad of ways, leading to huge problems in marshaling our collective efforts. Given that we can barely communicate with each other, the prospect of communicating with the public becomes almost impossible.

t they give people the impression that communication has taken place when it hasn’t. The meeting doesn’t provide effective broadcast of information and it’s even worse as a medium for listening. Our current management culture seems to have gotten the idea that a meeting is sufficient to do the communication job. Meetings seem efficient in the sense that everyone is there and words are spoken, and even time for questions is granted. With the meeting, the managers go through the motions. The problems with this approach are vast and boundless. The first issue is the general sense that the messaging is targeted for a large audience and lacks the texture that individuals require. The message isn’t targeted to people’s acute and individual interests. Conversations don’t happen naturally, and people’s questions are usually equally limited in scope. To make matters worse, the managers think they have done their communication job.

nt assumes the exchange of information was successful. A lot of the necessary vehicles for communication are overlooked or discounted in the process. Managers avoid the one-on-one conversations needed to establish deep personal connections and understanding. We have filled managers’ schedules with lots of activities involving other managers and paperwork, but not prioritized and valued the task of communication. We have strongly tended to try to make it efficient, and not held it in the esteem it deserves. Many hold office hours where people can talk to them rather than the more effective habit of seeking people out. All of these mechanisms give the advantage to the extroverts among us, and fail to engage the quiet introverted souls or the hardened cynics whose views and efforts have equal value and validity. All of this gets to a core message that communication is pervasive and difficult. We have many means of communicating and all of them should be utilized. We also need to assure and verify that communication has taken place and goes both ways.

ways of delivering information in a professional setting. It forms a core of opportunity for peer review in a setting that allows for free exchange. Conferences are an orgy of this and should form a backbone of information exchange. Instead, conferences have become a bone of contention. People are assumed to only have a role there as speakers, and not as part of the audience. Again the role of listening as an important aspect of communication is completely disregarded in the dynamic. The digestion of information and learning or providing peer feedback provide none of the justification for going to conferences, yet these all provide invaluable conduits for communication in the technical world.

communication, which varies depending on people’s innate taste for clarity and focus. We have issues with transparency of communication even with automatic and pervasive use of all the latest technological innovations. These days we have e-mail, instant messaging, blogging, Internet content, various applications (Twitter, Snapchat,…), social media and other vehicles for information transfer between people. The key to making the technology work to enable better performance still comes down to people’s willingness to pass along ideas within the vehicles available. This problem is persistent whether communications are on Twitter or in person. Again the asymmetry between broadcasting and receiving is amplified by the technology. I am personally guilty of the sin that I’m pointing out: we never prize listening as a key aspect of communicating. If no one listens, it doesn’t matter who is talking.

usually associated with vested interests, or solving the problems might involve some sort of trade space where some people win or lose. Most of the time we can avoid the conflict for a bit longer by ignoring it. Facing the problems means entering into conflict, and conflict terrifies people (this is where ghosting and breadcrumbing come in quite often). The avoidance is usually illusory; eventually the situation will devolve to the point where the problems can no longer be ignored. Usually the situation is much worse by then, and the solution is much more painful. We need to embrace means of facing up to problems sooner rather than later, and seek solutions when problems are small and well confined.

general public is almost impossible to manage. In many ways the modern world acts to amplify the issues that the technical world has with communication to an almost unbearable level. Perhaps excellence in communication is too much to ask, but the inability to talk and listen effectively with the public is hurting science. If science is hurt then society also suffers from the lack of progress and knowledge advancement science provides. When science fails, everyone suffers. Ultimately we need to have understanding and empathy across our societal divides, whether it is scientists and lay people or red and blue. Our failure to focus on effective, deep two-way communication is limiting our ability to succeed at almost everything.

a very grim evolution of the narrative. Two things have happened to undermine the maturity of V&V. One I’ve spoken about in the past: the tendency to drop verification and focus solely on validation, which is bad enough. In the absence of verification, validation starts to become rather strained and drift toward calibration. Assurances that one is properly solving the model they are claiming to be solving are unsupported by evidence. This is bad enough all by itself. The use of V&V as a vehicle for improving modeling and simulation credibility is threatened by this alone, but something worse looms even larger.

al cycles thus eliciting lots of love and support in HPC circles. Validation must be about experiments and a broad cross section of uncertainties that may only be examined through a devotion to multi-disciplinary work and collaboration. One must always remember that validation can never be separated from measurements in the real world whether experimental or observational. The experiment-simulation connection in validation is primal and non-negotiable.
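Validation depends on real measurements and cannot be reduced to a few lines of code. Verification, the step that tends to get dropped, can be shown in miniature. What follows is only a sketch under stated assumptions, not a description of any particular code or project: the test problem (dy/dt = -y), the forward Euler integrator, and the names `euler_solve` and `observed_order` are all hypothetical choices made for illustration. The idea is to refine the step size, compare against a known exact solution, and check that the error shrinks at the rate the method's theory predicts (first order for forward Euler).

```python
import math


def euler_solve(rate, y0, t_end, steps):
    """Integrate dy/dt = -rate * y with forward Euler and return y(t_end)."""
    dt = t_end / steps
    y = y0
    for _ in range(steps):
        y += dt * (-rate * y)
    return y


def observed_order(errors, refinement=2.0):
    """Estimate the convergence rate from errors on successively refined grids."""
    return [
        math.log(coarse / fine) / math.log(refinement)
        for coarse, fine in zip(errors, errors[1:])
    ]


# Illustrative test problem (an assumption): dy/dt = -y, y(0) = 1, evaluated at t = 1.
exact = math.exp(-1.0)
step_counts = [50, 100, 200, 400]  # each run halves the step size
errors = [abs(euler_solve(1.0, 1.0, 1.0, n) - exact) for n in step_counts]
orders = observed_order(errors)

for n, err in zip(step_counts, errors):
    print(f"steps={n:4d}  error={err:.3e}")
print("observed orders:", [round(p, 3) for p in orders])
```

If the observed orders sit near the theoretical value, there is evidence that the code is solving the model it claims to solve. If they do not, agreement with experimental data would say little, which is how validation without verification drifts toward calibration.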