As long as you’re moving, it’s easier to steer.

― Anonymous

Just to be clear, this isn’t a good thing; it is a very bad thing!

I have noticed that we tend to accept a phenomenally common and undeniably unfortunate practice: a failure to assess uncertainty means that the uncertainty reported (acknowledged, accepted) is identically ZERO. In other words, if we do nothing at all, no work, no judgment, the work (modeling, simulation, experiment, test) is allowed to provide an uncertainty that is ZERO. This encourages scientists and engineers to continue to do nothing, because this wildly optimistic assessment is a seeming benefit. If somebody does the work to estimate the uncertainty, the reported uncertainty always gets larger as a result. This practice is incredibly common and desperately harmful to the conduct and progress of science.

Of course this isn’t the reality. The uncertainty actually has some value, but the lack of an assessed uncertainty is allowed to stand as ZERO. The problem is the failure of other scientists and engineers to demand an assessment instead of simply accepting the lack of due diligence, or of outright curiosity and common sense. In reality, where the lack of knowledge is this dramatic, the estimated uncertainty should be much larger to account for it. Instead we create a cynical cycle where more information is greeted by more uncertainty rather than less. The only way to create a virtuous cycle is to acknowledge that little information should mean large uncertainties, and that part of the reward for good work is greater certainty (and lower uncertainty).

This entire post is related to a rather simple observation that has broad applications for how science and engineering are practiced today. A great deal of work has this zero uncertainty writ large, i.e., there is no reported uncertainty at all, none, ZERO. Yet, despite the demonstrable and manifest shortcomings, a gullible or lazy community readily accepts the incomplete work. Some of the better work has uncertainties associated with it, but almost always with varying degrees of incompleteness. Of course one should acknowledge up front that uncertainty estimation is always incomplete, but the degree of incompleteness can be spellbindingly large.

One way to deal with all of this uncertainty is to introduce a taxonomy of uncertainty where we can start to organize our lack of knowledge. For modeling and simulation exercises I’m suggesting that three big bins for uncertainty be used: numerical, epistemic modeling, and modeling discrepancy. Each of these categories has additional subcategories that may be used to organize the work toward a better and more complete technical assessment. In the definition for each category we get the idea of the texture in each, and an explicit view of intrinsic incompleteness.

- Numerical: Discretization (time, space, distribution), nonlinear approximation, linear convergence, mesh, geometry, parallel computation, roundoff,…

- Epistemic Modeling: black box parametric, Bayesian, white box testing, evidence theory, polynomial chaos, boundary conditions, initial conditions, statistical,…

- Modeling discrepancy: Data uncertainty, model form, mean uncertainty, systematic bias, boundary conditions, initial conditions, measurement, statistical, …

A very specific thing to note is that the ability to assess any of these uncertainties is always incomplete and inadequate. Admitting this, and giving it some deference, is extremely important in getting to a better state of affairs. A general principle to strive for in uncertainty estimation is that greater effort should yield smaller uncertainties. One way to achieve this is to penalize the uncertainty estimate to account for incomplete information. Statistical methods already account for sampling in this way: the standard error of a mean scales inversely with the square root of the number of samples, so a small sample carries a large standard error. As such there is an explicit benefit to gathering more data to reduce the uncertainty. This sort of measure is well suited to encourage a virtuous cycle of information collection. Instead, modeling and simulation accepts a poisonous cycle where more information implicitly penalizes the effort by increasing uncertainty.
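The statistical behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the "measurement" is a made-up Gaussian with true value 10.0 and unit noise, and `standard_error` is a hypothetical helper name. The point is that the reported uncertainty shrinks as more samples are gathered, and that the helper refuses to report anything when there is too little data rather than silently returning zero.

```python
import math
import random
import statistics


def standard_error(samples):
    """Standard error of the mean: sample standard deviation / sqrt(n)."""
    n = len(samples)
    if n < 2:
        # With fewer than two samples there is no basis for an estimate;
        # refusing is more honest than reporting an uncertainty of zero.
        raise ValueError("need at least two samples to estimate a standard error")
    return statistics.stdev(samples) / math.sqrt(n)


rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Hypothetical measurement: true value 10.0 with unit Gaussian noise (an assumption).
for n in (4, 16, 64, 256):
    samples = [rng.gauss(10.0, 1.0) for _ in range(n)]
    print(f"n={n:4d}  mean={statistics.fmean(samples):.3f}  "
          f"standard error={standard_error(samples):.3f}")
```

More effort (more samples) is rewarded with a smaller reported uncertainty, which is exactly the incentive the paragraph above argues modeling and simulation currently lacks.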

This whole post is predicated on the observation that we willingly enter into a system where effort increases the uncertainty. The direct opposite should be the objective: more effort should result in smaller uncertainty. We also need to recognize that the universe has an irreducible core of uncertainty. Admitting that perfect knowledge and prediction are impossible will allow us to focus more acutely on what we can predict. This is really a situation where we are willfully ignorant and over-confident about our knowledge. One might trace some of the general issues with the reproducibility and replicability of science to the same phenomenon. Any effort that purports to provide a perfect set of data perfectly predicting reality should be rejected as utterly ridiculous.

One of the next things to bring to the table is the application of expert knowledge and judgment to fill in where stronger technical work is missing. Today expert judgment is implicitly present in the lack of assessment. It is a dangerous situation where experts simply assert that things are true or certain. Instead of this expert system being directly identified, it is embedded in the results. A much better state of affairs is to ask for the uncertainty and the evidence for its value. If there has been work to assess the uncertainty, this can be provided. If instead the uncertainty is based on some sort of expert judgment or previous experience, the evidence can be provided in this form.

Now let us be more concrete about what this sort of evidence might look like within the expressed taxonomy for uncertainty. I’ll start with numerical uncertainty, which is the uncertainty most commonly left completely unassessed. Far too often a single calculation is simply shown and used without any discussion. In slightly better cases, the calculation will be given with some comments on the sensitivity of the results to the mesh and the statement that numerical errors are negligible at the mesh given. Don’t buy it! This is usually complete bullshit! In every case where no quantitative uncertainty is explicitly provided, you should be suspicious. In other cases, unless the reasoning is stated as being expertise or experience, it should be questioned. If it is stated as being experiential, then the basis for this experience and its documentation should be given explicitly, along with evidence that it is directly relevant.

So what does a better assessment look like?

Under ideal circumstances you would use a model for the error (uncertainty) and do enough computational work to determine the model. The model or models would characterize all of the numerical effects influencing results. Most commonly, the discretization error is assumed to be the dominant numerical uncertainty (again evidence should be given). If the error can be defined as being dependent on a single spatial length scale, the standard error model can be used and requires three meshes be used to determine its coefficients. This best practice is remarkably uncommon in practice. If fewer meshes are used, the model is under-determined and information in terms of expert judgment should be added. I have worked on the case of only two meshes being used, but it is clear what to do in that case.
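As a minimal sketch of the three-mesh procedure, assume the single-length-scale error model takes the common power-law form f(h) = f_exact + C·h^p, with the three meshes related by a constant refinement ratio. The function name `fit_error_model`, its arguments, and the manufactured data are all illustrative assumptions, not a prescribed implementation. Three results determine the three unknowns (the extrapolated value, the coefficient C, and the observed order p).

```python
import math


def fit_error_model(f_fine, f_medium, f_coarse, h_fine, ratio):
    """Fit f(h) = f_exact + C * h**p to results on three meshes.

    Mesh spacings are h_fine, ratio*h_fine and ratio**2*h_fine, with ratio > 1.
    Returns (f_exact, C, p).
    """
    if ratio <= 1.0:
        raise ValueError("refinement ratio must be greater than 1")
    d_fine = f_medium - f_fine
    d_coarse = f_coarse - f_medium
    # The model only applies if successive differences shrink monotonically.
    if d_fine == 0.0 or d_coarse / d_fine <= 1.0:
        raise ValueError("results are not converging monotonically; "
                         "the single-scale error model does not apply")
    p = math.log(d_coarse / d_fine) / math.log(ratio)   # observed order
    f_exact = f_fine - d_fine / (ratio**p - 1.0)        # extrapolated value
    c = (f_fine - f_exact) / h_fine**p                  # error coefficient
    return f_exact, c, p


# Manufactured data (an assumption): f(h) = 1.0 + 0.5 * h**2 on h = 0.1, 0.2, 0.4.
h = 0.1
f1, f2, f3 = (1.0 + 0.5 * (h * s) ** 2 for s in (1.0, 2.0, 4.0))
f_exact, c, p = fit_error_model(f1, f2, f3, h_fine=h, ratio=2.0)
print(f"observed order p = {p:.3f}")
print(f"extrapolated value = {f_exact:.6f}")
print(f"fine-mesh error estimate = {abs(f1 - f_exact):.6f}")
```

With only two meshes the same system has one unknown too many, which is where an assumed order p (expert judgment, with stated evidence) would have to be supplied.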

In many cases there is no second mesh to provide any basis for standard numerical error estimation. Far too many calculational efforts provide a single calculation without any idea of the requisite uncertainties. In a nutshell, the philosophy in many cases is that the goal is to complete the best single calculation possible, and creating a calculation that is capable of being assessed is not a priority. In other words, the value proposition for computation is a choice between the best single calculation without any idea of the uncertainty and a lower quality simulation with a well-defined assessment of uncertainty. Today the best single calculation is the default approach. This best single calculation then uses the default uncertainty estimate of exactly ZERO because nothing else is done. We need to adopt an attitude that will reject this approach because of the dangers associated with accepting a calculation without any quality assessment.

In the absence of data and direct work to support a strong technical assessment of uncertainty, we have no choice except to provide evidence via expert judgment and experience. A significant advance would be a general expectation that such assessments be made and that the default ZERO uncertainty is never accepted. For example, there are situations where single experiments are conducted without any knowledge of how the results of the experiment fit within any distribution of results. The standard approach to modeling is a desire to exactly replicate the results, as if the experiment were a well-posed initial value problem instead of one realization of a distribution of results. We end up chasing our tails in the process and inhibiting progress. Again we are left in the same boat as before: the default uncertainty in the experimental data is ZERO. We make no serious attempt to examine the width and nature of the distribution in our assessments. The result is a lack of focus on the true nature of our problems and inhibitions on progress.

The problems just continue in the assessment of various uncertainty sources. In many cases the practice of uncertainty estimation is viewed only as establishing the degree of uncertainty in the modeling parameters used in various closure models. This is often termed epistemic uncertainty, or lack of knowledge. It sometimes provides the only identified uncertainty in a calculation because tools exist for creating this data from calculations (often using a Monte Carlo sampling approach). In other words, the parametric uncertainty is often presented as being all the uncertainty! Such studies are rarely complete and always fail to include the full spectrum of parameters in modeling. They are also intrinsically limited by being embedded in a code that has other unchallenged assumptions.
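To make the limitation concrete, here is a hedged sketch of what a Monte Carlo parametric study typically amounts to. Everything in it is hypothetical: `closure_model` stands in for a full simulation, and the two parameter distributions are assumed for illustration. Note what the resulting spread does and does not contain: it reflects only the two sampled parameters, and says nothing about numerical error or the form of the model itself.

```python
import random
import statistics


def closure_model(k, c):
    """Hypothetical stand-in for a simulation output that depends on two closure parameters."""
    return k * k + 3.0 * c


def propagate(n_samples, seed=0):
    """Sample the assumed parameter distributions and return (mean, std) of the output."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_samples):
        k = rng.gauss(2.0, 0.1)      # assumed distribution for parameter k
        c = rng.uniform(0.9, 1.1)    # assumed range for parameter c
        outputs.append(closure_model(k, c))
    return statistics.fmean(outputs), statistics.stdev(outputs)


mean, spread = propagate(2000)
print(f"output mean = {mean:.3f}, parametric spread (1 sigma) = {spread:.3f}")
```

Reporting `spread` as the total uncertainty would implicitly assign ZERO to every source that was not sampled.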

This is a virtue, but it ignores broader modeling issues almost completely. For example, the basic equations and model used in simulations are rarely, if ever, questioned. The governing equations minus the closure are assumed to be correct a priori. This is an extremely dangerous situation because these equations are not handed down from the creator on stone tablets, but are full of assumptions that should be challenged and validated with regularity. Instead this happens very rarely, despite model form being the dominant source of error in some cases. When that is true, creating a predictive simulation is impossible. Take the application of the incompressible flow equations, which is rarely questioned. These equations contain a number of stark approximations that are taken as the truth almost without thought. The various unphysical aspects of the approximation are ignored. For compressible flow the equations are based on equilibrium assumptions, which are rarely challenged or studied.

A second area of systematic and egregious oversight by the community is aleatory or random uncertainty. This sort of uncertainty is overlooked by our modeling approach in a way that most people fail to appreciate. Our models and governing equations are oriented toward solving for the average or mean solution to a given engineering or science problem. This key point is usually muddled in modeling by adopting an approach that mixes a specific experimental event with a model focused on the average. The result is a model with an unclear separation of the general and the specific. Few experiments or events being simulated are viewed as simply a single instantiation of a distribution of possible outcomes. The distribution of possible outcomes is generally completely unknown and not even considered. This leads to an important source of systematic uncertainty that is completely ignored.

It doesn’t matter how beautiful your theory is … If it doesn’t agree with experiment, it’s wrong.

― Richard Feynman

Almost every validation exercise tries to examine the experiment as a well-posed initial value problem with a single correct answer instead of a single possible realization from an unknown distribution. More and more, the nature of the distribution is the core of the scientific or engineering question we want to answer, yet our modeling approach is hopelessly stuck in the past because we are not thoughtfully framing the question we are answering. Often the key question we need to answer is how likely a certain bad outcome will be. We want to know the likelihood of extreme events given a set of changes in a system. Think about things like what a hundred-year flood looks like under a scenario of climate change, or the likelihood that a mechanical part might fail under normal usage. Instead our fundamental models, which describe the average response of the system, are left to infer these extreme events from the average, often without any knowledge of the underlying distributions. This implies a need to change the fundamental approach we take to modeling, but we won’t until we start to ask the right questions and characterize the right uncertainties.
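A small illustration of why the average alone cannot answer the extreme-event question: the two distributions below share the same mean but have very different chances of exceeding a threshold. The Gaussian shape, the numbers, and the name `exceedance_probability` are assumptions chosen purely for illustration; real extremes are often heavier-tailed than this.

```python
from statistics import NormalDist


def exceedance_probability(dist, threshold):
    """Probability that an outcome drawn from dist exceeds threshold."""
    return 1.0 - dist.cdf(threshold)


# Two hypothetical outcome distributions with identical means (assumed values).
narrow = NormalDist(mu=100.0, sigma=5.0)
wide = NormalDist(mu=100.0, sigma=15.0)
threshold = 130.0  # the "bad outcome" level, also assumed

for name, dist in (("narrow", narrow), ("wide", wide)):
    print(f"{name}: mean={dist.mean:.1f}  "
          f"P(outcome > {threshold:.0f}) = {exceedance_probability(dist, threshold):.3e}")
```

A model that predicts only the mean returns the same answer for both cases, even though the likelihood of the bad outcome differs by many orders of magnitude.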

One should avoid carrying out an experiment requiring more than 10 per cent accuracy.

― Walther Nernst

The key to progress is to work toward some best practices that avoid these pitfalls. First and foremost, a modeling and simulation activity should never allow itself to report or even imply that key uncertainties are ZERO. If one has lots of data and makes the effort to assess it, then uncertainties can be assigned through strong technical arguments. This is terribly, even embarrassingly, uncommon today. If one does not have the data or calculations to support uncertainty estimation, then significant amounts of expert judgment and strong assumptions are necessary to estimate uncertainties. The key is to make a significant commitment to being honest about what isn’t known and to take a penalty for lack of knowledge and understanding. That penalty should be well grounded in evidence and experience. Making progress in these areas is essential to making modeling and simulation a vehicle worthy of the hype we hear all the time.

Stagnation is self-abdication.

― Ryan Talbot

Modeling and simulation is looked at as one of the great opportunities for industrial, scientific and engineering improvements for society. Right now we are hinging our improvements on a mass of software being moved onto increasingly exotic (and powerful) computers. Increasingly the whole of our effort in modeling and simulation is being reduced to nothing but a software development activity. The holistic and integrating nature of modeling and simulation is being hollowed out and lost to a series of fatal assumptions. One thing computing power cannot change is how we practice our computational efforts. It can only enable better practice in modeling and simulation by making it possible to do more computation. The key to fixing this dynamic is a commitment to understanding the nature and limits of our capability. Today we just assume that our modeling and simulation has mastery and that no such assessment is needed.

The computational capability does nothing to improve experimental science’s necessary value in challenging our theory. Moreover, the whole sequence of necessary activities, such as model development and analysis, method and algorithm development, and experimental science and engineering, is receiving almost no attention today. These activities are absolutely necessary for modeling and simulation success, along with the sort of systematic practices I’ve elaborated on in this post. Without a sea change in the attitude toward how modeling and simulation is practiced and what it depends upon, its promise as a technology will be stillborn and nullified by our collective hubris.

It is high time for those working to progress modeling and simulation to focus energy and effort where it is needed. Today we are avoiding a rational discussion of how to make modeling and simulation successful, and relying on hype to govern our decisions. The goal should not be to assure that high performance computing is healthy, but rather that modeling and simulation (or big data analysis) is healthy. High performance computing is simply a necessary tool for these capabilities, not the soul of either. We need to make sure the soul of modeling and simulation is healthy rather than the corrupted mass of stagnation we have.

You view the world from within a model.

― Nassim Nicholas Taleb

Fluid mechanics at its simplest is something called Stokes flow, basically motion so slow that it is solely governed by viscous forces. This is the asymptotic state where the Reynolds number (the ratio of inertial to viscous forces) is identically zero. It’s a bit oxymoronic, as it is never reached; it’s the equations of motion without any motion, or where the motion can be ignored. In this limit flows preserve their basic symmetries to a very high degree.

The fundamental asymmetry in physics is the arrow of time, and its close association with entropy. The connection between asymmetry and entropy is quite clear and strong for shock waves, where the mathematical theory is well-developed and accepted. The simplest case to examine is Burgers’ equation.
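For reference, Burgers’ equation is commonly written in its viscous form as shown here. The symbols are an assumed notation, not necessarily the one intended above: $u$ is the velocity and $\nu$ the viscosity.

```latex
\frac{\partial u}{\partial t} + u \frac{\partial u}{\partial x} = \nu \frac{\partial^2 u}{\partial x^2}
```

In the limit $\nu \to 0$ smooth solutions steepen into shocks, and the physically admissible weak solution is the one that dissipates energy at the shock, which is where the asymmetry in time enters.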

On the one hand the form seems to be unavoidable dimensionally; on the other it is a profound result that provides the basis of the Clay prize for turbulence. It gets to the core of my belief that, to a very large degree, the understanding of turbulence will elude us as long as we use the intrinsically unphysical incompressible approximation. This may seem controversial, but incompressibility is an approximation to reality, not a fundamental relation. As such its utility is dependent upon the application. It is undeniably useful, but has limits, which are shamelessly exposed by turbulence. Without viscosity the equations governing incompressible flows are pathological in the extreme. Deep mathematical analysis has been unable to find singular solutions of the nature needed to explain turbulence in incompressible flows.

… simulation is to build the next generation of computers. The proposition is so shallow on the face of it as to be utterly laughable. Except no one is laughing; the programs are predicated on it. The whole mentality is damaging because it intrinsically limits our thinking about how to balance the various elements needed for progress. We see a lack of the sort of approach that can lead to progress, with experimental work starved of funding and focus, and without the mathematical modeling effort necessary for utility. Actual applied mathematics has become a veritable endangered species, only rarely seen in the wild.

… structures and models for average behavior. One of the key things to really straighten out is the nature of the question we are asking the model to answer. If the question isn’t clearly articulated, the model will provide deceptive answers that will send scientists and engineers in the wrong direction. Getting this model-to-question dynamic sorted out is far more important to the success of modeling and simulation than any advance in computing power. It is also completely and utterly off the radar of the modern research agenda. I worry that the present focus will do damage to the forces of progress that may take decades to undo.

If there is any place where singularities are dealt with systematically and properly, it is fluid mechanics. Even in fluid mechanics there is a frighteningly large amount of missing territory, most acutely in turbulence. The place where things really work is shock waves, and we have some very bright people to thank for the order. We can calculate an immense range of physical phenomena where shock waves are important while ignoring a tremendous amount of detail. All that matters is for the calculation to provide the appropriate integral content of dissipation from the shock wave, and the calculation is wonderfully stable and physical. It is almost never necessary and almost certainly wasteful to compute the full gory details of a shock wave.

… to progress. I believe the issue is the nature of the governing equations and a need to change this model away from incompressibility, which is a useful but unphysical approximation, not a fundamental physical law. In spite of all the problems, the state of affairs in turbulence is remarkably good compared with solid mechanics.

An example of lower mathematical maturity can be seen in the field of solid mechanics. In solids, the mathematical theory is stunted by comparison to fluids. A clear part of the issue is the approach taken by the fathers of the field, who did not provide a clear path for combined analytical-numerical analysis as fluids had. The result is a numerical background that is cut completely adrift from the analytical structure of the equations. In essence the only option is to fully resolve everything in the governing equations. No structural and systematic explanation exists for the key singularities in materials, which is absolutely vital for computational utility. In a nutshell, the notion of the regularized singularity, so powerful in fluid mechanics, is foreign. This has a dramatically negative impact on the capacity of modeling and simulation to have a maximal impact.

Being thrust into a leadership position at work has been an eye-opening experience, to say the least. It makes crystal clear a whole host of issues that need to be solved. Being a problem-solver at heart, I’m searching for a root cause for all the problems that I see. One can see the symptoms all around: poor understanding, poor coordination, lack of communication, hidden agendas, ineffective vision, and intellectually vacuous goals… I’ve come more and more to the view that all of these things, evident as the day is long, are simply symptomatic of a core problem. The core problem is a lack of trust so broad and deep that it rots everything it touches.

… on the whole of society leeches. They claim to be helping society even while they damage our future to feed their hunger. The deeper psychic wound is the feeling that everyone is so motivated, leading to a broad-based lack of trust in your fellow man.

on the whole of society leeches. They claim to be helping society even while they damage our future to feed their hunger. The deeper psychic wound is the feeling that everyone is so motivated, leading to a broad-based lack of trust in your fellow man.

In the overall execution of work another aspect of the current environment can be characterized as the proprietary attitude. Information hiding and lack of communication seem to be a growing problem even as the capacity for transmitting information grows. Various legal or political concerns seem to outweigh the needs for efficiency, progress and transparency. Today people seem to know much less than they used to instead of more. People are narrower and more tactical in their work rather than broader and strategic. We are encouraged to simply mind our own business rather than seek a broader attitude. The thing that really suffers in all of this is the opportunity to make progress for a better future.

The simplest thing to do is value the truth, value excellence and cease rewarding the sort of trust-busting actions enumerated above. Instead of allowing slipshod work to be reported as excellence we need to make strong value judgments about the quality of work, reward excellence, and punish incompetence. Truth and fact need to be valued above lies and spin. Bad information needs to be identified as such and eradicated without mercy. Many greedy self-interested parties are strongly inclined to seed doubt and push lies and spin. The battle is for the nature of society. Do we want to live in a World of distrust and cynicism or one of truth and faith in one another? The balance today is firmly stuck at distrust and cynicism. The issue of excellence is rather pregnant. Today everyone is an expert, and no one is an expert, with the general notion of expertise being highly suspect. The impact of such a milieu is absolutely damaging to the structure of society and the prospects for progress. We need to seed, reward and nurture excellence across society instead of doubting and demonizing it.

Ultimately we need to conscientiously drive for trust as a virtue in how we approach each other. A big part of trust is the need for truth in our communication. Lying, spinning and bullshit in communication do nothing but undermine trust and empower low-quality work. We need to empower excellence through our actions rather than simply declare things to be excellent by definition and fiat. Failure is a necessary element in achievement and expertise. It must be encouraged. We should promote progress and quality as a necessary outcome across the broadest spectrum of work. Everything discussed above needs to be based in a definitive reality and have an actual basis in facts instead of simply bullshitting about it, or it only having “truthiness”. Not being able to see the evidence of reality in claims of excellence and quality simply amplifies the problems with trust, and risks devolving into a vicious cycle dragging us down instead of a virtuous cycle that lifts us up.

I chose the name the “Regularized Singularity” because it’s so important to the conduct of computational simulations of significance. For real world computations, the nonlinearity of the models dictates that the formation of a singularity is almost a foregone conclusion. To remain well behaved and physical, the singularity must be regularized, which means the singular behavior is moderated into something computable. This is almost always accomplished with the application of a dissipative mechanism and effectively imposes the second law of thermodynamics on the solution.
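To make the idea of a regularized singularity concrete, here is a minimal sketch in Python. It is an illustration under assumed choices, not a method taken from any production code: the model is the viscous Burgers equation $u_t + (u^2/2)_x = \nu u_{xx}$ on a periodic domain, the discretization is a plain forward-Euler, central-difference scheme, and the names `step`, `energy` and `run` along with all parameter values are hypothetical. Without the viscous term the initial sine wave steepens into a discontinuity at $t = 1/(2\pi)$; with it, the profile stays smooth and the quadratic energy decays.

```python
import math


def step(u, dt, dx, nu):
    """One forward-Euler step of u_t + (u^2/2)_x = nu * u_xx on a periodic grid."""
    n = len(u)
    new = [0.0] * n
    for i in range(n):
        left, right = u[i - 1], u[(i + 1) % n]  # u[-1] wraps around: periodic
        # Conservative central difference of the flux u^2/2.
        advection = (right * right - left * left) / (4.0 * dx)
        # Dissipative term that regularizes the would-be singularity.
        diffusion = nu * (right - 2.0 * u[i] + left) / (dx * dx)
        new[i] = u[i] + dt * (diffusion - advection)
    return new


def energy(u, dx):
    """Discrete quadratic energy, the integral of u^2/2 over the domain."""
    return 0.5 * dx * sum(v * v for v in u)


def run(n=100, nu=0.01, dt=1.0e-3, t_end=0.5):
    """Evolve u0 = sin(2*pi*x) well past the inviscid shock time 1/(2*pi)."""
    dx = 1.0 / n
    u = [math.sin(2.0 * math.pi * i * dx) for i in range(n)]
    e0 = energy(u, dx)
    for _ in range(round(t_end / dt)):
        u = step(u, dt, dx, nu)
    # Steepest slope between neighbouring cells; it stays finite.
    steepest = max(abs(u[(i + 1) % n] - u[i]) / dx for i in range(n))
    return u, e0, energy(u, dx), steepest


if __name__ == "__main__":
    u, e0, e1, steepest = run()
    print(f"energy before: {e0:.4f}, after: {e1:.4f}")
    print(f"steepest slope: {steepest:.2f}, max |u|: {max(abs(v) for v in u):.4f}")
```

With these values the cell Reynolds number $|u|\,\Delta x/\nu$ is at most 1, so the physical viscosity alone keeps the central scheme well behaved. Shrinking `nu` without refining the grid breaks that condition, and this simple scheme would then need some added numerical dissipation to stay stable.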

One of the really big ideas to grapple with is the utter futility of using computers to simply crush problems into submission. For most problems of any practical significance this will not be happening, ever. In terms of the physics of the problems, this is often the coward’s way out of the issue. In my view, if nature were going to be submitting to our mastery via computational power, it would have already happened. The next generation of computing won’t be doing the trick either. Progress depends on actually thinking about modeling. A more likely outcome will be the diversion of resources away from the sort of thinking that will allow progress to be made. Most systems do not depend on the intricate details of the problem anyway. The small-scale dynamics are universal and driven by large scales. The trick to modeling these systems is to unveil the essence and core of the large-scale dynamics leading to what we observe.

computed with pervasive instability and making them stable and practically useful. It provides the fundamental foundation for shock capturing and the ability to compute discontinuous flows on grids. In many respects the entire CFD field is grounded upon this method. The notable aspect of the method is the dependence of the dissipation on the product of the coefficient

This isn’t generally considered important for viscosity, but in the context of more complex systems of equations it may be important. One of the reasons for bringing this up is that, generally speaking, linear hyperviscosity will not have this property, but we can build nonlinear hyperviscosity that will preserve it. At some level this probably explains the utility of nonlinear hyperviscosity for shock capturing. In nonlinear hyperviscosity we have immense freedom in designing the viscosity as long as we keep it positive. We then have a positive viscosity multiplying a positive definite operator, and this provides the deep form of stability we want along with a connection that guarantees physically relevant solutions.
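One way to read the remark about a positive viscosity multiplying a positive definite operator is through a standard entropy estimate. The sketch below is an assumption-laden illustration rather than the derivation the text has in mind: it takes a scalar field $u$ on a periodic domain, an arbitrary convex function $\eta(u)$, a nonlinear coefficient $\nu \ge 0$ that may depend on higher derivatives of $u$, and, for contrast, a constant-coefficient fourth-order hyperviscosity with $\kappa > 0$. The symbols $\eta$, $\kappa$, $C$ and the example form of $\nu$ are all introduced here for illustration.

```latex
% Nonlinear hyperviscosity written as a second-order operator with a
% nonnegative, solution-dependent coefficient, e.g. \nu = C\,\Delta x^{3}\,|u_{xx}|:
\partial_t u = \partial_x\!\left(\nu\,\partial_x u\right), \qquad \nu \ge 0 .

% Multiply by \eta'(u), integrate over the periodic domain, integrate by parts:
\frac{d}{dt}\int \eta(u)\,dx
  = -\int \eta''(u)\,\nu\,(\partial_x u)^{2}\,dx \;\le\; 0
  \qquad \text{for every convex } \eta .

% Linear fourth-order hyperviscosity, by contrast:
\partial_t u = -\kappa\,\partial_x^{4} u, \qquad \kappa > 0,

\frac{d}{dt}\int \eta(u)\,dx
  = -\kappa\int \eta''(u)\,(\partial_x^{2} u)^{2}\,dx
    + \frac{\kappa}{3}\int \eta''''(u)\,(\partial_x u)^{4}\,dx .
```

The first estimate is sign-definite for any convex $\eta$. The second is only guaranteed to be dissipative when the last term vanishes or is negative, as it does for the quadratic energy $\eta = u^2/2$, so the linear operator controls the energy but not every convex entropy.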

Sometimes the blog is just an open version of a personal journal. I feel myself torn between wanting to write about some thoroughly nerdy topic that holds my intellectual interest (like hyperviscosity, for example) and ending up ranting about some aspect of my professional life (like last week). I genuinely felt like the rant from last week would be followed this week by a technical post because things would be better. Was I ever wrong! This week is even more appalling! I’m getting to see the rollout of the new national program reaching for Exascale computers. As deeply problematic as the current NNSA program might be, it is a paragon of technical virtue compared with the broader DOE program. It’s as if we already had a President Trump in the White House to lead our Nation over the brink toward chaos, stupidity and making everything an episode in the World’s scariest reality show. Electing Trump would just make the stupidity obvious; make no mistake, we are already stupid.

I think there are parallels that are worth discussing in depth. Something big is happening and right now it looks like a great unraveling. People are choosing sides and the outcome will determine the future course of our World. On one side we have the forces of conservatism, which want to preserve the status quo through the application of fear to control the populace. This allows control, lack of initiative, deprivation and a herd mentality. The prime directive for the forces on the right is the maintenance of the existing structures of power in society. The forces of liberalization and progress are arrayed on the other side wanting freedom, personal meaning, individuality, diversity, and new societal structure. These forces are colliding on many fronts and the outcome is starting to be violent. The outcome still hangs in the balance.

The Internet is a great liberalizing force, but it also provides a huge amplifier for ignorance, propaganda and the instigation of violence. It is simply a technology and it is not intrinsically good or bad. On the one hand the Internet allows people to connect in ways that were unimaginable mere years ago. It allows access to incredible levels of information. The same thing creates immense fear in society because new social structures are emerging. Some of these structures are criminal or terrorist, some are dissidents against the establishment, and some are viewed as immoral. The information available to the general public becomes an overload. This works in favor of the establishment, which benefits from propaganda and ignorance. The result is a distinct tension between knowledge and ignorance, freedom and tyranny, hinging upon fear and security. I can’t see who is winning, but the signs are not good.

The core of the issue is an unhealthy relationship to risk, fear and failure. Our management is focused upon controlling risk, fear of bad things, and outright avoidance of failure. The result is an implemented culture of caution and compliance manifesting itself as a gulf of leadership. The management becomes about budgets and money while completely losing sight of purpose and direction. The focus on leading ourselves in new directions gets lost completely. The ability to take risks gets destroyed by fear, above all the outright fear of failure. People are so completely wrapped up in trying to avoid ever fucking up that all the energy behind doing progressive things moving forward is completely sapped. We are so tied to compliance that plans must be followed even when they make no sense at all.

ry small degree. Our managers are human and limited in their capacity for complexity and in the time available to provide focus. If all of the focus is applied to management nothing is left for leadership. The impact is clear: the system is full of management assurance, compliance and surety, yet virtually absent of vision and inspiration. We are bereft of aspirational perspectives with clear goals looking forward. The management focus breeds an incremental approach that too concretely grounds future vision completely on what is possible today. All of this is brewing in a sea of risk aversion and intolerance for failure.

that I work for. It has resulted in the wholesale divestiture of quality because quality no longer matters to success. It is creating a thoroughly awful and untenable situation where truth and reality are completely detached from how we operate. Every time that something of low quality is allowed to be characterized as being high quality, we undermine our culture. Capability to make real progress is completely undermined because progress is extremely difficult and prone to failure and setbacks. It is much easier to simply incrementally move along doing what we are already doing. We know that will work and frankly those managing us don’t know the difference anyway. Doing what we are already doing is simply the status quo and orthogonal to progress.

micromanagement. Each step in micromanagement produces another tax on the time and energy of everyone impacted by the system and diminishes the good that can be done. In essence we are draining our system of energy for creating positive outcomes. The management system is unremittingly negative in its focus, trying to stop stuff from happening rather than enable stuff. It is ultimately a losing battle, which is gutting our ability to produce great things.

management work shouldn’t be done in the abstract. Almost all of the management stuff consists of good ideas and is “good”. It is bad in the sense of what it displaces from the sorts of efforts we have the time and energy to engage in. We all have limits in terms of what we can reasonably achieve. If we spend our energy on good but low-value activities, we do not have the energy to focus on difficult high-value activities. A lot of these management activities are good, easy, and time consuming, and they directly displace lots of hard high-value work. The core of our problems is the inability to focus sufficient energy on hard things. Without focus the hard things simply don’t get done. This is where we are today, consumed by easy low-value things, and lacking the energy and focus to do anything truly great.

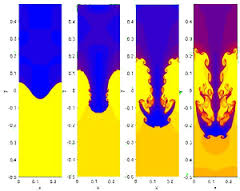

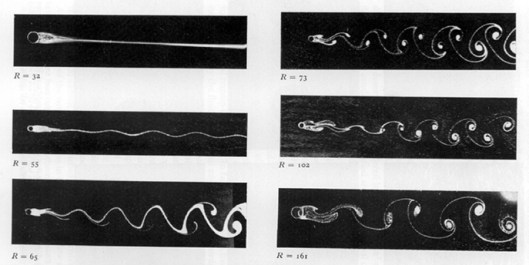

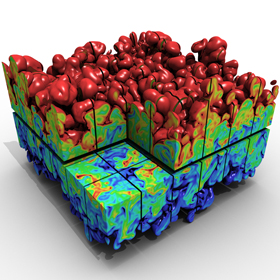

s a general ambiguity regarding the purpose of our Labs, the goals of our science and the importance of the work. None of this is clear. It is the generic implication of the lack of leadership within the specific context of our Labs, or federally supported science. It is probably a direct result of a broader and deeper vacuum of leadership nationally infecting all areas of endeavor. We have no visionary or aspirational goals as a society either.

I’ll write the equation in TeX and show all of you a picture; you can make out a little of my other ink too, a lithium-7 atom and a Rayleigh-Taylor instability (I also have my favorite dog’s paw on my right shoulder and the famous von Kármán vortex street on my forearm). The equation is how I think about the second law of thermodynamics in operation through the application of a vanishing viscosity principle tying the solution of equations to a concept of physical admissibility. In other words I believe in entropy and its power to guide us to solutions that matter in the physical world.
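For readers who have not met it, one common textbook way to state a vanishing viscosity principle for a scalar conservation law is sketched below. This is an assumed standard form, not necessarily the exact expression in the picture: the flux $f$, the viscosity $\nu$, and the entropy pair $(\eta, q)$ with $q' = \eta' f'$ are the usual symbols, introduced here for illustration.

```latex
% Regularized problem: add a small viscous term to the conservation law.
\partial_t u^{\nu} + \partial_x f(u^{\nu}) = \nu\,\partial_x^{2} u^{\nu},
  \qquad \nu > 0 .

% Physically admissible weak solution: the limit of vanishing viscosity.
u = \lim_{\nu \to 0^{+}} u^{\nu} .

% The limit satisfies, in the sense of distributions, an entropy inequality
% for every convex entropy \eta with entropy flux q, where q' = \eta' f':
\partial_t \eta(u) + \partial_x q(u) \le 0 .
```

The inequality is the discrete trace of the second law: among the many weak solutions of the inviscid equation, only the one that dissipates entropy at shocks is the limit of the viscous problem.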

was “play”. In talking about what the concept of play means to me first in the context of childhood then adulthood I had several epiphanies about the health and vitality of our current society and workplaces. Basically, the concept of play is under siege by forces that find it too frivolous to be supported. Societally we have destroyed play as a free wheeling unstructured activity for children, and crushed the freedom to play at work under the banner of accountability. We are poorer and more unhappy as a result and it is yet another manifestation of unremitting fear governing our behaviors.

The greatest realization in the dialog came when I took note of how I used to play at work and all the good that came from it. The times when I have been the most productive, creative and happy with work have all been associated with being allowed to play at work. By play I mean being allowed to experiment, test, and create new ideas in an environment allowing for failure and risk (essentially by placing very few constraints and limitations on what I was doing). The key was the creation of and commitment to very high-level goals and the freedom to pursue these goals in a relatively free way. The key is the pursuit of the broad objectives using methods that are not strongly prescribed a priori.

We end up working extremely hard across everything in society to make sure that bad things don’t ever happen. We put all sorts of measures in place to prevent bad things. We don’t seem to have the capacity to realize that bad things just happen and it’s a fact of life. We spend so much effort trying to manage all the risks that life is just passing us by. This manifests itself with the destructive belief that the government’s job is to protect all of us from bad things (like terrorism). We are willing to give up freedom, accomplishment and productivity to assure a slight increase in safety. Often the risks we are sacrificing so much to diminish are vanishingly small and trivial (like terrorism), yet we are making this trade over and over again. We are allowing ourselves to drown in a sea of safety measures against risks that are inconsequential. The aggregate cost of all of these risk control measures exceeds the value of almost any of the measures. It represents the true threat to our future.

In economic policy it is well known that monopolies are bad. They are bad for everyone except the people who own and control those monopolies (who invest a lot in retaining their power!). They are drags on growth, innovation and progress. They are the essence of the too-big-to-fail problem. In a very real sense the same thing is happening in science. We are being swallowed by monopolistic ideas. We are too invested in a variety of traditional solutions to problems (which solve traditional problems). Innovation, invention and progress are falling victim to this seemingly society-wide trend.

Looking at our soon-to-be, if not already, ancient codes based on ancient technology, I asked how often we built a new code in the old days. Sure as could be, the answer was radically different from today’s world: we built new codes every five to seven years. FIVE TO SEVEN YEARS!!!! Today we are shepherding codes that are at least a quarter of a century old, and nothing new is in sight. We just continue to accrete capability onto these old codes, horribly constrained by sets of decisions increasingly divorced from today’s reality, technology and problems. It is a recipe for failure, but not the good kind of failure; it is the kind of failure that crushes the future slowly and painlessly, like the hardening of the arteries.

Perhaps no greater emblem of our addiction to shortsightedness exists than the crumbling infrastructure. The roads, bridges, electrical grids, airports, sewers, water systems, power plants, … that our core economy depends upon are in horrible shape, and no will exists to support them. We can’t even conjure up the vision to create the infrastructure for the new century, and we leave it to privatized interests that will never deliver it. We are setting ourselves up to be permanently behind the rest of the world. We have no pride as a nation, no leadership and no vision of anything different. We just have short-term narcissistic self-interest embodied by the low tax, low service mentality. The same dynamic is happening at work.

… foundations, we have stale old codes, models, methods and algorithms that ill-serve our potential. The application of “too big to fail” to our codes is creating a slow-motion failure of epic proportions. The basis for the failure is the loss of innovation and of a sense that we are creating the future. Instead we simply curate the past. Our best should be ahead of us, and any leadership worth its salt would demand that we work steadfastly to seize greatness. In modeling and simulation, the creation of new codes should be an energizing factor, creating effective laboratories for innovation, invention and creativity and providing new avenues for progress.