tl;dr

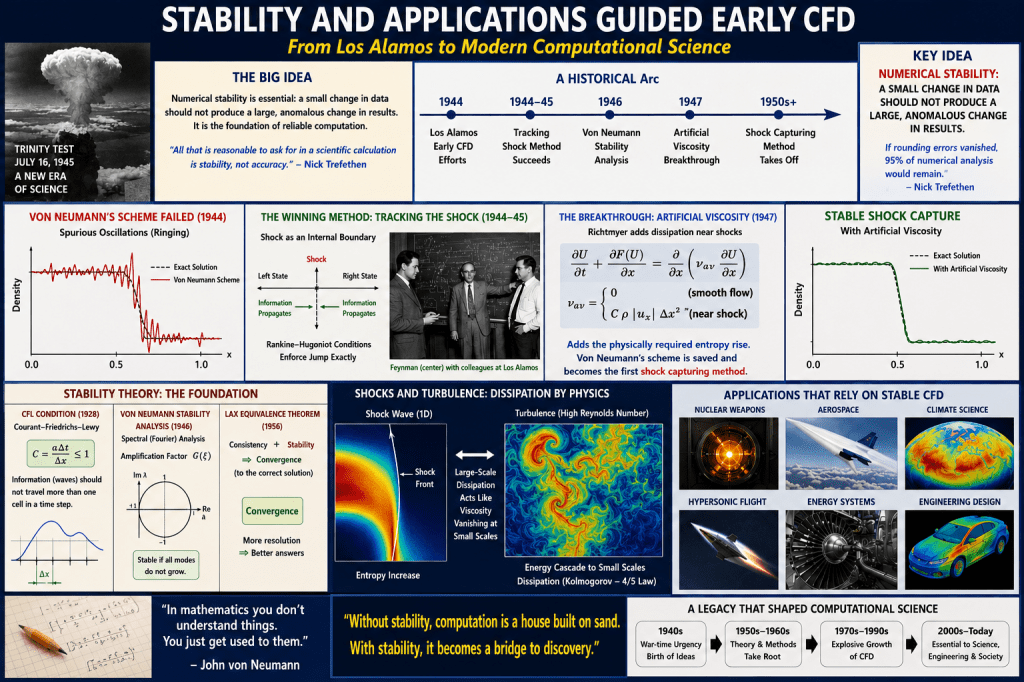

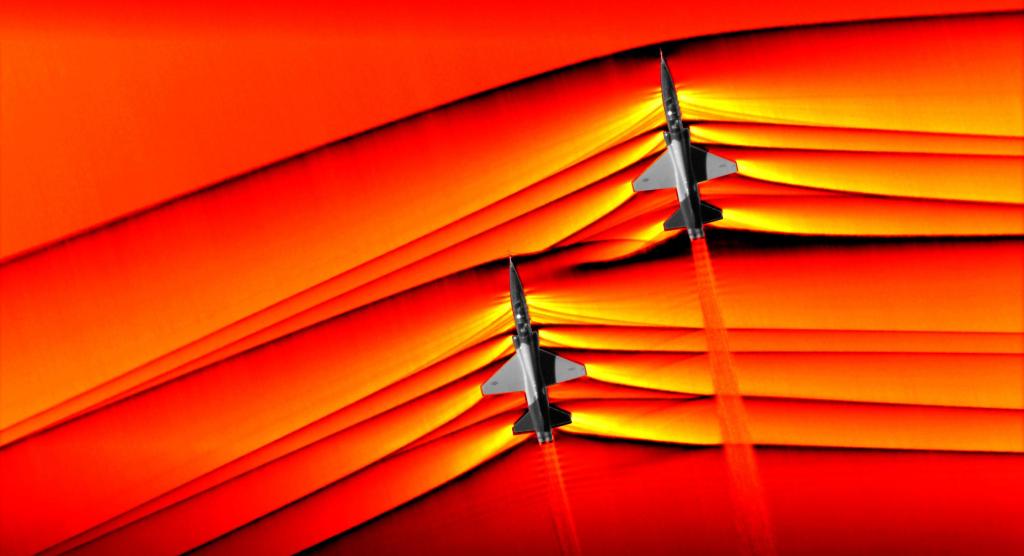

Algorithmic stability is an essential concept for solving problems with computers. Studying stability provides a foundation for everything a computer does. Any algorithm for any purpose can exhibit stability issues that are fatal. Simply put, an algorithm lacks stability when a small change in data yields an anomalously large change in results. The archetypal stability problem is the numerical solution of differential equations. The topic arose from key wartime applications and early computing use (WW2 and the atom bomb). Von Neumann’s numerical algorithm for computing shock waves failed miserably, and that failure became a key topic to study and understand. This spurred essential developments in computational science. Despite progress, problems that need attention still exist. There are parallels to AI that we should look to for better outcomes with that technology.

“Mathematics is the art of explanation.” ― Paul Lockhart

In the Beginning

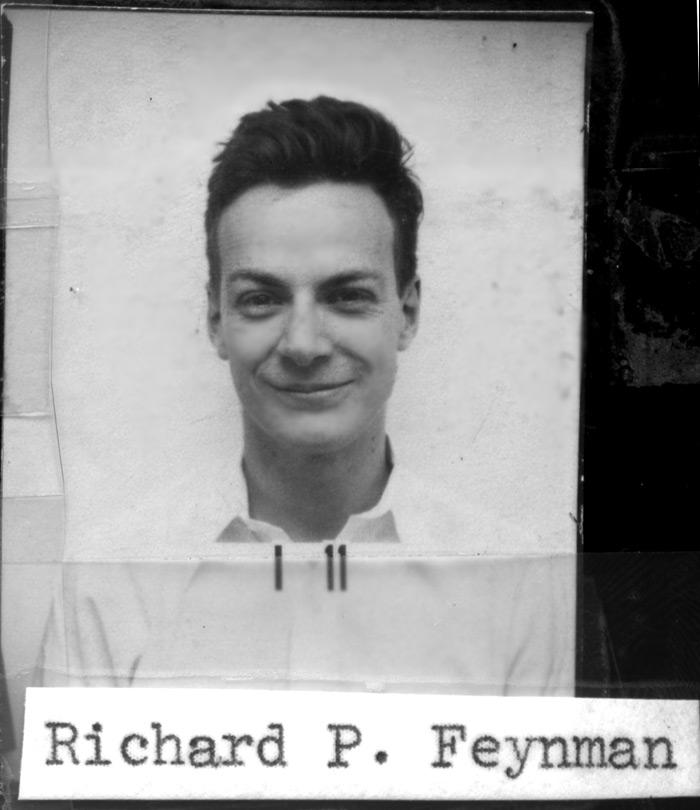

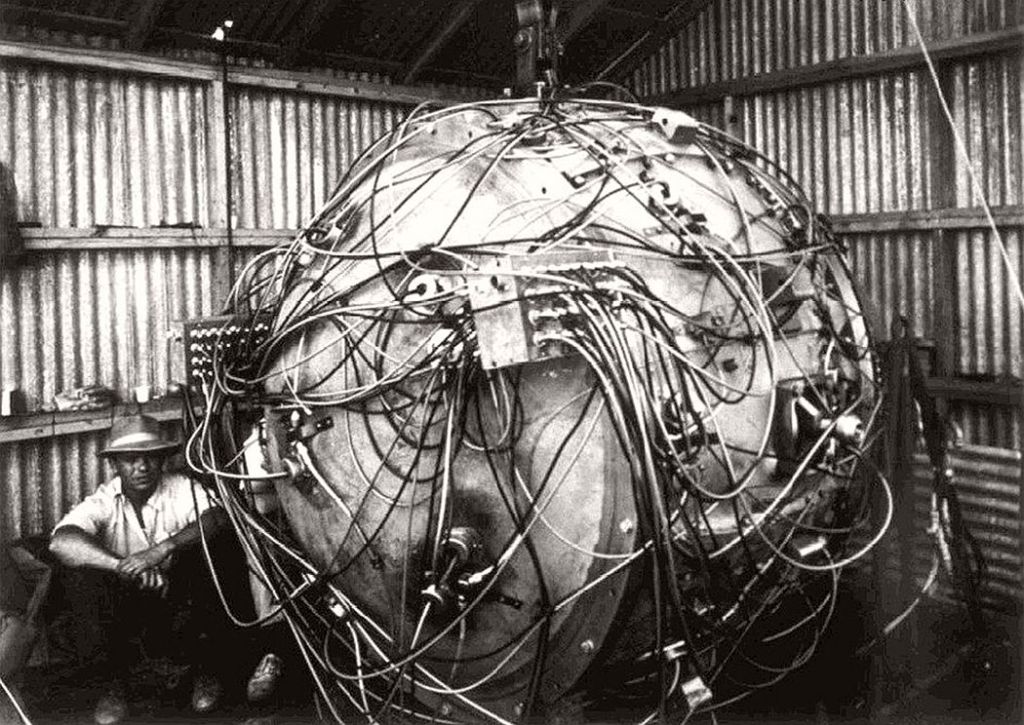

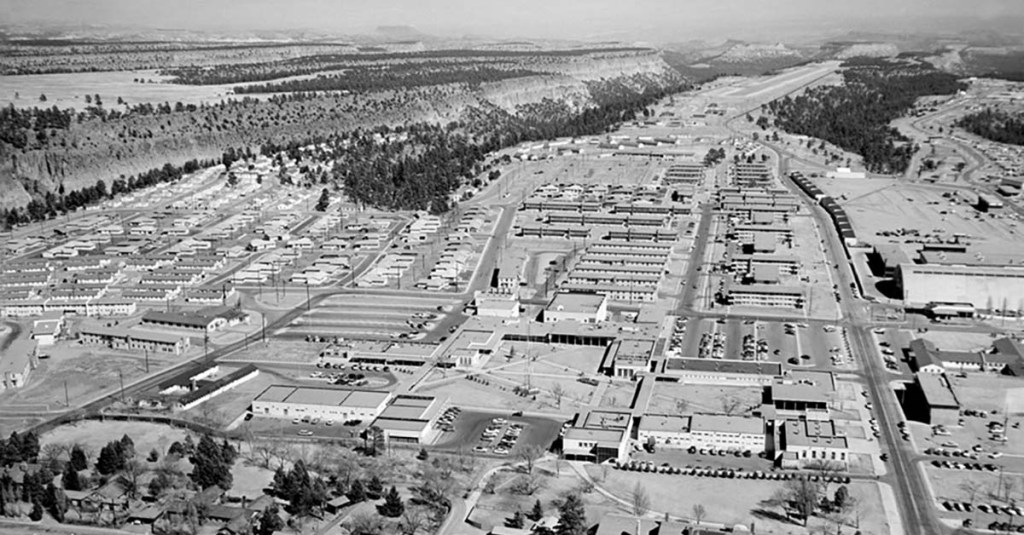

By 1944, the American effort behind creating the atomic bomb was beginning to make genuine progress. Part of the Manhattan Project was a nascent computational physics effort guided by the vision of John Von Neumann. In Los Alamos, they were examining design ideas numerically. The effort was led by Hans Bethe and Richard Feynman, both future Nobel Prize winners in Physics. Von Neumann had championed the idea of using computing for science. He also devised a computational scheme for shock hydrodynamics. Bethe and Feynman did early calculations using Von Neumann’s method. It failed, catastrophically. The method produced horrible oscillating results (ringing). Today, we would recognize this as numerical instability.

This outcome was recognized as a problem. Scientists in Los Alamos (Peierls) also conceptualized ways to mitigate it. These changes to Von Neumann’s method would not be realized until after the war. During the war, success was achieved with another algorithm devised by the British mission at Los Alamos. The method was first proposed by Peierls and then refined by Skyrme. It was fundamentally different from Von Neumann’s: it shared some techniques with his method, but computed the shock wave explicitly via tracking. The shock was treated with precision by Feynman via the Rankine-Hugoniot conditions as an internal boundary. Von Neumann’s method was more general, but fatally flawed.
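For readers who have not met them, the Rankine-Hugoniot conditions state that mass, momentum, and energy are conserved across the shock. A sketch in the standard one-dimensional Eulerian textbook form is below. The notation is an assumption for illustration (the wartime calculations were Lagrangian and would have used a different but equivalent form): $s$ is the shock speed, $[\cdot]$ is the jump in a quantity across the shock, $\rho$ is density, $u$ is velocity, $p$ is pressure, and $E$ is total energy per unit mass.

$$
s\,[\rho] = [\rho u], \qquad
s\,[\rho u] = [\rho u^2 + p], \qquad
s\,[\rho E] = [(\rho E + p)\,u]
$$

A tracking method enforces these relations directly at the shock location, which is why the shock acts as an internal boundary in the calculation.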

“Mathematics is the cheapest science. Unlike physics or chemistry, it does not require any expensive equipment. All one needs for mathematics is a pencil and paper.” ― George Polya

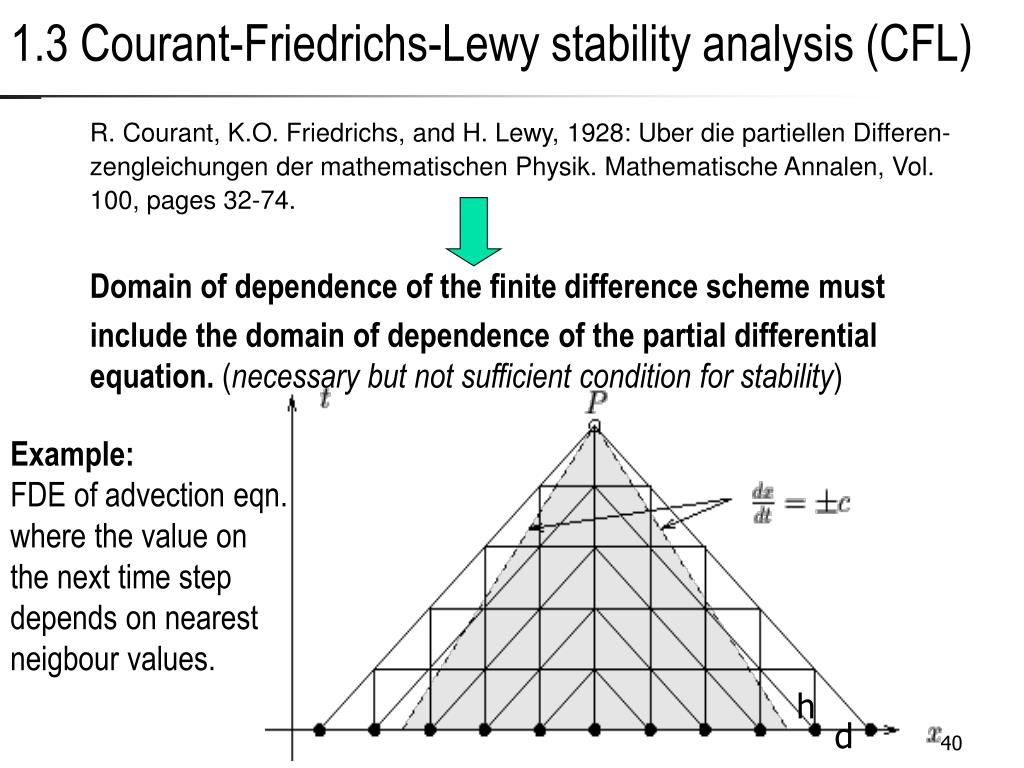

From the foundational work of Courant, Friedrichs, and Lewy in 1928, the methods used a heuristic for stability. This is the famous CFL condition named for them. It is a simple argument about the domain of dependence for information (waves). Because sound waves propagate at a finite speed, they should not travel farther than one mesh spacing in a single time step. It is simple and rational, and still the core of stability today. It is also subtly flawed, as I’ve written. Both WW2 methods used it, and it holds today (for explicit methods). For Von Neumann’s method, it was insufficient. Something else was needed to keep it viable. This seeded two developments key to the future of computational physics.
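To make the CFL argument concrete, here is a minimal sketch of how an explicit code might choose its time step. The function name `cfl_time_step`, the safety factor of 0.9, and the choice of |u| + c as the signal speed are assumptions for illustration, not details of either wartime method.

```python
import numpy as np

def cfl_time_step(u, c, dx, cfl=0.9):
    """Largest explicit time step allowed by the CFL heuristic.

    u   : flow velocity in each cell
    c   : sound speed in each cell
    dx  : mesh spacing (scalar or per-cell array)
    cfl : safety factor <= 1 (hypothetical default)
    """
    # Fastest signal in each cell: sound waves carried along by the flow.
    signal_speed = np.abs(u) + c
    # No wave may cross more than a fraction `cfl` of a cell in one step.
    return cfl * np.min(dx / signal_speed)

# Three cells with the same sound speed but different flow velocities.
u = np.array([0.0, 1.0, -2.0])
c = np.array([1.0, 1.0, 1.0])
dt = cfl_time_step(u, c, dx=0.1)
print(dt)
```

The time step is set by the single fastest cell. If the signal speed is under-estimated anywhere, the computed step is too large and the heuristic no longer protects the calculation.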

A key question to consider is whether current difficulties are seeding any similar fundamental mathematics. The failures and problems are things to explore that lead to discovery. AI has vast swaths of problems needing math. Efforts are generally lacking and sub rosa.

“But in my opinion, all things in nature occur mathematically.” ― René Descartes

Context and Modern Significance

“If rounding errors vanished, 95% of numerical analysis would remain.” – Nick Trefethen

My focus here is numerical stability for solving differential equations, especially partial differential equations. Other forms of numerical stability are equally important for computation. The classical case is Gaussian elimination, which drove much of the early work, including von Neumann’s efforts with Goldstine. That work studies algorithms and how to structure them for stable computation. It also draws attention to when computation is untrustworthy, as determined by the structure of the problem. By and large, these algorithms are designed to produce exact or precise results, except when numerical errors occur. Roundoff error and changes in the order of operations can lead to stability issues. These approaches focus on eliminating those problems and ensuring reliable results.
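A tiny example shows how the order of operations alone can change a result in floating-point arithmetic. The numbers below are chosen purely for illustration. `math.fsum` is Python's standard-library routine for an accurately rounded sum and serves as the reference.

```python
import math

values = [1.0e16, 1.0, -1.0e16, 1.0]

# Left-to-right summation: the first 1.0 is absorbed by 1.0e16,
# because adjacent doubles near 1e16 are 2 apart.
naive = 0.0
for v in values:
    naive += v

# Same numbers, different grouping: cancel the large terms first.
reordered = (values[0] + values[2]) + (values[1] + values[3])

# Accurately rounded reference sum.
reference = math.fsum(values)

print(naive, reordered, reference)
```

The two orderings are mathematically identical, yet one loses half of the answer. Stable algorithms are structured so that this kind of loss cannot be amplified into a wrong result.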

The primacy of stability in numerical computations powered the growth of the technology. Contrast this with the lack of a coherent, encompassing theory for large language models. This is true when you look at how these models behave during training or use. That behavior is stochastic, so it is perhaps logical that stability would not be a key concern. I would counter that stability concepts might bring order to AI where it is lacking today. The absence of stability theory erodes confidence in the underlying techniques. Addressing it should be a priority going forward, and would likely yield practical benefits.

“Do not imagine that mathematics is hard and crabbed, and repulsive to common sense. It is merely the etherealization of common sense.” ― Lord Kelvin

Unfortunately, as noted previously, the United States is loath to invest in this kind of work of late. The applied math that has been so crucial to computational physics is largely absent today in AI. It is absent in almost every scientific endeavor. The partnership between the two is all but dead. This all points to an indictment of the American strategy in AI (or lack thereof). We assume we’re in the lead, but we’re increasingly failing to do the things that would sustain and expand that lead. Instead, the USA is doing everything it can to lose the lead in the long run. This applies to what we are and are not doing. My goal is to outline a key methodology for the birth and growth of computational physics. In contrast, it will highlight the lack of a similar framework for our current revolution. This is badly needed and would be a huge boon to AI in the future, improving every aspect of the technology.

Many things stand in the way, but one thing is galling in the extreme. I’ll note that claims of industrial espionage are true, but they are also propaganda. They’re used to make Americans think our rivals are stealing their technological edge through spying. This belittles an adversary we should fear for their own creativity and creation. We avoid recognizing our own self-defeating philosophy: our systematic internal attack on science and technology. These self-defeating actions are the core of the danger.

“If you know the enemy and know yourself, you need not fear the result of a hundred battles. If you know yourself but not the enemy, for every victory gained you will also suffer a defeat.” —Sun Tzu

Industrial espionage happens and is part of the picture, but it pales in comparison to the absolute incompetence and the attack on science and technology that the government and industry are waging on themselves. We’re the ones laying the groundwork for Chinese supremacy in science and technology, because China is inherently competent at doing the right things while we do all the wrong things. It will become clear that the Americans are the ones who need to engage in industrial espionage of the Chinese quite soon, because we will increasingly be firmly behind. The coffers of science and technology that the Chinese might be emptying were filled decades ago, and those coffers will now be empty and threadbare. Given American attacks on its own internal science and technology, any claims otherwise are simply hubris and empty patriotism, so common these days.

What’s clear today is that the United States is losing its dominance, and it’s losing it because of its own actions. The Chinese are pulling ahead because they’re competent and doing the right things, while the USA is undermining its science and technology.

“The first principle is that you must not fool yourself — and you are the easiest person to fool.” — Richard Feynman

To anyone who questions the current American thought, I’ll share a personal anecdote from right before I left Sandia.

I was taking the required training for a conference where British scientists would be present for classified discussions. The training is standard before these meetings, and the Sandia person leading it was a young fellow. During the training, he made comments belittling Russian or Soviet accomplishments in nuclear weapons. He seemed to believe those achievements were illegitimate and based on espionage, particularly pointing to Klaus Fuchs.

While the Russians did engage in espionage and stole much of the design for early atomic bombs, it would be wrong to belittle their capabilities or the brilliance of how they executed their program. Once they knew an atomic weapon could be produced, they could build it from scratch. The only thing the American design did at Trinity was confirm that it could be done. Once you know it can be done, it becomes a much simpler matter to do it.

This also belittles the brilliance of scientists like Andrei Sakharov, who did unique and brilliant work in support of the Soviet hydrogen bomb program. They had many other extraordinary theoretical physicists like Landau and Zel’dovich. I corrected the young man and encouraged him to look at the real history, as explained by Richard Rhodes. The literature has some obscure publications by Russian scientists who were key to their program. These are eye-popping. The same fictions are at work today with regard to the Chinese and their advances in AI, and in a host of other fields. We Americans need to be guided by facts and not pulled in by faux patriotism and hubris about our scientific prowess. We should be completely in sync with the rather politically incorrect notion that the Manhattan Project was powered more by the efforts of immigrants than by homegrown American science.

“Intellectual freedom is essential to human society — freedom to obtain and distribute information, freedom for open-minded and unfearing debate, and freedom from pressure by officialdom and prejudices.” — Andrei Sakharov

Another key aspect of this dynamic is the cost of information protection. The American system operates under the premise of assumed superiority. This then leads to protectionist policies in classification and export control. These policies can be quite effective in limiting spying and keeping information from being lost. They are also effective in restricting this information domestically, and that harms innovation and progress in the USA. American institutions have cracked down on information dissemination more and more. This has played a significant role in dragging American science down. Even worse, if American science is behind, these policies will act as friction that makes catching up harder. We may already be behind, and the approach taken is foolish at best.

The question is whether the United States will wake up to a Sputnik-like moment and turn things around. Alternatively, the United States may be done, and the nearly century-long era of international dominance, fueled by supremacy in science and technology, could come to an end. That chapter has not been written yet, but the current signs are worrying. The American public seems asleep and is not taking the steps needed to return to the approach that helped us achieve our dominance.

With that table setting out of the way, let’s get back to our story.

“All that it is reasonable to ask for in a scientific calculation is stability, not accuracy.” – Nick Trefethen

Shock Capturing Methods and Applications

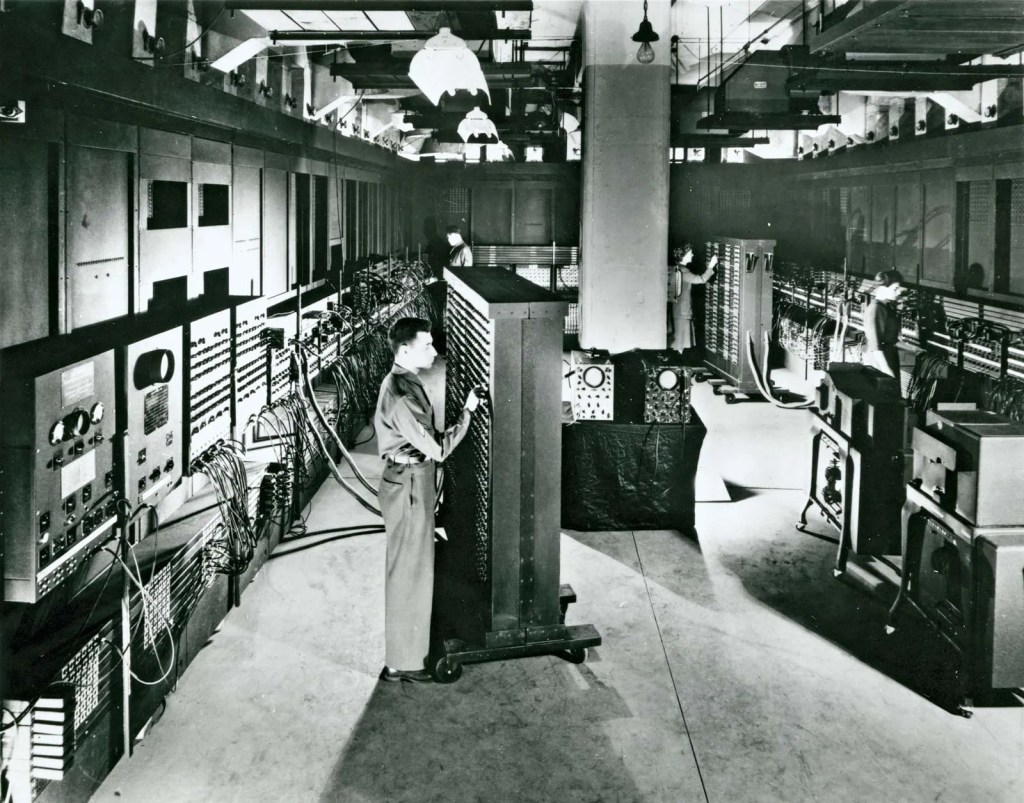

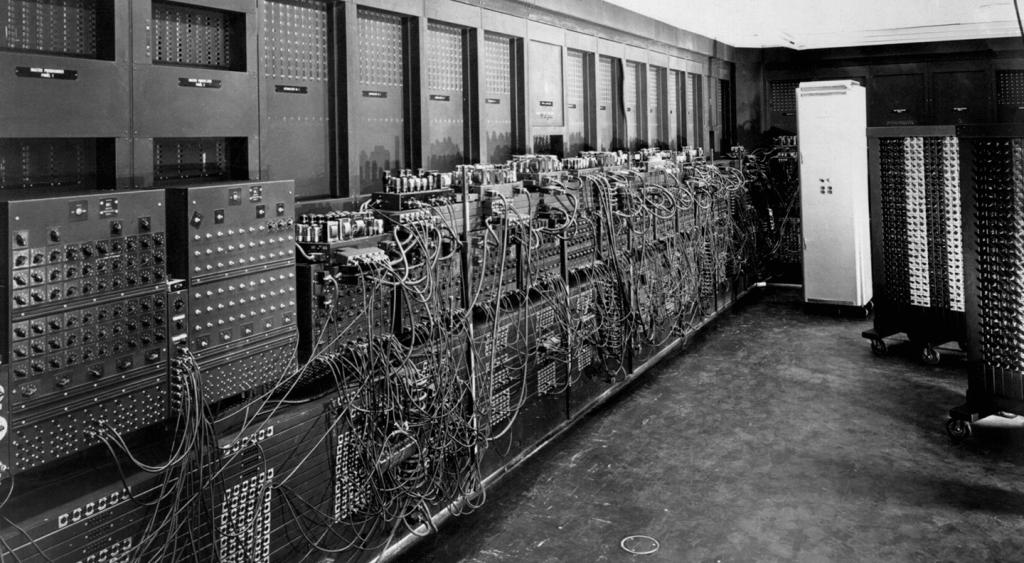

After World War 2, science jumped to the attention of everyone. This was powered by the stunning use of atomic bombs against Japan, shining a light on the Manhattan Project. In the wake of this, resources and importance flowed toward scientific work. This allowed the powerful vision of John Von Neumann to begin to come to fruition. Part of his vision was the use of computers for scientific work. Part of his vision was chastened by the lack of success for his differencing scheme. Its results were unstable. This was not an isolated problem, as other schemes that seemed reasonable produced bad results. He sought to understand this.

By the summer of 1946, he had produced an analytical tool to examine stability. This was his spectral stability analysis of finite difference schemes. He presented it in Los Alamos that summer for parabolic equations. It only applies to linear equations, but provides results that guide nonlinear methods and equations. It can provide answers to subtle stability problems invisible to inspection. It is still an essential tool for understanding methods and is used broadly today. The method leaked out over the remainder of the 1940s, most notably through the work of Crank and Nicolson. Von Neumann published the method in 1950 along with another key invention.
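The analysis is easy to sketch for a textbook case. The example below is an assumption chosen for illustration, not a reconstruction of what Von Neumann presented: the forward-time, centered-space (FTCS) scheme for the heat equation u_t = nu u_xx, with r = nu dt / dx^2. Substituting a Fourier mode into the scheme gives an amplification factor G for each wavenumber, and the scheme is stable when no mode grows, that is, when |G| <= 1 for every mode.

```python
import numpy as np

def ftcs_heat_amplification(r, theta):
    """Amplification factor of FTCS for u_t = nu * u_xx.

    r     : nu * dt / dx**2
    theta : wavenumber times dx, in [0, pi]
    """
    # Insert u_j^n = G**n * exp(1j * j * theta) into
    # u_j^{n+1} = u_j^n + r * (u_{j+1}^n - 2 u_j^n + u_{j-1}^n).
    return 1.0 + r * (np.exp(1j * theta) - 2.0 + np.exp(-1j * theta))

def max_growth(r, n_modes=721):
    """Largest |G| over all resolvable modes."""
    theta = np.linspace(0.0, np.pi, n_modes)
    return np.max(np.abs(ftcs_heat_amplification(r, theta)))

for r in (0.25, 0.5, 0.6):
    print(r, max_growth(r))
```

For this scheme G = 1 - 4 r sin^2(theta / 2), so the largest growth exceeds one as soon as r is greater than 1/2, and the worst mode is the one at the grid scale (theta = pi).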

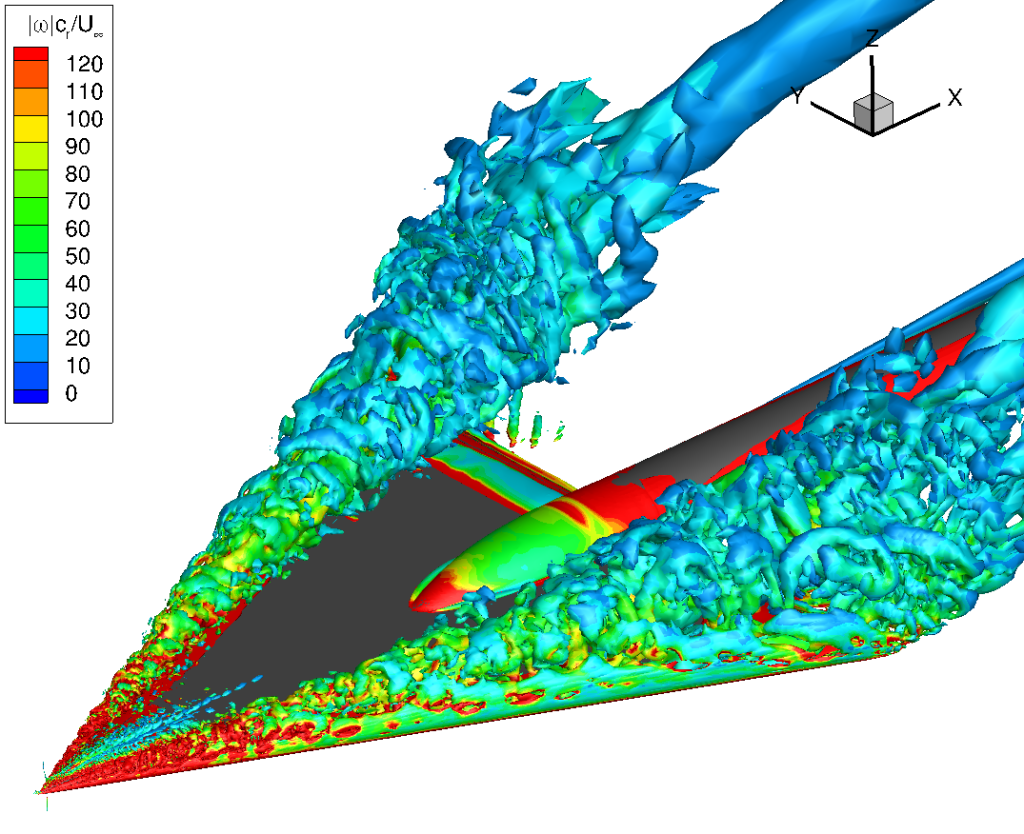

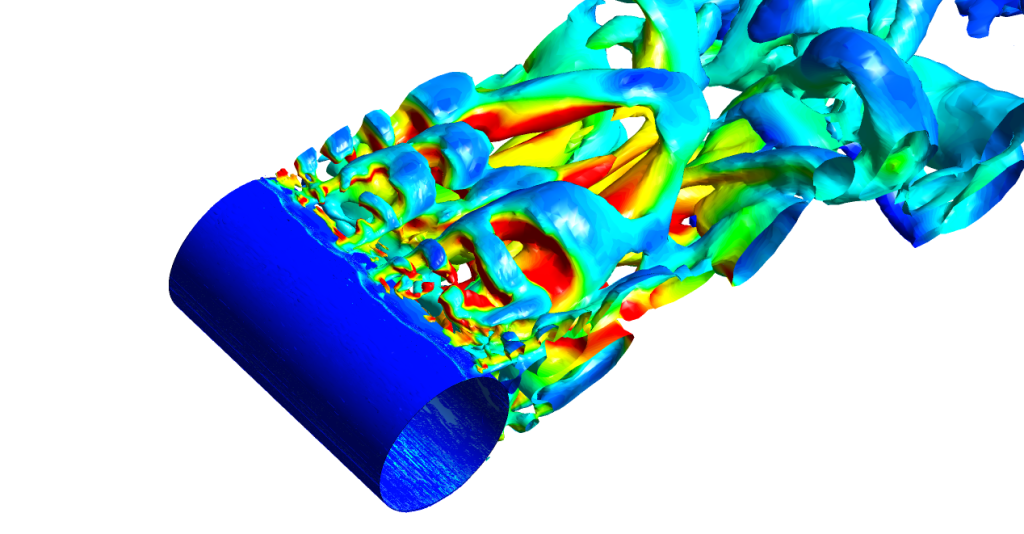

The method used successfully in the War was a tracking method. As application complexity grew, the tracking method became intractable. Los Alamos was looking at the H-bomb (super, as they called it). A more general method was needed to support progress. Robert Richtmyer, who was leading the Theoretical Division, sought to produce this. He worked on the suggestion of Peierls to add dissipation to Von Neumann’s method. By 1947, Richtmyer worked out the solution. He would add an extra term in and near shock waves to produce the entropy needed by a shock’s passage. This was dubbed artificial viscosity. In my opinion, an unfortunate choice. It is not artificial, but entirely physical. Shocks create singularities, and entropy rise is necessary to navigate the singularity. It allowed shocks to be captured and not tracked. Von Neumann’s method was salvaged, and the stage was set for numerical methods to flourish on complex applications.
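A minimal sketch of the idea, in the quadratic form commonly associated with Von Neumann and Richtmyer, is below. The function name, the staggered-mesh layout, the coefficient value, and the switch that turns the term off in expansion are all assumptions for illustration. The switch in particular is a common convention in later codes, and I am not claiming it matches the 1950 paper.

```python
import numpy as np

def artificial_viscosity(rho, u, c_q=2.0):
    """Quadratic artificial viscosity in each cell of a 1D staggered mesh.

    rho : cell densities, length N
    u   : node velocities, length N + 1
    c_q : dimensionless coefficient (hypothetical default)
    """
    # Velocity jump across each cell, du = dx * (du/dx).
    du = np.diff(u)
    # q = c_q * rho * (dx * du/dx)**2, applied only where the cell is compressing.
    return np.where(du < 0.0, c_q * rho * du**2, 0.0)

# Three cells: one compressing, one uniform, one expanding.
rho = np.ones(3)
u = np.array([1.0, 0.0, 0.0, 1.0])
q = artificial_viscosity(rho, u)
print(q)
```

The term q is added to the pressure in the momentum and energy equations. Because it scales with the square of the velocity jump across a cell, it is large at a shock and negligible in smooth flow, which is what lets the shock be captured over a few cells rather than tracked.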

This was the first shock-capturing method. The paper Von Neumann and Richtmyer published had three major advances. The first was the differencing scheme Von Neumann devised in 1944, which had failed in use. The second was the stability technique Von Neumann devised. The third was the artificial viscosity invented by Richtmyer to stabilize shocks. As I’ve noted before, this viscosity has also become the foundational method in Large Eddy Simulation. The reason for this commonality is that dissipation in turbulence acts much as it does in shocks. In both cases, dissipation at large scales persists as viscosity vanishes, and it does so in nearly identical ways. The understanding of shocks is primarily one-dimensional. For turbulence, the result is fully three-dimensional and was introduced by Kolmogorov as his “4/5 law.”
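For reference, the 4/5 law in its usual textbook form is written below. The notation is an assumption for illustration: $\delta u_\parallel(r)$ is the difference in the velocity component along the separation vector between two points a distance $r$ apart, $\langle\cdot\rangle$ is an average, and $\varepsilon$ is the mean rate of energy dissipation per unit mass.

$$
\left\langle \left( \delta u_\parallel(r) \right)^3 \right\rangle = -\frac{4}{5}\,\varepsilon\, r
$$

The relation holds for separations in the inertial range in the limit of vanishing viscosity. The dissipation rate on the right stays finite even though the viscosity does not appear, which is the same behavior a shock shows.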

“As technology advances, the ingenious ideas that make progress possible vanish into the inner workings of our machines, where only experts may be aware of their existence. Numerical algorithms, being exceptionally uninteresting and incomprehensible to the public, vanish exceptionally fast.” – Nick Trefethen

Many of the most important aspects of computational science arose out of this single thread of science and math. These works are foundational to the entire field. They show us the path not taken today with AI. We should heed this as a warning.

Subtle Stability

“Computing has changed not only the way mathematics is practiced, but mathematics itself.” —Peter Lax

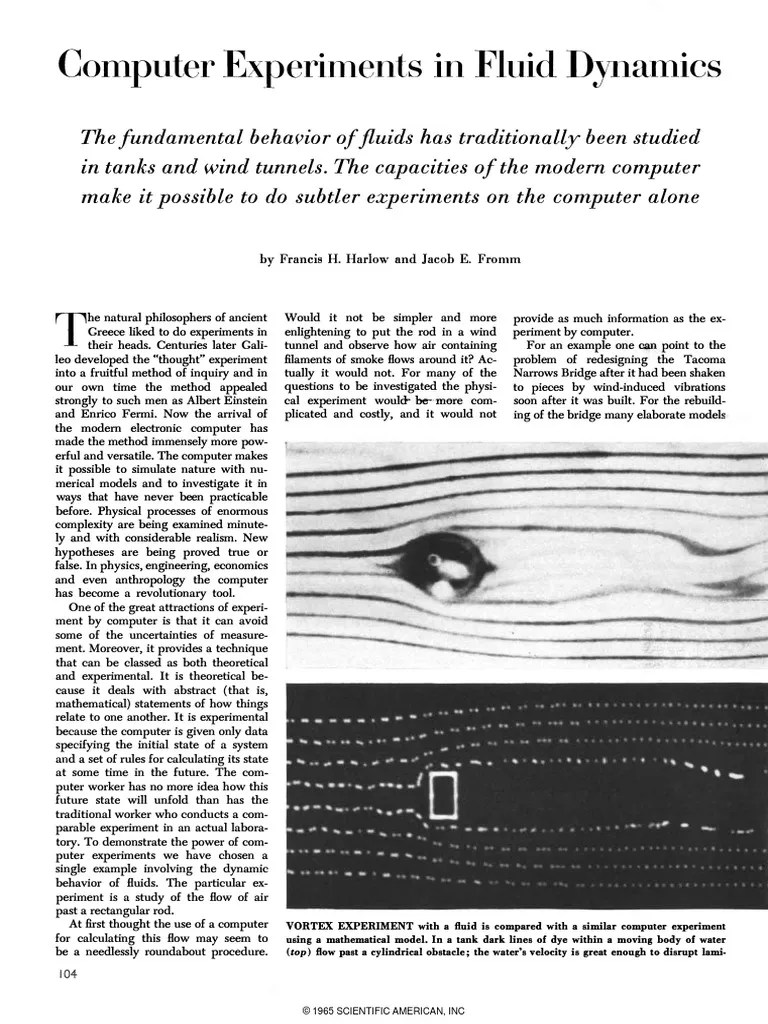

Given the success of this method, numerical solutions exploded onto the scene. Applications of numerical methods multiplied, especially in fluid dynamics. The key aspect was the proof that it could be done. Everyone knew it was possible, especially in Los Alamos. This seeded ideas with luminaries like Peter Lax and Frank Harlow. Both of these men pioneered whole fields of practice in what we now call CFD. Harlow started to invent a host of methods still used today, first in compressible fluids, then in incompressible fluids. He also invented an important aspect of turbulence modeling. Lax produced fundamental methods for compressible fluid dynamics. He produced several methods that are foundations today, and the theory of conservation form, which underpins computational aerodynamics.

“One must watch the convergence of a numerical code as carefully as a father watching his four year old play near a busy road.” — J. P. Boyd

More importantly, he produced foundational mathematics for computational science. Lax’s equivalence theorem connects stability, consistency, and convergence: for a consistent approximation to a well-posed linear problem, stability is necessary and sufficient for convergence. Convergence is the promise that more computing power yields better answers. This has underpinned the pursuit of better computers for better science. It took the stability work discussed above and connected it to numerical approximation accuracy to ground all efforts. The practice of code verification is dependent on this theory. Scientists design stable, accurate methods to solve equations and implement these in code. If they can be shown to converge to the correct solution, we have assurances of correctness. Lax provided the theory to see this.
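Code verification in practice looks something like the sketch below. It is a minimal example under stated assumptions, not a description of any production code: the FTCS scheme for the heat equation u_t = u_xx on [0, 1] with zero boundary values, the exact solution exp(-pi^2 t) sin(pi x), and a fixed ratio r = dt / dx^2 = 0.25 that keeps the scheme stable. The error is measured on two meshes and the observed order of accuracy is computed from their ratio.

```python
import numpy as np

def heat_ftcs_error(n_cells, t_final=0.0625, r=0.25):
    """Max error of FTCS for u_t = u_xx on [0, 1] with u = 0 at both ends."""
    x = np.linspace(0.0, 1.0, n_cells + 1)
    dx = x[1] - x[0]
    # Choose a whole number of steps with dt / dx**2 close to r.
    n_steps = int(round(t_final / (r * dx**2)))
    dt = t_final / n_steps
    u = np.sin(np.pi * x)  # initial condition
    for _ in range(n_steps):
        # The right-hand side is evaluated in full before the update is applied.
        u[1:-1] += (dt / dx**2) * (u[2:] - 2.0 * u[1:-1] + u[:-2])
    exact = np.exp(-np.pi**2 * t_final) * np.sin(np.pi * x)
    return np.max(np.abs(u - exact))

error_coarse = heat_ftcs_error(16)
error_fine = heat_ftcs_error(32)
# Halving dx should cut the error by about 2**p for a method of order p.
observed_order = np.log2(error_coarse / error_fine)
print(error_coarse, error_fine, observed_order)
```

The scheme is consistent to second order in dx when r is held fixed, and it is stable for this r, so the theorem says it converges. An observed order near two is the evidence that the code implements the method correctly.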

“The unreasonable effectiveness of mathematics in the natural sciences.” – Eugene Wigner

The importance of the applications to the world meant that the theory for partial differential equations came first. Ordinary differential equation theory from Dahlquist actually came afterward. One might logically think the order would be reversed, but it was not. The applications gave PDEs the urgency and the energy.

The inspiration for this post is my discovery of stability problems that infest our codes today. These are associated with strong expansions I’ve discussed recently. The problems are multiple, with under-estimates of wave speeds leading to unstable time steps and schemes. The saving grace is that the instability is at mid-frequency and not at the grid scale. Shocks produce instability at the grid scale. Thus they are catastrophic almost immediately. The expansion instability is mitigated by a little resolution and a few time steps. The question is whether the instability has a lasting influence on the solution. Does the instability leave a lingering corruption of the solution that is never healed? Right now, theory can’t tell us. Evidence says that this may well be the case.

Postscript

By embracing the past, the current administration is killing the future. The future is about moving forward and adapting to the problems we already have. The solutions of the past will not work in the future. That is true in every area, from warfare to science. This administration is focused on the past, and its actions will destroy a positive future for the United States. I spent my entire professional career at nuclear weapons laboratories. I watched them decline and lose capability. I can say without equivocation that we are not ready for what is coming. Our science is not prepared to compete in the world that is about to unfold.

“We’ve arranged a society on science and technology in which nobody understands anything about science and technology, and this combustible mixture of ignorance and power, sooner or later, is going to blow up in our faces.” — Carl Sagan

We need to get our shit together, fast. The current administration is damaging our ability to compete on every front. This is true whether it is national security or economics. We are not ready for the world that is coming. If you want to highlight how unprepared we are, just look at Iran or Ukraine. In both wars, American power is failing in spades. We are not adapting to the world that is already here.

America has rejected expertise, and it is going to do our country harm. Whether we are talking about nukes, fusion, AI or drones, we need experts to define our strategy, then to execute it and adapt to an ever-changing landscape. I have seen this rejection of expertise all the way down to the working level at a national laboratory. There, my own expertise was deemed too cutting and too critical. It was way too much for the incompetent leaders to take seriously.

Our current leaders and their approach to leadership are failing the country at this critical juncture, and time is running out. Looking to billionaires to lead us is foolish. They are increasingly driven by their own greed and could not care less about society as a whole. Just look at how they have stewarded the technologies that have driven their wealth. In every case, those technologies have caused real harm to our society, to our children, and to the way we live. They show no responsibility other than maximizing their own wealth and power. Letting them guide something with the power of AI is suicidal.

“In mathematics you don’t understand things. You just get used to them.” —John von Neumann

This essay offers a small vignette of how fundamental math supports computational science. Computational science has become essential for work supporting a host of applications. These include everything from nuclear weapons to climate science to car design. The story encapsulates much of what is missing from science today (including, but not limited to, AI).

“Mathematics, rightly viewed, possesses not only truth, but supreme beauty—a beauty cold and austere, like that of sculpture, without appeal to any part of our weaker nature, without the gorgeous trappings of painting or music, yet sublimely pure, and capable of a stern perfection such as only the greatest art can show.” ― Bertrand Russell

References

Von Neumann, John. Proposal and analysis of a new numerical method for the treatment of hydrodynamical shock problems. Applied Mathematics Group, Institute for Advanced Study, 1944.

Von Neumann, John, and Robert D. Richtmyer. “A method for the numerical calculation of hydrodynamic shocks.” Journal of Applied Physics 21, no. 3 (1950): 232-237.

Mattsson, Ann E., and William J. Rider. “Artificial viscosity: back to the basics.” International Journal for Numerical Methods in Fluids 77, no. 7 (2015): 400-417.

Morgan, Nathaniel R., and Billy J. Archer. “On the origins of Lagrangian hydrodynamic methods.” Nuclear Technology 207, no. sup1 (2021): S147-S175.

Margolin, Len G., and K. L. Van Buren. “Richtmyer on Shocks: ‘Proposed Numerical Method for Calculation of Shocks,’ an Annotation of LA-671.” Fusion Science and Technology 80, no. sup1 (2024): S168-S185.

Lax, Peter D. “Hyperbolic difference equations: A review of the Courant-Friedrichs-Lewy paper in the light of recent developments.” IBM Journal of Research and Development 11, no. 2 (1967): 235-238.

Lax, Peter D., and Robert D. Richtmyer. “Survey of the stability of linear finite difference equations.” Communications on Pure and Applied Mathematics 9, no. 2 (1956): 267-293.

Kolmogorov, Andrey Nikolaevich. “A refinement of previous hypotheses concerning the local structure of turbulence in a viscous incompressible fluid at high Reynolds number.” Journal of Fluid Mechanics 13, no. 1 (1962): 82-85.

Smagorinsky, Joseph. “General circulation experiments with the primitive equations: I. The basic experiment.” Monthly Weather Review 91, no. 3 (1963): 99-164.

Higham, Nicholas J. Accuracy and Stability of Numerical Algorithms. Society for Industrial and Applied Mathematics, 2002.

Grcar, Joseph F. “John von Neumann’s analysis of Gaussian elimination and the origins of modern Numerical Analysis.” SIAM Review 53, no. 4 (2011): 607-682.

Lax, Peter D. “The flowering of applied mathematics in America.” SIAM Review 31, no. 4 (1989): 533-541.