Study hard what interests you the most in the most undisciplined, irreverent and original manner possible.

― Richard Feynman

When I got my first job out of school it was in Los Alamos, home of one of the greatest scientific institutions in the World. This Lab birthed the Atomic Age and changed the World. I went there to work, but also to learn and grow in a place where science reigned supreme and technical credibility really and truly mattered. Los Alamos did not disappoint at all. The place lived and breathed science, and I was bathed in knowledge and expertise. I can’t think of a better place to be a young scientist. Little did I know that the era of great science and technical superiority was drawing to a close. The place that welcomed me with so much generosity of spirit was dying. Today it is a mere shell of its former self, along with Laboratories strewn across the country whose former greatness has been replaced by rampant mediocrity, pathetic leadership and a management class that rules over this decline. Money has replaced achievement, integrity and quality as the lifeblood of science. Starting with a quote by Feynman is apt because the spirit he represents so well is the very thing we have completely beaten out of the system.

Don’t think money does everything or you are going to end up doing everything for money.

― Voltaire

If one takes a look at the people who get celebrated by organizations today, it is almost invariably managers. This happens inside organizations, in their external face, and in alumni recognition by universities. In almost every case the people who are highlighted to represent achievement are managers. One explanation is that managers have a direct connection to money. One of the key characteristics of the modern age is the centrality of money to organizational success. Money is connected to management, and increasingly disconnected from technical achievement. This is true in the industry, government and university worlds, the entire scientific universe. This whole post could have replaced “the rise of management” with “the rise of money”. We increasingly look at aggregate budget as coequal to quality. The more money an organization has, the better and more important it is. A few organizations still struggle to hang on to celebrating technical achievers, Los Alamos among them. These celebrations weaken with each passing year. The real celebration is how much budget the Lab has, and how many employees that budget can support.

People who don’t take risks generally make about two big mistakes a year. People who do take risks generally make about two big mistakes a year.

― Peter F. Drucker

The days of technical competence and scientific accomplishment are over. This foundation for American greatness has been overrun by risk aversion, fear and compliance with a spirit of commonness. I use the word “greatness” with gritted teeth because of the perversion of its meaning by the current President. This perversion is acute in the context of science because he represents everything that is destroying the greatness of the United States. Rather than “making America great again” he is accelerating every trend that has been eroding the foundation of American achievement. The management he epitomizes is the very blunt tool bludgeoning American greatness into a bloody pulp. Trump’s pervasive incompetence masquerading as management expertise will surely push numerous American institutions further over the edge into mediocrity. His brand of management is all too prevalent today and utterly toxic to quality and integrity.

In my life, the erosion of American greatness in science has been profound, evident and continual. I had a good decade of basking in the greatness of Los Alamos before the forces of mediocrity descended upon the Lab and proceeded to spoil, distort and destroy every bit of greatness in sight. A large part of the destruction was the replacement of technical excellence with management. The management is there to control the “butthead cowboys” and keep them from fucking up. Put differently, the management is there to destroy any individuality and make sure no one ever achieves anything great, because no one can take a risk sufficient to achieve something miraculous. Anyone expressing individuality is a threat and needs to be chained up. We replaced stunning World-class technical achievement with controlled staff, copious reporting, milestone setting, project management and compliance, all delivered with mediocrity. This is bad enough by itself, but for an institution responsible for maintaining our nuclear weapons stockpile, the consequences are dire. Los Alamos isn’t remotely alone. Everything in the United States is being assaulted by the arrayed forces of mediocrity. It is reasonable to ask whether the responsibilities the Labs are charged with continue to be competently achieved.

There is nothing so useless as doing efficiently that which should not be done at all.

― Peter F. Drucker

The march of the United States toward a squalid mediocrity had already begun years earlier. Management has led the way at every stage of the transformation. For scientific institutions, the decline began in the 1970s with the Department of Defense Labs. Once these Labs were shining beacons of achievement, but the management unleashed on them put a stop to this. Since then we have seen NASA, Universities, and the DOE Labs all brought under the jackboots of management. All of this management was brought in to enforce a formality of operations, provide a safe or secure workplace, and keep scandals at bay. The Nation has decided that phenomenal success and great achievements aren’t worth the risks or side-effects of being successful. The management is the delivery vehicle for the mediocrity-inducing control. The power and achievement of the technical class is the casualty. Management is necessary, but today the precious balance between control and achievement is completely lost.

The managers aren’t evil, but neither are most of the people who simply carry out the orders of their superiors. Most managers are good people who simply carry out awful things because they are expected to do so. We now put everything except technical achievement as a priority. Doing great technical work is always the last priority. It can always get pushed out by something else. The most important thing is compliance with all the rules and regulations. Management stands there to make sure it all gets done. This involves lots of really horrible training designed to show compliance but teach people almost nothing. We have project management to make sure we are on time and on budget. Since the biggest maxim of our pathetic management culture is never making a mistake, risks are the last thing you can take. It helps a lot when we really aren’t accomplishing anything worthwhile. When the fix is in and technical standards disappear, it doesn’t matter how terrible the work is. All work is World class by definition. Eventually everyone starts to believe the bullshit. The work is great, right? Of course it is.

All of this is now blazoned across the political landscape with an inescapable sense that America’s best days are behind us. The deeply perverse outcome of the latest National election is a president who is a cartoonish version of a successful manager. We have put our abuser, a representative of the class that has undermined our Nation’s true greatness, in the position of restoring that greatness. What a grand farce! Every day produces evidence that the current efforts toward restoring greatness are using the very things undermining it. The level of irony is so great as to strain credulity. The current administration’s efforts are the end point of a process that started over 20 years ago, obliterating professional government service and hollowing out technical expertise in every corner. The management class that has arisen in their place cannot achieve anything but moving money and people. Its ability to create a new and wonderful foundation of technical achievement is absent.

Greatness is a product of hard work, luck and taking appropriate risks. In science it is grounded upon technical achievements arising from intellectual labors along with a lot of failures, false starts and mistakes. In today’s highly managed World, everything that leads to greatness is undermined. Hard work is taxed by a variety of non-productive actions that compliance demands. Appropriate risks are avoided as a matter of course because risks court failure, and failure of any sort is virtually outlawed. False starts never happen anymore in today’s project-managed reality. Mistakes are fatal for careers. Risk, failure and mistakes are all necessary for learning, and ultimately unique and advanced ideas come as the intellectual product of a healthy environment. An environment that cannot tolerate failure and risk is unhealthy. It is stagnant and unproductive. This is exactly where today’s workplace has arrived.

Money is a great servant but a bad master.

― Francis Bacon

With the twin pillars of destruction coming from money’s stranglehold on science and the inability to take risks, peer review has been undermined. Our current standards of peer review lack any integrity whatsoever. Success by definition is the rule of the day. A peer review cannot point out flaws without exposing the reviewers to dire consequences. This has fueled a massive downward spiral in the quality of technical work. Why take the risks necessary for progress when success can be so much more easily faked? Today peer review is so weak that bullshitting your way to success has become the norm. To point out real shortcomings in work has become unacceptable and courts scandal. It puts monetary issues at risk and potentially produces consequences for the work that management cannot accept. In the current environment scientific achievement does not happen because achievement is invariably risk prone. Such risks cannot be taken because of the hostile environment toward any problems or failures. Without failure, we are not learning, and learning at its apex is essentially research. Weak peer review is a large contributor to the decline in technical achievement and the loss of importance for the technical contributor.

Perhaps the greatest blow to science was the end of the Cold War. The Soviet bloc represented a genuine threat to the West and a worthy adversary. Technical and scientific competence and achievement were a key aspect in the defense of the West. Good work couldn’t be faked, and everyone knew that the West needed to bring its “A” game, or risk losing. When the Soviet bloc crumbled, so did a great deal of the unfettered support for science. Society lost its taste for the sorts of risks necessary for high levels of achievement. To some extent, the loss of ability to take risks and accept failures was already underway, with the end of the Cold War simply providing a hammer blow to support for science. It ended the primacy of true achievement as a route to National security. It might be useful to note that the science behind “Star Wars” was specious from the beginning. In a very real way the bullshit science of Star Wars was a trailblazer for today’s rampant scientific charlatans. Rather than give science free rein to seek breakthroughs along with the inevitable failure, society suddenly sought guaranteed achievement at a reduced cost. In reality it got neither achievement nor economized results. With the flow of money being equated to quality as opposed to results, the combination has poisoned science.

How do you defeat terrorism? Don’t be terrorized.

― Salman Rushdie

This transformation was already bad enough when the war on terror erupted to further complicate matters. The war on terror was a new cash cow for the broader defense establishment but came with all the trappings of guaranteed safety and assured results. It solidified the hold of money as the medium for science. Since terrorists represent no actual threat to society, technical success was unnecessary for victory. The only risk to society from terrorism is the self-inflicted damage we do to ourselves, and we’ve done the terrorists’ work for them masterfully. In most respects the only thing that matters at the Labs is funding. Quality, duty, integrity and virtually anything else are up for sale for money. Money has become the sole determining factor for quality and the dominant factor in every decision. Since the managers are the gatekeepers for funding, they have uprooted technical achievement and progress as the core of organizational identity. It is no understatement to say that the dominance of financial concerns is tied to the ascendency of management and the decline of technical work. At the same time the desire for assured results produced a legion of charlatans who began to infest the research establishment. This combination has produced the corrosive effect of reducing the integrity of the entire system, where money rules and results can be anything from finessed to outright fabricated. Standards are so low now that it doesn’t really matter.

Government has three primary functions. It should provide for military defense of the nation. It should enforce contracts between individuals. It should protect citizens from crimes against themselves or their property. When government, in pursuit of good intentions, tries to rearrange the economy, legislate morality, or help special interests, the costs come in inefficiency, lack of motivation, and loss of freedom. Government should be a referee, not an active player.

― Milton Friedman

One of the key trends impacting our government-funded Labs and research is the languid approach to science by the government. Spearheading this systematic decline in support is the long-term Republican approach to starving government that really took the stage in 1994 with the “Contract with America”. Since that time the funding for science has declined in real dollars along with a decrease in the support for professionalism by those in government. Over time the salaries and level of professional management have been under siege as part of an overall assault on governing. A compounding effect has been an ever-present squeeze on the rules related to conducting science. On the one hand we are told that the best business practices will be utilized to make science more efficient. Simultaneously, best practices in support for science have been denied us. The result is no efficiency along with no best practices and simply a decline in overall professionalism for the Labs. All of this is deeply compounding the overall decline in support for research.

Rank does not confer privilege or give power. It imposes responsibility.

― Peter F. Drucker

What can be done to fix all this?

Sometimes the road back to effective and productive technical work seems so daunting as to defy description. I’d say that a couple of important things are needed to pave the road. Most importantly, the purpose and importance of the work need to be central to the identity of science. Purpose and service need to replace money as the key organizing principle. A high-quality product needs to replace financial interests as the driving force in managing efforts. This step alone would make a huge difference and drive most of the rest of the necessary elements for a return to technical focus. First and foremost among these elements is an embrace of risk. We need to take risks and concomitantly accept failures as an essential element in success. We must let ourselves fail in attempting to achieve great progress through thoughtful risks. Learning, progress and genuine expertise need to become the measure of success and the lifeblood for our scientific and technical worlds. Management needs to shrink into the background where it becomes a service to technical achievement and an enabler for those producing the work. The organizations need to celebrate the science and technical achievements as the zenith of their collective identity. As part of this we need to have enough integrity to hold ourselves to high standards, welcoming and demanding hard-hitting critiques.

In a nutshell we need to do almost the complete opposite of everything we do today.

We are trying to prove ourselves wrong as quickly as possible, because only in that way can we find progress.

― Richard Feynman

Meetings. Meetings. Meetings. Meetings suck. Meetings are awful. Meetings are soul-sucking time wasters. Meetings are a good way to “work” without actually working. Meetings absolutely deserve the bad rap they get. Most people think that meetings should be abolished. One of the most dreaded workplace events is a day that is completely full of meetings. These days invariably feel like complete losses, draining all productive energy from what ought to be a day full of promise. I say this as an unabashed extrovert, knowing that the introvert is going to feel overwhelmed by the prospect.

If there is one thing that unifies people at work, it is meetings, and how much we despise them. Workplace culture is full of meetings and most of them are genuinely awful. Poorly run meetings are a veritable plague in the workplace. Meetings are also an essential human element in work, and work is a completely human and social endeavor. A large part of the problem is the relative difficulty of running a meeting well, which exceeds the talent and will of most people (managers). It is actually very hard to do this well. We have now gotten to the point where all of us almost reflexively expect a meeting to be awful and plan accordingly. For my own part, I take something to read, or my computer to do actual work, or the old standby of passing time (i.e., fucking off) on my handy dandy iPhone. I’ve even resorted to the newest meeting pastime of texting another meeting attendee to talk about how shitty the meeting is. All of this can be avoided by taking meetings more seriously and crafting time that is well spent. If this can’t be done, the meeting should be cancelled until the time can be well spent.

Conferences, talks and symposiums. This is a form of meeting that generally works pretty well. The conference has a huge advantage as a form of meeting. Time spent at a conference is almost always time well spent. Even at its worst, a conference should be a banquet of new information and exposure to new ideas. Of course, conferences can be done very poorly, and the benefits can be undermined by poor execution and lack of attention to detail.

Conversely, a conference’s benefits can be magnified by careful and professional planning and execution. One way to augment a conference significantly is to find really great keynote speakers to set the tone, provide energy and engage the audience. A thoughtful and thought-provoking talk delivered by an expert who is a great speaker can propel a conference to new heights and send people away with renewed energy. Conferences can also go to greater lengths to make the format and approach welcoming to greater audience participation, especially getting the audience to ask questions and stay awake and aware. It’s too easy to tune out these days with a phone or laptop. Good time keeping and attention to the schedule are another way of making a conference work to the greatest benefit. This means staying on time and on schedule. It means paying attention to scheduling so that the best talks don’t compete with each other if there are multiple sessions. It means not letting a speaker filibuster through the Q&A period. All of these maxims hold for a talk given during work hours, just on a smaller and more specific scale. There the setting, time of the talk and the time keeping all help to make the experience better. Another hugely beneficial aspect of meetings is food and drink. Sharing food or drink at a meeting is a wonderful way for people to bond and seek greater depth of connection. This sort of engagement can help to foster collaboration and greater information exchange. It engages with the innate human social element that meetings should foster (I will note that my workplace has mostly outlawed food and drink, helping to make our meetings suck more uniformly). Too often the aspects of a talk or conference that would make the great expense of people’s time worthwhile are skimped on, undermining and diminishing the value.

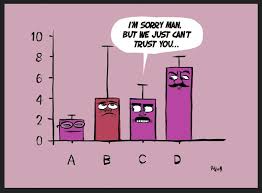

Reviews. A review meeting is akin to a project meeting, but has an edge that makes it worse. Reviews often teem with political context and fear. A common form is a project team, reviewers and then stakeholders. The project team presents work to the reviewers, and if things are working well, the reviewers ask lots of questions. The stakeholders sit nervously and watch, rarely participating. The spirit of the review is the thing that determines whether the engagement is positive and productive. The core values around which a review’s worth revolves are honesty and trust. If honesty and trust are high, those being reviewed are forthcoming and their work is presented in a way where everyone learns and benefits. If the reviewers are confident in their charge and role, they can ask probing questions and provide value to the project and the stakeholders. Under the best of circumstances, the audience of stakeholders can be profitably engaged in deepening the discussion, and themselves learn greater context for the work. Too often, the environment is so charged that honesty is not encouraged, and the project team tends to hide unpleasant things. If reviewers do not trust the reception for a truly probing and critical review, they will pull their punches and the engagement will be needlessly and harmfully moderated. A sign that neither trust nor honesty is present comes from an anxious and uninvolved audience.

Better meetings are a mechanism through which our workplaces have an immense ability to improve. A broad principle is that a meeting needs to have a purpose and desired outcome that is well known and communicated to all participants. The meeting should engage everyone attending, and no one should be a potted plant, or otherwise engaged. Everyone’s time is valuable and expensive, so the meeting should be structured and executed in a manner fitting its costs. A simple way of testing the waters is to gauge people’s attitudes toward the meeting and whether they are positive or negative. Do they want to go? Are they looking forward to it? Do they know why the meeting is happening? Is there an outcome that they are invested in? If these questions are answered honestly, those calling the meeting will know a lot and they should act accordingly.

It is time to return to great papers of the past. The past has clear lessons about how progress can be achieved. Here, I will discuss a trio of papers that came at a critical juncture in the history of numerically solving hyperbolic conservation laws. In a sense, these papers were nothing new, but provided a systematic explanation and skillful articulation of the progress at that time. In a deep sense these papers represent applied math at its zenith, providing a structural explanation along with proof to accompany progress made by others. These papers helped mark the transition of modern methods from heuristic ideas to broad adoption and common use. Interestingly, the depth of applied mathematics ended up paving the way for broader adoption in the engineering world. This episode also provides a cautionary lesson about what holds higher order methods back from broader acceptance, and the relatively limited progress since.

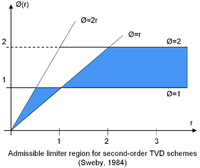

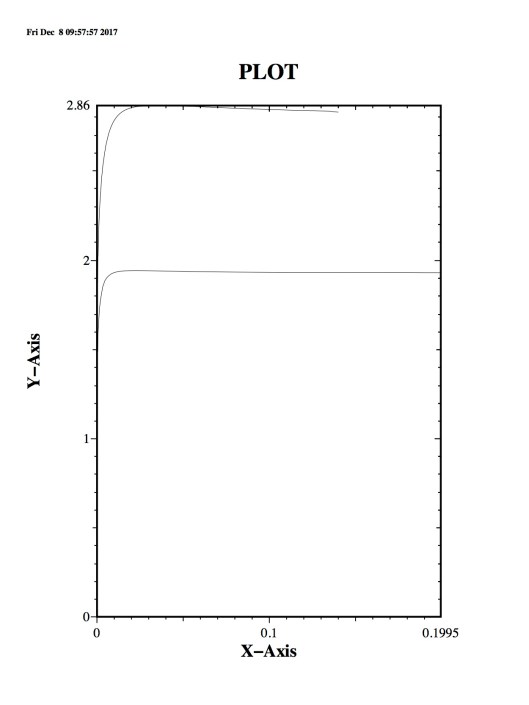

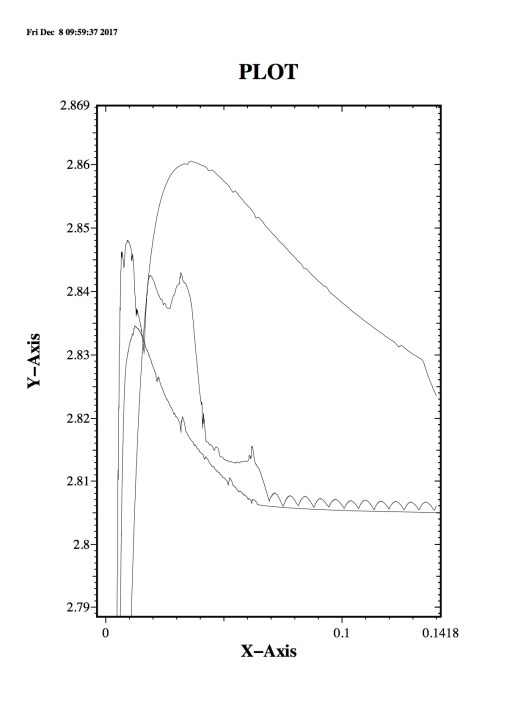

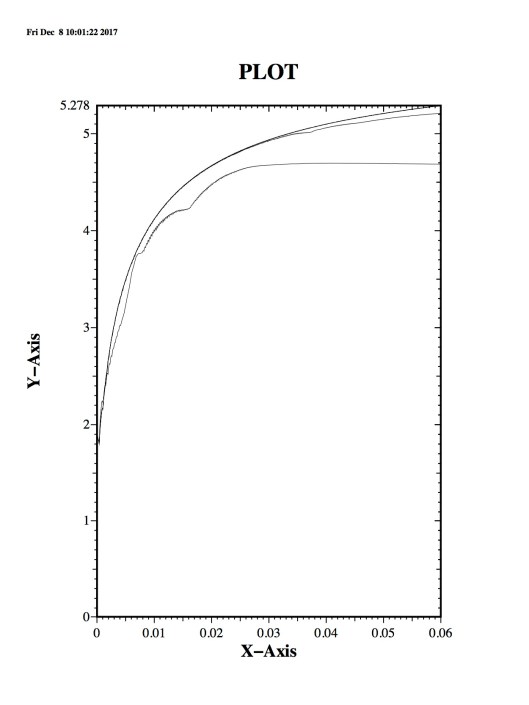

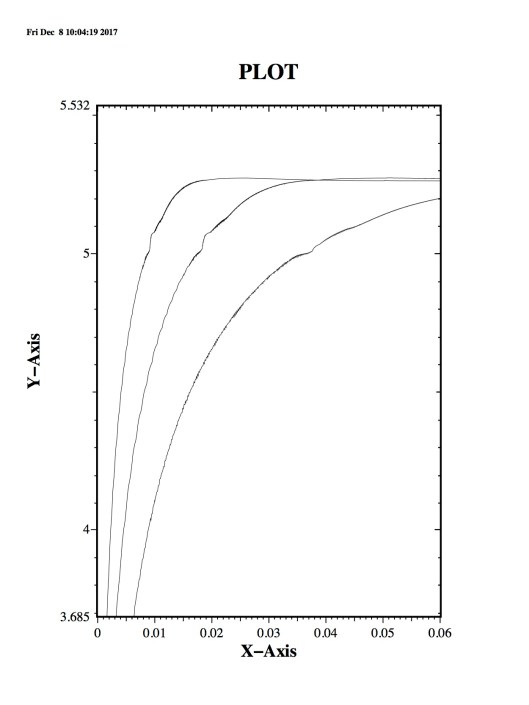

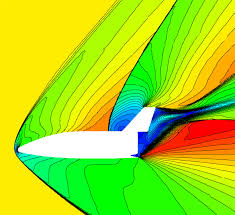

What Sweby did was provide a wonderful narrative description of TVD methods, and a graphical manner to depict them. In the form that Sweby described, TVD methods were a nonlinear combination of classical methods: upwind, Lax-Wendroff and Beam Warming. The limiter was drawn out of the formulation and parameterized by the ratio of local finite differences. The limiter is a way to take an upwind method and modify it with some part of the selection of second-order methods and satisfy the inequalities needed to be TVD. This technical specification took the following form, $ C_{j-1/2} = \nu \left( 1 + \frac{1}{2}\nu(1-\nu) \phi\left(r_{j-1/2}\right) \right) $ and

My own connection to this work is a nice way of rounding out this discussion. When I started looking at modern numerical methods, I started to look at the selection of approaches. FCT was the first thing I hit upon and tried. Compared to the classical methods I was using, it was clearly better, but its lack of theory was deeply unsatisfying. FCT would occasionally do weird things. TVD methods had the theory and this made them far more appealing to my technically immature mind. After the fact, I tried to project FCT methods onto the TVD theory. I wrote a paper documenting this effort. It was my first paper in the field. Unknowingly, I walked into a veritable minefield and complete shit show. All three of my reviewers were very well-known contributors to the field (I know it is supposed to be anonymous, but the shit show that unfolded unveiled the reviewers too).
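As a rough illustration of the limiter idea in Sweby's description, here is a minimal Python sketch for linear advection $u_t + a u_x = 0$ with $a > 0$ on a periodic grid. It uses the common textbook flux-limited form, in which $\phi = 0$ gives upwind, $\phi = 1$ gives Lax-Wendroff and $\phi = r$ gives Beam-Warming; this is not necessarily the exact notation of the paper. The function names, the grid size, the square-wave initial data and the choice of the minmod and superbee limiters are all assumptions made for illustration.

```python
import numpy as np

def minmod(r):
    """Minmod limiter: phi(r) = max(0, min(1, r))."""
    return np.maximum(0.0, np.minimum(1.0, r))

def superbee(r):
    """Superbee limiter: phi(r) = max(0, min(2r, 1), min(r, 2))."""
    return np.maximum(0.0, np.maximum(np.minimum(2.0 * r, 1.0),
                                      np.minimum(r, 2.0)))

def lax_wendroff(r):
    """phi = 1 recovers unlimited Lax-Wendroff, which is not TVD."""
    return np.ones_like(r)

def total_variation(u):
    """Discrete total variation on a periodic grid."""
    return float(np.sum(np.abs(np.roll(u, -1) - u)))

def step(u, nu, limiter):
    """One flux-limited step for u_t + a u_x = 0, a > 0, nu = a*dt/dx."""
    du_plus = np.roll(u, -1) - u   # u_{j+1} - u_j
    du_minus = u - np.roll(u, 1)   # u_j - u_{j-1}
    nonzero = du_plus != 0.0
    # r_j = (u_j - u_{j-1}) / (u_{j+1} - u_j). Where the denominator vanishes
    # the correction below is multiplied by zero, so r is simply set to 0.
    r = np.where(nonzero, du_minus / np.where(nonzero, du_plus, 1.0), 0.0)
    # Flux at j+1/2 (divided by a): upwind value plus a limited correction.
    flux = u + 0.5 * (1.0 - nu) * limiter(r) * du_plus
    return u - nu * (flux - np.roll(flux, 1))

def run(limiter, n_cells=100, nu=0.5, n_steps=60):
    """Advect a square wave (hypothetical test case) and record the TV history."""
    u = np.zeros(n_cells)
    u[20:40] = 1.0
    history = [total_variation(u)]
    for _ in range(n_steps):
        u = step(u, nu, limiter)
        history.append(total_variation(u))
    return u, history

if __name__ == "__main__":
    for name, limiter in [("minmod", minmod),
                          ("superbee", superbee),
                          ("Lax-Wendroff", lax_wendroff)]:
        _, tv = run(limiter)
        print(f"{name:>12}: TV start = {tv[0]:.3f}, max TV = {max(tv):.3f}")
```

Under these assumptions, and with a CFL number no larger than one, the two limited schemes should keep the total variation at or below its starting value, while the unlimited Lax-Wendroff choice should overshoot at the edges of the square wave and let the total variation grow.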

It is no exaggeration to say that getting funding for science has replaced the conduct and value of that science today. This is broadly true, and particularly true in scientific computing, where getting something funded has replaced funding what is needed or wise. The truth of the benefit of pursuing computer power above all else is decided upon a priori. The belief was that this sort of program could “make it rain” and produce funding because this sort of marketing had in the past. All results in the program must bow to this maxim, and support its premise. All evidence to the contrary is rejected because it is politically incorrect and threatens the attainment of the cargo, the funding, the money. A large part of this utterly rotten core of modern science is the ascendancy of the science manager as the apex of the enterprise. The accomplished scientist and expert is now merely a useful and necessary detail; the manager reigns as the peak of achievement.

In this putrid environment, faster computers seem an obvious benefit to science. They are a benefit and pathway to progress; this is utterly undeniable. Unfortunately, it is an expensive and inefficient path to progress, and an incredibly bad investment in comparison to the alternatives. The numerous problems with the exascale program are subtle, nuanced, highly technical and pathological. As I’ve pointed out before, the modern age is no place for subtlety or nuance; we live in an age of brutish simplicity where bullshit reigns and facts are optional. In such an age, exascale is an exemplar: it is a brutally simple approach tailor-made for the ignorant and witless. If one is willing to cast away the cloak of ignorance and embrace subtlety and nuance, a host of investments can be described that would benefit scientific computing vastly more than the current program. If we followed a better balance of research, computing could contribute far more to science and scale far greater heights than the current path provides.

Today supercomputing is completely at odds with the commercial industry. After decades of first pacing advances in computing hardware, then riding along with increases in computing power, supercomputing has become separate. The separation occurred when Moore’s law died at the chip level (in about 2007). The supercomputing world has become increasingly desperate to continue the free lunch, and remains tied to an outdated model for delivering results. Basically, supercomputing is still tied to the mainframe model of computing that died in the business World long ago. Supercomputing has failed to embrace modern computing with its pervasive and multiscale nature moving all the way from mobile to cloud.

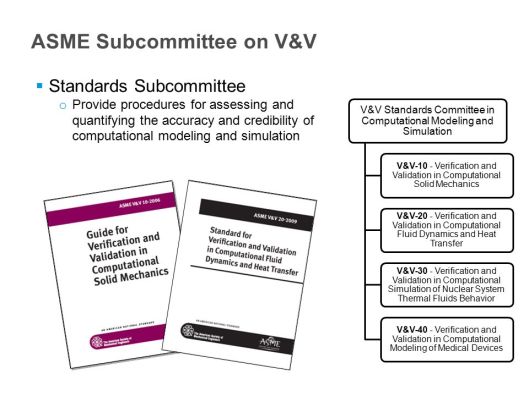

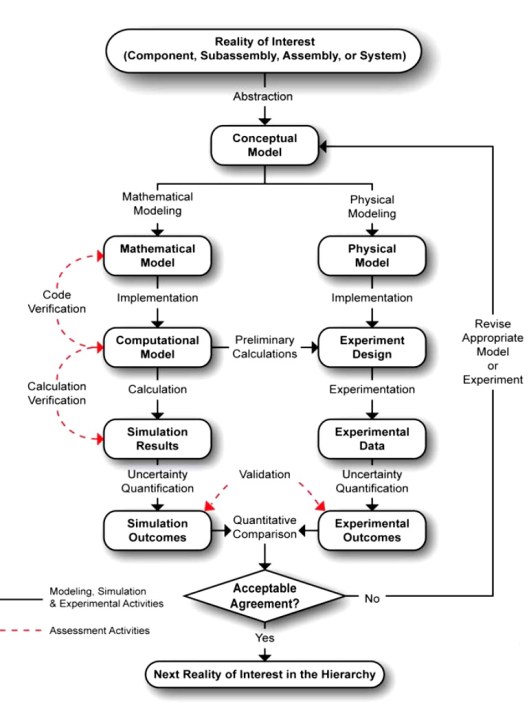

Expansive uncertainty quantification – too many uncertainties are ignored rather than considered and addressed. Uncertainty is a big part of V&V, a genuinely hot topic in computational circles, and practiced quite incompletely. Many view uncertainty quantification as only a small set of activities that address a small piece of the uncertainty question. Too much benefit is achieved by simply ignoring a real uncertainty because the value of zero that is implicitly assumed is not challenged. This is exacerbated significantly by a half-funded and deemphasized V&V effort in scientific computing. Significant progress was made several decades ago, but the signs now point to regression. The result of this often willful ignorance is a lessening of the impact of computing and a limiting of its true benefits.

progress are the computer codes. Old computer codes are still being used, and most of them use operator splitting. Back in the 1990s a big deal was made regarding replacing legacy codes with new codes. The codes developed then are still in use, and no one is replacing them. The methods in these old codes are still being used and now we are told that the codes need to be preserved. The codes, the models, the methods and the algorithms all come along for the ride. We end up having no practical route to advancing the methods.

Complete code refresh – we have produced and are now maintaining a new generation of legacy codes. A code is a repository for vast stores of knowledge in modeling, numerical methods, algorithms, computer science and problem solving. When we fail to replace codes, we fail to replace knowledge. The knowledge comes directly from those who write the code and create the ability to solve useful problems with that code. Much of the methodology for problem solving is complex and problem specific. Ultimately a useful code becomes something that many people are deeply invested in. In addition, the people who originally write the code move on, taking their expertise, history and knowledge with them. The code becomes an artifact for this knowledge, but it is also a deeply imperfect reflection of the knowledge. The code usually contains some techniques that are magical, and unexplained. These magic bits of code are often essential for success. If they get changed the code ceases to be useful. The result of this process is a deep loss of expertise and knowledge that arises from the process of creating a code that can solve real problems. If a legacy code continues to be used it also acts to block progress on all the things it contains, starting with the model and its fundamental assumptions. As a result, progress stops because even when there are research advances, they have no practical outlet. This is where we are today.

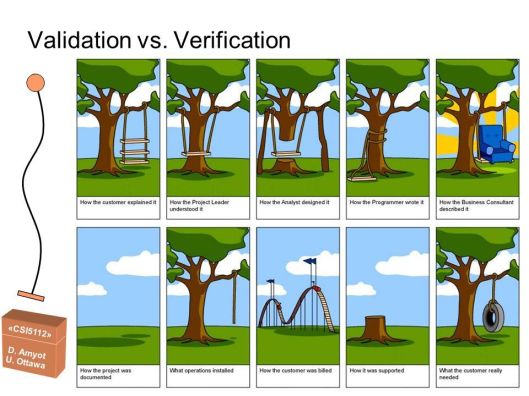

Democratization of expertise – the manner in which codes are applied has a very large impact on solutions. The overall process is often called a workflow, encapsulating activities starting with problem conception, meshing, modeling choices, code input, code execution, data analysis and visualization. One of the problems that has arisen is the use of codes by non-experts. Increasingly, code users are simply not sophisticated and treat codes like black boxes. Many refer to this as the democratization of the simulation capability, which is generally beneficial. On the other hand, we increasingly see calculations conducted by novices who are generally ignorant of vast swaths of the underlying science. This characteristic is keenly related to a lack of V&V focus and loose standards of acceptance for calculations. Calibration is becoming more prevalent again, and distinctions between calibration and validation are vanishing anew. The creation of broadly available simulation tools must be coupled to first-rate practices and appropriate professional education. In both of these veins the current trends are completely in the wrong direction. V&V practices are in decline and recession. Professional education is systematically getting worse as the educational mission of universities is attacked and diminished, along with the role of elites in society.

Last week I tried to envision a better path forward for scientific computing. Unfortunately, a true better path flows invariably through a better path for science itself and the Nation as a whole. Ultimately scientific computing, and science more broadly, is dependent on the health of society in the broadest sense. It also depends on leadership and courage, two other attributes we are lacking in almost every respect. Our society is not well; the problems we are confronting are deep and perhaps the most serious crisis since the Civil War. I believe that historians will look back to 2016-2018, and perhaps longer, as the darkest period in American history since the Civil War. We can’t build anything great when the Nation is tearing itself apart. I hope and pray that it will be resolved before we plunge deeper into the abyss we find ourselves in. We see the forces opposed to knowledge, progress and reason emboldened and running amok. The Nation is presently moving backward and embracing a deeply disturbing and abhorrent philosophy. In such an environment science cannot flourish; it can only survive. We all hope the darkness will lift and we can again move forward toward a better future; one with purpose and meaning where science can be a force for the betterment of society as a whole.

It would really be great to be starting 2018 feeling good about the work I do. Useful work that impacts important things would go a long way toward achieving this. I’ve put some thought into considering what might constitute work having these properties. This has two parts: what work would be useful and impactful in general, and what would be important to contribute to. A necessary subtext to this conversation is the conclusion that most of the work we are doing in scientific computing today is neither useful nor impactful, and nothing important is at stake. This alone is a rather bold assertion. Simply put, as a Nation and society we are not doing anything aspirational, nothing big. This shows up in the lack of substance in the work we are paid to pursue. More deeply, I believe that if we did something big and aspirational, the utility and impact of our work would simply sort itself out as part of a natural order.

The march of science in the 20th Century was deeply impacted by international events, several World Wars and a Cold (non) War that spurred National interests in supporting science and technology. The twin projects of the atom bomb and the nuclear arms race along with space exploration drove the creation of much of the science and technology today. These conflicts steeled resolve and purpose, and granted the resources needed for success. They were important enough that efforts were earnest. Risks were taken because risk is necessary for achievement. Today we don’t take risks because nothing important is at stake. We can basically fake results and market progress where little or none exists. Since nothing is really that essential, bullshit reigns supreme.

resistance was not real. Ironically the Soviets were ultimately defeated by bullshit. The Strategic Defense Initiative, or Star Wars, bankrupted the Soviets. It was complete bullshit and never had a chance to succeed. This was a brutal harbinger of today’s World where reality is optional, and marketing is the coin of the realm. Today American power seems unassailable. This is partially true and partially over-confidence. We are not on our game at all, and far too much of our power is based on bullshit. As a result, we can basically just pretend to try, and actually not execute anything with substance and competence. This is where we are today; we are doing nothing important, and wasting lots of time and money in the process.

The result of the current model is a research establishment that only goes through the motions and does little or nothing. We make lots of noise and produce little substance. Our nation deeply needs a purpose that is greater. There are plenty of worthier National goals. If war-making is needed, Russia and China are still worthy adversaries. For some reason, we have chosen to capitulate to Putin’s Russia simply because they are an ally against the non-viable threat of Islamic fundamentalism. This is a completely insane choice that is only rhetorically useful. If we want peaceful goals, there are challenges aplenty. Climate change and weather are worthy problems to tackle, requiring both scientific understanding and societal transformation to conquer. Creating clean and renewable energy that does not create horrible environmental side-effects remains unsolved. Solving the international needs for food and prosperity for mankind is always there. Scientific exploration, and particularly space, remains an unconquered frontier. Medicine and genetics offer new vistas for scientific exploration. All of these areas could transform the Nation in broad ways socially and economically. All of these could meet broad societal needs. More to the point of my post, all need scientific computing in one form or another to fully succeed. Computing always works best as a useful tool employed to help achieve objectives in the real World. The real-World problems provide constraints and objectives that spur innovation and keep the enterprise honest.

Instead our scientific computing is being applied as a shallow marketing ploy to shore up a vacuous program. Nothing really important or impactful is at stake. The applications for computing are mostly make-believe and amount to nothing of significance. The marketing will tell you otherwise, but the lack of gravity for the work is clear and poisons the work. The result of this lack of gravity is phony goals and objectives that have the look and feel of impact, but contribute nothing toward an objective reality. This lack of contribution comes from the deeper malaise of purpose as a Nation, and science’s role as an engine of progress. With little or nothing at stake the tools used for success suffer, and scientific computing is no different. The standards of success simply are not real, and lack teeth. Even stockpile stewardship is drifting into the realm of bullshit. It started as a worthy program, but over time it has been allowed to lose its substance. Political and financial goals have replaced science and fact, and the goals of the program have lost their connection to objective reality.

With something real at stake, we would still be chasing faster computers, but the faster computers would not be the primary focus. We would focus on using computing to solve problems that were important. We would focus on making computers that were useful first and foremost. We would want computers that were faster as long as they enabled progress on problem solving. As a result, efforts would be streamlined toward utility. We would not throw vast amounts of effort into making computers faster just to make them faster (this is what is happening today; there is no rhyme or reason to exascale other than “faster is like better, duh!”). Utility means that we would honestly look at what is limiting problem solving and put our efforts into removing those limits. The effects of this dose of reality on our current efforts would be stunning; we would see a wholesale change in our emphasis and focus away from hardware. Computing hardware would take its proper role as an important tool for scientific computing and no longer be the driving force. The fact that hardware is a driving force for scientific computing is one of the clearest indicators of how unhealthy the field is today.

The current computing focus is only on porting old codes to new computers, a process that keeps old models, methods and algorithms in place. This is one of the most corrosive elements in the current mix. The porting of old codes is the utter abdication of intellectual ownership. These old codes are scientific dinosaurs that freeze antiquated models, methods and algorithms in place while squashing progress. Worse yet, the skillsets necessary for improving the most valuable and important parts of modeling and simulation are allowed to languish. This is worse than simply choosing a less efficient road; this is going backwards. When we need to turn our attention to serious real work, our scientists will not be ready. These choices are dooming an entire generation that could have been making breakthroughs to simply become caretakers. To be proper stewards of our science we need to write new codes containing new models using new methods and algorithms. Porting codes turns our scientists into mindless monks simply transcribing sacred texts without any depth of understanding. It is a recipe for transforming our science into magic. It is the recipe for defeat and the passage away from the greatness we once had.

My work day is full of useless bullshit. There is so much bullshit that it has choked out the room for inspiration and value. We are not so much managed as controlled. This control comes from a fundamental distrust of each other, to a degree that any independent ideas are viewed as dangerous. This realization has come upon me in the past few years. It has also occurred to me that this could simply be a mid-life crisis manifesting itself, but the evidence seems to indicate that it is something more significant (look at the bigger picture of the constant crisis my Nation is in). My mid-life attitudes are simply much less tolerant of time-wasting activities with little or no redeeming value. You realize that your time and energy are limited; why waste them on useless things?

I read a book that had a big impact on my thinking, “The Subtle Art of Not Giving a Fuck” by Mark Manson. In a nutshell, the book says that you have a finite number of fucks to give in life and you should optimize your life by mindfully not giving a fuck about unimportant things. This gives you the time and energy to actually give a fuck about things that actually matter. The book isn’t about not caring; it is about caring about the right things and dismissing the wrong things. What I realized is that increasingly my work isn’t competing for my fucks; my employers just assume that I will spend my limited fucks on complete bullshit out of duty. It is actually extremely disrespectful of me and my limited time and effort. One conclusion is that the “bosses” (the Lab, the Department of Energy) do not give enough of a fuck about me to treat my limited time and energy with respect and make sure my fucks actually matter.

If we look at work, it might seem that an inspired workforce would be a benefit worth creating. People would work hard and create wonderful things because of the depth of their commitment to a deeper purpose. An employer would benefit mightily from such an environment, and the employees could flourish, brimming with satisfaction and growth. With all these benefits, we should expect the workplace to naturally create the conditions for inspiration. Yet this is not happening; the conditions are the complete opposite. The reason is that inspired employees are not entirely controlled. Creative people do things that are unexpected and unplanned. The job of managing a workplace like this is much harder. In addition, mistakes and bad things happen too. Failure and mistakes are an inevitable consequence of hard-working, inspired people. This is the thing that our workplaces cannot tolerate. The lack of control and the unintended consequences are unacceptable. Fundamentally this stems from a complete lack of trust. Our employers do not trust their employees at all. In turn, the employees do not trust the workplace. It is a vicious cycle that drags inspiration under and smothers it. The entire environment is overflowing with micromanagement, control, suspicion and doubt.

If we can’t say NO to all this useless stuff, we can’t say YES to things either. My work and time budget is completely stocked up with non-optional things that I should say NO to. They are largely useless and produce no value. Because I can’t say NO, I can’t say YES to something better. My employer is sending me a message with very clear emphasis: we don’t trust you to make decisions. Your ideas are not worth working on. You are expected to implement other people’s ideas no matter how bad they are. You have no ability to steer the ideas to be better. Your expertise has absolutely no value. A huge part of this problem is the ascendency of the management class as the core of organizational value. We are living in the era of the manager; the employee is a cog and not valued. Organizations voice platitudes toward the employees, but they are hollow. The actions of the organization spell out their true intent. Employees are not to be trusted; they are to be controlled, and they need to do what they are told to do. Inspired employees would do things that are not intended, and take organizations in new directions, focused on new things. This would mean losing control and changing plans. More importantly, the value of the organization would move away from the managers and toward the employees. Managers are much happier with employees that are “seen and not heard”.