From the bad things and bad people, you learn the right way and right direction towards the successful life.

― Ehsan Sehgal

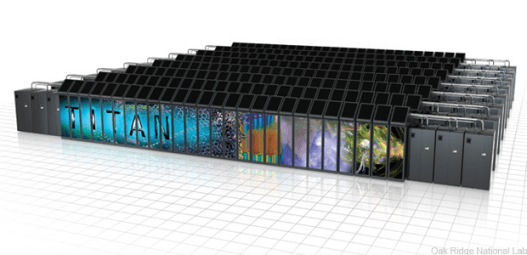

Computational science is an extremely powerful set of disciplines for conducting scientific investigations. The end result of computational science is usually grounded in the physical sciences and engineering, but it depends on a chain of expertise spanning much of modern science. Doing computational science well depends completely on all of these disparate disciplines working in concert. A big area of focus these days is the supercomputers being used. The predicate for acquiring these immensely expensive machines is the improvement in scientific and engineering product arising from their use. While this should be true, getting across this finish line requires a huge chain of activities to be done correctly.

Let’s take a look at all the things we need to do right. Computer engineering and computer science are closest to the machines needed for computational science. These disciplines make these exotic computers accessible and useful for domain science and engineering. A big piece of this work is computer programming and software engineering. The computer program is a way of expressing mathematics in a form the computer can operate on. Writing efficient and correct computer programs is a difficult endeavor all by itself. Mathematics is the language of physics and engineering and is essential for the conduct of computing. Mathematics is a middle layer of work between computers and their practical utility. It is a deeply troubling and ironic trend that applied mathematics is disappearing from computational science. As the bridge between the computer and its practical use, it forms the basis for conducting and believing the computed results. Instead of being an area of increased focus, applied math is disappearing into either the maw of computer programming or domain science and engineering. It is being lost as a separate contributor. Finally, we have the end result in science and engineering. Quite often we lose sight of computers and computing as a mere tool that must follow its specific rules for quality, reliable results. Too often the computer is treated like it is a magic wand.

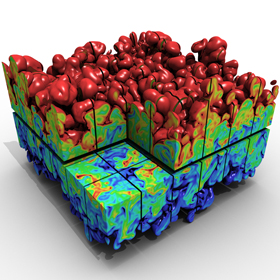

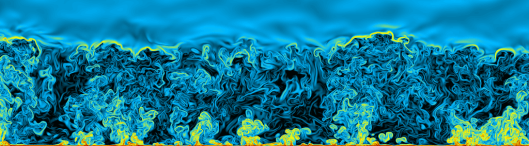

Another common thread to horribleness is the increasing tendency for science and engineering to be marketed. The press release has given way to the tweet, but the sentiment is the same. Science is marketed for the masses who have no taste for the details necessary for high quality work. A deep problem is that this lack of focus and detail is creeping back into science itself. Aspects of scientific and engineering work that used to be utterly essential are becoming increasingly optional. Much of this essential intellectual labor is associated with the hidden aspects of the investigation: the mathematics, checking for correctness, assessment of error, preceding work, various doubts about results and alternative means of investigation. This sort of deep work has been crowded out by flashy graphics, movies and undisciplined demonstrations of vast computing power.

Some of the terrible things we discuss here are simply bad science and engineering. These terrible things would be awful with or without a computer being involved. Other things come from a lack of understanding of how to add computing to an investigation in a quality-focused manner. The failure to recognize the multidisciplinary nature of computational science is often at the root of many of the awful things I will now describe.

Fake is the new real, You gotta keep a lot a shit to yourself.

― Genereux Philip

Without further ado, here are some terrible things to look out for. Every single item on the list will be accompanied by a link to a full blog post expanding on the topic.

- If you follow high performance computing online (institutional sites, Facebook, Twitter) you might believe that the biggest calculations on the fastest computers are the very best science. You are sold the idea that these massive calculations have the greatest impact on the bottom line. This is absolutely not the case. These calculations are usually one-off demonstrations with little or no technical value. Almost everything of enduring value happens on the computers being used by the rank and file to do the daily work of science and engineering. These press release calculations are simply marketing. They almost never have the pedigree or hard-nosed quality work necessary for good science and engineering. – https://williamjrider.wordpress.com/2016/11/17/a-single-massive-calculation-isnt-science-its-a-tech-demo/, https://williamjrider.wordpress.com/2017/02/10/it-is-high-time-to-envision-a-better-hpc-future/

- The second thing you come across is the notion that a calculation on a larger, finer mesh is better than one on a coarser mesh. In a naïve, pedestrian analysis this would seem to be utterly axiomatic. The truth is that computational modeling is an assembly of many things all working in concert. This is another example of proof by brute force. In the best circumstances this would hold, but most modeling hardly takes place under the best conditions. The proposition is that the fine mesh allows one to include all sorts of geometric details, so the computational world looks more like reality. This is assumed a priori. What isn’t usually discussed is where the challenge in modeling lies. Is geometric detail driving uncertainty? What is the biggest challenge, and is the modeling focused there? – https://williamjrider.wordpress.com/2017/07/21/the-foundations-of-verification-solution-verification/, https://williamjrider.wordpress.com/2017/03/03/you-want-quality-you-cant-handle-the-quality/, https://williamjrider.wordpress.com/2014/04/04/unrecognized-bias-can-govern-modeling-simulation-quality/

- In concert with these two horrible trends, you often see results presented as the product of a single massive calculation that magically unveils the mysteries of the universe. This is computing as a magic wand, and it has very little to do with science or engineering. This simply does not happen. Real science and engineering take hundreds or thousands of calculations. There is an immense amount of background work needed to create high quality results. A great deal of modeling is associated with bounding uncertainty or bounding the knowledge we possess. A single calculation is incapable of this sort of rigor and focus. If you see a single massive calculation as the sole evidence of work, you should smell and call “bullshit”. – https://williamjrider.wordpress.com/2016/11/17/a-single-massive-calculation-isnt-science-its-a-tech-demo/

- One of the key elements in modern computing is the complete avoidance of discussing how the equations in the code are being solved. The notion is that this detail has no importance. On the one hand, this is evidence of progress: our methods for solving equations are pretty damn good. On the other hand, the methods and the code itself are still immensely important details, and constitute part of the effective model. There seems to be a mentality that the methods and codes are so good that this sort of thing can be ignored. All one needs is a sufficiently fine mesh, and the results are pristine. This is almost always false. What this almost willful ignorance shows is a lack of sophistication. The methods are immensely important to the results, and we are a very long way from being able to afford the sort of ignorance of this detail that is rampant. The powers that be want you to believe that the method disappears from importance because the computers are so fast. Don’t fall for it. – https://williamjrider.wordpress.com/2017/05/19/we-need-better-theory-and-understanding-of-numerical-errors/, https://williamjrider.wordpress.com/2017/05/12/numerical-approximation-is-subtle-and-we-dont-do-subtle/

- The George Box maxim about models being wrong but useful is essential to keep in mind. This maxim is almost uniformly ignored in the high-performance computing bullshit machine. The politically correct view is that the super-fast computers will solve the models so accurately that we can stop doing experiments. The truth is that eventually, if we are doing everything correctly, the models will be solved with great accuracy and their incorrectness will be made evident. I strongly suspect that we are already there in many cases; the models are being solved too accurately and the real answer to our challenges is building new models. Model building as an enterprise is being systematically disregarded in favor of chasing faster computers. We need far greater balance and focus on building better models worthy of the computers they are being solved on. We need to build the models that are needed for better science and engineering befitting the work we need to do. – https://williamjrider.wordpress.com/2017/09/01/if-you-dont-know-uncertainty-bounding-is-the-first-step-to-estimating-it/

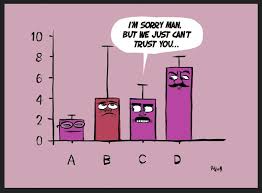

- Calculational error bars are an endangered species. We never see them in practice even though we know how to compute them. They should simply be a routine element of modern computing. They are almost never demanded by anyone, and their lack never precludes publication. It certainly never precludes a calculation being promoted as marketing for computing. If I were cynically minded, I might even say that error bars, when used, work against marketing the calculation. The implicit message in the computing marketing is that the calculations are so accurate that they are basically exact, with no error at all. If you don’t see error bars or some explicit discussion of uncertainty you should see the calculation as flawed, and potentially simply bullshit. – https://williamjrider.wordpress.com/2017/07/07/good-validation-practices-are-our-greatest-opportunity-to-advance-modeling-and-simulation/, https://williamjrider.wordpress.com/2017/09/22/testing-the-limits-of-our-knowledge/, https://williamjrider.wordpress.com/2017/04/06/validation-is-much-more-than-uncertainty-quantification/

- One way for a calculation to seem really super valuable is to declare that it is direct numerical simulation (DNS). Sometimes this is an utterly valid designator. The other term that follows DNS is “first principles”. Each of these terms seeks to endow the calculation with legitimacy that it may, or may not, deserve. One of the biggest problems with DNS is the general lack of evidence for quality and legitimacy. There is a broad spectrum of the technical world that seems to be OK with treating DNS as equivalent to (or even better than) experiments. This is tremendously dangerous to the scientific process. DNS and first principles are still based on solving a model, and models are always wrong. This doesn’t say that DNS isn’t useful, but this utility needs to be proven and bounded by uncertainty. – https://williamjrider.wordpress.com/2017/11/02/how-to-properly-use-direct-numerical-simulations-dns/

- Most press releases are rather naked in the implicit assertion that the bigger computer gives a better answer. This is treated as being completely axiomatic. As such there is no evidence provided to underpin this assertion. Usually some colorful graphics, or color movies beautifully rendered, accompany the calculation. Their coolness is all the proof we need. This is not science or engineering even though this mode of delivery dominates the narrative today. – https://williamjrider.wordpress.com/2017/01/20/breaking-bad-priorities-intentions-and-responsibility-in-high-performance-computing/, https://williamjrider.wordpress.com/2014/09/19/what-would-we-actually-do-with-an-exascale-computer/, https://williamjrider.wordpress.com/2014/10/03/colorful-fluid-dynamics/

- Modeling is the use of mathematics to connect reality to theory and understanding. Mathematics is translated into methods and algorithms implemented in computer code. It is ironic that the mathematics that forms the bridge between the physical world and the computer is increasingly ignored by science. Applied mathematics has been a tremendous partner for physics, engineering and computing throughout the history of computational science. This partnership has waned in priority over the last thirty years. Less and less applied math is called upon; it is being replaced by computer programming or domain science and engineering. Our programs seem to think that the applied math part of the problem is basically done. Nothing could be further from the truth. – https://williamjrider.wordpress.com/2014/10/16/what-is-the-point-of-applied-math/, https://williamjrider.wordpress.com/2016/09/27/the-success-of-computing-depends-on-more-than-computers/

- A frequent way of describing a computation is to describe the mesh as defining the solution. Little else is given about the calculation, such as the equations being solved or how the equations are being approximated. Frequently, the fact that the solutions are approximate is left out. This fact is damaging to the accuracy narrative of massive computing. The designed message is that the massive computer is so powerful that the solution to the equations is effectively exact, and that the equations themselves basically describe reality without error. All of this is in service of saying computing can replace experiments or real-world observations. The entire narrative is anathema to science and engineering, doing each a great disservice. – https://williamjrider.wordpress.com/2015/07/03/modeling-issues-for-exascale-computation/

- Computational science is often described in terms that are not consistent with the rest of science. We act like it is somehow different in a fundamental way. Computers are just tools for doing science, allowing us to solve models of reality far more generally than analytical methods can. With all of this power comes a lot of tedious detail needed to do things with quality. This quality comes from the skillful execution of the entire chain of activities described at the beginning of this post. These details all need to be done right to get good results. One of the biggest problems in the current computing narrative is ignorance of the huge set of activities bridging a model of reality and the computer itself. The narrative wants to ignore all of this because it diminishes the sense that these computers are magical in their ability. The power isn’t magic, it is hard work; success is not a foregone conclusion, and everyone should ask for evidence, not take their word for it. – https://williamjrider.wordpress.com/2016/12/22/verification-and-validation-with-uncertainty-quantification-is-the-scientific-method/
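
To make the point about calculational error bars concrete, here is a minimal sketch of one standard way to compute them: Richardson extrapolation over three systematically refined meshes. It assumes a constant refinement ratio, monotone convergence, and results in the asymptotic range. The function name `richardson_error_bar`, its parameters, and the sample numbers are hypothetical, and the safety factor of 1.25 is the conventional choice from grid convergence index practice rather than anything taken from the posts linked above.

```python
import math


def richardson_error_bar(f_coarse, f_medium, f_fine, r=2.0, safety=1.25):
    """Return (observed order, extrapolated value, error bar on f_fine).

    f_coarse, f_medium, f_fine: the same scalar quantity computed on three
    meshes, each refined by the constant ratio r (hypothetical inputs).
    """
    d_fine = f_medium - f_fine      # change between the two finest meshes
    d_coarse = f_coarse - f_medium  # change between the two coarsest meshes
    if d_fine == 0.0:
        raise ValueError("fine and medium results are identical; no estimate")
    ratio = d_coarse / d_fine
    if ratio <= 1.0:
        # Oscillating or diverging sequence: the simple estimate is invalid.
        raise ValueError("results are not converging monotonically")
    # Observed order of convergence from the ratio of successive changes.
    p = math.log(ratio) / math.log(r)
    # Estimated discretization error remaining in the fine-mesh result.
    delta = (f_fine - f_medium) / (r**p - 1.0)
    f_extrapolated = f_fine + delta
    error_bar = safety * abs(delta)
    return p, f_extrapolated, error_bar


if __name__ == "__main__":
    # Manufactured data from f(h) = 1 + 0.5 * h**2 at h = 0.4, 0.2, 0.1.
    p, f_star, bar = richardson_error_bar(1.08, 1.02, 1.005)
    print(f"observed order {p:.3f}, extrapolated {f_star:.5f}, +/- {bar:.5f}")
```

Because the sample values are manufactured from a second-order error model, the sketch should recover an observed order near 2 and an extrapolated value near 1. Real calculations rarely behave this cleanly, and a failed monotonicity check is itself useful evidence that the mesh is not yet in the asymptotic range.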

Taking the word of the marketing narrative is injurious to high quality science and engineering. The narrative seeks to defend the idea that buying these super expensive computers is worthwhile, and magically produces great science and engineering. In this view, the path to advancing the impact of computational science flows dominantly through computing hardware. This is simply a deeply flawed and utterly naïve perspective. Great science and engineering is hard work and never a foregone conclusion. Getting high quality results depends on spanning the full range of disciplines associated with computational science, adaptively, as evidence and results demand. We should always ask hard questions of scientific work, and demand hard evidence for claims. Press releases and tweets are renowned for simply being cynical advertisements lacking all rigor and substance.

One reason for elaborating upon things that are superficially great, but really terrible is cautionary. The current approach allows shitty work to be viewed as successful by receiving lots of attention. The bad habit of selling horrible low-quality work as success destroys progress and undermines accomplishing truly high-quality work. We all need to be able to recognize these horrors and strenuously reject them. If we start to effectively police ourselves perhaps this plague can be driven back, and progress can flourish.

The thing about chameleoning your way through life is that it gets to where nothing is real.

― John Green

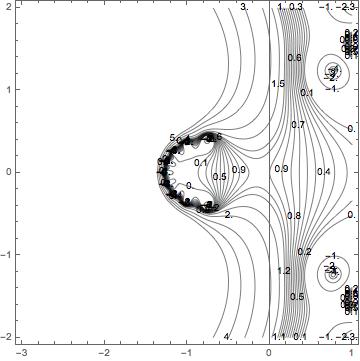

developments for solving hyperbolic PDEs in the 1980’s. For a long time this was one of the best methods available to solve the Euler equations. It still outperforms most of the methods in common use today. For astrophysics, it is the method of choice, and it has also made major inroads into the weather and climate modeling communities. In spite of having over 4000 citations, I can’t help but think that this paper wasn’t as influential as it could have been. This is saying a lot, but I think this is completely true. This is partly due to its style, and its relative difficulty as a read. In other words, the paper is not as pedagogically effective as it could have been. The most complex and difficult to understand version of the method is presented in the paper. The paper could have used a different approach to great effect, perhaps by providing a simplified version to introduce the reader and then delivering the more complex approach as a specific instance. Nonetheless, the paper was a massive milestone in the field.

Riemann solution. Advances that occurred later greatly simplified and clarified this presentation. This is a specific difficulty of being an early adopter of methods: the clarity of presentation and understanding is dimmed by purely narrative effects. Many of these shortcomings have been addressed in the recent literature discussed below.

elaborate ways of producing dissipation while achieving high quality. For very nonlinear problems this is not enough. The paper describes several ways of adding a little bit more; one of these is shock flattening, and another is an artificial viscosity. Rather than use the classical von Neumann-Richtmyer approach (that really is more like the Riemann solver), they add a small amount of viscosity using a technique developed by Lapidus appropriate for conservation form solvers. There are other techniques such as grid-jiggling that only really work with PPMLR and may not have any broader utility. Nonetheless, aspects of the thought process may be useful.

Riemann solvers with clarity and refinement hadn’t been developed by 1984. Nevertheless, the monolithic implementation of PPM has been a workhorse method for computational science. Through Paul Woodward’s efforts it is often the first real method to be applied to brand new supercomputers, and generates the first scientific results of note on them.

A year ago, I sat in one of my managers’ offices seething in anger. After Trump’s election victory, my emotions shifted from despair to anger seamlessly. At that particular moment, it was anger that I felt. How could the United States possibly have elected this awful man President? Was the United States so completely broken that Donald Trump was a remotely plausible candidate, much less the victor?

Apparently, the answer is yes, the United States is that broken. I said something to the effect that we too are to blame for this horrible moment in history. I knew that both of us voted for Clinton, but felt that we played our own role in the election of our reigning moron-in-chief. Today, a year into this national nightmare, the nature of our actions leading to this unfolding national and global tragedy is taking shape. We have grown to accept outright incompetence in many things, and now we have a genuinely incompetent manager as President. Lots of incompetence is accepted daily without even blinking; I see it every single day. We have a system that increasingly renders the competent incompetent through brutish compliance with directives born of broad-based societal dysfunction.

science, or really anything other than marketing himself. He is an utterly self-absorbed anti-intellectual completely lacking empathy and the basic knowledge we should expect him to have. The societal destruction wrought by this buffoon-in-chief is profound. Our most important institutions are being savaged. Divisions in society are being magnified and we stand on the brink of disaster. The worst thing is that this disaster is virtually everyone’s fault; whether you stand on the right or the left, you are to blame. The United States was in a weakened state and the Trump virus was poised to infect us. Our immune system was seriously compromised and failed to reject this harmful organism.

argument about the need to diminish it. The result has been a steady march toward dysfunction and poor performance along with deep-seated mistrust, if not outright disdain.

The Democrats are no better, other than some basic human capacity for empathy. For example, the Clintons were quite competent, but competence is something we as a nation don’t need any more, or even believe in. Americans chose the incompetent candidate for President over the competent one. At the same time the Democrats feed into the greedy and corrupt nature of modern governance with a fervor only exceeded by the Republicans. They are what my dad called “limousine liberals” and really cater to the rich and powerful first and foremost while appealing to some elements of compassion (it is still better than “limousine douchebags” on the right). As a result the Democratic party ends up being only slightly less corrupt than the Republican party while offering none of the cultural red meat that drives the conservative culture warriors to the polls.

correctness. They are indeed “snowflakes” who are incapable of debate and standing up for their beliefs. When they don’t like what someone has to say, they attack them and completely oppose the right to speak. The lack of tolerance on the left is one of the forces that powered Trump to the White House. It did this through a loss of any moral high ground, and the production of a divided and ineffective liberal movement. The left has science, progress, empathy and basic human decency on its side, yet it continues to lose. A big part of its losing strategy is the failure of its members to support each other and engage in an active dialog on the issues they care so much about.

science. Both are wrought by the destructive tendency of the Republican party that makes governing impossible. They are a party of destruction, not creation. When Republicans are put in power they can’t do anything; their entire being is devoted to taking things apart. The Democrats are no better because of their devotion to compliance, regulation and compulsive rule following without thought. This tendency is paired with liberals’ inability to tolerate any discussion or debate over a litany of politically correct talking points.

If you are doing anything of real substance and performing at a high level, fuck ups are inevitable. The real key to the operation is the ability of technical competence to be faked. Our false confidence in the competent execution of our work is a localized harbinger of “fake news”.

on raising our level of excellence across the board in science and engineering. Our technical standards should be higher than ever because of the difficulty and importance of this enterprise. Requiring 100% success might seem to be a way to do this, but it isn’t.

This is the place where we get to the core of the ascent of Trump. When we lower our standards on leadership we get someone like Trump. The lowering of standards has taken place across the breadth of society. This is not simply National leadership, but corporate and social leadership. Greedy, corrupt and incompetent leaders are increasingly tolerated at all levels of society. At the Labs where I work, the leadership has to say yes to the government, no matter how moronic the direction is. If you don’t say yes, you are removed and punished. We now have leadership that is incapable of engaging in active discussion about how to succeed in our enterprise. The result is labs that simply take the money and execute whatever work they are given without regard for the wisdom of the direction. We now have the blind leading the spineless, and the blind are walking us right over the cliff. Our dysfunctional political system has finally shit the bed and put a moron in the White House. Everyone knows it, and yet a large portion of the population is completely fooled (or simply too foolish or naïve to understand how bad the situation is).

administration lessens the United States’ prestige. The World had counted on the United States for decades, but cannot any longer. We have made a decision as a nation that disqualifies us from a position of leadership. The Republican party has the greatest responsibility for this, but the Democrats are not blameless. Our institutional leadership shares the blame too. Places like the Labs where I work are being destroyed one incompetent step at a time. All of us need to fix this.

The saddest thing about DNS is the tendency for scientists’ brains to almost audibly click into the off position when it’s invoked. All one has to say is that their calculation is a DNS and almost any question or doubt leaves the room. No need to look deeper, or think about the results, we are solving the fundamental laws of physics with stunning accuracy! It must be right! They will assert, “this is a first principles calculation,” and predictive at that. Simply marvel at the truths waiting to be unveiled in the sea of bits. Add a bit of machine learning or artificial intelligence to navigate the massive dataset produced by DNS (the datasets are so fucking massive, they must have something good! Right?) and you have the recipe for the perfect bullshit sandwich. How dare some infidel cast doubt or uncertainty on the results! Current DNS practice is a religion within the scientific community, and brings an intellectual rot into the core of computational science. DNS reflects some of the worst wishful thinking in the field, where the desire for truth and understanding overwhelms good sense. A more damning assessment would be a tendency to submit to intellectual laziness when pressed by expediency, or difficulty in progress.

Let’s unpack this issue a bit and get to the core of the problems. First, I will submit that DNS is an unambiguously valuable scientific tool. A large body of work valuable to a broad swath of science can benefit from DNS. We can study our understanding of the universe in myriad ways at phenomenal detail. On the other hand, DNS is not ever a substitute for observations. We do not know the fundamental laws of the universe with such certainty that the solutions provide an absolute truth. The laws we know are models plain and simple. They will always be models. As models, they are approximate and incomplete by their basic nature. This is how science works: we have a theory that explains the universe, and we test that theory (i.e., model) against what we observe. If the model reproduces the observations with high precision, the model is confirmed. This model confirmation is always tentative and subject to being tested with new or more accurate observations. Solving a model does not replace observations, ever, and some uses of DNS are masking laziness or limitations in observational (experimental) science.

approximate. The approximate solution is never free of numerical error. In DNS, the estimate of the magnitude of approximation error is almost universally lacking from results.

Unfortunately, we also need to address an even more deplorable DNS practice. Sometimes people simply declare that their calculation is a DNS without any evidence to support this assertion. Usually this means the calculation is really, really, really, super fucking huge and produces some spectacular graphics with movies and color (rendered in super groovy ways). Sometimes the models being solved are themselves extremely crude or approximate. For example, the Euler equations are being solved with or without turbulence models instead of the Navier-Stokes equations in cases where turbulence is certainly present. This practice is so abominable as to be almost a cartoon of credibility. This is the use of proof by overwhelming force. Claims of DNS should always be taken with a grain of salt. When the claims take the form of marketing they should be met with extreme doubt, since it is a form of bullshitting that tarnishes those working to practice scientific integrity.

Part of doing science correctly is honesty about challenges. Progress can be made with careful consideration of the limitations of our current knowledge. Some of these limits are utterly intrinsic. We can observe reality, but various challenges limit the fidelity and certainty of what we can sense. We can model reality, but these models are always approximate. The models encode simplifications and assumptions. Progress is made by putting these two forms of understanding into tension. Do our models predict or reproduce the observations to within their certainty? If so, we need to work on improving the observations until they challenge the models. If not, the models need to be improved, so that the observations are reproduced. The current use of DNS short-circuits this tension and acts to undermine progress. It wrongly puts modeling in the place of reality, which only works to derail necessary work on improving models, or work to improve observation. As such, poor DNS practices are actually stalling scientific progress.

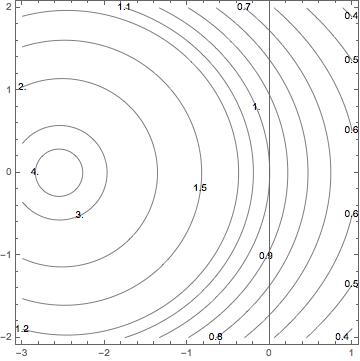

The standards of practice in verification of computer codes and applied calculations are generally appalling. Most of the time when I encounter work, I’m just happy to see anything at all done to verify a code. Put differently, most of the published literature accepts a slipshod practice in terms of verification. In some areas like shock physics, the viewgraph norm still reigns supreme. It actually rules supreme in a far broader swath of science, but you talk about what you know. The missing element in most of the literature is quantitative analysis of results. Even when the work is better and includes detailed quantitative analysis, the work usually lacks a deep connection with numerical analysis results. The typical best practice in verification only includes the comparison of the observed rate of convergence with the theoretical rate of convergence. Worse yet, the result is asymptotic and codes are rarely practically used with asymptotic meshes. Thus, standard practice is largely superficial, and only scratches the surface of the connections with numerical analysis.
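To make the "observed versus theoretical rate of convergence" comparison concrete, here is a minimal sketch of what that basic code verification step looks like. The test problem (a central difference applied to sin(x)), the function names, and the mesh spacings are all assumptions chosen for illustration; a real code would substitute its own discretization and an exact or manufactured solution.

```python
import math


def central_difference(f, x, h):
    """Second-order central-difference approximation to f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


def observed_orders(errors, ratio):
    """Observed convergence rate between each pair of successive meshes.

    errors: error magnitudes ordered from coarsest to finest mesh.
    ratio:  constant refinement ratio (coarse spacing / fine spacing).
    """
    return [
        math.log(coarse / fine) / math.log(ratio)
        for coarse, fine in zip(errors, errors[1:])
    ]


# Hypothetical test problem: d/dx sin(x) at x = 1, with exact answer cos(1).
spacings = [0.1 / 2**k for k in range(4)]
exact = math.cos(1.0)
errors = [abs(central_difference(math.sin, 1.0, h) - exact) for h in spacings]
orders = observed_orders(errors, ratio=2.0)

theoretical_order = 2.0  # expected rate for a central difference
for h, err, p in zip(spacings[1:], errors[1:], orders):
    print(f"h = {h:.5f}  error = {err:.3e}  observed order = {p:.3f}")
```

Note that the sketch reports the error magnitudes alongside the rates. The rate only matches theory once the mesh is fine enough to be asymptotic, which is exactly the regime practical calculations rarely reach.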

One of the things to understand is that code verification also contains a complete accounting of the numerical error. This error can be used to compare methods with “identical” orders of accuracy for levels of numerical error, which can be useful in making decisions about code options. By the same token solution verification provides information about the observed order of accuracy. Because the applied problems are not analytical or smooth enough, they generally can’t be expected to provide the theoretical order of convergence. The rate of convergence is then an auxiliary result of the solution verification exercise just as the error is an auxiliary result for code verification. It contains useful information on the solution, but it is subservient to the error estimate. Conversely, the error provided in code verification is subservient to the order of accuracy. Nonetheless, the current practice simply scratches the surface of what could be done via verification and its unambiguous ties to numerical analysis.
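The solution verification side can be sketched the same way. With no exact solution available, three systematically refined calculations give both an observed order and an error estimate through Richardson extrapolation. This is one common approach, not the only one, and the setup below is an assumption for illustration: a trapezoid-rule integral stands in for the "applied calculation", the function names are hypothetical, and the formula assumes a constant refinement ratio and monotone convergence.

```python
import math


def trapezoid(f, a, b, n):
    """Composite trapezoid rule on n equal intervals (stand-in 'simulation')."""
    h = (b - a) / n
    interior = sum(f(a + i * h) for i in range(1, n))
    return h * (0.5 * f(a) + interior + 0.5 * f(b))


def richardson_estimate(coarse, medium, fine, ratio):
    """Observed order and fine-mesh error estimate from three solutions.

    Assumes a constant refinement ratio and monotone convergence.
    """
    order = math.log(abs(coarse - medium) / abs(medium - fine)) / math.log(ratio)
    error_fine = abs(fine - medium) / (ratio**order - 1.0)
    return order, error_fine


# Three meshes, each refined by a factor of two.
coarse, medium, fine = (trapezoid(math.exp, 0.0, 1.0, n) for n in (8, 16, 32))
order, estimated_error = richardson_estimate(coarse, medium, fine, ratio=2.0)

# The exact integral (e - 1) is used only to check the estimate afterwards.
true_error = abs(fine - (math.e - 1.0))
print(f"observed order  = {order:.4f}")
print(f"estimated error = {estimated_error:.3e}")
print(f"true error      = {true_error:.3e}")
```

Here the error estimate is the primary product and the observed order is the auxiliary one. On a real applied problem the observed order will often fall well short of the theoretical value, and that shortfall is itself useful information about the solution.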

ted in the shadows for years and years as one of Hollywood’s worst-kept secrets. Weinstein preyed on women with virtual impunity, with his power and prestige acting to keep his actions in the dark. The promise and threat of his power in that industry gave him virtual license to act. The silence of the myriad of insiders who knew about the pattern of abuse allowed the crimes to continue unabated. Only after the abuse came to light broadly and outside the movie industry was it treated as unacceptable. When the abuse stayed in the shadows, and knowledge of it was limited to industry insiders, it continued.

Our current President is a serial abuser of power. Whether it be the legal system, women, business associates or the American people, his entire life is constructed around abuse of power and the privileges of wealth. Many people are his enablers, and nothing enables it more than silence. Like Weinstein, his sexual misconducts are many and well known, yet routinely go unpunished. Others either remain silent or ignore and excuse the abuse as being completely normal.

power and ability to abuse it. They are an entire collection of champion power abusers. Like all abusers, they maintain their power through the cowering masses below them. When we are silent their power is maintained. They are moving to squash all resistance. My training was pointed at the inside of the institutions and instruments of government where they can use “legal” threats to shut us up. They have waged an all-out assault against the news media. Anything they don’t like is labeled as “fake news” and attacked. The legitimacy of facts has been destroyed, providing the foundation for their power. We are now being threatened in order to cut off the supply of facts on which to base resistance. This training was the act of people wanting to rule like dictators in an authoritarian manner.

the set-up is perfect. They are the wolves and we, the sheep, are primed for slaughter. Recent years have witnessed an explosion in the amount of information deemed classified or sensitive. Much of this information is controlled because it is embarrassing or uncomfortable for those in power. Increasingly, information is simply hidden based on non-existent standards. This is a situation that is primed for abuse of power. People in positions of power can hide anything they don’t like. For example, something bad or embarrassing can be deemed to be proprietary or business-sensitive, and buried from view. Here the threats come in handy to make sure that everyone keeps their mouths shut. Various abuses of power can now run free within the system without risk of exposure. Add a weakened free press and you’ve created the perfect storm.

him. No one even asks the question, and the abuse of power goes unchecked. Worse yet, it becomes the “way things are done”. This takes us full circle to the whole Harvey Weinstein scandal. It is a textbook example of unchecked power, and the “way we do things”.

Too often we make the case that their misdeeds are acceptable because of the power they grant to our causes through their position. This is exactly the bargain Trump makes with the right wing, and Weinstein made with the left.

I’d like to be independent, empowered and passionate about work, and I definitely used to be. Instead I find that I’m generally disempowered, compliant and despondent these days. The actions that manage us have this effect, sending the clear message that we are not in control; we are to be controlled, and our destiny is determined by our subservience. With the National environment headed in this direction, institutions like our National Labs cannot serve their important purpose. The situation is getting steadily worse, but as I’ve seen there is always somewhere worse. By the standards of most people I still have a good job with lots of perks and benefits. Most might tell me that I’ve got it good, and I do, but I’ve never been satisfied with such mediocrity. The standard of “it could be worse” is simply an appalling way to live. The truth is that I’m in a velvet cage. This is said with the stark realization that the same forces are dragging all of us down. Just because I’m relatively fortunate doesn’t mean that the situation is tolerable. The quip that things could be worse is simply a way of accepting the intolerable.

beings (people) into a hive where their basic humanity and individuality are lost. Everything is controlled and managed for the good of the collective. Science Fiction is an allegory for society, and the forces of depersonalized control embodied by the Borg have only intensified in our world. Even people working in my chosen profession are under the thrall of a mindless collective. Most of the time it is my maturity and experience as an adult that is called upon. My expertise and knowledge should be my most valuable commodity as a professional, yet they go unused and languish. They come into play in an almost haphazard catch-as-catch-can manner. Most of the time it happens when I engage with someone external. It is never planned or systematic. My management is much more concerned about me being up on my compliance training than productively employing my talents. The end result is the loss of identity and sense of purpose, so that now I am simply the ninth member of the bottom unit of the collective, 9 of 13.

actually manage the work going on and the people doing the work. They are managing our compliance and control, not the work; the work we do is a mere afterthought that increasingly does not need me; any competent person would do. At one time work felt good and important with a deep sense of personal value and accomplishment. Slowly and surely this sense is being undermined. We have gone on a long slow march away from being empowered and valued as contributing individuals. Today we are simply ever-replicable cogs in a machine that cannot tolerate a hint of individuality or personality.

great, and I believe in it. Management should be the art of enabling and working to get the most out of employees. If the system were working properly this would happen. For some reason society has withdrawn its trust in people. Our systems are driven and motivated by fear. The systems are strongly motivated to make sure that people don’t fuck up. A large part of the overhead and lack of empowerment is designed to keep people from making mistakes. A big part of the issue is the punishment meted out for any fuck-ups. Our institutions are mercilessly punished for any mistakes. Honest mistakes and failures are met with negative outcomes and a lack of tolerance. The result is a system that tries to defend itself through caution, training and control of people. Our innate potential is insufficient justification for risking the reaction a fuck-up might generate. The result is an increasingly meek and subdued workforce unwilling to take risks because failure is such a grim prospect.

The same thing is happening to our work. Fear and risk are dominating our decision-making. Human potential, talent, productivity, and lives of value are sacrificed at the altar of fear. Caution has replaced boldness. Compliance has replaced value. Control has replaced empowerment. In the process work has lost meaning, and the ability for an individual to make a difference has disappeared. Resistance is futile, you will be assimilated.

being in the audience. Giving talks is pretty low on the list of reasons, but not in the mind of our overlords, which starts to get at the problems I’ll discuss below. Given the track record of this meeting my expectations were sky-high, and the lack of inspiring ideas left me slightly despondent.

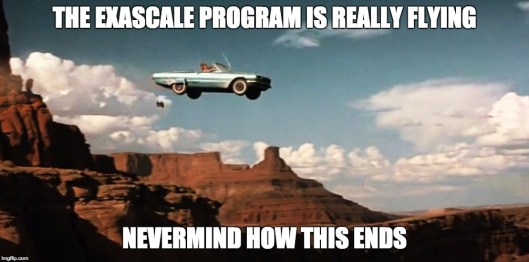

This outcome is bound up with the general lack of intellectual vigor in any public discourse. The same lack of intellectual vigor has put this foolish exascale program in place. Ideas are viewed as counter-productive today in virtually every public square. Alarmingly, science is now suffering from the same ill. Experts and the intellectual elite are viewed unfavorably and their views held in suspicion. Their work is not supported, nor are projects and programs dependent on deep thinking, ideas or intellectual labor. The fingerprints of this systematic dumbing down of our work have reached computational science, and we are reaping a harvest of poisoned fruit. Another sign of the problem is the lack of engagement of our top scientists in driving new directions in research. Today, managers who do not have any active research define new directions. Every year our managers’ work gets further from any technical content. We have the blind leading the sighted and telling them to trust that they can see where we are going. This problem highlights the core of the issue: the only thing that matters today is money. What we spend the money on, and the value of that work to advance science, are essentially meaningless.

Effectively we are seeing the crisis that has infested our broader public sphere moving into science. The lack of intellectual thought and vitality pushing our public discourse to the lowest common denominator is now attacking science. Rather than being integrated into our decisions on research direction, the best scientific judgment is ignored. The experts are simply told to get in line with the right answer or be silent. In addition, the programs defined through this process then feed back to the scientific community, savaging the expertise further. The fact that this science is intimately connected to national and international security should put a sharper point on the topic. We are caught in a vicious cycle, and we are seeing the evidence in the hollowing out of good work at this conference. If one is looking for a poster child for bad research directions, the exascale programs are a good place to look. I’m sure other areas of science are suffering through similar ills. This global effort is genuinely poorly thought through and lacks any sort of intellectual curiosity.

Priority is placed on our existing codes working on the new super-expensive computers. The up-front cost of these computers is the tip of the proverbial cost iceberg. The explicit cost of the computers is their purchase price, their massive electrical bill and the cost of using these monstrosities. The computers are not the computers we want to use; they are the ones we are forced to use. As such, the cost of developing codes on these computers is extreme. These new computers are immensely unproductive environments. They are a huge tax on everyone’s efforts. This sucks the creative air from the room and reduces the ability to do anything else. Since all the things being suffocated by exascale are more useful for modeling and simulation, the ability to actually improve our computational modeling is hurt. The only things that benefit from the exascale program are trivial and already exist as well-defined modeling efforts.

Most of the activity for working scientists is at the boundaries of our knowledge, working to push back the current limits on what is known. The scientific method is there to provide structure and order to the expansion of knowledge. We have well-chosen and well-understood ways to test proposed knowledge. One method of using and testing our theoretical knowledge in science is computational simulation. Within computational work, the use of verification and validation with uncertainty quantification is basically the scientific method in action.

If the uncertainty is irreducible and unavoidable, the problem with not assessing uncertainty and taking an implied value of ZERO for uncertainty becomes truly dangerous.

may prove deadly in rather commonly encountered situations. As systems become more complex and energetic, chaotic character becomes more acute and common. This chaotic character leads to solutions that have natural variability. Understanding this natural variability is essential to understanding the system. Building this knowledge is the first step toward a capability to control and engineer it and, if wise, perhaps reduce it. If one does not understand what the variability is, it cannot be addressed via systematic engineering or accommodation.

systematically is an ever-growing limit for science. We have a major scientific gap open in front of us and we are failing to acknowledge and attack it with our scientific tools. It is simply ignored almost by fiat. Changing our perspective would make a huge difference in experimental and theoretical science, and remove our collective heads from the sand about this matter.

willful uncertainty ignorance. Probably the most common uncertainty to be willfully ignorant of is numerical error. The key numerical error is discretization error, which arises from the need to make a continuous problem discrete and computable. The basic premise of computing is that more discrete degrees of freedom should produce a more accurate answer. By examining the rate at which this happens, the magnitude of the error can be estimated. Other estimates can be had through making some assumptions about the solution and relating the error to the nature of the solution (like the magnitude of estimated derivatives). Other, generally smaller, numerical errors arise from solving systems of equations to a specified tolerance, parallel consistency error and round-off error. In most circumstances these are much smaller than discretization error, but are still non-zero.
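To make the idea of estimating error from the rate of convergence concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions, not a description of any production code: the test problem (integrating sin(x) on [0, π] with the trapezoidal rule), the refinement ratio of 2, and the function names `trapezoid`, `observed_order` and `fine_grid_error_estimate` are all hypothetical choices made for this example. It also assumes the three resolutions are fine enough that the error shrinks at a steady rate. The estimate is the standard Richardson-style one built from three solutions at successively refined resolutions.

```python
import math


def trapezoid(f, a, b, n):
    """Composite trapezoidal rule with n equal subintervals (the 'discrete' problem)."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


def observed_order(coarse, medium, fine, ratio):
    """Observed convergence rate from three solutions refined by a constant ratio."""
    return math.log(abs(coarse - medium) / abs(medium - fine)) / math.log(ratio)


def fine_grid_error_estimate(medium, fine, ratio, order):
    """Richardson-style estimate of the signed error left in the fine solution."""
    return (fine - medium) / (ratio ** order - 1.0)


# Hypothetical test problem: the exact integral of sin(x) on [0, pi] is 2.
ratio = 2
coarse, medium, fine = (trapezoid(math.sin, 0.0, math.pi, n) for n in (16, 32, 64))

order = observed_order(coarse, medium, fine, ratio)
estimate = fine_grid_error_estimate(medium, fine, ratio, order)
true_error = 2.0 - fine  # known here only because the test problem has an exact answer

print(f"observed order of convergence: {order:.3f}")
print(f"estimated error in fine solution: {estimate:.3e}")
print(f"true error in fine solution:      {true_error:.3e}")
```

In a real calculation the exact answer is not available, which is the whole point: the estimate is built only from the computed solutions. The comparison with the true error is included here just to show that, for a smooth problem in the asymptotic range, the estimate is not zero and is not far off.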