It doesn’t matter how beautiful your theory is … If it doesn’t agree with experiment, it’s wrong.

― Richard Feynman

It is an oft-stated maxim that we should grasp the lowest hanging fruit. In real life this often is hidden in plain sight with modeling and simulation being a prime example in my mind. Even a casual observer could see that the emphasis today is focused on computing speed and power as the path to the future. At the same time one can also see that the push for faster computers is foolhardy and hardly comes at an  opportune time. Moore’s law is dead, and may be dead at all scales of computation. It may be the highest hanging fruit pursued at great cost while lower hanging fruits rots away without serious attention, or even conscious neglect. Perhaps nothing typifies this issue more than the state of validation in modeling and simulation.

opportune time. Moore’s law is dead, and may be dead at all scales of computation. It may be the highest hanging fruit pursued at great cost while lower hanging fruits rots away without serious attention, or even conscious neglect. Perhaps nothing typifies this issue more than the state of validation in modeling and simulation.

Validation can be simply stated, but is immensely complex to do correctly. Simply put, validation is the comparison of observations with modeling and simulation results with the intent of understanding the fitness of the model for its intended purpose. More correctly, it is an assessment of modeling correctness, which demands observational data to ground the comparison in reality. It involves deep understanding of experimental and observational science including inherent error and uncertainty. It also involves equally deep understanding of errors and uncertainty of the model. It must be couched in the proper context philosophically including understanding what a model is. Each of these endeavors is in itself a complex and difficult professional activity, and validation is the synthesis of all of it. Being so complex and difficult it is rarely done correctly, and its value is grossly underappreciated. A large part of the reason for this state of affairs is the tendency to completely accept genuinely shoddy validation. I used to give a talk on the validation horrors in the published literature and finding targets for critique basically comes down to looking at almost any paper that does validation. The hard part is finding examples where the validation is done well.

One of the greatest tenets of modeling is the quote by George Box, “all models are wrong, but some are useful.” We have failed to recognize one of the most important, but poorly appreciate maxims of modeling and simulation and corollary to Box’s observation. It is that no amount of computer speed, algorithmic efficiency or accuracy of approximation can make a bad model better. If the model is wrong, solving it faster or more accurately or more efficiently will not improve it. A question that should immediately come to mind “what is useful?” “what is a bad?” and “what is better?” In a deep sense both of these questions are completely answered by a comprehensive validation assessment of the simulation of a model. One needs to define what is bad and what is better. Both concepts depend deeply upon deciding what one wants from a model. What is its point and purpose, and most likely what question is it designed to answer. A question to start things off first understands, “what is a model?”

“What is a model?”

“What is a model?”

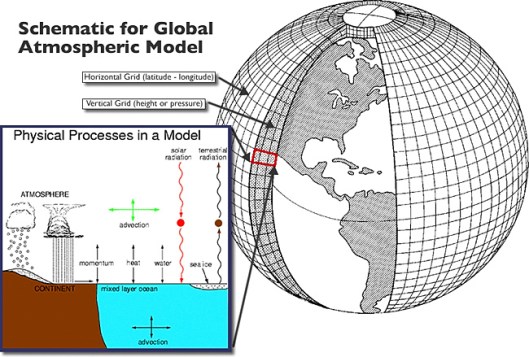

A model is virtually everything associated with a simulation including the code itself, the input to the code, the computer used for the computation, and the analysis of the results. Together all these elements comprise the model. At the core of the model and the code are the theoretical equations being used simulating the real World? More often than not, this is a system of differential equations or something more complex (like integral differential equations for example). These equations are then solved using methods, approximations and algorithms all of which leave their imprint on the results. Putting all of this involves creating a computer code, creating a discrete description of the World and computing that result. Each of these steps constitutes a part of the model. Once the computation has been completed, the results need to analyze and results drawn out of the mountain of numbers produced by the computer. All of these comprise the model we are validating. To separate one thing from another requires good disciplined work and lots of rigor. Usually this discipline is lacking and rigor is replaced by assumptions and slothful practices. In very many cases we are watching willful ignorance in action, or simple negligence. We know how to do validation; we simply don’t demand that people practice it. People are often comforted not knowing and don’t want to actually understand the depth of their structural ignorance.

Science is not about making predictions or performing experiments. Science is about explaining.

― Bill Gaede

Observing and understanding are two different things.

― Mary E. Pearson

To conduct a validation assessment you need observations to compare to. This is an absolute necessity; if you have no observational data, you have no validation. Once the data is at hand, you need to understand how good it is. This means understanding how uncertain the data is. This uncertainty can come from three major aspects of the process: errors in measurement, errors in statistics, and errors in interpretation. In the order of how these were mentioned each of these categories become more difficult to assess and less common to actually be assessed in practice. Most commonly assessed is measurement error that is the uncertainty of the value of a measured quantity. This is a function of the measurement technology or the inference of the quantity from other data. The second aspect is associated with the statistical nature of the measurement. Is the observation or experiment repeatable? If it is not how much might the measured value differ due to changes in the system being observed? How typical are the measured values? In many cases this issue is ignored in a willfully ignorant manner. Finally, the hardest part of observational bias often defined as answering the question, “how do we know that we a measuring what we think we are?” Is there something systematic in our observed system that we have not accounted for that might be changing our observations. This may come from some sort of problem in calibrating measurements, or looking at the observed system in a manner that is inconsistent. These all lead to potential bias and distortion of the measurements.

To conduct a validation assessment you need observations to compare to. This is an absolute necessity; if you have no observational data, you have no validation. Once the data is at hand, you need to understand how good it is. This means understanding how uncertain the data is. This uncertainty can come from three major aspects of the process: errors in measurement, errors in statistics, and errors in interpretation. In the order of how these were mentioned each of these categories become more difficult to assess and less common to actually be assessed in practice. Most commonly assessed is measurement error that is the uncertainty of the value of a measured quantity. This is a function of the measurement technology or the inference of the quantity from other data. The second aspect is associated with the statistical nature of the measurement. Is the observation or experiment repeatable? If it is not how much might the measured value differ due to changes in the system being observed? How typical are the measured values? In many cases this issue is ignored in a willfully ignorant manner. Finally, the hardest part of observational bias often defined as answering the question, “how do we know that we a measuring what we think we are?” Is there something systematic in our observed system that we have not accounted for that might be changing our observations. This may come from some sort of problem in calibrating measurements, or looking at the observed system in a manner that is inconsistent. These all lead to potential bias and distortion of the measurements.

The intrinsic benefit of this approach is a systematic investigation of the ability of the model to produce the features of reality. Ultimately the model needs to produce the features of reality that we care about, and can measure. This combination is good to balance in the process of validation, the ability to produce the reality necessary to conduct engineering and science, but also general observations. A really good confidence builder is the ability of model to produce proper results on things that we care as well as those don’t care about. One of the core issues is the high probability that many of the things we care about in a model cannot be observed, and the model acts as an inference device for science. In this case the observations act to provide confidence that the model’s inferences can be trusted. One of the keys to the whole enterprise is understanding the uncertainty intrinsic to these inferences, and good validation provides essential information for this.

The intrinsic benefit of this approach is a systematic investigation of the ability of the model to produce the features of reality. Ultimately the model needs to produce the features of reality that we care about, and can measure. This combination is good to balance in the process of validation, the ability to produce the reality necessary to conduct engineering and science, but also general observations. A really good confidence builder is the ability of model to produce proper results on things that we care as well as those don’t care about. One of the core issues is the high probability that many of the things we care about in a model cannot be observed, and the model acts as an inference device for science. In this case the observations act to provide confidence that the model’s inferences can be trusted. One of the keys to the whole enterprise is understanding the uncertainty intrinsic to these inferences, and good validation provides essential information for this.

One of the things few people recognize is the inability of other means to provide remediation from problems with the model. If a model is flawed there is no amount of computer power that can rectify its shortcomings. A computer of infinite speed would (should) only make the problems more apparent. This obvious outcome only becomes available with a complete, rigorous and focused validation of the model. Slipshod validation practices simply allow the wrong model to be propagated without necessary feedback. It is bad science plain and simple. No numerical method or algorithm in the code could provide relief either. The leadership in high performance computing is utterly oblivious to this. As a result almost no effort whatsoever is being put into validation, and models are being propagated forward without any thought regarding their validity. No serious effort exists to put the models to the test either. If our leadership is remotely competent this is an act of willful ignorance, i.e., negligence. While our models today are wonderful in many regards, they are far from perfect (remember what George Box said!). A well-structured scientific and engineering enterprise would make this evident, and employ means to improving them. These new models would open broad new vistas of utility in science and engineering. A lack of recognition of this opportunity makes modeling and simulation self-limiting in its impact.

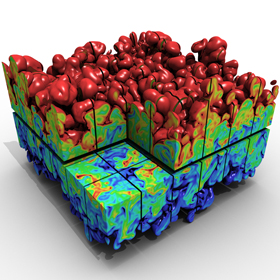

A prime example where our modeling and simulation are deficient is reproducing the variability seen in the real World. In many cases the experimental practice is equally deficient. For most phenomena of genuine interest and challenge, events and engineered products the exact same response cannot be produced. There are variations in the response because of small differences in the system being studied coming from external conditions (boundary conditions) or the state of system (initial conditions), or simply a degree of heterogeneous character in the system itself. In many cases the degree of variation in response is very large and terribly important. In engineered systems this leads to the application of large and expensive safety factors along with the risk of disaster. This depends to some extent on the nature of the response be sought. The more localized the response, the greater the tendency to be variable, while global-integrated responses can be far more reliably reproduced.

Our scientific and engineering attention is being drawn increasingly to the local responses for significant events, and their importance is growing. These are often worst-case conditions that we strive to avoid. At the same time our models are completely ill suited to address these responses. Our models cannot effectively simulate these sorts of features. Our models are almost without exception focused on a mean-field model producing a model of the average system involving far more homogeneous properties and responses than seen in reality. As such the extremes in response are removed a priori. By the same token our observational and experimental practices are not arrayed to unveil this increasingly essential aspect of reality. The ability of modeling and simulation to impact the real World effectively suffers and its impact is limited by failing to progress.

Our scientific and engineering attention is being drawn increasingly to the local responses for significant events, and their importance is growing. These are often worst-case conditions that we strive to avoid. At the same time our models are completely ill suited to address these responses. Our models cannot effectively simulate these sorts of features. Our models are almost without exception focused on a mean-field model producing a model of the average system involving far more homogeneous properties and responses than seen in reality. As such the extremes in response are removed a priori. By the same token our observational and experimental practices are not arrayed to unveil this increasingly essential aspect of reality. The ability of modeling and simulation to impact the real World effectively suffers and its impact is limited by failing to progress.

…if you’re doing an experiment, you should report everything that you think might make it invalid—not only what you think is right about it: other causes that could possibly explain your results; and things you thought of that you’ve eliminated by some other experiment, and how they worked—to make sure the other fellow can tell they have been eliminated.

― Richard Feynman

One of the greatest issues in validation is “negligible” errors and uncertainties. In many cases these errors are negligible by assertion and no evidence is given. A standing suggestion is that any negligible error or uncertainty be given a numerical value along with evidence for that value. If this cannot be done, the assertion is most likely to be specious, or at least poorly thought through. If you know it is small then you should know how small and why. It is more likely is that it is based on some combination of laziness and wishful thinking. In other cases this practice is an act of negligence, and worse yet it is simply willful ignorance on the part of practitioners. This is an equal opportunity issue for computational modeling and experiments. Often (almost always!) numerical errors are completely ignored in validation. The most brazen violators will simply assert without evidence that the errors are small or the calculated is converged without offering any evidence beyond authority.

One of the greatest issues in validation is “negligible” errors and uncertainties. In many cases these errors are negligible by assertion and no evidence is given. A standing suggestion is that any negligible error or uncertainty be given a numerical value along with evidence for that value. If this cannot be done, the assertion is most likely to be specious, or at least poorly thought through. If you know it is small then you should know how small and why. It is more likely is that it is based on some combination of laziness and wishful thinking. In other cases this practice is an act of negligence, and worse yet it is simply willful ignorance on the part of practitioners. This is an equal opportunity issue for computational modeling and experiments. Often (almost always!) numerical errors are completely ignored in validation. The most brazen violators will simply assert without evidence that the errors are small or the calculated is converged without offering any evidence beyond authority.

The greatest enemy of knowledge is not ignorance, it is the illusion of knowledge.

― Daniel J. Boorstin

Similarly, in experiments measurements will be offered without any measurement error, and often no evidence along with an assertion that the error is too small to be concerned about. Experimental or observational results are also highly prone to ignore variability in outcomes and treat each case as a well-determined result even when the physics of the problem is strongly dependent on the details of the initial conditions (or the prevailing models strongly imply this!). Similar sins are committed with modeling uncertainties where an incomplete assessment is made of uncertainty, and no accounting is made of the incompleteness and its impact. To make matters worse other obvious sources of uncertainty are ignored. The result of these patterns of conduct is an almost universal under-estimate of uncertainty from both modeling and observations. This under-estimate results in modeling and simulation being applied in a manner that is non-conservative from a decision-making perspective.

The result of these rather sloppy practices is a severely limited capacity to properly offer an assessment of model validation. Using rather complete uncertainties can produce the sort of result needed to produce definitive results that offer feedback on modeling. If uncertainties can be driven small enough we can drive improvement in the underlying science and engineering. For example, very precise and well-controlled experiments with small uncertainties can produce evidence that models must be improved. Exceptionally small modeling uncertainty could produce a similar effect in pushing experiments. Too often the work is conducted with a strong confirmation bias that takes the possibility of model incorrectness off the table. The result is a stagnant situation where models are not improving and shoddy professional practice is accepted. All of this stems from a lack of understanding or priority for proper validation assessment.

Confidence is ignorance. If you’re feeling cocky, it’s because there’s something you don’t know.

― Eoin Colfer

A mature realization for scientists is that validation is never complete. Models are validated, not codes. The model is a broad set of simulation features, including the model equations, and the code, but also a huge swath of other things. The validation is simply an assessment of all those things. This assessment looks at whether the model and the data are consistent with each other given the uncertainties in each. This assessment is predicated on the completeness of the uncertainty estimation. In the grand scheme of things one wants drive the uncertainties down in either the model or the observations of reality. The big scientific endeavor is locating the source of error in the model; is it in how the model is solved? Or are the model equations flawed? A flawed theoretical model can be a major scientific result requiring a deep theoretical response. Repairing these flaws can open new doors of understanding and drive our knowledge forward in miraculous ways. We need to adopt practices that allow us to identify problems that new models are needed for. The current modeling and simulation practice removes this outcome as a possibility at the outset.

A man is responsible for his ignorance.

― Milan Kundera

Rider, William J. A Rogue’s Gallery of V&V Practice. No. SAND2009-4667C. Sandia National Laboratories (SNL-NM), Albuquerque, NM (United States), 2009.

Rider, William J. What Makes A Calculation Good? Or Bad?. No. SAND2011-7666C. Sandia National Laboratories (SNL-NM), Albuquerque, NM (United States), 2011.

Rider, William J. What is verification and validation (and what is not!)?. No. SAND2010-1954C. Sandia National Laboratories, 2010.

tempt at perfection.

tempt at perfection. For gas dynamics, the mathematics of the model provide us with some very general character to the problems we solve. Shock waves are the preeminent feature of compressible gas dynamics, and a relatively predominant focal point for methods’ development and developer attention. Shock waves are nonlinear and naturally steepen thus countering dissipative effects. Shocks benefit through their character as garbage collectors, they are dissipative features and as a result destroy information. Some of this destruction limits the damage done by poor choices of numerical treatment. Being nonlinear one has to be careful with shocks. The very worst thing you can do is to add too little dissipation because this will allow the solution to generate unphysical noise or oscillations that are emitted by the shock. These oscillations will then become features of the solution. A lot of the robustness we seek comes from not producing oscillations, which can be best achieved with generous dissipation at shocks. Shocks receive so much attention because their improper treatment is utterly catastrophic, but they are not the only issue; the others are just more subtle and less apparently deadly.

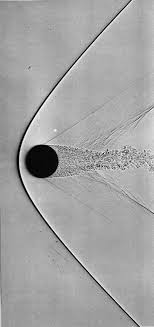

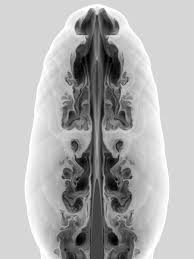

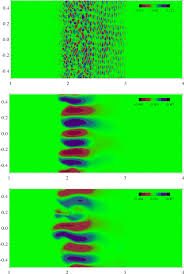

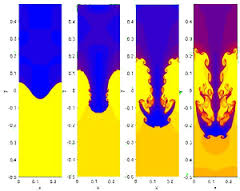

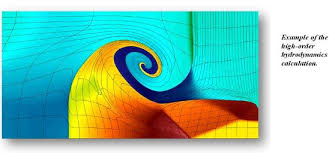

For gas dynamics, the mathematics of the model provide us with some very general character to the problems we solve. Shock waves are the preeminent feature of compressible gas dynamics, and a relatively predominant focal point for methods’ development and developer attention. Shock waves are nonlinear and naturally steepen thus countering dissipative effects. Shocks benefit through their character as garbage collectors, they are dissipative features and as a result destroy information. Some of this destruction limits the damage done by poor choices of numerical treatment. Being nonlinear one has to be careful with shocks. The very worst thing you can do is to add too little dissipation because this will allow the solution to generate unphysical noise or oscillations that are emitted by the shock. These oscillations will then become features of the solution. A lot of the robustness we seek comes from not producing oscillations, which can be best achieved with generous dissipation at shocks. Shocks receive so much attention because their improper treatment is utterly catastrophic, but they are not the only issue; the others are just more subtle and less apparently deadly. The fourth standard feature is shear waves, which are a different form of linearly degenerate waves. Shear waves are heavily related to turbulence, thus being a huge source of terror. In one dimension shear is rather innocuous being just another contact, but in two or three dimensions our current knowledge and technical capabilities are quickly overwhelmed. Once you have a turbulent flow, one must deal with the conflation of numerical error, and modeling becomes a pernicious aspect of a calculation. In multiple dimensions the shear is almost invariably unstable and solutions become chaotic and boundless in terms of complexity. This boundless complexity means that solutions are significantly mesh dependent, and demonstrably non-convergent in a point wise sense. There may be a convergence in a measure-valued sense, but these concepts are far from well defined, fully explored and technically agreed upon.

The fourth standard feature is shear waves, which are a different form of linearly degenerate waves. Shear waves are heavily related to turbulence, thus being a huge source of terror. In one dimension shear is rather innocuous being just another contact, but in two or three dimensions our current knowledge and technical capabilities are quickly overwhelmed. Once you have a turbulent flow, one must deal with the conflation of numerical error, and modeling becomes a pernicious aspect of a calculation. In multiple dimensions the shear is almost invariably unstable and solutions become chaotic and boundless in terms of complexity. This boundless complexity means that solutions are significantly mesh dependent, and demonstrably non-convergent in a point wise sense. There may be a convergence in a measure-valued sense, but these concepts are far from well defined, fully explored and technically agreed upon. ns. The usual aim of solvers is to completely remove dissipation, but that runs the risk of violating the second law. It may be more advisable to keep a small positive dissipation working (perhaps using a hyperviscosity partially because control volumes add a nonlinear anti-dissipative error). This way the code stays away from circumstances that violate this essential physical law. We can work with other forms of entropy satisfaction too. Most notably is Lax’s condition that identifies the structures in a flow by the local behavior of the relevant characteristics of the flow. Across a shock the characteristics flow into the shock, and this condition should be met with dissipation. These structures are commonly present in the head of rarefactions.

ns. The usual aim of solvers is to completely remove dissipation, but that runs the risk of violating the second law. It may be more advisable to keep a small positive dissipation working (perhaps using a hyperviscosity partially because control volumes add a nonlinear anti-dissipative error). This way the code stays away from circumstances that violate this essential physical law. We can work with other forms of entropy satisfaction too. Most notably is Lax’s condition that identifies the structures in a flow by the local behavior of the relevant characteristics of the flow. Across a shock the characteristics flow into the shock, and this condition should be met with dissipation. These structures are commonly present in the head of rarefactions. hods I have devised or the MP methods of Suresh and Huynh. Both of these methods are significantly more accurate than WENO methods.

hods I have devised or the MP methods of Suresh and Huynh. Both of these methods are significantly more accurate than WENO methods. technology and communication unthinkable a generation before. My chosen profession looks increasingly like a relic of that Cold War, and increasingly irrelevant to the World that unfolds before me. My workplace is failing to keep pace with change, and I’m seeing it fall behind modernity in almost every respect. It’s a safe, secure job, but lacks most of the edge the real World offers. All of the forces unleashed in today’s World make for incredible excitement, possibility, incredible terror and discomfort. The potential for humanity is phenomenal, and the risks are profound. In short, we live in simultaneously in incredibly exciting and massively sucky times. Yes, both of these seemingly conflicting things can be true.

technology and communication unthinkable a generation before. My chosen profession looks increasingly like a relic of that Cold War, and increasingly irrelevant to the World that unfolds before me. My workplace is failing to keep pace with change, and I’m seeing it fall behind modernity in almost every respect. It’s a safe, secure job, but lacks most of the edge the real World offers. All of the forces unleashed in today’s World make for incredible excitement, possibility, incredible terror and discomfort. The potential for humanity is phenomenal, and the risks are profound. In short, we live in simultaneously in incredibly exciting and massively sucky times. Yes, both of these seemingly conflicting things can be true. violence increasingly in a state-sponsored way is increasing. The violence against change is growing whether it is by governments or terrorists. What isn’t commonly appreciated is the alliance in violence of the right wing and terrorists. Both are fighting against the sorts of changes modernity is bringing. The fight against terror is giving the violent right wing more power. The right wing and Islamic terrorists have the same aim, undoing the push toward progress with modern views of race, religion and morality. The only difference is the name of the prophet the violence is done in name of.

violence increasingly in a state-sponsored way is increasing. The violence against change is growing whether it is by governments or terrorists. What isn’t commonly appreciated is the alliance in violence of the right wing and terrorists. Both are fighting against the sorts of changes modernity is bringing. The fight against terror is giving the violent right wing more power. The right wing and Islamic terrorists have the same aim, undoing the push toward progress with modern views of race, religion and morality. The only difference is the name of the prophet the violence is done in name of. which provides the rich and powerful the tools to control their societies as well. They also wage war against the sources of terrorism creating the conditions and recruiting for more terrorists. The police states have reduced freedom and lots of censorship, imprisonment, and opposition to personal empowerment. The same police states are effective at repressing minority groups within nations using the weapons gained to fight terror. Together these all help the cause of the right wing in blunting progress. The two allies like to kill each other too, but the forces of hate; fear and doubt work to their greater ends. In fighting terrorism we are giving them exactly what they want, the reduction of our freedom and progress. This is also the aim of right wing; stop the frightening future from arriving through the imposition of traditional values. This is exactly what the religious extremists want be they Islamic or Christian.

which provides the rich and powerful the tools to control their societies as well. They also wage war against the sources of terrorism creating the conditions and recruiting for more terrorists. The police states have reduced freedom and lots of censorship, imprisonment, and opposition to personal empowerment. The same police states are effective at repressing minority groups within nations using the weapons gained to fight terror. Together these all help the cause of the right wing in blunting progress. The two allies like to kill each other too, but the forces of hate; fear and doubt work to their greater ends. In fighting terrorism we are giving them exactly what they want, the reduction of our freedom and progress. This is also the aim of right wing; stop the frightening future from arriving through the imposition of traditional values. This is exactly what the religious extremists want be they Islamic or Christian. Driving the conservatives to such heights of violence and fear are changes to society of massive scale. Demographics are driving the changes with people of color becoming impossible to ignore, along with an aging population. Sexual freedom has emboldened people to break free of traditional boundaries of behavior, gender and relationships. Technology is accelerating the change and empowering people in amazing ways. All of this terrifies many people and provides extremists with the sort of alarmist rhetoric needed to grab power. We see these forces rushing headlong toward each other with society-wide conflict the impact. Progressive and conservative blocks are headed toward a massive fight. This also lines up along urban and rural lines, young and old, educated and uneducated. The future hangs in the balance and it is not clear who has the advantage.

Driving the conservatives to such heights of violence and fear are changes to society of massive scale. Demographics are driving the changes with people of color becoming impossible to ignore, along with an aging population. Sexual freedom has emboldened people to break free of traditional boundaries of behavior, gender and relationships. Technology is accelerating the change and empowering people in amazing ways. All of this terrifies many people and provides extremists with the sort of alarmist rhetoric needed to grab power. We see these forces rushing headlong toward each other with society-wide conflict the impact. Progressive and conservative blocks are headed toward a massive fight. This also lines up along urban and rural lines, young and old, educated and uneducated. The future hangs in the balance and it is not clear who has the advantage. maintain communication via text, audio or video with amazing ease. This starts to form different communities and relationships that are shaking culture. It’s mostly a middle class phenomenon and thus the poor are left out, and afraid.

maintain communication via text, audio or video with amazing ease. This starts to form different communities and relationships that are shaking culture. It’s mostly a middle class phenomenon and thus the poor are left out, and afraid. For example in my public life, I am not willing to give up the progress, and feel that the right wing is agitating to push the clock back. The right wing feels the changes are immoral and frightening and want to take freedom away. They will do it in the name of fighting terrorism while clutching the mantle of white nationalism and the Bible in the other hand. Similar mixes are present in Europe, Russia, and remarkably across the Arab world. My work World is increasingly allied with the forces against progress. I see a deep break in the not to distant future where the progress scientific research depends upon will be utterly incongruent with the values of my security-obsessed work place. The two things cannot live together effectively and ultimately the work will be undone by its inability to commit itself to being part of the modern World. The forces of progress are powerful and seemingly unstoppable too. We are seeing the unstoppable force of progress meet the immovable object of fear and oppression. It is going to be violent and sudden. My belief in progress is steadfast, and unwavering. Nonetheless, we have had episodes in human history where progress was stopped. Anyone heard of the dark ages? It can happen.

For example in my public life, I am not willing to give up the progress, and feel that the right wing is agitating to push the clock back. The right wing feels the changes are immoral and frightening and want to take freedom away. They will do it in the name of fighting terrorism while clutching the mantle of white nationalism and the Bible in the other hand. Similar mixes are present in Europe, Russia, and remarkably across the Arab world. My work World is increasingly allied with the forces against progress. I see a deep break in the not to distant future where the progress scientific research depends upon will be utterly incongruent with the values of my security-obsessed work place. The two things cannot live together effectively and ultimately the work will be undone by its inability to commit itself to being part of the modern World. The forces of progress are powerful and seemingly unstoppable too. We are seeing the unstoppable force of progress meet the immovable object of fear and oppression. It is going to be violent and sudden. My belief in progress is steadfast, and unwavering. Nonetheless, we have had episodes in human history where progress was stopped. Anyone heard of the dark ages? It can happen. work. This is delivered with disempowering edicts and policies that conspire to shackle me, and keep me from doing anything. The real message is that nothing you do is important enough to risk fucking up. I’m convinced that the things making work suck are strongly connected to everything else.

work. This is delivered with disempowering edicts and policies that conspire to shackle me, and keep me from doing anything. The real message is that nothing you do is important enough to risk fucking up. I’m convinced that the things making work suck are strongly connected to everything else. adapting and pushing themselves forward, the organization will stagnate or be left behind by the exciting innovations shaping the World today. When the motivation of the organization fails to emphasize productivity and efficiency, the recipe is disastrous. Modern technology offers the potential for incredible advances, but only if they’re seeking advantage. If minds are not open to making things better, it is a recipe for frustration.

adapting and pushing themselves forward, the organization will stagnate or be left behind by the exciting innovations shaping the World today. When the motivation of the organization fails to emphasize productivity and efficiency, the recipe is disastrous. Modern technology offers the potential for incredible advances, but only if they’re seeking advantage. If minds are not open to making things better, it is a recipe for frustration. unwittingly enter into the opposition to change, and assist the conservative attempt to hold onto the past.

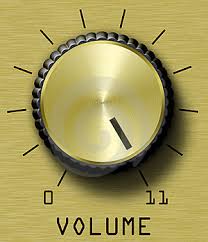

unwittingly enter into the opposition to change, and assist the conservative attempt to hold onto the past. code. You found some really good problems that “go to eleven.” If you do things right, your code will eventually “break” in some way. The closer you look, the more likely it’ll be broken. Heck your code is probably already broken, and you just don’t know it! Once it’s broken, what should you do? How do you get the code back into working order? What can you do to figure out why it’s broken? How do you live with the knowledge of the limitations of the code? Those limitations are there, but usually you don’t know them very well. Essentially you’ve gone about the process of turning over rocks with your code until you find something awfully dirty-creepy crawly underneath. You then have mystery to solve, and/or ambiguity to live with from the results.

code. You found some really good problems that “go to eleven.” If you do things right, your code will eventually “break” in some way. The closer you look, the more likely it’ll be broken. Heck your code is probably already broken, and you just don’t know it! Once it’s broken, what should you do? How do you get the code back into working order? What can you do to figure out why it’s broken? How do you live with the knowledge of the limitations of the code? Those limitations are there, but usually you don’t know them very well. Essentially you’ve gone about the process of turning over rocks with your code until you find something awfully dirty-creepy crawly underneath. You then have mystery to solve, and/or ambiguity to live with from the results.

failures that can go unnoticed without expert attention. One of the key things is the loss of accuracy in a solution. This could be the numerical level of error being wrong, or the rate of convergence of the solution being outside the theoretical guarantees for the method (convergence rates are a function of the method and the nature of the solution itself). Sometimes this character is associated with an overly dissipative solution where numerical dissipation is too large to be tolerated. At this subtle level we are judging failure by a high standard based on knowledge and expectations driven by deep theoretical understanding. These failings generally indicate you are at a good level of testing and quality.

failures that can go unnoticed without expert attention. One of the key things is the loss of accuracy in a solution. This could be the numerical level of error being wrong, or the rate of convergence of the solution being outside the theoretical guarantees for the method (convergence rates are a function of the method and the nature of the solution itself). Sometimes this character is associated with an overly dissipative solution where numerical dissipation is too large to be tolerated. At this subtle level we are judging failure by a high standard based on knowledge and expectations driven by deep theoretical understanding. These failings generally indicate you are at a good level of testing and quality. methods. You should be intimately familiar with the conditions for stability for the code’s methods. You should assure that the stability conditions are not being exceeded. If a stability condition is missed, or calculated incorrectly, the impact is usually immediate and catastrophic. One way to do this on the cheap is modify the code’s stability condition to a more conservative version usually with a smaller safety factor. If the catastrophic behavior goes away then it points a finger at the stability condition with some certainty. Either the method is wrong, or not coded correctly, or you don’t really understand the stability condition properly. It is important to figure out which of these possibilities you’re subject to. Sometimes this needs to be studied using analytical techniques to examine the stability theoretically.

methods. You should be intimately familiar with the conditions for stability for the code’s methods. You should assure that the stability conditions are not being exceeded. If a stability condition is missed, or calculated incorrectly, the impact is usually immediate and catastrophic. One way to do this on the cheap is modify the code’s stability condition to a more conservative version usually with a smaller safety factor. If the catastrophic behavior goes away then it points a finger at the stability condition with some certainty. Either the method is wrong, or not coded correctly, or you don’t really understand the stability condition properly. It is important to figure out which of these possibilities you’re subject to. Sometimes this needs to be studied using analytical techniques to examine the stability theoretically. Another approach to take is systematically make the problem you’re solving easier until the results are “correct,” or the catastrophic behavior is replaced with something less odious. An important part of this process is more deeply understand how the problems are being triggered in the code. What sort of condition is being exceeded and how are the methods in the code going south? Is there something explicit that can be done to change the methodology so that this doesn’t happen? Ultimately, the issue is a systematic understanding of how the code and its method’s behave, their strengths and weaknesses. Once the weakness is exposed in the testing can you do something to get rid of it? Whether the weakness is a bug or feature of the code is another question to answer. Through the process of successively harder problems one can make the code better and better until you’re at the limits of knowledge.

Another approach to take is systematically make the problem you’re solving easier until the results are “correct,” or the catastrophic behavior is replaced with something less odious. An important part of this process is more deeply understand how the problems are being triggered in the code. What sort of condition is being exceeded and how are the methods in the code going south? Is there something explicit that can be done to change the methodology so that this doesn’t happen? Ultimately, the issue is a systematic understanding of how the code and its method’s behave, their strengths and weaknesses. Once the weakness is exposed in the testing can you do something to get rid of it? Whether the weakness is a bug or feature of the code is another question to answer. Through the process of successively harder problems one can make the code better and better until you’re at the limits of knowledge. Whether you are at the limits of knowledge takes a good deal of experience and study. You need to know the field and your competition quite well. You need to be willing to borrow from others and consider their success carefully. There is little time for pride, if you want to get to the frontier of capability; you need to be brutal and focused along the path. You need to keep pushing your code with harder testing and not be satisfied with the quality. Eventually you will get to problems that cannot be overcome with what people know how to do. At that point your methodology probably needs to evolve a bit. This is really hard work, and prone to risk and failure. For this reason most codes never get to this level of endeavor, its simply too hard on the code developers, and worse on those managing them. Today’s management of science simply doesn’t enable the level of risk and failure necessary to get to the summit of our knowledge. Management wants sure results and cannot deal with ambiguity at all, and striving at the frontier of knowledge is full of it, and usually ends up failing.

Whether you are at the limits of knowledge takes a good deal of experience and study. You need to know the field and your competition quite well. You need to be willing to borrow from others and consider their success carefully. There is little time for pride, if you want to get to the frontier of capability; you need to be brutal and focused along the path. You need to keep pushing your code with harder testing and not be satisfied with the quality. Eventually you will get to problems that cannot be overcome with what people know how to do. At that point your methodology probably needs to evolve a bit. This is really hard work, and prone to risk and failure. For this reason most codes never get to this level of endeavor, its simply too hard on the code developers, and worse on those managing them. Today’s management of science simply doesn’t enable the level of risk and failure necessary to get to the summit of our knowledge. Management wants sure results and cannot deal with ambiguity at all, and striving at the frontier of knowledge is full of it, and usually ends up failing. Another big issue is finding general purpose fixes for hard problems. Often the fix to a really difficult problem wrecks your ability to solve lots of other problems. Tailoring the solution to treat the difficulty and not destroy the ability to solve other easier problems is an art and the core of the difficulty in advancing the state of the art. The skill to do this requires fairly deep theoretical knowledge of a field study, along with exquisite understanding of the root of difficulties. The difficulty people don’t talk about is the willingness to attack the edge of knowledge and explicitly admit the limitations of what is currently done. This is an admission of weakness that our system doesn’t support. When fixes aren’t general purpose, one clear sign is a narrow range of applicability. If its not general purpose and makes a mess of existing methodology, you probably don’t really understand what’s going on.

Another big issue is finding general purpose fixes for hard problems. Often the fix to a really difficult problem wrecks your ability to solve lots of other problems. Tailoring the solution to treat the difficulty and not destroy the ability to solve other easier problems is an art and the core of the difficulty in advancing the state of the art. The skill to do this requires fairly deep theoretical knowledge of a field study, along with exquisite understanding of the root of difficulties. The difficulty people don’t talk about is the willingness to attack the edge of knowledge and explicitly admit the limitations of what is currently done. This is an admission of weakness that our system doesn’t support. When fixes aren’t general purpose, one clear sign is a narrow range of applicability. If its not general purpose and makes a mess of existing methodology, you probably don’t really understand what’s going on.

Fortunate are those who take the first steps.

Fortunate are those who take the first steps. Marty DiBergi: I don’t know.

Marty DiBergi: I don’t know. simplified versions of what we know brings the code to its knees, and serve as good blueprints for removing these issues from the code’s methods. Alternatively, they provide proof that certain pathological behaviors do or don’t exist in a code. Really brutal problems that “go to eleven” aid the development of methods by highlighting where improvement is needed clearly. Usually the simpler and cleaner problems are better because more codes and methods can run them, analysis is easier and we can successfully experiment with remedial measures. This allows more experimentation and attempts to solve it using diverse approaches. This can energize rapid progress and deeper understanding.

simplified versions of what we know brings the code to its knees, and serve as good blueprints for removing these issues from the code’s methods. Alternatively, they provide proof that certain pathological behaviors do or don’t exist in a code. Really brutal problems that “go to eleven” aid the development of methods by highlighting where improvement is needed clearly. Usually the simpler and cleaner problems are better because more codes and methods can run them, analysis is easier and we can successfully experiment with remedial measures. This allows more experimentation and attempts to solve it using diverse approaches. This can energize rapid progress and deeper understanding. I am a deep believer in the power of brutality at least when it comes to simulation codes. I am a deep believer that we are generally too easy on our computer codes; we should endeavor to break them early and often. A method or code is never “good enough”. The best way to break our codes is attempt to solve really hard problems that are beyond our ability to solve today. Once the solution of these brutal problems is well enough in hand, one should find a way of making the problem a little bit harder. The testing should actually be more extreme and difficult than anything we need to do with codes. One should always be working at, or beyond the edge of what can be done instead of safely staying within our capabilities. Today we are too prone to simply solving problems that are well in hand instead of pushing ourselves into the unknown. This tendency is harming progress.

I am a deep believer in the power of brutality at least when it comes to simulation codes. I am a deep believer that we are generally too easy on our computer codes; we should endeavor to break them early and often. A method or code is never “good enough”. The best way to break our codes is attempt to solve really hard problems that are beyond our ability to solve today. Once the solution of these brutal problems is well enough in hand, one should find a way of making the problem a little bit harder. The testing should actually be more extreme and difficult than anything we need to do with codes. One should always be working at, or beyond the edge of what can be done instead of safely staying within our capabilities. Today we are too prone to simply solving problems that are well in hand instead of pushing ourselves into the unknown. This tendency is harming progress.

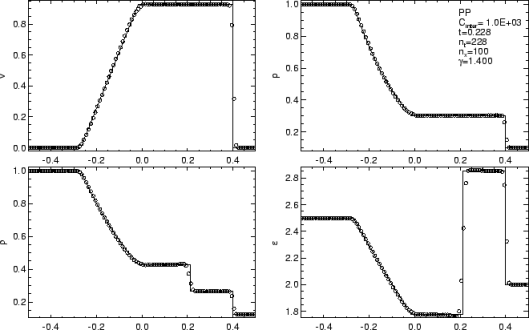

By the time a decade had passed after the introduction of Sod’s problem almost all of the pathological solutions we used to see had disappeared and far better methods existed (not because of Sod’s problem per se, but something was “in the air” too). The existence of a common problem to test and present results was a vehicle for the community to use in this endeavor. Today, the Sod problem is simply a “hello World” for shock physics and offers no real challenge to a serious method or code. It is difficult to distinguish between very good and OK solutions with results. Being able to solve the Sod problem doesn’t really qualify you to attack really hard problems either. It is a fine opening ante, but never the final call. The only problem with using the Sod problem today is that too many methods and codes stop their testing there and never move to the problems that challenge our knowledge and capability today. A key to making progress is to find problems where things don’t work so well, focus attention on changing that.

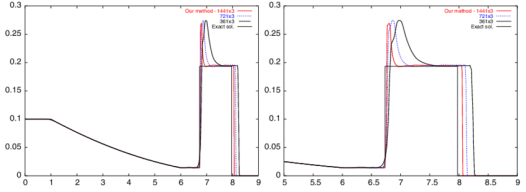

By the time a decade had passed after the introduction of Sod’s problem almost all of the pathological solutions we used to see had disappeared and far better methods existed (not because of Sod’s problem per se, but something was “in the air” too). The existence of a common problem to test and present results was a vehicle for the community to use in this endeavor. Today, the Sod problem is simply a “hello World” for shock physics and offers no real challenge to a serious method or code. It is difficult to distinguish between very good and OK solutions with results. Being able to solve the Sod problem doesn’t really qualify you to attack really hard problems either. It is a fine opening ante, but never the final call. The only problem with using the Sod problem today is that too many methods and codes stop their testing there and never move to the problems that challenge our knowledge and capability today. A key to making progress is to find problems where things don’t work so well, focus attention on changing that. Therefore, if we want problems to spur progress and shed light on what works, we need harder problems. For example, we could systematically up the magnitude of the variation in initial conditions in shock tube problems until results start to show issues. This usefully produces harder problems, but really hasn’t been done (note to self this is a really good idea). One problem that produces this comes from the National Lab community in the form of LeBlanc’s shock tube, or its more colorful colloquial name, “the shock tube from Hell.” This is a much less forgiving problem than Sod’s shock tube, and that is an understatement. Rather than jumps in pressure and density of about one order of magnitude, the jump in density is 1000-to-1 and the jump in pressure is one billion-to-one. This stresses methods far more than Sod and many simple methods can completely fail. Most industrial or production quality methods can actually solve the problem albeit with much more resolution than Sod’s problem requires (I’ve gotten decent results on Sod’s problem on mesh sequences of 4-8-16 cells!). For the most part we can solve LeBlanc’s problem capably today, so its time to look for fresh challenges.

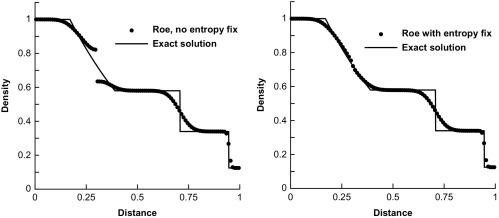

Therefore, if we want problems to spur progress and shed light on what works, we need harder problems. For example, we could systematically up the magnitude of the variation in initial conditions in shock tube problems until results start to show issues. This usefully produces harder problems, but really hasn’t been done (note to self this is a really good idea). One problem that produces this comes from the National Lab community in the form of LeBlanc’s shock tube, or its more colorful colloquial name, “the shock tube from Hell.” This is a much less forgiving problem than Sod’s shock tube, and that is an understatement. Rather than jumps in pressure and density of about one order of magnitude, the jump in density is 1000-to-1 and the jump in pressure is one billion-to-one. This stresses methods far more than Sod and many simple methods can completely fail. Most industrial or production quality methods can actually solve the problem albeit with much more resolution than Sod’s problem requires (I’ve gotten decent results on Sod’s problem on mesh sequences of 4-8-16 cells!). For the most part we can solve LeBlanc’s problem capably today, so its time to look for fresh challenges. Other good problems are devised though knowing what goes wrong in practical calculation. Often those who know how to solve the problem create these problems. A good example is the issue of expansion shocks, which can be seen by taking Sod’s shock tube and introducing a velocity to the initial condition. This puts a sonic point (where the characteristic speed goes to zero) in the rarefaction. We know how to remove this problem by adding an “entropy” fix to the Riemann solver defining a class of methods that work. The test simply unveils whether the problem infests a code that may have ignored this issue. This detection is a very good side-effect of a well-designed test problem.

Other good problems are devised though knowing what goes wrong in practical calculation. Often those who know how to solve the problem create these problems. A good example is the issue of expansion shocks, which can be seen by taking Sod’s shock tube and introducing a velocity to the initial condition. This puts a sonic point (where the characteristic speed goes to zero) in the rarefaction. We know how to remove this problem by adding an “entropy” fix to the Riemann solver defining a class of methods that work. The test simply unveils whether the problem infests a code that may have ignored this issue. This detection is a very good side-effect of a well-designed test problem.

attacking something important and creating innovative solutions. I do a lot of things every day, but very little of it is either worthwhile or meaningful. At the same time I’m doing exactly what I am supposed to be doing! This means that my employer (or masters) are asking me to spend all of my valuable time doing meaningless, time wasting things as part of my conditions of employment. This includes trips to stupid, meaningless meetings with little or no content of value, compliance training, project planning, project reports, e-mails, jumping through hoops to get a technical paper, and a smorgasbord of other paperwork. Much of this is hoisted upon us by our governing agency, coupled with rampant institutional over-compliance, or managerially driven ass covering. All of this equals no time or focus on anything that actually matters squeezing out all the potential for innovation. Many of the direct actions result in creating an environment where risk and failure are not tolerated thus killing innovation before it can attempt to appear.

attacking something important and creating innovative solutions. I do a lot of things every day, but very little of it is either worthwhile or meaningful. At the same time I’m doing exactly what I am supposed to be doing! This means that my employer (or masters) are asking me to spend all of my valuable time doing meaningless, time wasting things as part of my conditions of employment. This includes trips to stupid, meaningless meetings with little or no content of value, compliance training, project planning, project reports, e-mails, jumping through hoops to get a technical paper, and a smorgasbord of other paperwork. Much of this is hoisted upon us by our governing agency, coupled with rampant institutional over-compliance, or managerially driven ass covering. All of this equals no time or focus on anything that actually matters squeezing out all the potential for innovation. Many of the direct actions result in creating an environment where risk and failure are not tolerated thus killing innovation before it can attempt to appear. only thing not required of me at work is actual productivity. All my training, compliance, and other work activities are focused on things that produce nothing of value. At some level we fail to respect our employees and end up wasting our lives by having us invest time in activities devoid of content and value. Basically the entire apparatus of my work is focused on forcing me to do things that produce nothing worthwhile other than providing a wage to support my family. Real productivity and innovation is all on me and increasingly a pro bono activity. The fact that actual productivity isn’t a concern for my employer is really fucked up. The bottom line is that we aren’t funded to do anything valuable, and the expectations on me are all bullshit and no substance. Its been getting steadily worse with each passing year too. When my employer talks about efficiency, it is all about saving money, not producing anything for the money we spend. Instead of focusing on producing more or better with the funding and unleashing the creative energy of people, we focus on penny pinching and making the workplace more unpleasant and genuinely terrible. None of the changes make for a better, more engaging workplace and simply continually reduce the empowerment of employees.

only thing not required of me at work is actual productivity. All my training, compliance, and other work activities are focused on things that produce nothing of value. At some level we fail to respect our employees and end up wasting our lives by having us invest time in activities devoid of content and value. Basically the entire apparatus of my work is focused on forcing me to do things that produce nothing worthwhile other than providing a wage to support my family. Real productivity and innovation is all on me and increasingly a pro bono activity. The fact that actual productivity isn’t a concern for my employer is really fucked up. The bottom line is that we aren’t funded to do anything valuable, and the expectations on me are all bullshit and no substance. Its been getting steadily worse with each passing year too. When my employer talks about efficiency, it is all about saving money, not producing anything for the money we spend. Instead of focusing on producing more or better with the funding and unleashing the creative energy of people, we focus on penny pinching and making the workplace more unpleasant and genuinely terrible. None of the changes make for a better, more engaging workplace and simply continually reduce the empowerment of employees. incremental at best. The result is a system where I am almost definitively not productive if I do exactly what I’m supposed to do. The entire apparatus of accountability is absurd and an insult to productive work. It sounds good, but its completely destructive, and we keep adding more and more of it.

incremental at best. The result is a system where I am almost definitively not productive if I do exactly what I’m supposed to do. The entire apparatus of accountability is absurd and an insult to productive work. It sounds good, but its completely destructive, and we keep adding more and more of it. ng me to be productive is to trust me. We need to realize that our systems at work are structured to deal with a lack of trust. Implicit in all the systems is a feeling that people need to be constantly being checked up on. If people aren’t constantly being checked up on they are fucking off. The result is an almost complete lack of empowerment, and a labyrinth of micromanagement. To be productive we need to be trusted, and we need to be empowered. We need to be chasing big important goals that we are committed to achieving. Once we accept the goals, we need to be unleashed to accomplish them. In the process we need to solve all sorts of problems, and in the process we can provide innovative solutions that enrich the knowledge of humanity and enrich society at large. This is a tried and true formula for progress that we have lost faith in, and with this lack of faith we have lost trust in our fellow citizens.

ng me to be productive is to trust me. We need to realize that our systems at work are structured to deal with a lack of trust. Implicit in all the systems is a feeling that people need to be constantly being checked up on. If people aren’t constantly being checked up on they are fucking off. The result is an almost complete lack of empowerment, and a labyrinth of micromanagement. To be productive we need to be trusted, and we need to be empowered. We need to be chasing big important goals that we are committed to achieving. Once we accept the goals, we need to be unleashed to accomplish them. In the process we need to solve all sorts of problems, and in the process we can provide innovative solutions that enrich the knowledge of humanity and enrich society at large. This is a tried and true formula for progress that we have lost faith in, and with this lack of faith we have lost trust in our fellow citizens. t they can’t be trusted. If people are treated like they can’t be trusted, you can’t expect them to be productive. To be better and productive, we need to start with a different premise. To be productive we need to center and focus our work on the producers, not the managers. We need to trust and put faith in each other to solve problems, innovate and create a better future.

t they can’t be trusted. If people are treated like they can’t be trusted, you can’t expect them to be productive. To be better and productive, we need to start with a different premise. To be productive we need to center and focus our work on the producers, not the managers. We need to trust and put faith in each other to solve problems, innovate and create a better future. decisions for business, money is all that matters. If it puts more money in the pockets of those in power (i.e., the stockholder), it is by current definition a good decision. The flow and availability of money is maximized by a short business cycle, and an utter lack of long-term perspective. In the work that I do, we define the correctness of our work by whether money is allocated for it. This attitude has led us toward some really disturbing outcomes.

decisions for business, money is all that matters. If it puts more money in the pockets of those in power (i.e., the stockholder), it is by current definition a good decision. The flow and availability of money is maximized by a short business cycle, and an utter lack of long-term perspective. In the work that I do, we define the correctness of our work by whether money is allocated for it. This attitude has led us toward some really disturbing outcomes. s second World standards, and for the poor third World standards. The outcomes for our citizens follow these outcomes in terms of life expectancy. The reasons for our terrible health care system are clear, as the day is long, money. More specifically, the medical system is tied to profit motive rather than responsibility and ethics resulting in outcomes being directly linked to people’s ability to pay. The moral and ethical dimension of health care in the United States is indefensible and appalling. It is because money is the prime mover for decisions. Worse yet, the substandard medical care for most of our citizens is a drain on society, produces awful results, but provides a vast well of money for the rich and wealthy to leech off of.

s second World standards, and for the poor third World standards. The outcomes for our citizens follow these outcomes in terms of life expectancy. The reasons for our terrible health care system are clear, as the day is long, money. More specifically, the medical system is tied to profit motive rather than responsibility and ethics resulting in outcomes being directly linked to people’s ability to pay. The moral and ethical dimension of health care in the United States is indefensible and appalling. It is because money is the prime mover for decisions. Worse yet, the substandard medical care for most of our citizens is a drain on society, produces awful results, but provides a vast well of money for the rich and wealthy to leech off of. Money is a tool. Period. Computers are tools too. When tools become reasons and central organizing principles we are bound to create problems. I’ve written volumes on the issues created by the lack of perspective on computers as tools as opposed to ends unto themselves. Money is similar in character. In my world these two issues are intimately linked, but the problems with money are broader. Money’s role as a tool is a surrogate for value and worth, and can be exchanged for other things of value. Money’s meaning is connected to the real world things it can be exchanged for. We have increasingly lost this sense and put ourselves in a position where value and money have become independent of each other. This independence is truly a crisis and leads to severe misallocation of resources. At work, the attitude is increasingly “do what you are paid to do” “the customer is always right” “we are doing what we get funded to do”. The law, training and all manner of organizational tools, enforces all of this. This shadowed by business where the ability to make money justifies anything. We trade, destroy and carve up businesses so that stockholders can make money. All sense of morality, justice, and long-term consequence is scarified if money can be extracted from the system. Today’s stock market is built to create wealth in this manner, and legally enforced. The true meaning of the stock market is a way of creating resources for businesses to invest and grow. This purpose has been completely lost today, and the entire apparatus is in place to generate wealth. This wealth generation is done without regard for the health of the business. Increasingly we have used business as the model for managing everything. To its disservice, science has followed suit and lost the sense of long-term investment by putting business practice into use to manage research. In many respects the core religion in the United States is money and profit with its unquestioned supremacy as an organizing and managing principle.

Money is a tool. Period. Computers are tools too. When tools become reasons and central organizing principles we are bound to create problems. I’ve written volumes on the issues created by the lack of perspective on computers as tools as opposed to ends unto themselves. Money is similar in character. In my world these two issues are intimately linked, but the problems with money are broader. Money’s role as a tool is a surrogate for value and worth, and can be exchanged for other things of value. Money’s meaning is connected to the real world things it can be exchanged for. We have increasingly lost this sense and put ourselves in a position where value and money have become independent of each other. This independence is truly a crisis and leads to severe misallocation of resources. At work, the attitude is increasingly “do what you are paid to do” “the customer is always right” “we are doing what we get funded to do”. The law, training and all manner of organizational tools, enforces all of this. This shadowed by business where the ability to make money justifies anything. We trade, destroy and carve up businesses so that stockholders can make money. All sense of morality, justice, and long-term consequence is scarified if money can be extracted from the system. Today’s stock market is built to create wealth in this manner, and legally enforced. The true meaning of the stock market is a way of creating resources for businesses to invest and grow. This purpose has been completely lost today, and the entire apparatus is in place to generate wealth. This wealth generation is done without regard for the health of the business. Increasingly we have used business as the model for managing everything. To its disservice, science has followed suit and lost the sense of long-term investment by putting business practice into use to manage research. In many respects the core religion in the United States is money and profit with its unquestioned supremacy as an organizing and managing principle. needed, we construct programs to get money. Increasingly, the way we are managed pushes a deep level of accountability to the money instead of value and purpose. The workplace messaging is “only work on what you are paid to do.” Everything we do is based on the customer who is writing the checks. The vacuous and shallow end results of this management philosophy are clear. Instead of doing the best thing possible for real world outcomes, we propose what people want to hear and what is easily funded. Purpose, value and principles are all sacrificed for money. The biggest loss is the inability to deal with difficult issues or get to the heart of anything subtle. The money is increasingly uncoordinated and nothing is tied to large objectives. In the trenches people simply work on the thing they are being paid by and learn to not ask difficult questions or think in the long term. The customer cares nothing about the career development or expertise of those they fund. In the process of money first our career development and National scientific research is plummeting and in free fall whether we look at National Labs or Universities.

needed, we construct programs to get money. Increasingly, the way we are managed pushes a deep level of accountability to the money instead of value and purpose. The workplace messaging is “only work on what you are paid to do.” Everything we do is based on the customer who is writing the checks. The vacuous and shallow end results of this management philosophy are clear. Instead of doing the best thing possible for real world outcomes, we propose what people want to hear and what is easily funded. Purpose, value and principles are all sacrificed for money. The biggest loss is the inability to deal with difficult issues or get to the heart of anything subtle. The money is increasingly uncoordinated and nothing is tied to large objectives. In the trenches people simply work on the thing they are being paid by and learn to not ask difficult questions or think in the long term. The customer cares nothing about the career development or expertise of those they fund. In the process of money first our career development and National scientific research is plummeting and in free fall whether we look at National Labs or Universities. business is always lost to the possibility of making more money in the now. By the same token, the short-term thinking is terrible for value to society and leads to many businesses simply being chewed up and spit out. Unfortunately our society has adopted the short term thinking for everything including science. All activities are measured quarterly (or even monthly) against the funded plans. Organizations are driving everyone to abide by this short-term thinking. No one can use their judgment or knowledge gained to change this for values that transcend money. The result is a complete loss of long-term perspective in decision-making. We have lost the ability to care for the health and growth of careers. The defined financial path has become the only arbiter of right and wrong. All of our judgment is based on money, if its funded, it is right, if it isn’t funded its wrong. More and more all the long-term interests aren’t funded, so our future whither right in front of us. The only ones benefiting from the short-term thinking are a small number of the wealthiest people in society. Most people and society itself are left behind, but forced to serve their own demise.

business is always lost to the possibility of making more money in the now. By the same token, the short-term thinking is terrible for value to society and leads to many businesses simply being chewed up and spit out. Unfortunately our society has adopted the short term thinking for everything including science. All activities are measured quarterly (or even monthly) against the funded plans. Organizations are driving everyone to abide by this short-term thinking. No one can use their judgment or knowledge gained to change this for values that transcend money. The result is a complete loss of long-term perspective in decision-making. We have lost the ability to care for the health and growth of careers. The defined financial path has become the only arbiter of right and wrong. All of our judgment is based on money, if its funded, it is right, if it isn’t funded its wrong. More and more all the long-term interests aren’t funded, so our future whither right in front of us. The only ones benefiting from the short-term thinking are a small number of the wealthiest people in society. Most people and society itself are left behind, but forced to serve their own demise. need to receive a significant benefit for putting off short-term profit to take the long-term perspective. We need to overhaul how science is done. The notably long-term investment is research must be recovered and freed from the business ideas that are destroying the ability of science to create value. The idea that business practices today are correct is utterly perverse and damaging.

need to receive a significant benefit for putting off short-term profit to take the long-term perspective. We need to overhaul how science is done. The notably long-term investment is research must be recovered and freed from the business ideas that are destroying the ability of science to create value. The idea that business practices today are correct is utterly perverse and damaging. . We need a realization of the long-term effects of current attitudes and policies as a loss to everyone. A piece of this puzzle is a greater degree of responsibility for the future on the part of the rich and powerful. Our leaders need to work for the benefit of everyone, not for their accumulation of more wealth and power. Until this fact becomes more evident to the population as a whole we can expect the wealthy and powerful to continue to favor a system that benefits himself or herself to exclusion of everyone else.

. We need a realization of the long-term effects of current attitudes and policies as a loss to everyone. A piece of this puzzle is a greater degree of responsibility for the future on the part of the rich and powerful. Our leaders need to work for the benefit of everyone, not for their accumulation of more wealth and power. Until this fact becomes more evident to the population as a whole we can expect the wealthy and powerful to continue to favor a system that benefits himself or herself to exclusion of everyone else. unsatisfactorily understood thing. For general nonlinear problems dominating the use and utility of high performance computing, the state of affairs is quite incomplete. It has a central role in modeling and simulation making our gaps in theory, knowledge and practice rather unsettling. Theory is strong for linear problems where solutions are well behaved and smooth (i.e., continuously differentiable, or a least many derivatives exist). Almost every problem of substance driving National investments in computing is nonlinear and rough. Thus, we have theory that largely guides practice by faith rather than rigor. We would be well served by a concerted effort to develop theoretical tools better suited to our reality.