[E]xceptional claims demand exceptional evidence.

What can be asserted without evidence can also be dismissed without evidence.

― Christopher Hitchens

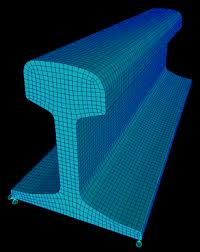

Commercial CFD and mechanics codes are an increasingly big deal. Their vendors would have you believe that the only things people should concern themselves with are meshing, graphical user interfaces, and computer power. The innards of the code, its models and methods, are basically in the bag and no big deal. The quality of the solutions is assured because it’s a solved problem. Input your problem in a point-and-click manner, mesh it, run it on a big enough computer, point and click your way through the visuals, and you’re done.

Marketing is what you do when your product is no good.

― Edwin H. Land

Of course this is not the case, not even close. One can understand why these vendors might prefer to sell their product with this mindset. The truth is that the methods in the codes are old and generally not very good by modern standards. If we had a healthy research agenda for developing improved methods, the methods in the codes would look appalling by comparison. On the other hand, they are well understood and highly reliable or robust (meaning they will run to completion without undue user intervention). This doesn’t mean that they are correct or accurate. The problem is that they represent a very low bar of success. The codes and the methods utilized by them are far below what might be possible with a healthy computational science program.

Another huge issue is the target audience for these codes. If we go back in time, we could rely upon the codes being used only by people with PhDs. Nowadays the codes are targeted at people with Bachelor’s degrees who have little or no expertise or interest in numerical methods or advanced models in the context of partial differential equations. As a result, these aspects of the code’s makeup and behavior have been systematically reduced in importance to the point of being practically ignored. Of course, working on these very details gives me the knowledge and evidence that these aspects are paramount in importance. All the meshing, graphics and gee-whiz interfaces can’t overcome a bad model or method in a code.

Reality is one of the possibilities I cannot afford to ignore

― Leonard Cohen

One way to get to the truth is verification and validation (V&V). While V&V has become an important technical endeavor, it usually is applied more as a buzzword than an actual technical competence. The result is usually a set of activities that have more of the look and feel of V&V than the actual proper practice of V&V. Those marketing the codes tend to trumpet their commitment to V&V while actually espousing the cutting of V&V corners. Part of the problem is that rigorous V&V would in large part undercut many of their marketing premises.

We all die. The goal isn’t to live forever, the goal is to create something that will.

― Chuck Palahniuk

What is truly terrifying about the state of affairs today is that this attitude has gone beyond the commercial code vendors and increasingly defines the attitude at the National Labs and in academia, the places where innovation should be coming from. Money for developing new methods and models has dried up. The emphasis in computational science has shifted to parallel computing and the acquisition of massive new computer platforms.

Reality is that which, when you stop believing in it, doesn’t go away.

― Philip K. Dick

The unifying theme in all of this is the perception being floated that modeling and numerical methods are a solved area of investigation, and that we simply await a powerful enough computer to unveil the secrets of the universe. This sort of mindset is more appropriate for some sort of cultish religion than for science. It is actually antithetical to science, and the result is a lack of real scientific progress.

Don’t try to follow trends. Create them.

― Simon Zingerman

So, what is needed?

- Computational science needs to acknowledge and play by the scientific method. Increasingly, today it does not. It acts on articles of faith and follows politically correct, low-risk paths to “progress”.

- We need to cease believing that all our problems will be solved by a faster computer.

- Computational science should balance the benefits of models, methods, algorithms, implementation, software and hardware instead of following the articles of faith held today.

- Embrace risks needed for breakthroughs in all of these areas especially models, methods and algorithms, which require creative work and generally need inspired results for progress.

- Acknowledge that the impact of computational science on reality is most greatly improved by modeling improvements. Next in impact are methods and algorithms, which provide greater efficiency. Our current focus on implementation, software and hardware actually produces less impact on reality.

- Practice V&V with rigor and depth in a way that provides unambiguous evidence that calculations are trustworthy in a well-defined and supportable manner.

- Acknowledge the absolute need for experimental and observational science in providing the window into reality.

- Stop overselling modeling and simulation as an absolute replacement for experiments, and present it instead as a guide for intuition and exploration to be used in association with other scientific methods.

Reality is frequently inaccurate.

― Douglas Adams

We appear to be living in a golden age of progress. I’ve come increasingly to the view that this is false. We are living in an age that is enjoying the fruits of a scientific golden age and coasting on its inertia. The forces powering the “progress” we enjoy are not being passed on to future generations. So, what are we going to do when we run out of the gains made by our forebears?

Progress is a tremendous bounty to all. We can all benefit from wealth, longer and healthier lives, greater knowledge and general well-being. The forces arrayed against progress are small-minded and petty. For some reason the small-minded and petty interests have swamped the forces for good and beneficial efforts. Another way of saying this is that the forces of the status quo are working to keep change from happening. The status quo forces are powerful and well served by keeping things as they are. Income inequality and conservatism are closely related because progress and change favor those who benefit from change. The people at the top favor keeping things just as they are.

Most of the technology that powers today’s world was actually developed a long time ago. Today the technology is simply being brought to “market”. Technology at a commercial level has a very long lead-time. The breakthroughs in science that surrounded the effort fighting the Cold War provide the basis of most of our modern society. Cell phones, computers, cars, planes, etc. are all associated with the science done decades ago. The road to commercial success is long and today’s economic supremacy is based on yesterday’s investments.

plenty there that needs to be done.

t up in trying to justify the funding for the path they are already taking. The damage done to long-term progress is accumulating with each passing year. Our leadership will not put significant resources into things that pay off far into the future (what good will that do them?). We have missed a number of potentially massive breakthroughs chasing progress from computers alone. The lack of perspective and balance in the course for progress shows a stunning lack of knowledge of the history of computing. The entire strategy is remarkably bankrupt philosophically. It is playing to the lowest intellectual denominator. An analogy that does the strategy too much justice would compare this to rating cars solely on the basis of horsepower.

The end product of our current strategy will ultimately starve the world of an avenue for progress. Our children will be those most acutely impacted by our mistakes. Of course, we could chart another path that balances the emphasis on computing with algorithms, methods and models. Improvements in our grasp of physics and engineering should probably be in the driver’s seat. This would require a significant shift in focus, but the benefits would be profound.

Uncertainty quantification is a hot topic. It is growing in importance and practice, but people should be realistic about it. It is always incomplete. We hope that we have captured the major forms of uncertainty, but the truth is that our assumptions about simulation blind us to some degree. This is the impact of “unknown knowns”, the assumptions we make without knowing we are making them. In most cases our uncertainty estimates are held hostage to the tools at our disposal. One way of thinking about this looks at codes as the tools, but the issue is far deeper: it is the basic foundation we base our modeling of reality upon.

One of the really uplifting trends in computational simulation is the focus on uncertainty estimation as part of the solution. This work serves the demands of decision makers who increasingly depend on simulation. The practice allows simulations to come with a multi-faceted “error” bar. Just like the simulations themselves, the uncertainty estimate is going to be imperfect, and typically far more imperfect than the simulations themselves. It is important to recognize the nature of the imperfection and incompleteness inherent in uncertainty quantification. The uncertainty itself comes from a number of sources, some interchangeable.

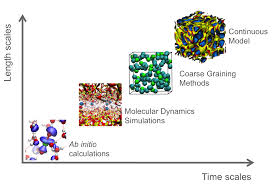

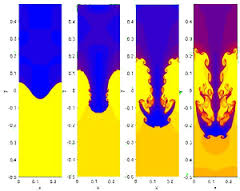

Aleatory: This is uncertainty due to the variability of phenomena. This is the weather. The archetype of variability is turbulence, but also think about the detailed composition of every single device. They are all different to some small degree, never mind their history after being built. To some extent aleatory uncertainty is associated with a breakdown of the continuum hypothesis and is distinctly scale dependent. As things are simulated at smaller scales, different assumptions must be made. Systems will vary at a range of length and time scales, and as scales come into focus their variation must be simulated. One might argue that this is epistemic, in that if we could measure things precisely enough then they could be precisely simulated (given the right equations, constitutive relations and boundary conditions). This point of view is rational and constructive only to a small degree. For many systems of interest chaos reigns and measurements will never be precise enough to matter. By and large this form of uncertainty is simply ignored because simulations can’t provide information about it.
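To make the point about chaos concrete, here is a minimal sketch. The logistic map is my own choice of toy system (it is not a model of any device or flow discussed here), and the function names are hypothetical. It simply tracks how far apart two runs drift when their initial conditions differ by a "measurement error" of one part in ten billion.

```python
def logistic_orbit(x0, steps, r=4.0):
    """Iterate the logistic map x -> r*x*(1-x), a standard toy chaotic system."""
    orbit = [x0]
    for _ in range(steps):
        orbit.append(r * orbit[-1] * (1.0 - orbit[-1]))
    return orbit


def separation(x0, perturbation, steps):
    """Distance between two orbits whose starting points differ by `perturbation`."""
    reference = logistic_orbit(x0, steps)
    perturbed = logistic_orbit(x0 + perturbation, steps)
    return [abs(p - q) for p, q in zip(reference, perturbed)]


if __name__ == "__main__":
    # Illustrative values only: a 1e-10 error in the initial state.
    sep = separation(0.2, 1e-10, 100)
    for n in (0, 5, 20, 40, 60):
        print(f"step {n:3d}: separation = {sep[n]:.3e}")
```

Under these assumptions the separation stays tiny for the first few steps and then grows until the two runs are effectively unrelated. Better measurements only delay that moment; they do not remove it.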

bar. Too often these errors are ignored, wrongly assumed to be small, or incorrectly estimated. There is no excuse for this today.

gical if the conditions being modeled are known with exceeding precision. The problem is that such precision is virtually impossible for any circumstance. This is the core of the problem with simulating the aleatory uncertainty that so frequently remains untreated. It is almost completely ignored by a host of fundamental assumptions in modeling that are inherited by simulations. These assumptions are holding back real progress in a host of fields of major importance.

methods are understood only superficially, and this results in a superficial uncertainty estimate. Often the black box thinking extends to the tool used to get uncertainty too. We then get the result from a superposition of two black boxes. Not a lot of light gets shed on reality in the process. Numerical errors are ignored, or simply misdiagnosed. Black box users often simply do a mesh sensitivity study, and assume that small changes under mesh variation are indicative of convergence and small errors. They may or may not be such evidence. Without doing a more formal analysis this sort of conclusion is not justified. If the code and problem are not converging, the small changes may be indicative of very large numerical errors or even divergence and a complete lack of control.
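One common form of the "more formal analysis" is to compute an observed order of convergence from three meshes and use it in a Richardson-style error estimate. The sketch below assumes a constant refinement ratio and monotone convergence. The function names are hypothetical and the "results" are synthetic numbers generated from a made-up error law, not output from any real code.

```python
import math


def observed_order(f_coarse, f_medium, f_fine, ratio):
    """Observed convergence rate from three meshes refined by a constant ratio."""
    d_coarse = f_coarse - f_medium
    d_fine = f_medium - f_fine
    if d_coarse * d_fine <= 0.0:
        raise ValueError("differences vanish or change sign: no monotone convergence")
    return math.log(d_coarse / d_fine) / math.log(ratio)


def fine_mesh_error(f_medium, f_fine, ratio, order):
    """Richardson-style estimate of the error left in the fine-mesh result."""
    return abs(f_medium - f_fine) / (ratio**order - 1.0)


def synthetic_result(h, rate, coeff=0.05, exact=1.0):
    """Made-up 'simulation output' obeying exact + coeff * h**rate (illustration only)."""
    return exact + coeff * h**rate


if __name__ == "__main__":
    meshes = [0.04, 0.02, 0.01]  # coarse, medium, fine; refinement ratio 2
    for rate in (2.0, 0.2):
        coarse, medium, fine = (synthetic_result(h, rate) for h in meshes)
        p = observed_order(coarse, medium, fine, 2.0)
        err = fine_mesh_error(medium, fine, 2.0, p)
        change = abs(medium - fine)
        print(f"rate {rate}: observed order {p:.3f}, "
              f"last change {change:.2e}, estimated error {err:.2e}")
```

In the slowly converging case the change between the last two meshes is small, yet the estimated error is several times larger than that change. That is exactly the trap a bare mesh sensitivity study walks into.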

Moore’s law isn’t a law, but rather an empirical observation that has held sway for far longer than could have been imagined fifty years ago. In some way, shape or form, Moore’s law has provided a powerful narrative for the triumph of computer technology in our modern world. For a while it seemed almost magical in its gift of massive growth in computing power over the scant passage of time. Like all good things, it will come to an end, and soon if not already.

For those of us doing real practical work on computers this program is a disaster. Even doing the same things we do today will be harder and more expensive. It is likely that the practical work will get harder to complete and more difficult to be sure of. Real gains in throughput are also likely to be far less than the reported gains in performance attributed to the new computers. In sum the program will almost certainly be a massive waste of money. The plan is for most of the money to go to the hardware and the hardware vendors (should I call it corporate welfare?). All of this will be done to squeeze another 7 to 10 years of life out of Moore’s law even though the patient is metaphorically in a coma already.

If someone gives you some data and asks you to fit a function that “models” the data, many of you know the intuitive answer: “least squares”. This is the obvious, simple choice, and perhaps not surprisingly, not the best answer. How bad this choice may be depends on the situation. One way to do better is to recognize the situations where the solution via least squares may be problematic and where some of the data may have an undue influence on the results.

one. If the deviations are large or some of your data might be corrupt (i.e., outliers), the choice of least squares can be catastrophic. The corrupt data may have a completely overwhelming impact on the fit. There are a number of methods for dealing with outliers in least squares, and in my opinion none of them are good.

Fortunately there are existing methods that are free from these pathologies. For example, the least absolute deviation fit (the L1 generalization of the median) can deal with corrupt data easily. It naturally discounts outliers because of a different underlying model. Where least squares is the solution of a minimization problem in the energy or L2 norm, the least absolute deviation fit uses the L1 norm. The problem is that the fitting algorithm is inherently nonlinear, and generally not included in most software.
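As a rough illustration of the difference, the sketch below fits a straight line to made-up data with a single corrupt point, once with ordinary least squares and once in the L1 sense. The L1 fit is computed by iteratively reweighted least squares, which is my choice of algorithm for a compact example (linear programming is another standard route). All names and values here are hypothetical.

```python
def weighted_line_fit(x, y, w):
    """Closed-form weighted least-squares fit of y ~ a + b*x; returns (a, b)."""
    sw = sum(w)
    sx = sum(wi * xi for wi, xi in zip(w, x))
    sy = sum(wi * yi for wi, yi in zip(w, y))
    sxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    sxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    b = (sw * sxy - sx * sy) / (sw * sxx - sx * sx)
    a = (sy - b * sx) / sw
    return a, b


def least_squares_line(x, y):
    """Ordinary (L2) fit: every point gets the same weight."""
    return weighted_line_fit(x, y, [1.0] * len(x))


def least_absolute_line(x, y, iterations=50, eps=1e-8):
    """Approximate L1 fit by iteratively reweighted least squares.

    Each pass weights a point by 1/|residual|, so points far from the
    current line (likely outliers) count for less in the next pass.
    eps guards against division by zero for points already on the line.
    """
    a, b = least_squares_line(x, y)
    for _ in range(iterations):
        w = [1.0 / max(abs(yi - a - b * xi), eps) for xi, yi in zip(x, y)]
        a, b = weighted_line_fit(x, y, w)
    return a, b


if __name__ == "__main__":
    # Made-up data: points on y = 1 + 2x, with one corrupted value.
    x = [float(i) for i in range(10)]
    y = [1.0 + 2.0 * xi for xi in x]
    y[7] = 100.0
    print("L2 fit (intercept, slope):", least_squares_line(x, y))
    print("L1 fit (intercept, slope):", least_absolute_line(x, y))
```

The reweighting loop is the price of the nonlinearity mentioned above: each pass is still a linear solve, but the weights depend on the answer, so the solve has to be repeated.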

One of the problems is that least squares is applied in a virtually knee-jerk fashion. It is built into standard software such as Microsoft Excel and can be applied with almost no thought. If you have to write your own curve-fitting program, by far the simplest approach is to use least squares. It can often produce a linear system of equations to solve, where alternatives are invariably nonlinear. The key point is to realize that this convenience has a consequence. If your data reduction is important, it might be a good idea to think a bit more about what you ought to do.

A week ago I received bad news: the reviews for a paper were back. One might think that getting a review back would be good, but it rarely is. These reviews are too often a horrible, soul-crushing experience. In this case I had reports from two reviewers, and one of them delivered the ego thrashing I’ve come to fear.

In total the two reviews were generally consistent on the details of the paper and on the sorts of suggestions for bringing the paper into the condition needed to allow publication. The difference was the tone of the reviews. One of the reviews was completely constructive and detailed in its critique. Each and every critique was offered in a positive light even when the error was pure carelessness.

it could have been much easier. There is nothing wrong with being critical, but the way it’s done matters a lot.