Better never means better for everyone… It always means worse, for some.

― Margaret Atwood

A couple of days ago I went through the HLL flux function (a Riemann solver) and a small improvement to it (https://williamjrider.wordpress.com/2014/11/24/the-power-of-simple-questions-the-hll-flux/). Today I’ll report on another improvement that doesn’t appear to improve much at all, but it’s worth considering. This is work in progress, and right now it’s not yielding anything too exciting.

Never confuse movement with action.

― Ernest Hemingway

Again, I’ll focus on the HLL flux and attack the issue of the final flux function’s sign preservation. The first thing to address is the propriety of the entire idea. There is a nice (short) paper on a closely related topic [Linde]. The bottom line is that the initial data may have a certain sign convention, and the dynamics induced in the Riemann problem can change that. So before deciding how to apply the Riemann solver, and whether that application is appropriate, one needs to realize the impact of the internal structure.

The problem I noted is that under some conditions the dissipation in the flux can change the sign of the computed flux (when the velocity is much less than the sound speed, and the change in the equations’ variables is large enough). If you look at the fluxes in the mass or energy equation, they are the product of the velocity multiplying a positive definite quantity. The mass flux is the velocity multiplying the density, $\rho u$, and the energy flux is $u(\rho E + p)$, where $\rho$, $E$ and $p$ are the density, total energy and the pressure. If the velocity always has a sign in the Riemann solution, the resulting flux inherits that sign convention.

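The HLL flux is

$$F_{HLL} = \frac{S_R F_L - S_L F_R + S_L S_R \left(U_R - U_L\right)}{S_R - S_L},$$

where $S_L \le 0$ and $S_R \ge 0$ are the bounding wave speeds, $F_L$ and $F_R$ are the fluxes of the left and right states, and $U_L$ and $U_R$ are the conserved variables. If $|u| \ll c$ (with $c$ the sound speed) we can have problems. This is particularly true if $U_R - U_L$ is large.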
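A minimal Python sketch of the HLL flux for the 1D Euler equations shows the sign problem on a slowly moving contact. The ideal gas with $\gamma = 1.4$, the simple min/max wave speed bounds, and all function names are assumptions made for illustration; they are not taken from the post.

```python
import numpy as np

GAMMA = 1.4  # assumed ideal-gas ratio of specific heats


def primitive_to_conserved(rho, u, p, gamma=GAMMA):
    """Return U = (rho, rho*u, rho*E), with E the specific total energy."""
    rho_e_total = p / (gamma - 1.0) + 0.5 * rho * u * u
    return np.array([rho, rho * u, rho_e_total])


def euler_flux(rho, u, p, gamma=GAMMA):
    """Physical flux F(U) = (rho*u, rho*u^2 + p, u*(rho*E + p))."""
    rho_e_total = p / (gamma - 1.0) + 0.5 * rho * u * u
    return np.array([rho * u, rho * u * u + p, u * (rho_e_total + p)])


def sound_speed(rho, p, gamma=GAMMA):
    """Ideal-gas sound speed c = sqrt(gamma * p / rho)."""
    return np.sqrt(gamma * p / rho)


def hll_flux(left, right, gamma=GAMMA):
    """HLL flux for states given as (rho, u, p) tuples.

    The wave speed bounds are the min/max of u -/+ c over both states,
    clipped so that S_L <= 0 <= S_R (an assumed, common choice).
    """
    rho_l, u_l, p_l = left
    rho_r, u_r, p_r = right
    c_l = sound_speed(rho_l, p_l, gamma)
    c_r = sound_speed(rho_r, p_r, gamma)
    s_l = min(0.0, u_l - c_l, u_r - c_r)
    s_r = max(0.0, u_l + c_l, u_r + c_r)
    f_l = euler_flux(rho_l, u_l, p_l, gamma)
    f_r = euler_flux(rho_r, u_r, p_r, gamma)
    # Jump in the conserved variables, U_R - U_L, which scales the dissipation.
    d_u = (primitive_to_conserved(rho_r, u_r, p_r, gamma)
           - primitive_to_conserved(rho_l, u_l, p_l, gamma))
    return (s_r * f_l - s_l * f_r + s_l * s_r * d_u) / (s_r - s_l)


if __name__ == "__main__":
    # Slow contact: u > 0 on both sides, equal pressure, density jump of 2.
    flux = hll_flux((1.0, 0.01, 1.0), (2.0, 0.01, 1.0))
    print("HLL mass flux:", flux[0])
```

For this contact the velocity is positive everywhere, so the exact mass flux is positive, yet the HLL mass flux comes out negative because the dissipation term proportional to the density jump overwhelms the small physical flux.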

Without deviation from the norm, progress is not possible.

― Frank Zappa

To attack the issue of whether the sign change is a problem and might be unphysical, I look at the dynamics within the Riemann solution. This can be easily computed using the linearized solution to the Lagrangian Riemann problem,

$$u^* = \frac{W_L u_L + W_R u_R - (p_R - p_L)}{W_L + W_R},$$

where $W_L = \rho_L c_L$ and $W_R = \rho_R c_R$ are the Lagrangian wave speeds. If $u_L$, $u^*$ and $u_R$ all have the same sign, the flux will have that sign. Given this background I test the HLL flux for compatibility with the established sign convention. If the sign convention is violated I do one of a couple of things: set $F = 0$, or $F = F_L$ if $u^* > 0$ and $F = F_R$ if $u^* < 0$. Then I test it.
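The sign test can be sketched in a few lines of Python. This is one possible reading of the procedure, not the author's code: it assumes an ideal gas with $\gamma = 1.4$, takes the Lagrangian wave speeds to be the acoustic impedances $\rho c$, and falls back to the upwind flux when the mass or energy flux has the wrong sign. All function names are hypothetical.

```python
import numpy as np

GAMMA = 1.4  # assumed ideal-gas ratio of specific heats


def linearized_star_velocity(left, right, gamma=GAMMA):
    """Contact velocity u* from the linearized (acoustic) Riemann solution.

    States are (rho, u, p) tuples; W = rho * c is the Lagrangian wave speed.
    """
    rho_l, u_l, p_l = left
    rho_r, u_r, p_r = right
    w_l = rho_l * np.sqrt(gamma * p_l / rho_l)
    w_r = rho_r * np.sqrt(gamma * p_r / rho_r)
    return (w_l * u_l + w_r * u_r - (p_r - p_l)) / (w_l + w_r)


def common_sign(left, right, gamma=GAMMA):
    """Return +1 or -1 if u_L, u* and u_R share a strict sign, else 0."""
    u_star = linearized_star_velocity(left, right, gamma)
    speeds = np.array([left[1], u_star, right[1]])
    if np.all(speeds > 0.0):
        return 1
    if np.all(speeds < 0.0):
        return -1
    return 0


def enforce_flux_sign(flux, flux_left, flux_right, sign):
    """Replace a flux that violates the sign convention by the upwind flux.

    Only the mass and energy components (indices 0 and 2) are checked,
    since the momentum flux contains the pressure and has no fixed sign.
    """
    if sign == 0:
        return flux  # no sign convention established, keep the flux as is
    if np.all(sign * flux[[0, 2]] >= 0.0):
        return flux  # already compatible with the convention
    return flux_left if sign > 0 else flux_right
```

Setting the flux to the upwind value is the same as setting $S_L = 0$ (for positive velocities) or $S_R = 0$ (for negative velocities) in the HLL formula, so the fix simply switches off the dissipation where it would reverse the flux.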

Restlessness is discontent — and discontent is the first necessity of progress. Show me a thoroughly satisfied man — and I will show you a failure.

― Thomas A. Edison

The issue definitely shows up a lot in computations. I set up a challenging problem where the density is constant in the initial data, and there is a million-to-one pressure jump. This produces a shock and contact discontinuity moving to the right. The density jump is nearly six because the shock is so strong ($\gamma = 1.4$). HLL tends to smear the contact very strongly, and this is where the flux sign convention is violated.
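The factor of six follows from the Rankine-Hugoniot relations for an ideal gas. As a short worked equation, assuming $\gamma = 1.4$ (the value that the factor of six implies) and writing $\rho_1, p_1$ for the pre-shock state and $\rho_2, p_2$ for the post-shock state (symbols introduced here, not in the original):

$$\frac{\rho_2}{\rho_1} = \frac{(\gamma+1)\,p_2/p_1 + (\gamma-1)}{(\gamma-1)\,p_2/p_1 + (\gamma+1)} \;\longrightarrow\; \frac{\gamma+1}{\gamma-1} = \frac{2.4}{0.4} = 6 \quad \text{as } p_2/p_1 \to \infty.$$

A very strong shock therefore compresses the gas by almost, but never quite, a factor of six.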

We can see that an exact Riemann solver gives a sharp contact (the structure on the left side of the density peak). We also show the energy profile, which is also impacted by the idiosyncrasy discussed today.

With HLL we get a smeared out contact, especially to the left. None of the changes to HLL flux discussed above really make much of a difference either.

But knowing that things could be worse should not stop us from trying to make them better.

― Sheryl Sandberg

To do better is better than to be perfect.

― Toba Beta

[Linde] Linde, Timur, and Philip Roe. “On a mistaken notion of “proper upwinding”.” Journal of Computational Physics 142, no. 2 (1998): 611-614.

For supercomputing to provide the value it promises for simulating phenomena, the methods in the codes must be convergent. The metric of weak scaling is utterly predicated on this being true. Despite its intrinsic importance to the actual relevance of high performance computing, relatively little effort has been applied to making sure convergence is being achieved by codes. As such, the work on supercomputing simply assumes that it happens, but does little to assure it. Actual convergence is largely an afterthought and receives little attention or work.

Thus the necessary and sufficient conditions are basically ignored. This is one of the simplest examples of the lack of balance I experience every day. In modern computational science the belief that faster supercomputers are better and valuable has become closer to an article of religious faith than a well-crafted scientific endeavor. The sort of balanced, well-rounded efforts that brought scientific computing to maturity have been sacrificed for an orgy of self-importance. China has the world’s fastest computer and reflexively we think there is a problem.

While necessary, applied math isn’t sufficient. Sufficiency is achieved when the elements are applied together with science. The science of computing cannot remain fixed because computing is changing the physical scales we can access, and the fundamental nature of the questions we ask. The codes of twenty years ago can’t simply be used in the same way. It is much more than rewriting them or just refining a mesh. The physics in the codes needs to change to reflect the differences.

A chief culprit is the combination of the industry and its government partners, who have remained tied to the same stale model for two or three decades. At the core the cost has been intellectual vitality. The implicit assumption of convergence and the lack of deeper intellectual investment in new ideas have conspired to strand the community in the past. The annual Supercomputing conference is a monument to this self-imposed mediocrity. It’s a trade show through and through, and in terms of technical content a truly terrible meeting (I remember pissing the Livermore CTO off when pointing this out).

One of the big issues is the proper role of math in computational projects. The more applied the project gets, the less capacity math has to impact it. Things simply shouldn’t be this way. Math should always be able to complement a project.

A proof that is explanatory gives conditions that describe the results achieved in computation. Convergence rates observed in computations are often well described by mathematical theory. When a code gives results of a certain convergence rate, a mathematical proof that explains why is welcome and beneficial. It is even better if it gives conditions where things break down, or get better. The key is we see something in actual computations, and math provides a structured, logical and defensible explanation of what we see.

Too often mathematics is done that simply assumes that others are “smart” enough to squeeze utility from the work. A darker interpretation of this attitude is that these are people who don’t care whether it is useful, or used. I can’t tolerate that attitude. This isn’t to say that math without application shouldn’t be done, but rather it shouldn’t seek support from computational science.

None of these priorities can be ignored. For example if the efficiency becomes too poor, the code won’t be used because time is money. A code that is too inaccurate won’t be used no matter how robust it is (these go together, with accurate and robust being a sort of “holy grail”).

Robust. A robust code runs to completion. Robustness in its most refined and crudest sense is stability. The refined sense of robustness is the numerical stability that is so keenly important, but it is so much more. It gives an answer come hell or high water even if that answer is complete crap. Nothing upsets your users more than no answer; a bad answer is better than none at all. Making a code robust is hard work and difficult especially if you have morals and standards. It is an imperative.

Efficiency. The code runs fast and uses the computers well. This is always hard to do: a beautiful piece of code that clearly describes an algorithm turns into a giant plate of spaghetti, but runs like the wind. To get performance you end up throwing out that wonderful inheritance hierarchy you were so proud of. To get performance you get rid of all those options that you put into the code. This requirement is also in conflict with everything else. It is also the focus of the funding agencies. Almost no one is thinking productively about how all of this (doesn’t) fit together. We just assume that faster supercomputers are awesome and better.

It isn’t a secret that the United States has engaged in a veritable orgy of classification since 9/11. What is less well known is the massive implied classification through other data categories such as “official use only (OUO)”. This designation is itself largely unregulated and as such is quite prone to abuse.

Again something at work has inspired me to write. It’s a persistent theme among authors, artists and scientists regarding the concept of the fresh start (blank page, empty canvas, original idea). I think it’s worth considering how truly “fresh” these really are. This idea came up during a technical planning meeting where one of the participants viewed this new project as being offered a blank page.

Once we stepped over that threshold, conflict erupted over the choices available with little conclusion. A large part of the issue was the axioms each person was working with. Across the board we all took a different set of decisions to be axiomatic. At some time in the past these “axioms” were choices, and became axiomatic through success. Someone’s past success becomes the model for future success, and the choices that led to that success become unstated decisions we are generally completely unaware of. These form the foundation of future work and often become culturally iconic in nature.

Take the basic framework for discretization as an operative example: at Sandia this is the finite element method; at Los Alamos it is finite volumes. At Sandia we talk “elements”, at Los Alamos it is “cells”. From there we continued further down the proverbial rabbit hole to discuss what sort of elements (tets or hexes). Sandia is a hex shop, causing all sorts of headaches, but enabling other things, or simply the way a difficult problem was tackled. Tets would improve some things, but produce other problems. For some, the decisions are flexible; for others there isn’t a choice, and the use of a certain type of element is virtually axiomatic. None of these things allows a blank slate; all of them are deeply informed and biased toward specific decisions made, in some cases, decades ago.

The other day I risked a lot by comparing the choices we’ve collectively made in the past to “original sin”. In other words, what is computing’s original sin? Of course this is a dangerous path to tread, but the concept is important. We don’t have a blank slate; our choices are shaped, if not made, by decisions of the past. We are living, if not suffering, due to decisions made years or decades ago. This is true in computing as much as any other area.

In case you’re wondering about my writing habit and blog, I can explain a bit more. If you aren’t, stop reading. In the sense of authorship I force myself to face the blank page every day as an exercise in self-improvement. I read Charles Duhigg’s book “The Power of Habit” and realized that I needed better habits. I thought about what would make me better and set about building them up. I have a list of things to do every day: “write”, “exercise”, “walk”, “meditate”, “read” and so on.

The blog is a concrete way of putting the writing to work. Usually, I have an idea the night before, and draft most of the thoughts during my morning dog walk (dogs make good motivators for walks). I still need to craft (hopefully) coherent words and sentences forming the theme. The blog allows me to publish the writing with a minimal effort, and forces me to take editing a bit more seriously. The whole thing is an effort to improve my writing both in style and ease of production.

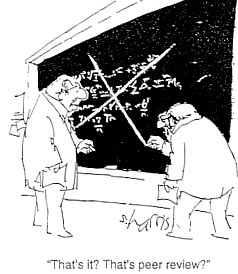

For some reason I’m having more “WTF” moments at work lately. Perhaps something is up, or I’m just paying attention to things. Yesterday we had a discussion about reviews, and people’s intense desire to avoid them. The topic came up because there have been numerous efforts to encourage and amplify technical review recently. There are a couple of reasons for this, mostly positive, but a tinge of negativity lies just below the surface. It might be useful to peel back the layers a bit and look at the dark underbelly.

I touched on this topic a couple of weeks ago. The biggest problem with peer reviews is “bullshit reviews”. These are reviews that are mandated by organizations for the organization. These always get graded and the grades have consequences. The review teams know this, thus the reviews are always on a curve, a very generous curve. Any and all criticism is completely muted and soft because of the repercussions. Any harsh critique, even if warranted, puts the reviewers (and their compensation for the review) at risk. As a result of this dynamic, these reviews are quite close to a complete waste of time.

Because of the risk associated with the entire process, the organizations approach the review in an overly risk-averse manner, and control the whole thing. It ends up being all spin, and little content. Together with the dynamic created with the reviewers, the whole thing spirals into a wasteful mess that does no one any good. Even worse, the whole process has a corrosive impact on the perception of reviews. They end up having no up side; it is all down side and nothing useful comes out of them. All of this even though the risk from the reviews has been removed through a thoroughly incestuous process.

An element in the overall dynamic is the societal image of external review as a sideshow meant to embarrass. The congressional hearing is emblematic of the worst sort of review. The whole point is grandstanding and/or destroying those being reviewed. Given this societal model, it is no wonder that reviews have a bad name. No one likes to be invited to their own execution.

… environment we find ourselves. First of all, people should be trained or educated in conducting, accepting and responding to reviews. Despite its importance to the scientific process, we are never trained how to conduct, accept or respond to a review (response happens a bit in a typical graduate education). Today, it is a purely experiential process. Next, we should stop including the scoring of reviews in any organizational “score”. Instead the quality of the review, including the production of hard-hitting critique, should be expected as a normal part of organizational functioning.

I’m a progressive. In almost every way that I can imagine, I favor progress over the status quo. This is true for science, music, art, and literature, among other things. The one place where I tend to favor the status quo is in the work and personal relationships that form the foundation for my progressive attitudes. These foundations are formed by several “social contracts” that serve to define the roles and expectations. Without this foundation, the progress I so cherish is threatened because people naturally retreat to conservatism for stability.

What I’ve come to realize is that the shortsighted, short-term thinking dominating our governance is demolishing many of these social contracts. Our social contracts are the basis of trust and faith in our institutions whether they are the rule of government, or the place we work. In each case we are left with a severe corrosion of the intrinsic faith once granted these cornerstones of public life. The cost is enormous, and may have created a self-perpetuating cycle of loss of trust precipitating more acts that undermine trust.

What gets lost? Almost everything. Progress, quality, security, you name it. Our short-term balance sheet looks better, but our long-term prospects look dismal. The scary thing is that these developments help drive conservative thinking, which in turn drives these developments. As much as anything this could explain our Nation’s 50-year march to the right. We have taken the virtuous cycle we were granted, and developed a vicious cycle. It is a cycle that we need to get out of before it crushes our future.

We got here through overconfidence and loss of trust; can we get out of it by combining realism with trust in each other? Right now, the signs are particularly bad, with nothing looking like realism or trust being part of the current public discourse on anything.