tl;dr

Since the end of World War 2, the United States has led the World in Science and Technology. Today, that leadership is gone. The decline has been decades in the making. That decline has turned into a free fall. In my own work, I have witnessed our almost willful abdication of the throne. As I discovered, this trend was seen across many disciplines. Through a combination of arrogance, mismanagement, and outright incompetence, American supremacy decayed. All of this revolves around a loss of societal focus coupled with waning trust. COVID-19 proved to be a near-death blow to societal support. Under Trump, these trends have combined into a National suicide pact on scientific superiority. The lead had already disappeared; now we are in retreat and absolute surrender.

“Anti-intellectualism has been a constant thread winding its way through our political and cultural life, nurtured by the false notion that democracy means that ‘my ignorance is just as good as your knowledge.’” ― Isaac Asimov

Who was leading a year ago?

China was already ahead of the USA before Trump took office. Losing the lead was decades in the making. It is well documented by the Australian Strategic Policy Institute in a recent report (https://www.aspi.org.au/report/critical-technology-tracker/). “Who is Leading in Critical Technology” shows that China leads in 37 of 44 important areas. This report confirmed signs I’d been seeing in person for years. China’s ascent was rapid and absolutely stunning to watch. In my own area, they went from laughable to world-class in a little more than a decade. When I spoke to scientists from completely different areas (one area of chemistry specifically), the story was the same.

Why did I put this section in this frame? A year ago, the scientific community in the USA was far healthier than it is today. The Trump administration has launched an all-out assault on Science. The core of American science is being slaughtered in plain sight. The top universities in the country are under assault. The key agencies for research funding are being butchered as NIH, NSF, and NASA budgets are being cut by huge amounts. Where the money is still going, mostly the defense sector, the science being produced is highly suspect. That sector already has huge problems.

The upshot is that American science and technology were in poor shape a year ago. The past year has seen the Nation decide to mercy kill it. If we were out of the lead a year ago, we have receded even further. Worse yet, we have no plan or way back. The slow, steady decline of science has turned into a free fall. This is a massive crisis, and American National Security and economic prosperity are in peril. This development puts American lives at risk. It assures that Americans of the future will be poorer and live shorter lives. The loss of scientific supremacy was incompetent, but the current approach is criminal negligence. Rather than fix the underlying problems, the current trajectory is to dig the hole even deeper.

“America says it loves science, but it sure as hell doesn’t want to pay for it.” ― Hope Jahren

What Is Killing Science?

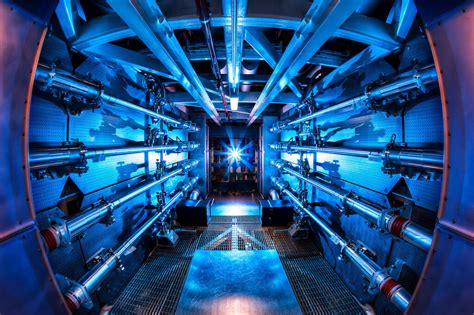

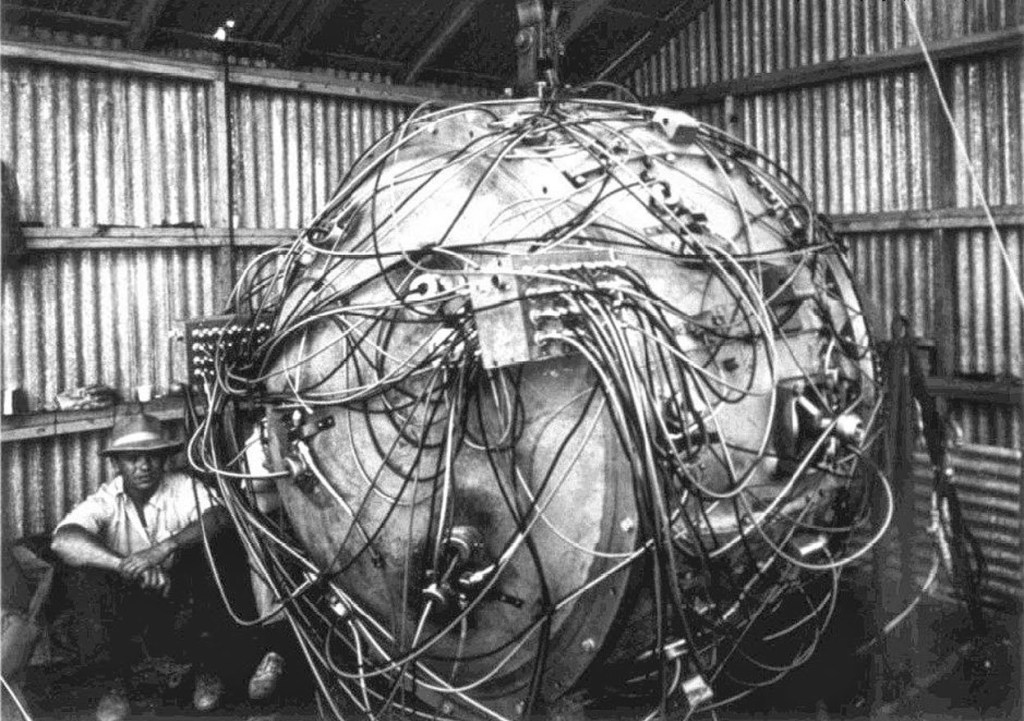

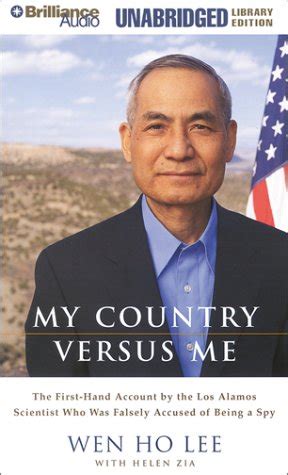

If I look back at my professional life, which spans nearly 40 years, the decline is obvious. It became obvious shortly after I arrived at Los Alamos in 1989. At first, Los Alamos was a godsend, and I felt magnificent. It was a huge upgrade from my University (third-tier New Mexico). Los Alamos would be an upgrade from almost any university, save a select few (Harvard, MIT, Caltech, …). That said, the signs of decline were almost immediately evident. Los Alamos had been in decline since roughly 1980, if not earlier.

As any keen observer of history knows, 1980 stands out with the election of Reagan and the beginning of an assault against the government. The 1970s were more likely the origin of the decline, with a host of ills including Watergate, the end of the Vietnam War, Love Canal, Three Mile Island, … We felt a collective withdrawal from faith and trust in the Nation represented by the Government. Reagan and the GOP took this into a full-on attack. The trust and confidence in science were part of the decline, as some constraints were placed on science.

Part of the damage Reagan produced came from the ascendancy of the “maximizing shareholder value” philosophy. This became central to American governance, whether in the public or private sector. The use of business principles based on this scarcity approach became how the government worked, too. Thus, the business approach driving massive inequality was applied to science and technology. The other side effect of the greedy business philosophy was the decimation of industrial science. Say goodbye to IBM and AT&T labs, and set the stage for the debacle of Boeing, seen more recently. It is a philosophy that breeds incompetence and fuck-ups. We see the rank and file worker or scientist devalued. Managers are now the valuable ones. Gone are pensions and the value of technical experts.

“The dumbing down of America is most evident in the slow decay of substantive content in the enormously influential media, the 30 second sound bites (now down to 10 seconds or less), lowest common denominator programming, credulous presentations on pseudoscience and superstition, but especially a kind of celebration of ignorance” ― Carl Sagan

This was reflected in Lab leadership and governance, which declined. Reagan also took on the Soviets, and this shielded Los Alamos to some degree. The Star Wars funding also blunted the damage. When the Cold War ended in 1989, marked by the Berlin Wall coming down, the real decline stepped into overdrive. Gradually, all funding support for the defense against Soviet aggression was lost. It was also far less simple than that, as the Nation’s lack of trust also took root. Funding started to come with strings attached and micromanagement by Congress.

This micromanagement has only become worse and has become a slow strangulation of science. The management is being done by people who have little to no business telling the Labs what to do. This is complemented by a host of other dictums in safety and security. Each of these other requirements takes its own pound of flesh. None of them yields any benefit for the Lab’s mission. All of them detract. Each one is a sign of the lack of trust for the Lab. Any minor screwup or failure breeds another useless bit of bureaucracy, training, or overhead. The result is an appalling cost for the Lab’s work and a diminishing effectiveness. Meanwhile, nothing is done to push in the opposite direction.

The consequence is a decline in science. Los Alamos and the other NNSA labs are bastions of competence and accomplishment compared to much of the rest of science. NASA and the Defense Labs are far worse and have taken a bigger fall from the heights. Despite vast sums of money, Defense Labs are terrible. They rely on crap technology from NNSA labs and can’t produce anything better. Universities are not immune either. We see less accomplishment and increased costs everywhere. The result is American science and technology losing its lead internationally. This retreat was steady and gradual until now. This year, we handed the crown to China with a virtual abdication.

“But you see, a rich country like America can perhaps afford to be stupid.” ― Barack Obama

Who is leading now?

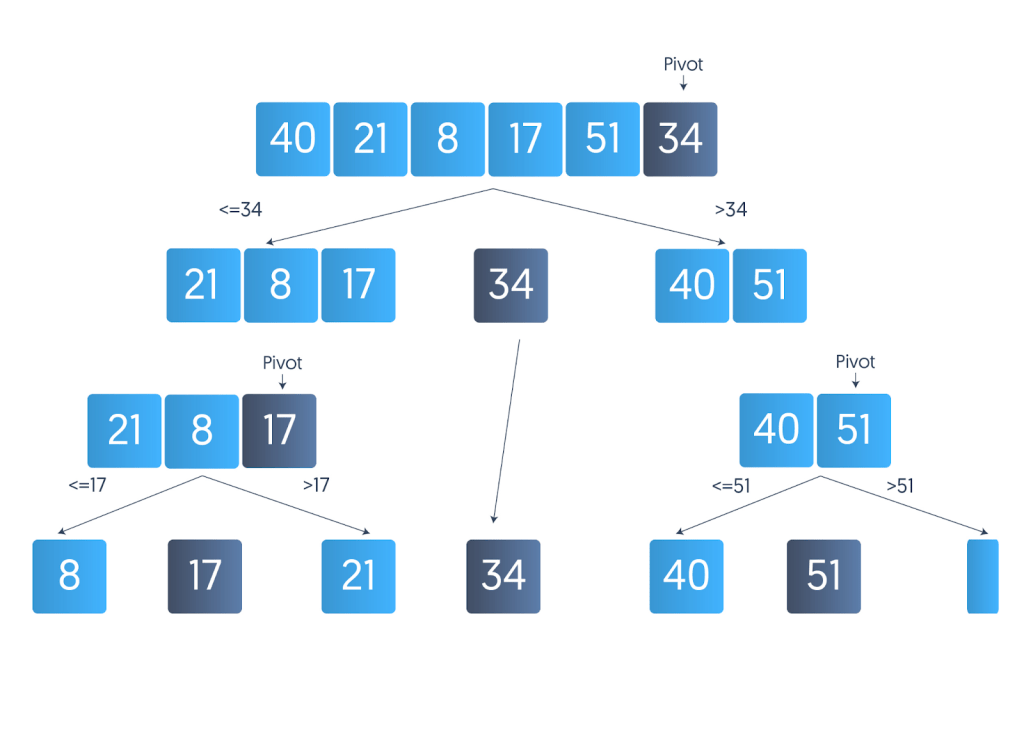

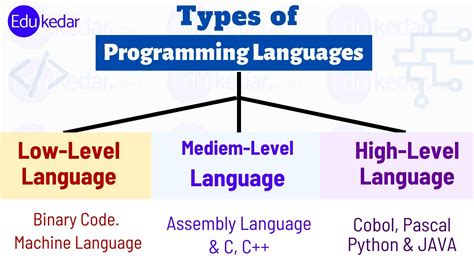

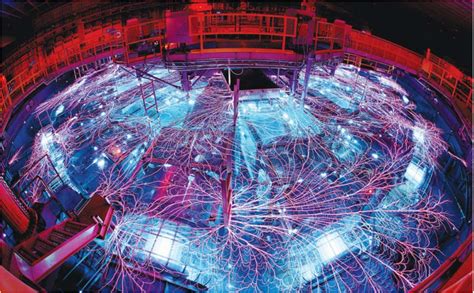

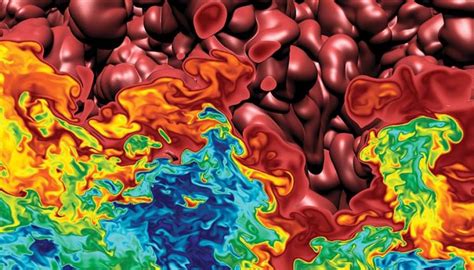

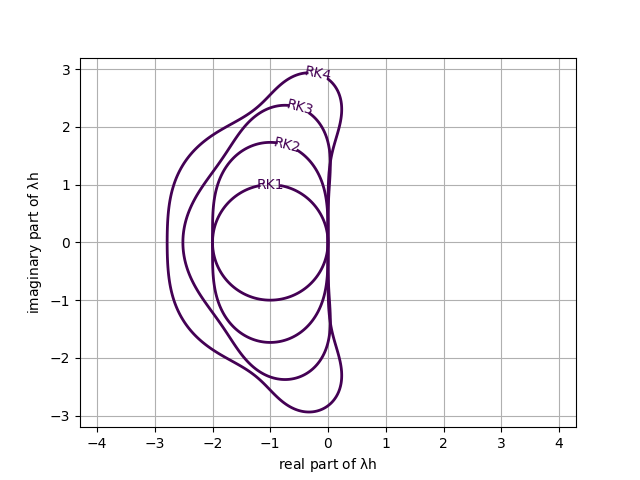

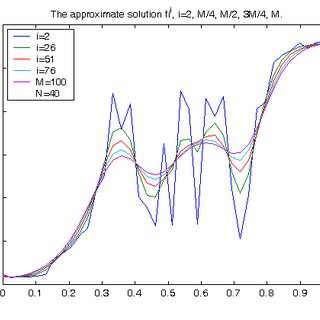

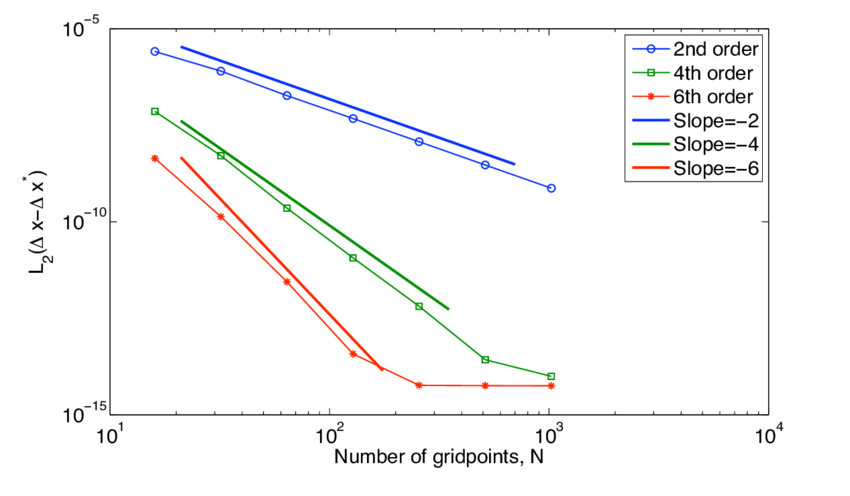

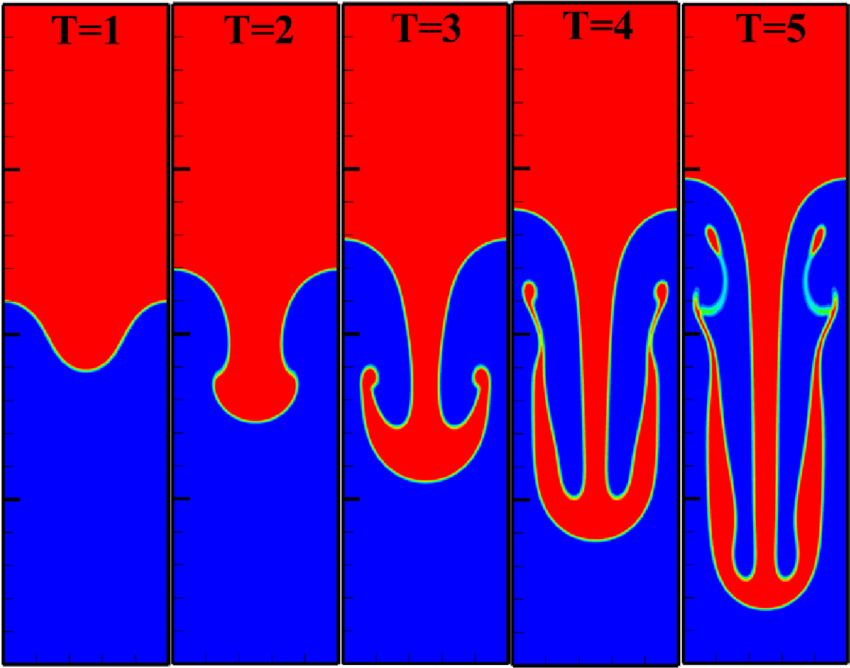

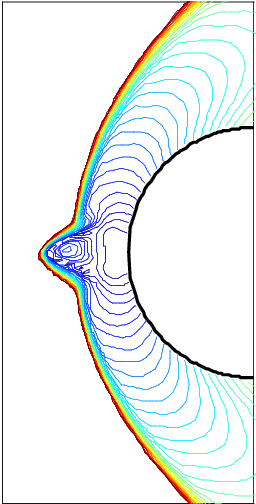

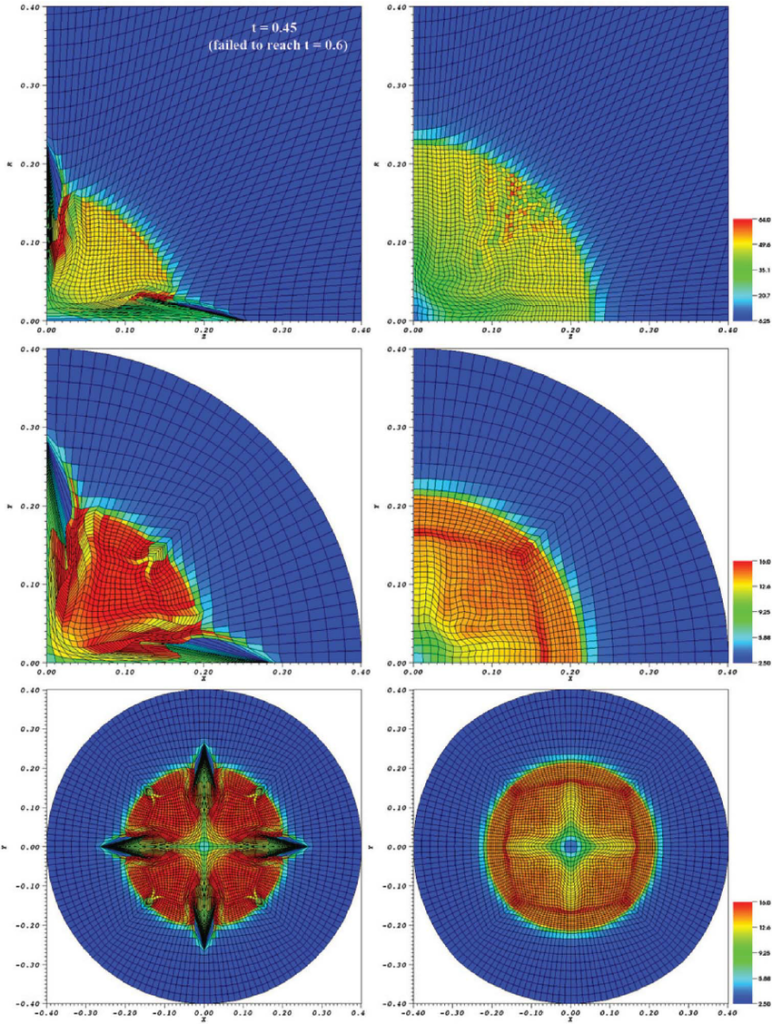

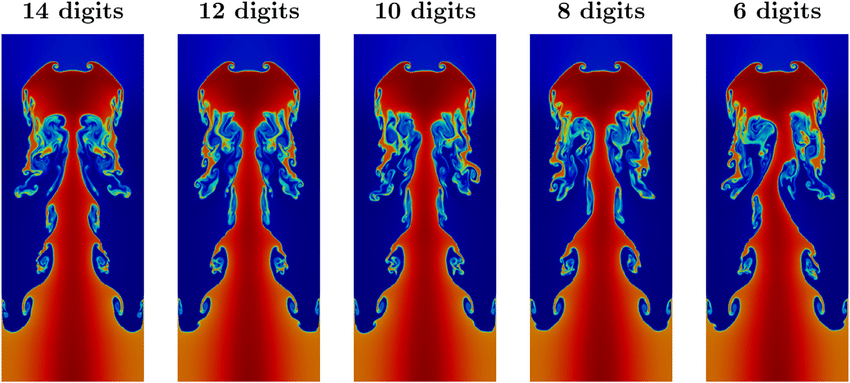

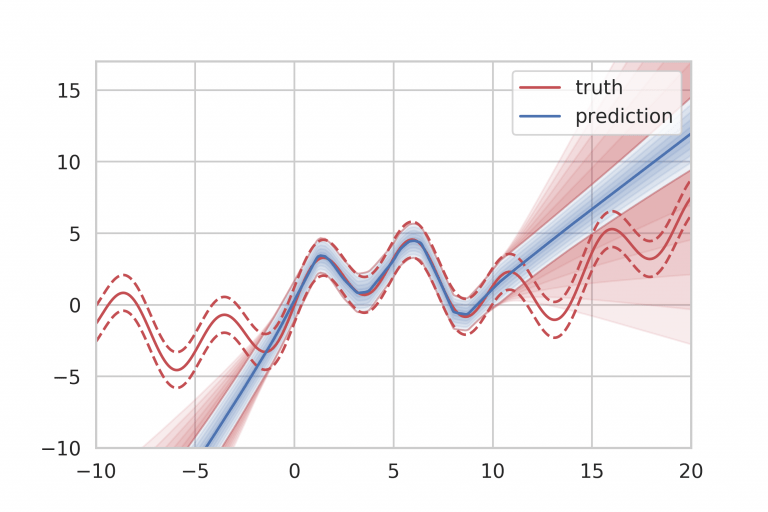

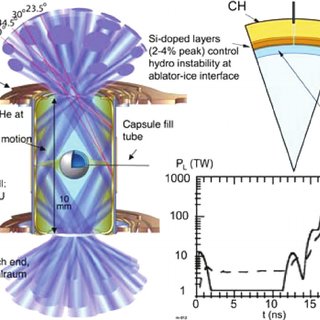

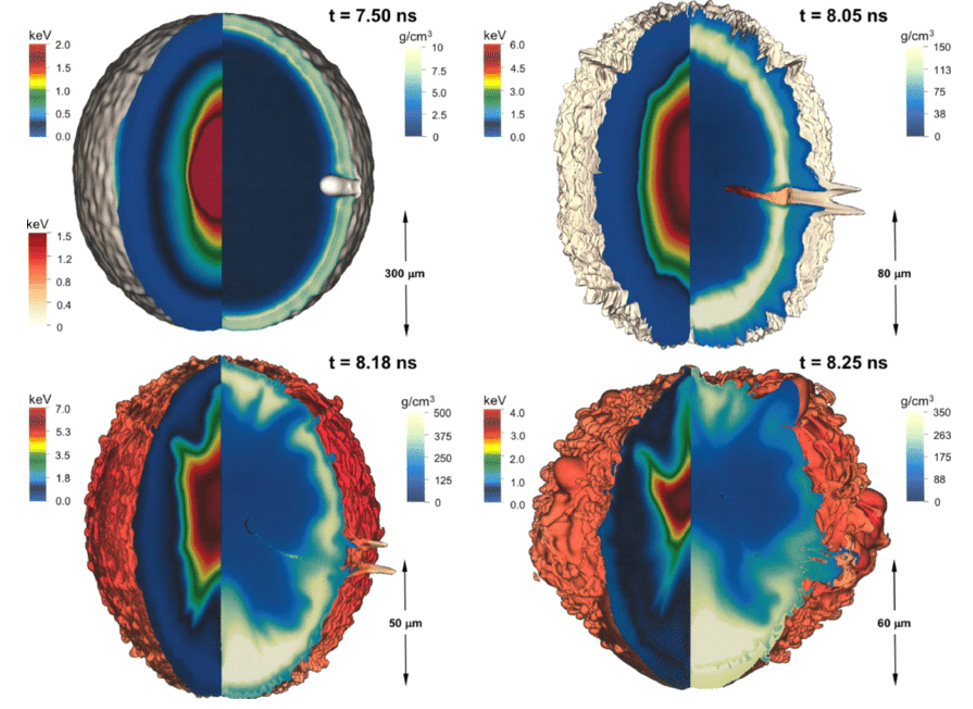

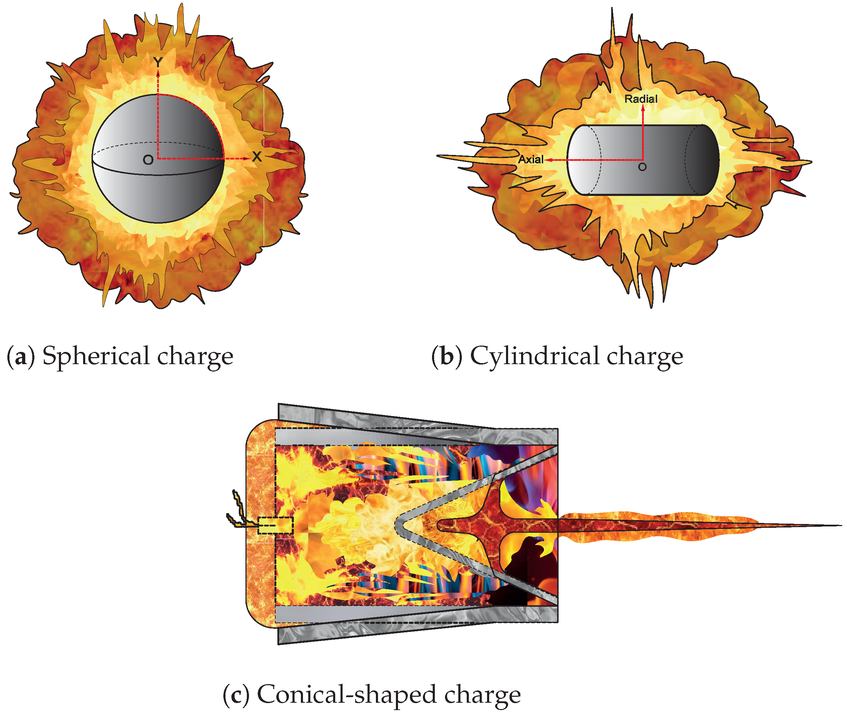

As noted above, China now leads, and the USA may fall behind Europe as well once the Trump Administration’s carnage takes hold. I watched this play out in my area of expertise, Shock Physics. I am an expert in solving the equations of fluid dynamics, especially with shock waves. It is a key and essential science needed in defense science and nuclear weapons. I have always paid attention to the work around the world. Europeans have always been very good. If I go back 15-20 years, the Chinese work ranged from modestly competent to laughable. Their papers weren’t very good at all. Over a decade, this changed. After 2015, the shift seemed to happen all at once. Their work was great. It was as good as or better than anything in the West.

A bunch of us from the Western nuclear weapons labs attended a meeting in 2018. It was ECCOMAS in Glasgow, Scotland. We saw the impressive Chinese work in person. We were all in agreement about how impressive it was. We saw talk after talk of world-class work, including efforts that exceeded what was going on at our Labs. Soon after, I had the opportunity to engage our federal program manager. I felt like it was essential to point this out to them. The response was utter and complete indifference. Basically, they could not give a single fuck about the newfound Chinese lead in shock physics. So the USA is fucked.

Let’s talk a bit about just how fucked we are. There is a shock code that is used broadly across the American National security establishment. It comes from an NNSA lab, and is used by another Lab, but most extensively by DoD. It is this DoD connection that illustrates vividly how fucked we are. Let me remind you that the DoD budget is now over a trillion dollars. Yet for this vast amount of money and copious funding for decades, the DoD can’t make a shock code worth a shit. This code they all use is an abomination. This abomination is better than anything they could make, which is nothing. It is easy to use, has lots of models, and runs fast on computers (although that trait is threatened, given that it is a Fortran code). Worse yet, the code was written when I was graduating from high school (class of ’82! Go Eagles!). It includes 1982 technology and none of the vast improvements since. To me, this is unacceptable, but to the USA, this is just what we do. So we are fucked.

This was not a sudden event. It was years in the making. On the one hand, you had mismanagement, poor investment, and different priorities in the USA. This was countered by focused support and radical progress in China. The USA simply stopped striving and allowed the tools to dull. We focused on big computers instead of a balance of computers with codes, methods, and mathematics. We quit doing the things that brought us the lead in the first place. The Chinese did them. The USA simply surrendered, not intentionally, but by lack of care mixed with arrogance. We lost a key area of defense science that we invented. This was done by the same indifference and lack of giving fucks I encountered.

“Scientists and inventors of the USA (especially in the so-called “blue state” that voted overwhelmingly against Trump) have to think long and hard whether they want to continue research that will help their government remain the world’s superpower. All the scientists who worked in and for Germany in the 1930s lived to regret that they directly helped a sociopath like Hitler harm millions of people. Let us not repeat the same mistakes over and over again.” ― Piero Scaruffi

As other professionals have told me, this is not limited to shock physics. I talked to a distinguished Oak Ridge Chemist at a cocktail party. He told me the same story in his area: marginal competence followed by a rapid ascent to superiority. The Australian study noted at the beginning highlights this happening in area after area. These stories are not isolated; the problems are systemic. This was the result of two forces working in concert: American decline and incompetence, together with Chinese focus, investment, and endeavor. Our decline is the product of decades of malpractice. Current policy is not fixing the problems, but adding malice and outright negligence to them.

What is at stake?

“Science and technology are the engines of prosperity. Of course, one is free to ignore science and technology, but only at your peril. The world does not stand still because you are reading a religious text. If you do not master the latest in science and technology, then your competitors will.” ― Michio Kaku

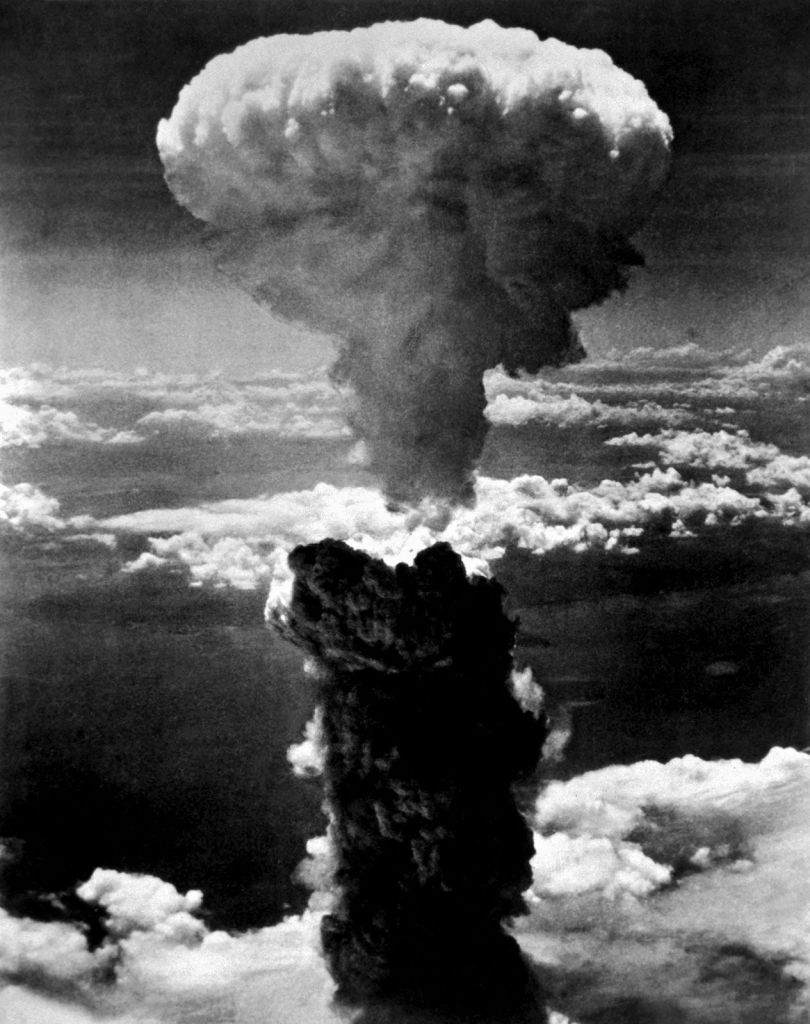

The stakes for the USA and the World are huge. For most Americans, the most obvious impact is national security. This feels like the highest-leverage point for pushing back. Ever since World War 2, scientific supremacy has been essential for National defense. Scientific power with nuclear weapons replaced industrial capacity for effective war-making. As drones, robots, and AI become more central, this becomes even more compelling. We already have nuclear weapons and their science as a huge leverage point for science and technology. It is precisely the moment in history when the danger feels maximized. In this moment, American supremacy is disappearing. Future Americans will be less safe and less free.

“The progress and perfection of mathematics are linked closely with the prosperity of the state.” ― Napoleon Bonaparte

The signs are more troubling than most realize. Take our industrial base in the form of aerospace. Boeing used to be the apex of engineering in the USA. Greed and short-term focus have annihilated the company’s prowess, seeding a host of disasters. This also hints at another loss from incompetent science: our prosperity. Defense science has been the root of much of our economy today. Just take the internet, a product of a DARPA project amid the Cold War. Now it has become the central backbone of the international economy. The future “internet” and tomorrow’s economy are much more likely to come from elsewhere. Future Americans will be poorer for this. The damning fact is that this is almost entirely self-inflicted.

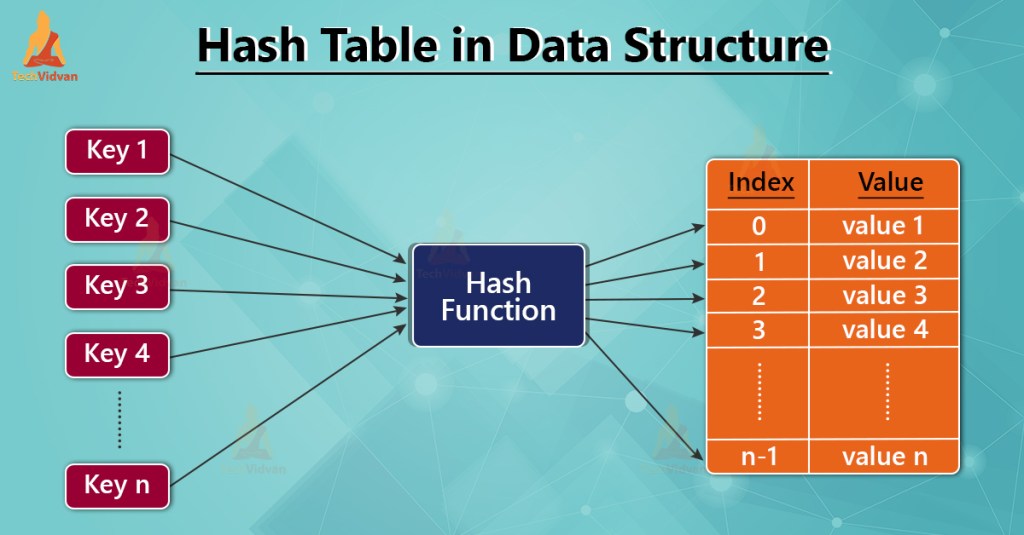

We are amid vast and over-the-top AI hype. Every fucking Lab is going ape shit over AI. I agree that the current moment is a big deal. The real reason the Labs are all gaga about AI is the money, and lots of it. Intellectually, almost no one is meeting the moment. The idea space for AI within government is close to the empty set. All this money is going to efforts to apply AI to our mission space. That said, the strategy is abysmal.

Like efforts before this in computational science, the strategy is computer-heavy and thinking-light. It has the intellectual depth of a rain puddle in my driveway. The whole moment with LLMs is grounded on an algorithm improvement. The next big step for AI will be algorithm improvement. Advances coming from hardware and data have a very low ceiling, but they are easy. We are taking this easy, simple path, and we will hit the wall. This is the same shallow blueprint we used to hand over computational science to the Chinese.

This will create the next AI winter unless discoveries are made. Worse yet and more dangerously, the next breakthrough won’t likely happen in the USA. It could, but probably not. China is the likely place for this. When it happens there, China will own the AI future. This may be their route to owning the economic and national security future, too. The incompetence of our leadership is paving the way for their dominance. They do this one stupid and short-term decision at a time. The writing is on the wall: the future will be Chinese and not American. If we were paying attention instead of bullshitting ourselves about how great we are, this could be stopped. Instead, we are destroying science in the USA and creating an environment where we can’t win.

“For Fauci, science was a self-correcting compass, always pointed at the truth. For Trump, the truth was Play-doh, and he could twist it to fit the shape of his desire.” ― Lawrence Wright

The benefits of science have a massive impact on our health. The fruits of science allow us to live longer and better. This comes from medicines and therapies of all sorts. The backlash after the COVID pandemic is destroying medical advances and science in the USA. This is the apex of withdrawn trust in science and the road to suffering and death for many. Future Americans will die needlessly. They already are, as Americans reject vaccines. It is pure ignorance. It is another self-inflicted wound that will harm the Nation for the foreseeable future. All of it comes from a lack of trust and some degree of irresponsible arrogance. It is combined with the amoral profit motive governing American medicine. Together, this is literally toxic to the health of Americans.

“It is my hope that this short book will remind all Americans that blind faith in authority is a feature of religion and autocracy, but not of science nor democracy.” ― Robert F. Kennedy Jr.

To go one level deeper, we can examine the roots of this. At the core of our problem is a rejection of expertise. The key aspect is the common thread of American dysfunction: lack of trust. This lack of trust has become a feature of America. It stems from a belief that everyone is out for themselves. No one is committed to anyone but themselves. Left to their own devices, people will choose greed. Self-interest is the core creed of America. One should ask what values any American would sacrifice for others or the good of the Nation. Can you have real patriotism when you don’t trust your fellow citizens? This ultimately is the root of our decline and may destroy the nation as it has destroyed science.

“Facts do not cease to exist because they are ignored.” ― Aldous Huxley