Clarke’s Third Law: Any sufficiently advanced technology is indistinguishable from magic.

– Arthur C. Clarke

One of the most important things about modern computational methods is their nonlinear approach to solving problems. These methods are easily far more important to the utility of modeling and simulation in the modern world than high performance computing. The sad thing is that little or no effort is going into extending and improving these approaches despite the evidence of their primacy. Our current investments in hardware are unlikely to yield much improvement whereas these methods were utterly revolutionary in their impact. The lack of perspective regarding this reality is leading to vast investment in computing technology that will provide minimal returns.

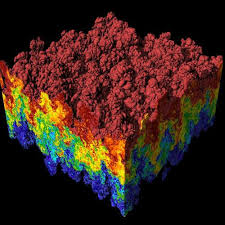

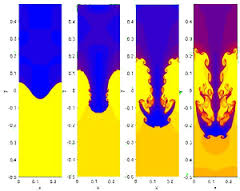

In this case the nonlinearity is the use of solution-adaptive methods that apply the “best” method adjusted to the local structure of the solution itself. In many cases the methods are well grounded in theory, and they provided the essential improvement in results that turned computational physics into a powerful technology. Without these methods we would not have the capacity to reliably simulate many scientifically interesting and important problems, or utilize simulation in the conduct of engineering. The epitome of modeling and simulation success is computational fluid dynamics (CFD), where these nonlinear methods have provided the robust stability and stunning results that capture imaginations. In CFD these methods were utterly game changing and the success of the entire field is predicated on their power. The key to this power is poorly understood, and too often credited to computing hardware instead of the real source of progress: models, methods and algorithms, with the use of nonlinear discretizations being primal.

And a step backward, after making a wrong turn, is a step in the right direction.

― Kurt Vonnegut

Unfortunately, we live in an era where the power of these methods and algorithms is under-utilized. Instead we see an almost complete emphasis on computing hardware as the route toward progress. This emphasis is naïve, and ultimately limits the capacity of computing, modeling and simulation to become a boon to society. Our current efforts are utterly shallow intellectually and almost certainly bound to fail. This failure wouldn’t be the good kind of failure since the upcoming crash is absolutely predictable. Computing hardware is actually the least important and most distant aspect of computing technology necessary for the success of simulation. High performing computers are absolutely necessary, but woefully insufficient for success. A deeper and more appreciative understanding of nonlinear methods for solving our models would go a long way to harnessing the engine of progress.

When one thinks about nonlinear methods, the term that almost immediately comes to mind is “limiters”. Often limiters are viewed as being a rather heavy-handed and crude approach to things. A limiter is a term inherited from the physics community, where physical effects in modeling and simulation need to be limited to stay within some prescribed physical bounds. One example is keeping transport within the limit of the speed of light for radiation transport. Another is keeping positive-definite quantities, such as density or pressure, positive. In nonlinear methods the term limiter can often be interpreted as limiting the amount of high-order method used in solving an equation. It has been found that without limits high-order methods produce anomalous effects (e.g., oscillations, solutions outside bounds, non-positivity). The limiter becomes a mixing parameter between high-order and low-order methods for solving a transport equation. Usually the proviso is that the low-order method is guaranteed to produce a physically admissible solution.
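To make the "mixing parameter" picture concrete, here is a minimal sketch for linear advection on a periodic grid. It is an illustration under stated assumptions, not a method taken from this post: the low-order flux is first-order upwind, the high-order flux is Lax-Wendroff, and the limiter is the standard minmod flux limiter. The function names and grid sizes are hypothetical.

```python
import numpy as np

def minmod_limiter(r):
    """Minmod flux limiter: phi(r) = max(0, min(1, r))."""
    return np.maximum(0.0, np.minimum(1.0, r))

def limited_advection_step(u, c):
    """One step of u_t + a u_x = 0 with a > 0 on a periodic grid.

    c = a*dt/dx is the CFL number (assumed 0 <= c <= 1).
    Fluxes below are divided by a, so the update uses c directly.
    """
    du_right = np.roll(u, -1) - u   # u[j+1] - u[j]
    du_left = u - np.roll(u, 1)     # u[j] - u[j-1]
    # Smoothness ratio r_j; set to 0 where the denominator vanishes
    # (the high-order correction is zero there anyway).
    safe = np.where(du_right != 0.0, du_right, 1.0)
    r = np.where(du_right != 0.0, du_left / safe, 0.0)
    phi = minmod_limiter(r)
    f_low = u                                  # upwind flux at face j+1/2
    f_high = u + 0.5 * (1.0 - c) * du_right    # Lax-Wendroff flux at face j+1/2
    flux = f_low + phi * (f_high - f_low)      # phi mixes low and high order
    return u - c * (flux - np.roll(flux, 1))

# Advect a square wave; the limited scheme should stay inside [0, 1].
x = (np.arange(100) + 0.5) / 100
u0 = np.where((x > 0.3) & (x < 0.6), 1.0, 0.0)
u = u0.copy()
for _ in range(80):
    u = limited_advection_step(u, c=0.5)
print(u.min(), u.max())
```

With `phi = 0` everywhere this reduces to upwind, and with `phi = 1` everywhere it is Lax-Wendroff; the limiter chooses between them cell by cell based on the local solution.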

All theories are legitimate, no matter. What matters is what you do with them.

― Jorge Luis Borges

To keep from falling into the old traps of how to talk about things, let’s take a deeper look at how these things work, and why. We will also explore the alternatives to limiters and the ways everything needs to work together to be effective. There is more than one way to make it all work, but the recipe ends up being much the same at the high end.

Ideally, we would like to have highly accurate, very stable, and completely reliable computations. The fundamental nature of our models of the universe conspires to make this far from a trivial task. Our models are composed of mathematical expressions and are therefore governed by mathematical realities. These realities, in the form of fundamental theorems, provide a foundation upon which excellent numerical methods may be built. Last week I discussed the Lax Equivalence theorem, and this is a good place to start. There the combination of consistency and stability implies convergence of solutions. Consistency means having numerical accuracy of at least first order, although higher than first-order accuracy will be more efficient. Stability is the nature of just getting a solution that hangs together (it doesn’t blow up as we compute it). Stability is so essential to success that its absence supersedes anything else.

Next in our tour of basic foundational theorems is Godunov’s theorem, which tells us a lot about what is needed. In its original form it’s a bit of a downer: you can’t have a linear method that is both higher than first-order accurate and non-oscillatory (monotonicity preserving). The key concept is to turn the barrier on its head: you can have a nonlinear method that is both higher than first-order accurate and non-oscillatory. This is the instigation for the topic of this post, the need for and power of nonlinear methods. I’ll posit the idea that the concept may actually go beyond hyperbolic equations, but the whole concept of nonlinear discretizations is primarily applied to hyperbolic equations.
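Godunov’s barrier is easy to see numerically. The sketch below is an illustrative assumption (linear advection of a square wave on a periodic grid, with hypothetical function names). It compares first-order upwind, which is linear and monotone, against Lax-Wendroff, which is linear and second-order. The second-order linear scheme overshoots the initial bounds while upwind does not.

```python
import numpy as np

def upwind_step(u, c):
    """First-order upwind for u_t + a u_x = 0, a > 0, c = a*dt/dx."""
    return u - c * (u - np.roll(u, 1))

def lax_wendroff_step(u, c):
    """Second-order linear Lax-Wendroff scheme for the same equation."""
    up, um = np.roll(u, -1), np.roll(u, 1)
    return u - 0.5 * c * (up - um) + 0.5 * c**2 * (up - 2.0 * u + um)

x = (np.arange(200) + 0.5) / 200
u0 = np.where((x > 0.25) & (x < 0.5), 1.0, 0.0)
u_up, u_lw = u0.copy(), u0.copy()
for _ in range(100):
    u_up = upwind_step(u_up, 0.5)
    u_lw = lax_wendroff_step(u_lw, 0.5)

print("upwind       min/max:", u_up.min(), u_up.max())   # stays within [0, 1]
print("Lax-Wendroff min/max:", u_lw.min(), u_lw.max())   # undershoots and overshoots
```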

A few more theorems help to flesh out the basic principles we bring to bear. A key result is the theorem of Lax and Wendroff, which shows the value of discrete conservation. If a scheme is in conservation form and its solutions converge, then they converge to a weak solution. This must be combined with picking the right weak solution, as there are actually infinitely many weak solutions, all of them wrong save one. Getting the right weak solution requires sufficient dissipation, which produces entropy. Key results are due to Osher, who attached the character of (approximate) Riemann solvers to the production of sufficient dissipation to ensure physically relevant solutions. As we will describe, there are other means of introducing dissipation aside from Riemann solvers, but these lack some degree of theoretical support unless we can tie them directly to the Riemann problem. Of course most of us want physically relevant solutions, although lots of mathematicians act like this is not a primal concern! There is a vast phalanx of other theorems of practical interest, but I will end this survey with a last one, by Majda and Osher, with lots of practical import. Simply stated, this theorem limits the numerical accuracy we can achieve in regions affected by a discontinuous solution to first-order accuracy. The impacted region is bounded by the characteristics emanating from the discontinuity. This puts a damper on the zeal for formally high-order accurate methods, which need to be considered in the context of this theorem.
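The value of discrete conservation discussed above shows up in a standard textbook-style experiment, sketched here under my own assumptions (inviscid Burgers equation, a right-moving shock between u = 1 and u = 0, first-order upwind differencing, hypothetical names). The conservative form moves the shock at the correct speed of 1/2. The non-conservative form of the same differencing leaves the shock frozen in place.

```python
import numpy as np

n, cfl, t_end = 200, 0.5, 0.5
dx = 2.0 / n
x = -1.0 + (np.arange(n) + 0.5) * dx
u0 = np.where(x < 0.0, 1.0, 0.0)       # shock initially at x = 0
dt = cfl * dx                           # max |u| = 1
steps = int(round(t_end / dt))
lam = dt / dx

def conservative_step(u):
    """Upwind differencing of u_t + (u^2/2)_x = 0 (valid here since u >= 0)."""
    f = 0.5 * u**2
    new = u.copy()                      # cell 0 is held at the inflow value
    new[1:] = u[1:] - lam * (f[1:] - f[:-1])
    return new

def nonconservative_step(u):
    """Upwind differencing of u_t + u u_x = 0; not in conservation form."""
    new = u.copy()
    new[1:] = u[1:] - lam * u[1:] * (u[1:] - u[:-1])
    return new

def shock_position(u):
    """Locate the shock from the total mass, assuming states 1 (left) and 0 (right)."""
    return -1.0 + u.sum() * dx

u_con, u_non = u0.copy(), u0.copy()
for _ in range(steps):
    u_con = conservative_step(u_con)
    u_non = nonconservative_step(u_non)

print("exact shock position:   ", 0.5 * t_end)
print("conservative scheme:    ", shock_position(u_con))
print("non-conservative scheme:", shock_position(u_non))
```

Both schemes are stable and look plausible cell by cell, which is exactly why the failure of the non-conservative one is so insidious.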

Whenever a theory appears to you as the only possible one, take this as a sign that you have neither understood the theory nor the problem which it was intended to solve.

― Karl Popper

I will note that we are focused on hyperbolic equations because they are so much harder to solve. It remains an open question as to how much all of this applies to parabolic or elliptic equations. I suspect it does, albeit to a lesser degree, because those equations are so much more forgiving. Hyperbolic equations are deeply unforgiving and cause you to pay for all sins many times over. For this reason hyperbolic equations have been a pacing aspect of computational modeling, where nonlinear methods have allowed progress and to some degree tamed the demons.

Let’s return to the idea of limiters and try to dispel the common view of their intrinsic connection to dissipation. The connection is real, but less direct than commonly acknowledged. A limiter is really a means of adaptive stencil selection, separate from dissipation. The two may be combined, but usually not with good comprehension of the consequences. Another way to view the limiter is as a way of selecting the appropriate bias in the stencil used to difference an equation, based upon the application of a principle. The principle most often used for limiting is some sort of boundedness in the representation, which may equivalently be associated with selecting a smoother (nicer) neighborhood on which to execute the discretization. The way an equation is differenced certainly impacts the nature of and need for dissipation in the solution, but the impact is indirect. Put differently, the amount of dissipation needed varies with both the limiter and the dissipation mechanism, yet the two are independent choices. This does get into the whole difference between flux limiters and geometric limiters, a topic worth some digestion.
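The stencil-selection view can be shown with the simplest limiter I know of, minmod. This is a small sketch with made-up data, not code from any particular solver. For each interior cell, it picks whichever one-sided difference is smaller in magnitude, which biases the stencil away from a nearby jump, and returns zero when the two differences disagree in sign.

```python
import numpy as np

def minmod(a, b):
    """Smaller-magnitude argument when signs agree, zero otherwise."""
    return np.where(a * b > 0.0, np.where(np.abs(a) < np.abs(b), a, b), 0.0)

# Cell averages with a sharp jump between the third and fourth cells.
u = np.array([0.0, 0.0, 0.1, 1.0, 1.0])
left = u[1:-1] - u[:-2]     # backward differences for the interior cells
right = u[2:] - u[1:-1]     # forward differences for the interior cells
slopes = minmod(left, right)

print("backward:", left)
print("forward: ", right)
print("selected:", slopes)  # the middle cell uses its backward (smoother) stencil
```

No dissipation has been introduced at this point; the limiter has only chosen which neighbors each cell listens to.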

In examining the topic of limiters and nonlinear stabilization we find an immediate and significant line of demarcation between two philosophical tenets. The first limiters came from limiting fluxes in the method of flux-corrected transport. An alternative approach is found through extending Godunov’s method to higher-order accuracy, and involves the limiting of the geometric reconstruction of variables. In the case of flux limiting, the accuracy and dissipation (i.e., stabilization) are mixed together, while the geometric approach separates these effects into independent steps. The geometric approach puts bounds on the variables being solved, and then relies on an approach agnostic to those variables for stabilization, in the form of a Riemann solver. Both approaches are successful in solving complex systems of equations and have their rabid adherents (I favor the reconstruct-Riemann approach). The flux form can be quite effective and produces better extensions to multiple dimensions, but can also involve heavy-handed dissipation mechanisms.

If one follows the path using geometric reconstruction, several aspects of nonlinear stabilization may be examined more effectively. Many of the key issues associated with nonlinear stabilization are crystal clear in this context. The clearest example is monotonicity of the reconstruction. It is easy to see how the reconstructed version of the solution is bounded (or not) compared to the local variable’s bounds. It is generally appreciated that the application of monotonicity renders solutions relatively low order in accuracy. This is most acute at extrema in the solution, where the accuracy intrinsically degrades to first order. Looking at the geometric reconstruction also makes it easier to move beyond monotonicity to stabilization that provides better accuracy. The derivation of methods that do not degrade accuracy at extrema, but extend the concept of boundedness appropriately, is much clearer with a geometric approach. Solving this problem effectively is a key to producing methods with greater numerical accuracy and better computational efficiency. While this may be achieved via flux limiters with some success, there are serious issues in conflating geometric fidelity with dissipative stabilization mechanisms.
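Both properties described above, boundedness of the reconstruction and the loss of accuracy at extrema, can be checked directly. The following is a sketch assuming a piecewise-linear reconstruction with minmod-limited slopes on a periodic grid; the names and the test profile are my own choices for illustration.

```python
import numpy as np

def minmod(a, b):
    """Smaller-magnitude argument when signs agree, zero otherwise."""
    return np.where(a * b > 0.0, np.where(np.abs(a) < np.abs(b), a, b), 0.0)

def reconstruct(u):
    """Monotone piecewise-linear reconstruction on a periodic grid.

    Returns the values at the left and right edges of every cell
    and the limited slope (per cell width).
    """
    slope = minmod(u - np.roll(u, 1), np.roll(u, -1) - u)
    return u - 0.5 * slope, u + 0.5 * slope, slope

x = np.arange(64) / 64
u = np.sin(2.0 * np.pi * x)             # smooth profile with one peak, one trough
u_minus, u_plus, slope = reconstruct(u)

# Edge values never leave the range spanned by the cell and its neighbors.
lo = np.minimum(u, np.minimum(np.roll(u, 1), np.roll(u, -1)))
hi = np.maximum(u, np.maximum(np.roll(u, 1), np.roll(u, -1)))
bounded = bool(np.all((u_minus >= lo - 1e-14) & (u_minus <= hi + 1e-14) &
                      (u_plus >= lo - 1e-14) & (u_plus <= hi + 1e-14)))

peak = int(np.argmax(u))
print("reconstruction bounded:", bounded)
print("slope at the peak:", slope[peak])   # clipped to zero: locally first order
```

The zero slope at the peak is the geometric picture of why monotone schemes clip extrema, and it is the starting point for schemes that relax monotonicity there.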

The need for dissipation in schemes is often viewed with significant despair by parts of the physics community. Much of the conventional wisdom would dictate that dissipation-free solutions are favored. This is rather unfortunate because dissipation is the encoding of powerful physical principles into methods rather than some sort of crude numerical artifice. Most complex nonlinear systems are governed by a specific and bounded dissipative character. If the solution has too little dissipation, the solution is not physical. As such, dissipation is necessary and its removal could be catastrophic.

The first stabilization of numerical methods was found with the original artificial viscosity (developed by Robert Richtmyer to stabilize and make useful John von Neumann’s numerical method for shock waves). The name “artificial viscosity” is vastly unfortunate because the dissipation is utterly physical. Without its presence the basic numerical method was completely useless, leading to a catastrophic instability (which almost certainly helped instigate the investigation of numerical stability, along with the instability found in integrating parabolic equations). Physically, most interesting nonlinear systems produce dissipation even in the limit where the explicit dissipation can be regarded as vanishingly small. This is true for shock waves and turbulence, where the dissipation in the inviscid limit has remarkably similar forms structurally. Given this basic need, the application of some sort of stabilization is an absolute necessity to produce meaningful results, both from a purely numerical perspective and through the implicit connection to the physical world. I’ve written recently on the use of hyperviscosity as yet another mechanism for producing dissipation. Here the artificial viscosity is the archetype of hyperviscosity and its simplest form. As I’ve mentioned before, the original turbulent subgrid model was also based directly upon the artificial viscosity devised by Richtmyer (often misattributed to von Neumann, although their collaboration clearly was important).
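For readers who have not seen it written down, here is a minimal sketch of a quadratic artificial viscosity of the von Neumann-Richtmyer type on a staggered one-dimensional mesh. It uses the commonly taught form that is switched off in expansion, which is an assumption on my part rather than a transcription of the 1950 paper. The coefficient `c_q` and its default value are hypothetical.

```python
import numpy as np

def artificial_viscosity(rho, u, c_q=2.0):
    """Quadratic viscous pressure q for each zone.

    rho : zone-centered densities, shape (n,)
    u   : node-centered velocities, shape (n + 1,)
    c_q : dimensionless coefficient (illustrative default, not from the source)

    q = c_q^2 * rho * (du)^2 where the zone is compressing (du < 0), else 0.
    """
    du = np.diff(u)                       # velocity jump across each zone
    return np.where(du < 0.0, c_q**2 * rho * du**2, 0.0)

rho = np.ones(3)
u = np.array([1.0, 0.0, 0.0, 1.0])        # compression, rest, expansion
print(artificial_viscosity(rho, u))       # nonzero only in the compressing zone
```

The term is added to the pressure in the momentum and energy equations, so it acts only where the flow is steepening into a shock and vanishes quadratically in smooth regions.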

The other approach to stabilization is at first blush utterly different, but upon deep reflection completely similar and complementary. For other numerical methods, Riemann solvers can be used to provide stability. A Riemann solver uses a local exact (or approximate) physical solution to resolve a flow locally. In this way the nature of the physical propagation of information provides a more stable representation of the solution. It turns out that the Riemann solution will produce an implicit dissipative effect that impacts the solution much like artificial viscosity would. The real power is in exploiting this implicit dissipative effect to provide real synergy, where each may provide complementary views of stabilization. For example, a Riemann solver produces a complete image of what modes in a solution must be stabilized, in a manner that might inform the construction of an improved artificial viscosity. On the other hand, artificial viscosity applies to nonlinearities often ignored by approximate Riemann solvers. Finding an equivalence between the two can produce more robust and complete Riemann solvers. As is true with most things, true innovation can be achieved through an open mind and a two-way street.
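The implicit dissipation in a Riemann solver can be made explicit in the simplest possible setting. For linear advection with speed `a`, the exact Riemann solution at a cell face gives the upwind flux, and that flux is algebraically identical to a central flux plus a viscosity-like term proportional to the jump across the face. The sketch below checks this identity; the function names are mine.

```python
import math

def riemann_flux(a, u_left, u_right):
    """Flux from the exact Riemann solution of u_t + a u_x = 0 at a face."""
    return a * u_left if a > 0.0 else a * u_right

def central_plus_dissipation(a, u_left, u_right):
    """Central flux minus a dissipative term proportional to the jump."""
    central = 0.5 * a * (u_left + u_right)
    dissipation = 0.5 * abs(a) * (u_right - u_left)
    return central - dissipation

for a in (2.0, -2.0, 0.0):
    f1 = riemann_flux(a, 1.0, 3.0)
    f2 = central_plus_dissipation(a, 1.0, 3.0)
    print(a, f1, f2, math.isclose(f1, f2))
```

Read this way, the Riemann solver is a recipe for how much viscosity to apply and to which wave, which is the bridge to artificial viscosity described above.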

Perhaps I will attend to a deeper discussion of how high-order accuracy connects to these ideas in next week’s post. I’ll close with a bit of speculation. In my view one of the keys to the future is figuring out how to efficiently harness high-order methods for more accurate solutions with sufficient robustness. New computing platforms dearly need the computational intensity offered by high-order methods to keep themselves busy in a useful manner because of exorbitant memory access costs. Because practical problems are ugly, with all sorts of horrible nonlinearities and effectively singular features, the actual attainment of high-order convergence of solutions is unachievable. Making high-order methods both robust enough and efficient enough under these conditions has thus far eluded our existing practice.

Extending the ideas of nonlinear methods to other physics is another needed direction. For example, do any of these ideas apply to parabolic equations with high-order methods? There are some key ideas about physically relevant solutions, but parabolic equations are generally so forgiving that no focus is given to them. The question of whether something like Godunov’s theorem applies to the high-order solution of parabolic equations is worth asking (I think a modified version of it might well). I will note that some non-oscillatory schemes do apply to extremely nonlinear parabolic equations exhibiting wave-like solutions.

The last bit of speculation concerns the application of these ideas to common methods for solid mechanics. Generally it has been found that artificial viscosity is needed for stably integrating solid mechanics models. Unfortunately this uses the crudest and least justified philosophy of artificial viscosity. Solid mechanics could utilize the principles of nonlinear methods to very great effect. Solid mechanics is basically a system of hyperbolic equations abiding by the full set of foundational conditions elucidated earlier in the post. As such, everything should follow as a matter of course. Today this is not the case, and my belief is that the codes suffer greatly from a lack of robustness and of a deeper mathematical foundation for the modeling and simulation capability.

The success of modeling and simulation as a technological tool and engine of progress would be well served by a focus on nonlinear discretization, which is almost entirely lacking from our current emphasis on hardware.

The reasonable man adapts himself to the world: the unreasonable one persists in trying to adapt the world to himself. Therefore all progress depends on the unreasonable man.

― George Bernard Shaw

References

Von Neumann, John, and Robert D. Richtmyer. “A method for the numerical calculation of hydrodynamic shocks.” Journal of Applied Physics 21.3 (1950): 232-237.

Lax, Peter D., and Robert D. Richtmyer. “Survey of the stability of linear finite difference equations.” Communications on Pure and Applied Mathematics 9.2 (1956): 267-293.

Godunov, Sergei Konstantinovich. “A difference method for numerical calculation of discontinuous solutions of the equations of hydrodynamics.” Matematicheskii Sbornik 89.3 (1959): 271-306.

Lax, Peter, and Burton Wendroff. “Systems of conservation laws.” Communications on Pure and Applied Mathematics 13.2 (1960): 217-237.

Osher, Stanley. “Riemann solvers, the entropy condition, and difference approximations.” SIAM Journal on Numerical Analysis 21.2 (1984): 217-235.

Majda, Andrew, and Stanley Osher. “Propagation of error into regions of smoothness for accurate difference approximations to hyperbolic equations.” Communications on Pure and Applied Mathematics 30.6 (1977): 671-705.

Harten, Amiram, James M. Hyman, Peter D. Lax, and Barbara Keyfitz. “On finite‐difference approximations and entropy conditions for shocks.” Communications on Pure and Applied Mathematics 29.3 (1976): 297-322.

Boris, Jay P., and David L. Book. “Flux-corrected transport. I. SHASTA, A fluid transport algorithm that works.” Journal of Computational Physics 11.1 (1973): 38-69.

Zalesak, Steven T. “Fully multidimensional flux-corrected transport algorithms for fluids.” Journal of Computational Physics 31.3 (1979): 335-362.

Van Leer, Bram. “Towards the ultimate conservative difference scheme. V. A second-order sequel to Godunov’s method.” Journal of Computational Physics 32.1 (1979): 101-136.

Van Leer, Bram. “Towards the ultimate conservative difference scheme. IV. A new approach to numerical convection.” Journal of Computational Physics 23.3 (1977): 276-299.

Liu, Yuanyuan, Chi-Wang Shu, and Mengping Zhang. “High order finite difference WENO schemes for nonlinear degenerate parabolic equations.” SIAM Journal on Scientific Computing 33.2 (2011): 939-965.

It is actually worse than the best effort simply not being put forth: the lack of acceptance of failure inhibits success. The outright acceptance of failure as a viable outcome of work is necessary for the sort of success one can have pride in. If nothing is risked enough to potentially fail, then nothing can be achieved. Today we have accepted the absence of failure as being the telltale sign of success. It is not. This connection is desperately unhealthy and leads to a diminishing return on effort. Potential failure, while an unpleasant prospect, is absolutely necessary for achievement. As such, failures that occur when best effort is put forth should be celebrated, lauded and encouraged whenever possible. Instead we have a culture that crucifies those who fail without regard for the effort or excellence of the work going into it.

In the area of security, the lack of tolerance for bad events is immense. More than this, the pervasive security apparatus produces a side effect that greatly empowers things like terrorism. Terror’s greatest weapon is not high explosives but fear, and we go out of our way to do the terrorists’ jobs for them. Instead of tamping down fears, our government and politicians go out of their way to scare the shit out of the public. This allows them to gain power and fund more activities to answer the security concerns of the scared shitless public. The best way to get rid of terror is to stop getting scared. The greatest weapon against terror is bravery, not bombs. A fearless public cannot be terrorized.

The end result of all of this risk intolerance is a lack of achievement by individuals, organizations, and society itself. Without the acceptance of failure, we relegate ourselves to a complete lack of achievement. Without the ability to risk greatly we lack the ability to achieve greatly. Risk, danger and failure all improve our lives in every respect. The dial is turned too far away from accepting risk to allow us to be part of progress. All of us will live poorer lives with less knowledge, achievement and experience because of the attitudes that exist today. The deeper issue is that the lack of appetite for obvious risks and failure actually kicks the door open for even greater risks and more massive failures in the long run. These sorts of outcomes may already be upon us in terms of massive opportunity cost. Terrorism is something that has cost our society vast sums of money and undermined the full breadth of society. We should have had astronauts on Mars already, yet the reality of this is decades away, so our societal achievement is actually deeply pathetic. The gap between “what could be” and “what is” has grown into a yawning chasm. Somebody needs to lead with bravery and pragmatically take the leap over the edge to fill it.

In the constellation of numerical analysis theorems, the Lax equivalence theorem may have no equal in its importance. It is simple, and its impact on the whole business of numerical approximation is profound. The theorem basically implies that if you provide a consistent approximation to the differential equations of interest and it is stable, the solution will converge. The devil, of course, is in the details. Consistency is defined by having an approximation with ordered errors in the mesh or time discretization, which implies that the approximation is at least first-order accurate, if not better. A key aspect of this that is overlooked is the necessity of having mesh spacing sufficiently small to achieve the defined error; failure to do so renders the solution erratically convergent at best.
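To make the statement concrete, here is a minimal sketch of what "consistent plus stable gives convergence" looks like in practice. The test problem (linear advection of a sine wave on a periodic domain), the first-order upwind scheme, and the function name `upwind_error` are my own illustrative choices, not anything prescribed by the theorem. The scheme is consistent to first order and stable for a Courant number at or below one, so the error should drop by roughly a factor of two with each mesh doubling.

```python
import math

def upwind_error(n_cells, cfl=0.5, speed=1.0, t_final=1.0):
    """Max-norm error of first-order upwind for u_t + speed*u_x = 0 on [0, 1), periodic."""
    dx = 1.0 / n_cells
    # Pick a whole number of steps so the run ends exactly at t_final.
    n_steps = math.ceil(t_final * speed / (cfl * dx))
    dt = t_final / n_steps
    nu = speed * dt / dx  # actual Courant number, never larger than cfl
    x = [i * dx for i in range(n_cells)]
    u = [math.sin(2.0 * math.pi * xi) for xi in x]
    for _ in range(n_steps):
        # u[i - 1] with i = 0 wraps to the last cell, which gives the periodic boundary.
        u = [u[i] - nu * (u[i] - u[i - 1]) for i in range(n_cells)]
    exact = [math.sin(2.0 * math.pi * (xi - speed * t_final)) for xi in x]
    return max(abs(a - b) for a, b in zip(u, exact))

# Errors on three successively doubled meshes and the observed convergence order.
errors = [upwind_error(n) for n in (50, 100, 200)]
orders = [math.log(errors[k] / errors[k + 1], 2) for k in range(2)]
print(errors, orders)
```

On meshes this coarse the observed order sits a little below one and creeps toward it as the mesh is refined, which is the "mesh spacing sufficiently small" caveat showing up in a toy problem.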


Stability then becomes the issue: you must assure that the approximations produce bounded results under the appropriate control of the solution. Usually stability is defined as a character of the time stepping approach and requires that the time step be sufficiently small. A lesser-known equivalence theorem is due to Dahlquist and applies to multistep methods for integrating ordinary differential equations. From this work the whole aspect of zero stability arises, where you have to assure that a non-zero time step size gives stability in the first place. More deeply, Dahlquist’s version of the equivalence theorem applies to nonlinear equations but is limited to multistep methods, whereas Lax’s applies to linear equations.
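The time step restriction is easy to see in a toy setting. The sketch below is an illustration under my own assumptions (a square pulse advected by the first-order upwind scheme on a periodic mesh; the name `upwind_max_amplitude` is hypothetical). It runs the same scheme at a Courant number of 0.5, where each update is an average of neighboring values and so stays bounded, and at 1.5, where the shortest wavelength on the mesh is doubled in amplitude every step.

```python
def upwind_max_amplitude(courant, n_cells=100, n_steps=100):
    """Advance a square pulse with first-order upwind and return max |u| at the end."""
    # Square pulse of height one; the discontinuities seed the short wavelengths.
    u = [1.0 if 25 <= i < 50 else 0.0 for i in range(n_cells)]
    for _ in range(n_steps):
        # Periodic boundary: u[i - 1] with i = 0 wraps to the last cell.
        u = [u[i] - courant * (u[i] - u[i - 1]) for i in range(n_cells)]
    return max(abs(v) for v in u)

stable = upwind_max_amplitude(0.5)    # stays bounded by the initial maximum
unstable = upwind_max_amplitude(1.5)  # grows without bound as steps accumulate
print(stable, unstable)
```

Nothing about the mesh or the initial data changes between the two runs; only the time step does, which is why stability is usually phrased as a property of the time stepping.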

Although the theorem doesn’t formally apply to the nonlinear case, the guidance is remarkably powerful and appropriate. We have a simple and limited theorem that produces incredible consequences for any approximation methodology that we are applying to partial differential equations. Moreover, the whole thing was derived in the early 1950s and generally thought through even earlier. The theorem came to pass because we knew that approximations to PDEs and their solution on computers do work. Dahlquist’s work is founded on a similar path; the availability of computers shows us what the possibilities are and the issues that must be elucidated. We do see a virtuous cycle where the availability of computing capability spurs on developments in theory. This is an important aspect of healthy science, where different aspects of a given field push and pull each other. Today we are counting on hardware advances to push the field forward. We should be careful that our focus is set where advances are ripe; it’s my opinion that hardware isn’t it.

One of the very large topics in V&V that is generally overlooked is models and their range of validity. All models are limited in their range of applicability based on time and length scales. For some phenomena this is relatively obvious, e.g., multiphase flow. For other phenomena the range of applicability is much more subtle. Among the first important topics to examine is the satisfaction of the continuum hypothesis, the capacity of a homogenization or averaging to be representative. The degree of satisfaction of homogenization depends on the scale of the problem and degrades as the phenomenon becomes smaller in scale. For multiphase flow this is obvious, as the example of bubbly flow shows. As the number of bubbles becomes smaller, any averaging becomes highly problematic. This argues that the models should be modified in some fashion to account for the change in scale size.

Another more pernicious and difficult issue is homogenization assumptions that are not so fundamental. Consider the situation where a solid is being modeled in a continuum fashion. When the mesh cells are very large, the solid comprised of discrete grains can be modeled by averaging over these grains because there are so many of them. Over time we have become able to solve problems with smaller and smaller mesh scales. We now solve problems where the mesh size approaches the grain size. Clearly under this circumstance the homogenization used for averaging will lose its validity. The structural variations removed by the homogenized equations become substantial and should not be ignored as the mesh size becomes small. In the quest for exascale computing this issue is completely and utterly ignored. Some areas of study in high performance computing do consider these issues carefully, most notably climate and weather modeling, where the mesh size issues are rather glaring. I would note that these fields are subjected to continual and rather public validation.

The theorem is applied to the convergence of the model’s solution in the limit where the “mesh” spacing goes to zero. Models are always limited in their applicability as a function of length and time scale. The equivalence theorem will be applied and take many models outside their true applicability. An important thing to wrangle in the grand scheme of things is whether models are being solved and are convergent in the actual range of scales where they are applicable. A true tragedy would be a model that is only accurate and convergent in regimes where it is not applicable. This may actually be the situation in many cases, most notably the aforementioned multiphase flow. This calls into question the nature of the modeling and numerical methods used to solve the equations.

WTF has become the catchphrase for today’s world. “What the fuck” moments fill our days, and nothing is more WTF than Donald Trump. We will be examining the viability of the reality show star and general douchebag celebrity rich guy as a presidential candidate for decades to come. Some view his success as a candidate apocalyptically, or characterize it as an “extinction level event” politically. In the current light it would seem to be a stunning indictment of our modern society. How could this happen? How could we have come to this moment as a nation where it is even a possibility for such a completely insane outcome to be a reasonably high probability outcome of our political process? What else does it say about us as a people? WTF? What in the actual fuck!

The phrase “what the fuck” came into the popular lexicon along with Tom Cruise in the movie “Risky Business” back in 1983. There the lead character played by Tom Cruise exclaims, “sometimes you gotta say, what the fuck.” It was a mantra for just going for broke and trying stuff without obvious regard for the consequences. Given our general lack of an appetite for risk and failure, the other side of the coin took the phrase over. Over time the phrase has morphed into a general commentary about things that are generally unbelievable. Accordingly the acronym WTF came into being by 1985. I hope that 2016 is peak what-the-fuck, because things can’t get much more what-the-fuck without everything turning into a complete shitshow. Going to a deeper view of things, the real story behind where we are is the victory of bullshit as a narrative element in society. In a sense WTF has transmogrified from a mantra for risk taking into a mantra for the breadth of impact of not taking risks!


It is the general victory of showmanship and entertainment. The superficial and bombastic rule the day. I think that Trump is committing one of the greatest frauds of all time. He is completely and utterly unfit for office, yet has a reasonable (or perhaps unreasonable) chance to win the election. The fraud is being committed in plain sight, and he speaks falsehoods at a marvelously high rate without any of the normative ill effects. Trump’s victory is testimony to how gullible the public is to complete bullshit. This gullibility reflects the lack of will on the part of the public to address real issues. With the sort of “leadership” that Trump represents, the ability to address real problems will further erode. The big irony is that Trump’s mantra of “Make America Great Again” is the direct opposite of the impact of his message. Trump’s sort of leadership destroys the capacity of the Nation to solve the sort of problems that lead to actual greatness. He is hastening the decline of the United States by choking our will to act in a tidal wave of bullshit.

There is a lot more bullshit in society than just Trump; he is just the most obvious example right now. Those who master bullshit win the day today, and it drives the depth of the WTF moments. Fundamentally there are forces in society today that are driving us toward the sorts of outcomes that cause us to think, “WTF?” For example, we are prioritizing a high degree of micromanagement over achievement due to the risks associated with giving people freedom. Freedom encourages achievement, but also carries the risk of scandal when people abuse their freedom. Without the risks you cannot have the achievements. Today the priority is no scandal, and accomplishment simply isn’t important enough to empower anyone. We are developing systems of management that serve to disempower people so that they don’t do anything unpredictable (like achieve something!).

I wonder deeply about the extent to which things like the Internet play into this dynamic. Does the Internet allow bullshit to be presented with equality to bona fide facts? Do the Internet and computers allow a degree of micromanagement that strangles achievement? Does the Internet produce new patterns in society that we don’t understand, much less have the capacity to manage? What is the value of information if it can’t be managed or understood in any way that is beyond superficial? The real danger is that people will gravitate toward what they want to view as facts instead of confronting issues that are unpleasant. The danger seems to be playing out in the political events in the United States and beyond.

happening faster, and it is lubricating changes to take effect at a high pace. On the one hand we have an incredible ability to communicate with people that was beyond the capacity to even imagine a generation ago. The same communication mechanisms produce a deluge of information; we are drowning in input to a degree that is choking people’s capacity to process what they are being fed. What good is the information if the people receiving it are unable to comprehend it sufficiently to take action? Or if people are unable to distinguish the proper actionable information from the complete garbage?

All of these forces are increasingly driving the elites in society (and increasingly the elites are simply those who have a modicum of education) to look at events and say “WTF?” I mean, what the actual fuck is going on? The movie Idiocracy was set 500 years in the future, and yet we seem to be moving toward that vision of the future at an accelerated pace that makes anyone educated enough to see what is happening tremble with fear. The sort of complete societal shitshow in the movie seems to be unfolding in front of our very eyes today. The mind-numbing effects of reality show television and pervasive low-brow entertainment are spreading like a plague. Donald Trump is the most obvious evidence of how bad things have gotten.

The sort of terrible outcomes we see obviously through our broken political discourse are happening across society. The scientific world I work in is no different. The low-brow and superficial are dominating the dialog. Our programs are dominated by strangling micromanagement that operates in the name of accountability, but really speaks volumes about the lack of trust. Furthermore, the low-brow dialog simply reflects the societal desire to eliminate the technical elites from the process. This also connects back to the micromanagement because the elites can’t be trusted either. It’s become better to speak to the uneducated common man who you can “have a beer with” than trust the gibberish coming from an elite. As a result, the rise of complete bullshit as technical achievement has occurred. When the people in charge can’t distinguish between Nobel Prize winning work and complete pseudo-science, the low bar wins out. Those of us who know better are left with nothing to do but say “What the fuck?” over and over again.


Effectively we are creating an ecosystem where the apex predators are missing, and this isn’t a good thing. The models we use in science are the key to everything. They are the translation of our understanding into mathematics that we can solve and manipulate to explore our collective reality. Computers allow us to solve much more elaborate models than otherwise possible, but little else. The core of the value in scientific computing is the capacity of the models to explain and examine the physical world we live in. They are the “apex predators” in the scientific computing system, and taking this analogy further, our models are becoming virtual dinosaurs where evolution has ceased to take place. The models in our codes are becoming a set of fossilized skeletons, not at all alive, evolving and growing.

People do not seem to understand that faulty models render the entirety of the computing exercise moot. Yes, the computational results may be rendered into exciting and eye-catching pictures suitable for entertaining and enchanting various non-experts including congressmen, generals, business leaders and the general public. These eye-catching pictures are getting better all the time and now form the basis of a lot of the special effects in the movies. All of this does nothing for how well the models capture reality. The deepest truth is that no amount of computer power, numerical accuracy, mesh refinement, or computational speed can rescue a model that is incorrect. The entire process of validation against observations made in reality must be applied to determine if models are correct. HPC does little to solve this problem. If the validation provides evidence that the model is wrong and a more complex model is needed, then HPC can provide a tool to solve it.

seamlessly to produce incredible things. We have immensely complex machines that produce important outcomes in the real world through a set of interwoven systems that translate electrical signals into instructions understood by the computer and humans, into discrete equations, solved by mathematical procedures that describe the real world and ultimately compared with measured quantities in systems we care about. If we look at our focus today, it is on the part of the technology that connects very elaborate, complex computers to the instructions understood by both computers and people. This is electrical engineering and computer science. The focus begins to dampen in the part of the system where the mathematics, physics and reality come in. These activities form the bond between the computer and reality. These activities are not a priority, and are conspicuously diminished by today’s HPC.


HPC today is structured in a manner to eviscerate fields that have been essential to the success of scientific computing. A good example is our applied mathematics programs. In many cases applied mathematics has become little more than scientific programming and code development. Far too little actual mathematics is happening today, and far too much focus is placed on productizing mathematics in software. Many people with training in applied mathematics only do software development today and spend little or no effort doing analysis and development away from their keyboards. It isn’t that software development isn’t important; the issue is the lack of balance in the overall ratio of mathematics to software. The power and beauty of applied mathematics must be harnessed to achieve success in modeling and simulation. Today we are simply bypassing this essential part of the problem to focus on delivering software products.

To avoid the sort of implicit assumption of ZERO uncertainty, one can use (expert) judgment to fill in the information gap. This can be accomplished in a distinctly principled fashion and always works better with a basis in evidence. The key is the recognition that we base our uncertainty on a model (a model that is associated with error too). The models are fairly standard and need a certain minimum amount of information to be solvable, and we are always better off with too much information, making the problem effectively over-determined. Here we look at several forms of models that lead to uncertainty estimation, including discretization error and statistical models applicable to epistemic or experimental uncertainty.

meshes to solve the error model exactly, or more if we solve it in some sort of optimal manner. We recently had a method published that discusses how to include expert judgment in the determination of numerical error and uncertainty using models of this type. The model can be solved along with the data using minimization techniques, with the expert judgment entering as constraints on the solution for the unknowns. In both the over- and under-determined cases, different minimizations can give multiple solutions to the model, and robust statistical techniques may be used to find the “best” answers. This means that one needs more than simple curve fitting and least squares procedures; one needs to solve a nonlinear problem that minimizes the fitting error (i.e., the residuals) with respect to other error representations.
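As a hedged illustration of the constrained fit described above, the sketch below assumes the common power-law error model $S(h) = S_0 + C h^p$. The post's own model is not shown in this excerpt, so this form, the function name `fit_error_model`, and the default bounds on $p$ are all assumptions. Expert judgment enters as bounds on the convergence rate. For each admissible rate the remaining unknowns follow from ordinary linear least squares, and the rate with the smallest residual is kept. A simple grid scan stands in for a proper nonlinear or robust minimizer.

```python
def fit_error_model(h, s, p_min=0.5, p_max=2.0, n_grid=151):
    """Fit s ~ s0 + c * h**p with the rate p restricted to [p_min, p_max].

    h: mesh spacings, s: computed solution values (same length, >= 3 points).
    The bounds on p are the (hypothetical) expert-judgment constraint.
    """
    n = len(h)
    best = None
    for i in range(n_grid):
        p = p_min + (p_max - p_min) * i / (n_grid - 1)
        x = [hk ** p for hk in h]
        # For a fixed p the model is linear in (s0, c): plain least squares.
        x_bar = sum(x) / n
        s_bar = sum(s) / n
        sxx = sum((xk - x_bar) ** 2 for xk in x)
        sxy = sum((xk - x_bar) * (sk - s_bar) for xk, sk in zip(x, s))
        c = sxy / sxx
        s0 = s_bar - c * x_bar
        residual = sum((s0 + c * xk - sk) ** 2 for xk, sk in zip(x, s))
        if best is None or residual < best[0]:
            best = (residual, s0, c, p, i)
    residual, s0, c, p, i = best
    return {
        "s0": s0,              # extrapolated mesh-independent solution
        "c": c,                # error coefficient
        "p": p,                # selected convergence rate
        "residual": residual,  # sum of squared fitting errors
        # True when the data push the rate against an expert bound.
        "constraint_active": i in (0, n_grid - 1),
    }


if __name__ == "__main__":
    spacings = [0.4, 0.2, 0.1, 0.05]
    values = [1.0 + 2.0 * hk for hk in spacings]  # synthetic first-order data
    print(fit_error_model(spacings, values))
```

If `constraint_active` comes back true, the expert bound rather than the data is fixing the rate, which is a result that deserves scrutiny.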

…variables can be completely eliminated by simply choosing the solution based on expert judgment. For numerical error, an obvious example is assuming that calculations are converging at an expert-defined rate. Of course the assumed rate needs adequate justification, based on a combination of information about the nature of the numerical method and the solution to the problem. A key assumption that often does not hold up is that the method achieves its theoretical rate of convergence on realistic problems. In many cases a high-order method will converge at a lower rate because the problem has less regularity than high-order accuracy requires. Problems with shocks or other discontinuities will not usually support high-order results, and a good operating assumption is a first-order convergence rate.
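To show what fixing the rate by judgment buys, here is a minimal sketch that again assumes a power-law error model $S(h) = S_0 + C h^p$ (an assumption, as are the function and parameter names). With $p$ supplied by the expert, two meshes are enough to solve for the remaining unknowns: the fine-mesh error is $(S_\text{coarse} - S_\text{fine}) / (r^p - 1)$, where $r$ is the ratio of coarse to fine mesh spacing.

```python
def error_from_two_meshes(s_fine, s_coarse, refinement_ratio, assumed_order=1.0):
    """Estimate the fine-mesh error with an expert-assumed convergence rate.

    Assumes s(h) = s0 + c * h**p with p = assumed_order, so two solutions
    determine s0 and c. refinement_ratio is h_coarse / h_fine (> 1).
    Returns (extrapolated solution, magnitude of the fine-mesh error).
    """
    if refinement_ratio <= 1.0:
        raise ValueError("refinement_ratio must be greater than 1")
    if assumed_order <= 0.0:
        raise ValueError("assumed_order must be positive")
    error_fine = (s_coarse - s_fine) / (refinement_ratio ** assumed_order - 1.0)
    s_extrapolated = s_fine - error_fine
    return s_extrapolated, abs(error_fine)


if __name__ == "__main__":
    # Same two solutions, two different expert assumptions about the rate.
    for order in (1.0, 2.0):
        s0, err = error_from_two_meshes(1.2, 1.4, 2.0, assumed_order=order)
        print(f"assumed order {order}: s0 = {s0:.4f}, error = {err:.4f}")
```

For the same pair of solutions, assuming second order reports an error three times smaller than assuming first order, which is why an optimistic theoretical rate needs justification before it is used.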

To make things concrete, let’s tackle a couple of examples of how all of this might work. In the recently published paper we looked at solution verification when people use two meshes instead of the three needed to fully determine the error model. That seems kind of extreme, but in this post the example is the case where people use only a single mesh. Seemingly we can do nothing at all to estimate uncertainty, but as I explained last week, this is the time to bear down and include an uncertainty, because it is the most uncertain situation and the most important time to assess it. Instead people throw up their hands and do nothing at all, which is the worst thing to do. So we have a single solution

A lot of this information is probably good to include as part of the analysis even when you have enough data. The right way to think about it is as constraints on the solution. If the constraints are active, they have been triggered by the analysis and help determine the solution. If the constraints have no effect on the solution, then they have been shown to be consistent with the data. In this way the solution can be shown to be consistent with the views of the experts. If the expert judgment is completely determining the solution, one should be very wary, as this is a big red flag.

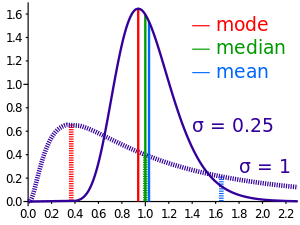

standard modeling assumption is the use of the normal or Gaussian distribution as the starting assumption. This is almost always chosen as a default. A reasonable blog post title would be “The default probability distribution is always Gaussian”. A good thing about a distribution is that we can start to assess it with as few as two data points. A bad and common situation is that we have only a single data point. Then uncertainty estimation is impossible without adding information from somewhere, and expert judgment is the obvious place to look. With statistical data and its quality we can apply the standard error estimation using the sample size to scale the additional uncertainty driven by poor sampling,
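A small sketch of the sampling point, under the Gaussian default discussed above. With two or more samples the spread can be estimated from the data and the standard error of the mean scales as $\sigma/\sqrt{n}$. With a single sample the spread has to be supplied from outside. The function name and the `expert_sigma` parameter are hypothetical, introduced only for this illustration.

```python
import math
import statistics


def mean_with_standard_error(samples, expert_sigma=None):
    """Return (mean, standard error of the mean) for a list of samples.

    With n >= 2 the sample standard deviation is used. With n == 1 the data
    carry no information about spread, so an expert-supplied sigma is needed.
    """
    n = len(samples)
    if n == 0:
        raise ValueError("at least one sample is required")
    mean = statistics.mean(samples)
    if n >= 2:
        sigma = statistics.stdev(samples)  # sample (n - 1) standard deviation
    elif expert_sigma is not None:
        sigma = expert_sigma               # judgment fills the information gap
    else:
        raise ValueError("a single sample needs an expert-supplied sigma")
    return mean, sigma / math.sqrt(n)


if __name__ == "__main__":
    print(mean_with_standard_error([1.0, 3.0]))
    print(mean_with_standard_error([5.0], expert_sigma=0.5))
```

Refusing to return an answer for a single sample without `expert_sigma` is a deliberate choice here: the alternative would be silently reporting zero spread.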

For statistical models one might eventually resort to a Bayesian method, encoding the expert judgment in the definition of a prior distribution. In general terms this is a key approach for structuring expert judgment where statistical modeling is called for. The basic form of Bayes’ theorem is

$$P(A \mid B) = \frac{P(B \mid A)\, P(A)}{P(B)},$$

where $P(A)$ is the prior (the place to encode expert judgment), $P(B \mid A)$ is the likelihood of the data, and $P(A \mid B)$ is the posterior.
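A minimal sketch of how a prior can encode expert judgment, assuming purely for illustration a Gaussian prior on an unknown quantity and a single Gaussian-distributed measurement with known noise. In this conjugate case Bayes’ theorem (posterior proportional to likelihood times prior) has a closed form: precisions (inverse variances) add, and the posterior mean is the precision-weighted average of the prior mean and the observation. All names below are hypothetical.

```python
def gaussian_posterior(prior_mean, prior_sigma, observation, obs_sigma):
    """Update a Gaussian prior with one Gaussian observation.

    prior_mean, prior_sigma: expert judgment about the unknown quantity.
    observation, obs_sigma: the single data point and its assumed noise level.
    Returns (posterior mean, posterior standard deviation).
    """
    if prior_sigma <= 0.0 or obs_sigma <= 0.0:
        raise ValueError("standard deviations must be positive")
    prior_precision = 1.0 / prior_sigma ** 2
    obs_precision = 1.0 / obs_sigma ** 2
    post_variance = 1.0 / (prior_precision + obs_precision)
    post_mean = post_variance * (
        prior_precision * prior_mean + obs_precision * observation
    )
    return post_mean, post_variance ** 0.5


if __name__ == "__main__":
    # Expert believes the value is near 0 (sigma 1); one measurement gives 2.
    print(gaussian_posterior(0.0, 1.0, 2.0, 1.0))
```

The posterior standard deviation is never larger than the prior's, so the result is only as trustworthy as the stated prior; a very narrow prior means the expert judgment is effectively determining the answer.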

I have noticed that we tend to accept a phenomenally common and undeniably unfortunate practice: a failure to assess uncertainty means that the uncertainty reported (acknowledged, accepted) is identically ZERO. In other words, if we do nothing at all, no work, no judgment, then the work (modeling, simulation, experiment, test) is allowed to carry an uncertainty of ZERO. This encourages scientists and engineers to keep doing nothing, because the wildly optimistic assessment looks like a benefit. If somebody does the work to estimate the uncertainty, the reported uncertainty always gets larger as a result. This practice is incredibly common and desperately harmful to the practice and progress of science.

…t shortcomings, a gullible or lazy community readily accepts the incomplete work. Some of the better work has uncertainties associated with it, but almost always with varying degrees of incompleteness. Of course one should acknowledge up front that uncertainty estimation is always incomplete, but the degree of incompleteness can be spellbindingly large.


within the expressed taxonomy for uncertainty. I’ll start with numerical uncertainty, which is the uncertainty most commonly left completely unassessed. Far too often a single calculation is simply shown and used without any discussion. In slightly better cases, the calculation is given with some comments on the sensitivity of the results to the mesh and a statement that numerical errors are negligible on the mesh used. Don’t buy it! This is usually complete bullshit! In every case where no quantitative uncertainty is explicitly provided, you should be suspicious. In other cases, unless the reasoning is stated to rest on expertise or experience, it should be questioned. If it is stated to be experiential, then the basis for this experience and its documentation should be given explicitly, along with evidence that it is directly relevant.

estimation. Far too many calculational efforts provide a single calculation without any idea of the requisite uncertainties. In a nutshell, the philosophy in many cases is that the goal is to complete the best single calculation possible, and producing a calculation that is capable of being assessed is not a priority. In other words, the value proposition for computation is a choice between the best single calculation without any idea of the uncertainty and a lower quality simulation with a well-defined assessment of uncertainty. Today the best single calculation is the default approach. This best single calculation then carries the default uncertainty estimate of exactly ZERO, because nothing else is done. We need to adopt an attitude that rejects this approach because of the dangers of accepting a calculation without any quality assessment.