The conventional view serves to protect us from the painful job of thinking.

― John Kenneth Galbraith

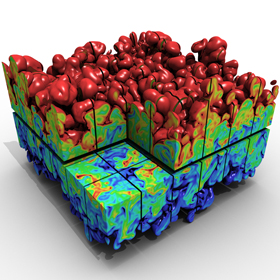

I chose the name the “Regularized Singularity” because it’s so important to the conduct of computational simulations of significance. For real world computations, the nonlinearity of the models dictates that the formation of a singularity is almost a foregone conclusion. To remain well behaved and physical, the singularity must be regularized, which means the singular behavior is moderated into something computable. This is almost always accomplished with the application of a dissipative mechanism, which effectively imposes the second law of thermodynamics on the solution.

A useful, if not vital, tool is something called “hyperviscosity”. Taken broadly, hyperviscosity covers a wide spectrum of mathematical forms arising in numerical calculations. I’ll elaborate on a number of the useful forms and options. Basically, a hyperviscosity is a viscous operator that has a higher differential order than regular viscosity. As most people know, regular viscosity is a second-order differential operator, and it is directly proportional to a physical value of viscosity. Such viscosities are usually a weakly nonlinear function of the solution, and functions of the intensive variables (like temperature and pressure) rather than the structure of the solution. Hyperviscosity falls into two broad categories: the linear form and the nonlinear form.

Unlike most people, I view numerical dissipation as a good thing and an absolute necessity. This doesn’t mean that it should be wielded cavalierly or brutally, because it can be, and that gives computations a bad name. Conventional wisdom dictates that dissipation should always be minimized, but this is wrong-headed. One of the key aspects of important physical systems is the finite amount of dissipation produced dynamically. The asymptotically correct solution with a small viscosity is not zero dissipation; it is a non-zero amount of dissipation arising from the proper large-scale dynamics. This knowledge is useful in guiding the construction of good numerical viscosities that enable us to efficiently compute solutions to important physical systems.

One of the really big ideas to grapple with is the utter futility of using computers to simply crush problems into submission. For most problems of any practical significance this will not be happening, ever. In terms of the physics of the problems, this is often the coward’s way out of the issue. In my view, if nature were going to be submitting to our mastery via computational power, it would have already happened. The next generation of computing won’t be doing the trick either. Progress depends on actually thinking about modeling. A more likely outcome will be the diversion of resources away from the sort of thinking that will allow progress to be made. Most systems do not depend on the intricate details of the problem anyway. The small-scale dynamics are universal and driven by large scales. The trick to modeling these systems is to unveil the essence and core of the large-scale dynamics leading to what we observe.

Given that we aren’t going to be crushing our problems out of existence with raw computing power, hyperviscosity ends up being a handy tool to get more out of the computing we have. Viscosity depends upon having enough computational resolution to effectively allow it to dissipate energy from the computed system. If the computational mesh isn’t fine enough, the viscosity can’t stably remove the energy and the calculation blows up. This provides a very stringent limit on the resolution that can be computationally achieved.

The first form of viscosity to consider is the standard linear form in its simplest guise, a second-order differential operator, $\nu \nabla^2 u$. If we apply a Fourier transform, $u = \exp(i k x)$, to the operator we can see how simple viscosity works, $\nu \nabla^2 u = -\nu k^2 \exp(i k x)$ (just substitute the Fourier description for the function into the operator). The viscosity grows in magnitude with the square of the wavenumber $k$. Only when the product of the viscosity and wavenumber squared becomes large will the operator remove energy from the system effectively.

Linear dissipative operators only come from even orders of the differential. Moving to a fourth-order bi-Laplacian operator it is easy to see how the hyperviscosity works, $-\nu \nabla^4 u = -\nu k^4 \exp(i k x)$. The dissipation now kicks in faster ($k^4$) with the wavenumber, allowing the simulation to be stabilized at comparatively coarser resolution than the corresponding simulation stabilized only by a second-order viscous operator. As a result the simulation can attack more dynamic and energetic flows with the hyperviscosity. One detail is that the sign of the operator changes with each step up the ladder: the bare fourth-order operator has a positive Fourier symbol and so needs a leading minus sign, while a sixth-order operator has a negative symbol again and attacks the spectrum of the solution even faster, $\nu \nabla^6 u = -\nu k^6 \exp(i k x)$, and so on.
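To make the sign bookkeeping concrete, here is a minimal sketch that evaluates the decay rate of a single Fourier mode under second-, fourth- and sixth-order linear dissipation. The function name `damping_rate` and the sample values of `nu` and `k` are illustrative assumptions, not anything taken from a particular code.

```python
import numpy as np

def damping_rate(k, nu, order):
    """Decay rate of exp(i k x) under a dissipative operator of even `order`.

    The bare operator d^order/dx^order has Fourier symbol (i k)**order, whose
    sign alternates (-, +, -) for order = 2, 4, 6. Multiplying by
    (-1)**(order // 2 + 1) fixes the sign so that every order dissipates.
    """
    if order % 2 != 0:
        raise ValueError("linear dissipation needs an even order")
    symbol = (1j * k) ** order          # real-valued for even order
    sign = (-1) ** (order // 2 + 1)     # +1, -1, +1 for order 2, 4, 6
    return -(sign * nu * symbol).real   # positive rate, equal to nu * k**order

# Illustrative wavenumbers: the higher the order, the more the damping is
# concentrated at large k.
k = np.array([1.0, 2.0, 4.0, 8.0])
for order in (2, 4, 6):
    print(order, damping_rate(k, nu=1.0e-3, order=order))
```

For a fixed coefficient the rates at `k = 8` differ by factors of 64 between successive orders, which is the sense in which the higher-order operators attack the short wavelengths harder while touching the long wavelengths less.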

Taking the linear approach to hyperviscosity is simple, but has a number of drawbacks from a practical point of view. First, the linear hyperviscosity operator becomes quite broad in its extent as the order of the method increases. The method is also still predicated on a relatively well-resolved numerical solution and does not react well to discontinuous solutions. As such, linear hyperviscosity is not entirely robust for general flows. It is better as an additional dissipation mechanism alongside more industrial-strength methods, and for studies of a distinctly research flavor. Fortunately there is a class of methods that removes most of these difficulties: nonlinear hyperviscosity. Nonlinear is almost always better, or so it seems; not easier, but better.

Linearity breeds contempt

– Peter Lax

The first nonlinear viscosity came about from Prandtl’s mixing length theory and still forms the foundation of most practical turbulence modeling today. For numerical work the original shock viscosity derived by Richtmyer is the simplest hyperviscosity possible, $\nabla \cdot \left( C \ell^2 \left| \nabla u \right| \nabla u \right)$. Here $\ell$ is a relevant length scale for the viscosity. In purely numerical work, $\ell = \Delta x$. It provides what linear hyperviscosity cannot, stability and robustness, taking flows that would otherwise be computed with pervasive instability and making them stable and practically useful. It provides the fundamental foundation for shock capturing and the ability to compute discontinuous flows on grids. In many respects the entire CFD field is grounded upon this method. The notable aspect of the method is the dependence of the dissipation on the product of the coefficient $C \ell^2$ and the absolute value of the gradient of the solution.
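As a rough illustration of a gradient-dependent viscosity of this kind, the sketch below builds a face-centered coefficient proportional to the grid spacing squared times the absolute gradient and applies one explicit, conservative diffusion step on a periodic one-dimensional grid. The function names, the constant `c`, the square-wave data and the time step choice are all assumptions made for the example; it is not the original Von Neumann and Richtmyer scheme, which acts as a pressure-like term in Lagrangian hydrodynamics.

```python
import numpy as np

def artificial_viscosity(u, dx, c=1.0):
    """Face-centered coefficient nu = c * dx**2 * |du/dx| on a periodic grid."""
    du = np.roll(u, -1) - u             # jump across face j+1/2
    return c * dx * np.abs(du)          # dx**2 * |du| / dx

def viscous_update(u, dx, dt, c=1.0):
    """One explicit step of u_t = d/dx(nu du/dx) in conservative form."""
    du = np.roll(u, -1) - u
    flux = artificial_viscosity(u, dx, c) * du / dx   # nu * du/dx at face j+1/2
    return u + dt / dx * (flux - np.roll(flux, 1))

# Square wave: the coefficient switches on only at the two discontinuities.
n = 100
dx = 1.0 / n
x = (np.arange(n) + 0.5) * dx
u = np.where((x > 0.25) & (x < 0.75), 1.0, 0.0)

nu = artificial_viscosity(u, dx)
dt = 0.4 * dx**2 / nu.max()             # inside the explicit diffusion limit
u_new = viscous_update(u, dx, dt)

print(np.count_nonzero(nu), u_new.min(), u_new.max())
```

The coefficient vanishes wherever the data are flat, so the dissipation is spent only where the solution is steep, and the flux form keeps the update conservative.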

Looking at the functional form of the artificial viscosity, one sees that it is very much like the Prandtl mixing length model of turbulence. The simplest model used for large eddy simulation (LES) is the Smagorinsky model, developed first by Joseph Smagorinsky and used in the first three-dimensional model for global circulation. This model is significant as the first LES and as a precursor of the modern codes used to predict climate change. The LES subgrid model is really nothing more than Richtmyer’s (and von Neumann’s) artificial viscosity and is used to stabilize the calculation against the instability that invariably creeps in with enough simulation time. The suggestion to do this was made by Jule Charney upon seeing early weather simulations. The significance of the first useful numerical methods for capturing shock waves and for computing turbulence being one and the same is rarely commented upon. I believe this connection is important and profound. Equally valid arguments can be made that the form of nonlinear dissipation is fated by the dimensional form of the governing equations and the resulting dimensional analysis.

Before I derive a general form for the nonlinear hyperviscosity, I should discuss a little bit about another shortcoming of the linear hyperviscosity. In its simplest form the linear operator for classical linear viscosity produces a positive-definite operator. Its application as a numerical solution will keep positive quantities positive. This is actually a form of strong nonlinear stability. The solutions will satisfy discrete forms for the second law of thermodynamics, and provide so-called “entropy solutions”. In other words the solutions are guaranteed to be physically relevant.

This isn’t generally considered important for viscosity, but in the context of more complex systems of equations it may have importance. One of the reasons for bringing this up is that, generally speaking, linear hyperviscosity will not have this property, but we can build nonlinear hyperviscosity that will preserve it. At some level this probably explains the utility of nonlinear hyperviscosity for shock capturing. In nonlinear hyperviscosity we have immense freedom in designing the viscosity as long as we keep it positive. We then have a positive viscosity multiplying a positive definite operator, and this provides the deep form of stability we want along with a connection that guarantees physically relevant solutions.
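The positivity argument can be checked on a toy problem. The sketch below, under the assumption of simple explicit centered differences on a periodic grid, applies one step of second-order viscosity and one step of linear fourth-order hyperviscosity to a non-negative spike. The function names and the values of `r` are illustrative choices kept inside the usual explicit stability limits.

```python
import numpy as np

def laplacian_step(u, r):
    """Explicit step of u_t = nu u_xx with r = nu * dt / dx**2 (periodic)."""
    return u + r * (np.roll(u, -1) - 2.0 * u + np.roll(u, 1))

def bilaplacian_step(u, r):
    """Explicit step of u_t = -nu u_xxxx with r = nu * dt / dx**4 (periodic)."""
    d4 = (np.roll(u, -2) - 4.0 * np.roll(u, -1) + 6.0 * u
          - 4.0 * np.roll(u, 1) + np.roll(u, 2))
    return u - r * d4

u0 = np.zeros(32)
u0[16] = 1.0                        # non-negative spike

u2 = laplacian_step(u0, r=0.25)     # stencil weights (0.25, 0.5, 0.25), all >= 0
u4 = bilaplacian_step(u0, r=0.05)   # stencil has weight -0.05 two cells away

print(u2.min(), u4.min())
```

The second-order update is a convex average of neighboring values, so it cannot create a new minimum. The fourth-order stencil carries negative weights, so it undershoots next to the spike even though it conserves the total and damps the high wavenumbers.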

With the basic principles in hand we can go wild and derive forms for the hyperviscosity that are well-suited to whatever we are doing. If we have a method with high-order accuracy, we can derive a hyperviscosity to stabilize the method that will not intrude on the accuracy of the method. For example, let’s just say we have a fourth-order accurate method, so we want a viscosity with at least a fifth-order operator; on dimensional grounds one candidate is $\nabla \cdot \left( C \ell^5 \left| \nabla^4 u \right| \nabla u \right)$. If one wanted better high-frequency damping a different form would work, like $-\nabla^2 \left( C \ell^5 \left| \nabla^2 u \right| \nabla^2 u \right)$. To finish the generalization of the idea consider that you have an eighth-order method; now a ninth- or tenth-order viscosity would work, for example, $\nabla \cdot \left( C \ell^9 \left| \nabla^8 u \right| \nabla u \right)$. The point is that one can exercise immense flexibility in deriving a useful method.
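One way to see that such a viscosity stays out of the way of a high-order method is to measure how fast its coefficient shrinks under grid refinement for smooth data. The sketch below assumes a coefficient of the form `c * dx**(n+1) * |d^n u / dx^n|`, which is a choice made here on dimensional grounds rather than a form fixed by the text, and the function names are hypothetical.

```python
import numpy as np

def hyperviscosity_coefficient(u, dx, n, c=1.0):
    """nu = c * dx**(n+1) * |d^n u / dx^n| on a periodic grid (assumed form)."""
    d = u.copy()
    for _ in range(n):
        d = np.roll(d, -1) - d          # one undivided forward difference
    return c * dx * np.abs(d)           # dx**(n+1) * |d| / dx**n

def peak_coefficient(cells, n):
    """Largest coefficient for one period of a sine wave on `cells` cells."""
    x = np.arange(cells) / cells
    u = np.sin(2.0 * np.pi * x)
    return hyperviscosity_coefficient(u, 1.0 / cells, n).max()

# Halving dx with n = 4 should shrink the coefficient by roughly 2**5.
ratio = peak_coefficient(64, n=4) / peak_coefficient(128, n=4)
print(ratio)
```

For smooth data the coefficient falls off like the fifth power of the grid spacing when `n = 4`, which is below the truncation error of a fourth-order scheme. At a discontinuity the undivided differences stay of order one, so the coefficient only falls off like `dx` and the viscosity remains strong where it is needed.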

I’ll finish by making a brief observation about how to apply these ideas to systems of conservation laws, $\partial_t U + \nabla \cdot F(U) = 0$. This system of equations will have characteristic speeds, $\lambda$, determined by the eigen-analysis of the flux Jacobian, $A = \partial F / \partial U$. A reasonable way to think about hyperviscosity would be to write the nonlinear version as $\nabla \cdot \left( C \ell^{n+1} \left| \nabla^n \lambda \right| \nabla U \right)$, where $n$ is the number of derivatives to take. A second approach that would work with Godunov-type methods would compute the absolute value of the jump in the characteristic speeds at cell interfaces, where the Riemann problem is solved, to set the magnitude of the viscous coefficient. This jump is of the order of the approximation, and would multiply the cell-centered jump in the variables, $C \left| \lambda_R - \lambda_L \right|_{j+1/2} \left( U_{j+1} - U_j \right)$. This would guarantee proper entropy production through the hyperviscous flux that would augment the flux computed via the Riemann solver. The hyperviscosity would not impact the formal accuracy of the method.
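A minimal sketch of the interface-jump idea for the scalar Burgers equation, where the characteristic speed is simply `u`, might look like the following. The unlimited central-slope reconstruction, the constant `c` and the name `hyperviscous_flux` are assumptions made for illustration; a real Godunov-type code would use its own limited reconstruction and add this term to the flux returned by its Riemann solver.

```python
import numpy as np

def hyperviscous_flux(u, c=0.5):
    """Dissipative flux at faces j+1/2 for Burgers' equation (lambda = u).

    The coefficient is the jump in characteristic speed between the
    reconstructed face states; it multiplies the cell-centered jump.
    """
    slope = 0.5 * (np.roll(u, -1) - np.roll(u, 1))        # central slope, unlimited
    u_left = u + 0.5 * slope                              # state left of face j+1/2
    u_right = np.roll(u, -1) - 0.5 * np.roll(slope, -1)   # state right of face j+1/2
    coeff = c * np.abs(u_right - u_left)                  # |lambda_R - lambda_L|
    return -coeff * (np.roll(u, -1) - u)

# Smooth wave plus a jump on a periodic grid.
x = np.arange(64) / 64
u = np.sin(2.0 * np.pi * x) + np.where(x > 0.5, 1.0, 0.0)

flux = hyperviscous_flux(u)
# Summation by parts: d/dt sum(u**2 / 2) is proportional to sum(flux * du).
energy_rate = np.sum(flux * (np.roll(u, -1) - u))
print(energy_rate)
```

Because the coefficient is non-negative, each face contributes a non-positive amount to the discrete energy rate, so the added flux can only dissipate. In smooth regions the reconstructed face states nearly agree and the coefficient is small, while at the jump it is of order one.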

We can not solve our problems with the same level of thinking that created them

― Albert Einstein

I spent the last two posts railing against the way science works today and its rather dismal reflection in my professional life. I’m taking a week off. It wasn’t that last week was any better; it was actually worse. The rot in the world of science is deep, but the rot is simply part of the larger world of which science is a part. Events last week were even more appalling and pregnant with concerns. Maybe if I can turn away and focus on something positive, it might be better, or simply more tolerable. Soon I have a trip to Washington and into the proverbial belly of the beast; it should be entertaining at the very least.

Till next Friday, keep all your singularities regularized.

Think before you speak. Read before you think.

― Fran Lebowitz

Von Neumann, John, and Robert D. Richtmyer. “A method for the numerical calculation of hydrodynamic shocks.” Journal of Applied Physics 21.3 (1950): 232-237.

Borue, Vadim, and Steven A. Orszag. “Local energy flux and subgrid-scale statistics in three-dimensional turbulence.” Journal of Fluid Mechanics 366 (1998): 1-31.

Cook, Andrew W., and William H. Cabot. “Hyperviscosity for shock-turbulence interactions.” Journal of Computational Physics 203.2 (2005): 379-385.

Smagorinsky, Joseph. “General circulation experiments with the primitive equations: I. The basic experiment.” Monthly Weather Review 91.3 (1963): 99-164.

Sometimes the blog is just an open version of a personal journal. I feel myself torn between wanting to write about some thoroughly nerdy topic that holds my intellectual interest (like hyperviscosity for example), but end up ranting about some aspect of my professional life (like last week). I genuinely felt like the rant from last week would be followed this week by a technical post because things would be better. Was I ever wrong! This week is even more appalling! I’m getting to see the rollout of the new national program reaching for Exascale computers. As deeply problematic as the current NNSA program might be, it is a paragon of technical virtue compared with the broader DOE program. It’s as if we already had a President Trump in the White House to lead our Nation over the brink toward chaos, stupidity and making everything an episode in the World’s scariest reality show. Electing Trump would just make the stupidity obvious; make no mistake, we are already stupid.

I think there are parallels that are worth discussing in depth. Something big is happening and right now it looks like a great unraveling. People are choosing sides and the outcome will determine the future course of our World. On one side we have the forces of conservatism, which want to preserve the status quo through the application of fear to control the populace. This allows control, lack of initiative, deprivation and a herd mentality. The prime directive for the forces on the right is the maintenance of the existing structures of power in society. The forces of liberalization and progress are arrayed on the other side wanting freedom, personal meaning, individuality, diversity, and new societal structure. These forces are colliding on many fronts and the outcome is starting to be violent. The outcome still hangs in the balance.

The Internet is a great liberalizing force, but it also provides a huge amplifier for ignorance, propaganda and the instigation of violence. It is simply a technology and it is not intrinsically good or bad. On the one hand the Internet allows people to connect in ways that were unimaginable mere years ago. It allows access to incredible levels of information. The same thing creates immense fear in society because new social structures are emerging. Some of these structures are criminal or terrorist, some of these are dissidents against the establishment, and some of these are viewed as immoral. The information availability for the general public becomes an overload. This works in favor of the establishment, which benefits from propaganda and ignorance. The result is a distinct tension between knowledge and ignorance, freedom and tyranny hinging upon fear and security. I can’t see who is winning, but signs are not good.

The core of the issue is an unhealthy relationship to risk, fear and failure. Our management is focused upon controlling risk, fear of bad things, and outright avoidance of failure. The result is an implemented culture of caution and compliance manifesting itself as a gulf of leadership. The management becomes about budgets and money while losing complete sight of purpose and direction. The focus on leading ourselves in new directions gets lost completely. The ability to take risks gets destroyed because of fear, above all the outright fear of failure. People are so completely wrapped up in trying to avoid ever fucking up that all the energy behind doing progressive things moving forward is completely sapped. We are so tied to compliance that plans must be followed even when they make no sense at all.

ry small degree. Our managers are human and limited in capacity for complexity and time available to provide focus. If all of the focus is applied to management nothing is left for leadership. The impact is clear, the system is full of management assurance, compliance and surety, yet virtually absent of vision and inspiration. We are bereft of aspirational perspectives with clear goals looking forward. The management focus breeds an incremental approach that too concretely grounds future vision completely on what is possible today. All of this is brewing in a sea of risk aversion and intolerance for failure.

that I work for. It has resulted in the wholesale divestiture of quality because quality no longer matters to success. It is creating a thoroughly awful and untenable situation where truth and reality are completely detached from how we operate. Every time that something of low quality is allowed to be characterized as being high quality, we undermine our culture. Capability to make real progress is completely undermined because progress is extremely difficult and prone to failure and setbacks. It is much easier to simply incrementally move along doing what we are already doing. We know that will work and frankly those managing us don’t know the difference anyway. Doing what we are already doing is simply the status quo and orthogonal to progress.

micromanagement. Each step in micromanagement produces another tax on the time and energy of everyone impacted by the system and diminishes the good that can be done. In essence we are draining our system of energy for creating positive outcomes. The management system is unremittingly negative in its focus, trying to stop stuff from happening rather than enable stuff. It is ultimately a losing battle, which is gutting our ability to produce great things.

management work shouldn’t be done in the abstract. Almost all of the management stuff is a good idea and “good”. It is bad in the sense of what it displaces from the sorts of efforts we have the time and energy to engage in. We all have limits in terms of what we can reasonably achieve. If we spend our energy on good, but low value activities, we do not have the energy to focus on difficult high value activities. A lot of these management activities are good, easy, and time consuming and directly displace lots of hard high value work. The core of our problems is the inability to focus sufficient energy on hard things. Without focus the hard things simply don’t get done. This is where we are today, consumed by easy low value things, and lacking the energy and focus to do anything truly great.

s a general ambiguity regarding the purpose of our Labs, the goals of our science and the importance of the work. None of this is clear. It is the generic implication of the lack of leadership within the specific context of our Labs, or federally supported science. It is probably a direct result of a broader and deeper vacuum of leadership nationally infecting all areas of endeavor. We have no visionary or aspirational goals as a society either.

I’ll write the equation in TeX and show all of you a picture; you can make out a little of my other ink too, a lithium-7 atom and a Rayleigh-Taylor instability (I also have my favorite dog’s paw on my right shoulder and the famous von Kármán vortex street on my forearm). The equation is how I think about the second law of thermodynamics in operation through the application of a vanishing viscosity principle tying the solution of equations to a concept of physical admissibility. In other words I believe in entropy and its power to guide us to solutions that matter in the physical world.

was “play”. In talking about what the concept of play means to me first in the context of childhood then adulthood I had several epiphanies about the health and vitality of our current society and workplaces. Basically, the concept of play is under siege by forces that find it too frivolous to be supported. Societally we have destroyed play as a free wheeling unstructured activity for children, and crushed the freedom to play at work under the banner of accountability. We are poorer and more unhappy as a result and it is yet another manifestation of unremitting fear governing our behaviors.

The greatest realization in the dialog came when I took note of how I used to play at work and all the good that came from it. The times when I have been the most productive, creative and happy with work have all been associated with being allowed to play at work. By play I mean allowed to experiment, test, and create new ideas in an environment allowing for failure and risk (essentially by placing very few constraints and limitations on what I was doing). The key was the creation and commitment to very high level goals and the freedom to pursue these goals in a relatively free way. The key is the pursuit of the broad objectives using methods that are not strongly prescribed a priori.

We end up working extremely hard across everything in society to make sure that bad things don’t ever happen. We put all sorts of measures in place to prevent bad things. We don’t seem to have the capacity to realize that bad things just happen and it’s a fact of life. We spend so much effort trying to manage all the risks that life is just passing us by. This manifests itself with the destructive belief that the government’s job is to protect all of us from bad things (like terrorism). We are willing to give up freedom, accomplishment and productivity to assure a slight increase in safety. Often the risks we are sacrificing so much to diminish are vanishingly small and trivial (like terrorism), yet we are making this trade over and over again. We are allowing ourselves to drown in a sea of safety measures against risks that are inconsequential. The aggregate cost of all of these risk control measures exceeds the value of almost any of the measures. It represents the true threat to our future.

In economic policy it is well known that monopolies are bad. They are bad for everyone except the people who own and control those monopolies (who invest a lot in retaining their power!). They are drags on growth, innovation and progress. They are the essence of the too-big-to-fail problem. In a very real sense the same thing is happening in science. We are being swallowed by monopolistic ideas. We are too invested in a variety of traditional solutions to problems (which solve traditional problems). Innovation, invention and progress are falling victim to this seemingly society-wide trend.

Looking at our soon-to-be, if not already, ancient codes based on ancient technology, I asked how often we built a new code in the old days. Sure as could be, the answer was radically different from today’s world: we built new codes every five to seven years. FIVE TO SEVEN YEARS!!!! Today we are shepherding codes that are at least a quarter of a century old, and nothing new is in sight. We just continue to accrete capability onto these old codes, horribly constrained by sets of decisions increasingly divorced from today’s reality, technology and problems. It is a recipe for failure, but not the good kind of failure; it is the kind of failure that crushes the future slowly and painlessly, like the hardening of the arteries.

Perhaps no greater emblem of our addiction to shortsightedness exists than the crumbling infrastructure. The roads, bridges, electrical grids, airports, sewers, water systems, power plants, … that our core economy depends upon are in horrible shape and no will exists to support them. We can’t even conjure up the vision to create the infrastructure for the new century, and we leave it to privatized interests that will never deliver it. We are setting ourselves up to be permanently behind the rest of the World. We have no pride as a nation, no leadership and no vision of anything different. We just have short-term narcissistic self-interest embodied by the low tax, low service mentality. The same dynamic is happening at work.

undations, we have stale old codes, models, methods and algorithms that ill-serve our potential. The application of too big to fail to our codes is creating a slow-motion failure of epic proportions. The basis for the failure is the loss of innovation and a sense that we are creating the future. Instead we simply curate the past. Our best should be ahead of us and any leadership worth its salt would demand that we work steadfastly to seize greatness. In modeling and simulation the creation of new codes should be an energizing factor creating effective laboratories for innovation, invention and creativity providing new avenues for progress.

more apt description. There is frightfully little investigation or intellectual engagement

Societally, the concept of too big to fail applies to the banking and financial institutions that almost destroyed the World economy eight years ago. We demonstrated that they were both too big to fail and too big and too powerful to change, thus remaining a ticking time bomb. It is only a matter of time before the same issues present in 2007 erupt again and wreak havoc on the World economy. All the evidence needed to energize real change is available, but there is simply too much money to be made, and greed is more powerful than common sense. I realized that our application codes and computers probably properly deserve to be thought of in exactly the same light; they are too big to fail too. This character is slowly and steadily poisoning the environment we live in and any discussion of different intellectual paths is simply forbidden.

As I said, the depth of intellectual ownership of these very codes is diminishing with each passing day. The essential aspects of these codes’ utility and success in our application areas are based on the deep knowledge and intense focus of talented individuals. The talent and skills leading to successful codes are difficult to develop and maintain; the skills must be developed by simultaneously pushing several envelopes: the applications, the models, methods to solve models, and computer science-programming. Today we really only focus on the computer science-programming and simply sort all the other details. Rather than continually reinvest in people and science, we are creating an environment where codes are curated. This state is actually a recipe for catastrophic failure rather than glorious success. The path forward should be adaptive, flexible and agile; instead the path is a lumbering Goliath and viewed as a fait accompli.

humans who understand the basis of models and how these models are solved. When we curate code this key connection is lost. We lose the fundamental nature of the model as our impression of nature, rather than its direct image. We use models as a way of explaining nature rather than a substitute for the natural World. This tie is being systematically undermined by the way we compute today and results in a potentially catastrophic loss of humility. Such losses of humility ultimately produce reactions that are unpleasant and damaging.

We are creating a program that will collide with reality leaving a broken and limping community in its wake. It has a demonstrated track record of not learning from past mistakes, producing a plan for moving ahead that is devoid of innovation and deep thought. Today’s path forward is solely predicated on the idea that we must have the fastest computer rather than the best computing. It is the epitome of bigger and more expensive is better, rather than faster, smarter and more agile. Perhaps more damaging is a perspective that the problems we face are already solved save the availability of more computer power. We will end up eviscerating the very communities of scientists that are the lifeblood of modeling and simulation. The program may be a massive mistake and no one is questioning any of it.

One scene in “The Big Short” stands out as helping define the depth of the dysfunction in the system, the trip to Moody’s, the rating agency for the securities. The securities created by the banks were incredibly unstable and literally junk, yet the ratings agencies kept putting their top seal of approval on them, AAA. When pressed on the matter, the woman representing Moody’s said, “if we don’t give them the rating they want, the guy down the street will, we want the business.” The people watching the system for fraud were completely in bed with the crooks. The reality is that this practice and problem are everywhere. It is true where I’ve worked, it is obviously true in politics, and sports, and education, and… Our whole nation is living in the Golden Age of Bullshit. This serves no purpose but perpetuating the existing structures of power at the cost of progress, quality and ethical behavior.

cause being responsible will just get you replaced by a more corrupt or corruptible irresponsible person. The sorts of peer reviews that we see at work are the same thing. Everything is graded on a curve, and a bad failing grade is never allowed. Failure isn’t allowed; if it comes up, the messenger is “shot” (usually by being dismissed from the peer review). We never confront any problems until they blow up in our faces. This tendency basically allows progress to grind to a proverbial halt. Failures are the fuel for progress and when you disallow failure, you disallow progress.

of bullshit responses that hamper us today. If everyone is an expert then no one is an expert. If people don’t like the information they get, they find someone else who gives them a different answer. As a result science in our society is in decline. Actual science is being hurt, and science’s role in society is similarly degrading. Look at the whole anti-vaxxer movement, which has absolutely no basis, but lots of proponents. We get ideas where any risk at all is unacceptable and we allow progress to grind to a complete halt. Failure, problems and the identification of things that need to be improved create the basis of valuable work. We have structurally destroyed mechanisms for doing this by our addiction to praise and inability to identify and confront problems while they are small.

management provides. Progress often comes from applying new thinking to old problems. One of the key things to do is identify and take on unsolved problems, another name for failures. Making progress is often the antithesis of things that can be managed in today’s common fashion, so progress makes way for management satisfaction. The key is that project management is simply a tool, and a useful one at that. It is not a recipe for success or an alternative to thinking deeply and differently.

Even with a day off, work last week really, completely sucked. I got to spend very little time doing my daily habit of writing in a focused manner. Every day at work was a pain, and the week ended with hosting a group of visitors who are responsible for part of the new exascale computing initiative. Among the visitors were a few people with whom I have history, both good and bad. If you’ve read this blog you know that I’m not a fan of the exascale computing initiative. Despite this, I was expected to be on my best behavior (and I think that I was). It was not the time, nor place to debate the program’s goals or wisdom (to be honest I’m not sure what the right time is). It’s pretty clear to me that there hasn’t been much of any debate or thought put into the whole thing. That’s a discussion for a different day.

I also noted the distinct air of control from the visitors and discussion of their colleagues who run our programs. The programs want to give us very little breathing room to exercise our own judgment on priorities. They want to define and narrow our focus to their priorities. Given the lack of technical prowess from those running things, it’s dangerous. Awful programs like exascale are the direct result of this sort of control and lack of intellectual thought running research. Everything is politically engineered and nothing is really composed of elements that are designed to maximize science. The result is the long-term malaise in research, progress and science we are suffering from. Ultimately, the system we are laboring under will result in less growth and prosperity for us all. It is the inevitable result of basing our decisions on fear and risk avoidance instead of hope, faith and boldness.

Because our program is all about stockpile stewardship the meeting was held in a classified setting. This means no electronics and a requirement that I unplug. It might be a good excuse to get some reading done, but I had to look like I was paying attention all day. So I took copious notes. Not much interesting happened so most of the notes were to self and captured my thoughts, reflections and perspectives. This alone made the entire experience valuable from a personal-professional perspective. I managed to digest a lot of my backlog of thinking that the well-connected World distracts you from constantly. I had some well-structured time with my own thoughts and that’s a really good thing.

Aside from the deeper thoughts I also realize that it pays to think in many different ways even from a mechanical point of view. I try walking each day along with a walking meditation, followed by free association. It ends up being a very effective way to self-brainstorm. I keep a notebook for each day in a cloud app. There is this blog, which allows for freeform prose, but done in an electronic form. Writing things down on paper has subsided a lot, and last week I rediscovered the virtue of that medium, albeit by the nature of the circumstances. For a long time I kept a pad of lined post-it notes in my car since a lot of good ideas would just come to me driving to and from work. It might be good to force myself to use paper alone more often. By the power of cameras and remarkable text recognition the paper can go directly into my electronic notebook anyway.

contempt. Our overlords encourage us to ignore the carnage they are subjecting the world to, but it is there hidden in plain sight. Today we live in a coarse and belligerent culture that threatens to undermine everything good. I’m not talking about the sort of moral decay social conservatives would point to. I’m talking about the fundamental rewards, checks and balances that encourage an environment of selfish and greedy behavior. At the same time these same forces work to undermine every effort to pay attention to larger societal, organizational and social imperatives that collectively make everything better. We act selfish in the service of maintaining the power of others, and avoiding the sort of collective service that raises everyone.

We really don’t have to have our collective act together to compete. It’s all fear and no benefit of accomplishing great things, and we aren’t. We just have the requisite reduction in freedom in response to this fear without any of the virtues. This dismal state of affairs results in a virtual emptying of meaning from work that used to be important. I work at a place where work ought to have value and importance, yet we’ve managed to ruin it.

gment to feel truly empowered at work. All the control and accountability at work is primarily disempowering employees and sucking all the meaning from work. I ought to feel an immense amount of importance to what I do. My management, writ large, is managing to destroy something that ought to be completely easy to achieve. This malaise is something we see nationally as the general sense that your work has little larger meaning is used to crush people’s wills. Instead of empowering people and achieving their best efforts, we see control used to minimize contributions and destroy any attempt toward deeper meaning. This sense is deeply reflected in the current political situation in the World and the broad sweeping anger seen in the populace.

The love affair with corporate governance for science is another aspect of the current milieu that is deeply corrupting science. Our corporate culture is corrupting society as a whole and science is no exception. The greed and bottom-line infatuation pervert and distort value systems and have systematically harshened the cultures of everything they touch. Increasingly, the accepted moral thing to do is make yourself as successful as possible. This includes lying, cheating and stealing if necessary (and if you can get away with it). More corrosively it means losing any view of broader social, societal, organizational or professional responsibility and obligation. This undermines collaboration and free exchange of ideas, which ultimately destroys innovation and discovery.

Accountability has been instituted that allows people to ethically ignore the broader context in favor of narrow focus. They are told that doing this is the “right” thing to do, and basically they should otherwise mind their own business. This attitude extends to society as a whole and we are all poorer for it. We keep ideas to ourselves, and the narrowly defined parochial interests of those who pay us. Instead we should operate as engaged and collaborative stewards of our society, organizations or professions. We have adopted a system that encourages the worst in people rather than the best. We should absolutely expect problems to be caused by this culture of selfishness. The symptoms are everywhere and threaten our society in a myriad of ways. The only portion of society that benefits from our present culture is the rich and powerful overlords. These systems maintain and expand their ability to keep their corrupt and poisonous stranglehold on everyone else.

…software libraries, and the mapping of all of these to modern computing architectures. Because of the demise of Moore’s law we are exploring a myriad of extremely exotic computing approaches. These exotic computer architectures are causing implementations to become similarly exotic. My concern is that the difficulty of simply using these computers sucks “all the oxygen” from the system and leaves precious few resources behind for any other creative endeavor or risk taking. As a result, no real progress is being made in any modeling and simulation activity beyond mere implementation.

…models? Or improved methods? Or improved algorithms? The answers to these questions are not uniform by any means. There are times when the greatest need for modeling and simulation is the capacity of the computing hardware. At other times the models, methods or algorithms are the limiting factors. The question we should answer is: what is the limiting factor today? It is not computing hardware. While we can always use more computing power, it is not limiting us today. I believe we are far more limited by our models of reality, and by the manner in which we create, analyze and assess these models. Despite this lack of need for improved hardware, computing hardware is the focus of our efforts.

…time those people go away, and the logic and rationale for the code’s form and function begin to fade away. We often find that certain things in the code can never be changed lest the code become non-functional. We are left with something that looks and feels like magic. It works, but we don’t know why or understand how.

…the space of knowledge is taken off the table and relegated to being purely curated. This full demonstration provides important feedback from reality to the work being done. Reality is very good at injecting humility into the system when it is most needed. When the knowledge is curated we rapidly remove important and essential aspects of stewardship. We have immense issues associated with the long-term responsibility of caring for a stockpile. New issues arise that are beyond the set of conditions the systems were originally designed for. All of this needs a fertile intellectual environment to be properly stewarded. We are not providing this today. Instead the intellectual environment is being steadily eroded in favor of curating knowledge. In computing, the creation of legacy codes is a key symptom of this environment.

ually undocumented. Many of the tricks are far more obvious and logical to use, and their failure is usually unexplained. Hence the production code works on the basis of tricks of the trade that are often history dependent, and rarely explained, yet utterly essential.

…for it is the standard way things unfold. This happens because creating a new production code is a risky thing. Most of the time the effort fails. The creation of the code requires a good environment that nurtures the effort. If the environment is not biased toward replacing older codes with new codes (i.e., progress and improving technology), the inertia of the status quo will almost invariably win. This inertia is based on the very human tendency to base correctness on what you are already doing. The current answer has far greater propriety than the new answer. In many cases the results of existing codes provide the strongest and clearest mental image of what a phenomenon looks like to people utilizing modeling and simulation, especially in fields where experimental visuals do not exist.
