tl;dr

The HLL family of approximate Riemann solvers is amongst the most popular available. They have an appealing simplicity, performance, and structure powering their use. They apply simple wave diagrams and an adaptive structure for an easy-to-understand formulation. I had an idea years ago during COVID to explore, and it really had no outlet at Sandia. Rather than model each wave as discontinuous, waves could be modeled as having a finite width. This choice could be made based on the nature of the waves. This makes the solver more complex. My hope is that this would improve resolution and behavior near expansions. The idea arose after seeing some systematic shortcomings in (strong) expansion and looking for cures. I will outline the basic ideas here.

“Every problem is a gift – without problems we would not grow.” ― Anthony Robbins

The Origin of the Idea

The focus here stems from the collision of several parts of my work. Back in the 2010s, I discovered some very troubling behavior in hydrocodes. By the time I had investigated it for several months, it shook my confidence in some of the methods. Under extreme conditions, the solutions to the Euler equations fell apart. Many note that the physics falls apart there. The equations do not hold as you approach a vacuum. The continuum falls apart. Nonetheless, this is a limit where the math and codes should still work for robustness, if nothing else. At first, I thought this was the consequence of the nature of the Sandia code, ALEGRA. One of the features of Sandia hydrocodes is the void. This is an empty field variable, nothing at all. Void has no density or pressure. It gets introduced physically during an interaction, such as a spall, where a material breaks. It is also introduced when material is deleted from the problem.

“There is no greater agony than bearing an untold story inside you.” ― Maya Angelou

This material deletion is a truly disgusting procedure. It renders the solution physically and mathematically corrupted. I have pointed out that it renders the modeling inconsistent with the governing equations. If one invokes the Lax Equivalence theorem you can see that the model is rendered non-convergent. To me, this is a fatal flaw and unacceptable. It should be obvious to any well-trained CFD person. The response from Sandia shock physics was somewhere on the spectrum of “meh” to “we don’t give a fuck”. Thus, you can see the level of technical non-excellence I was dealing with. Sigh.

In CTH, it is called “discard”; in ALEGRA, it is called “Cell Doctor”. I joked that ALEGRA should call it, “Cell Undertaker”! Many code users will make excuses for it. They say the deleted material is inconsequential or outside the problem’s focus. This is complete and utter bullshit. This procedure violates the conservation of mass. The conservation of mass is the primary conservation law at the foundation of the entire model. The technical disregard is profound. Violations of energy conservation are bad enough, but mildly defensible. Throwing away mass is simple and pure incompetence. It should not be tolerated. Yet, it is a key feature for code robustness. Other routes for robustness with physical and mathematical legitimacy are ignored. They are too hard. Barf!

So this is what I thought was causing the problems I was seeing. I was wrong. There was something far more insidious happening. The issues are more pervasive and apply to principled methods, too. It is a big challenge awaiting a solution.

I was handed a mysterious problem one of the users of the code was having. They were running a pretty classic problem for the code, an explosive going off and simulating the effects. The explosive was isolated and modeled in a standard way. They were using the common JWL equation of state. In the classical CTH (Sandia) way, as the explosive products blew off and expanded the material was eventually deleted as it expanded. The JWL becomes weird and unphysical as the explosive products expand too much. The deletion of the weird material replaces it with a void (nothing). Pretty soon, the flow accelerates and then actually goes faster and faster, until… The code ends up blowing up and grinding to a halt. The time step crashes due to high velocities. It looks like a semi-classical instability. This is exactly the issue the material deletion is supposed to fix.

I had recently sat down with my Friend Misha from Los Alamos at a conference. Misha is a Lab Fellow and leader in the field. He had issued a challenge on the topic of behavior of void material. He had posed a set of problems to solve. It is an understatement that the behavior of unphysical materials is an issue for hydrocodes. My general view is that the right way to do things is fix the equation of state. Impose physical asymptotic behavior. Definitely conserve masa. Conserving energy is also a good idea. Deleting material is unacceptable and a perversion of science. The codes should act well in extreme expansions (or infinite shocks too) and not blow up.

“The intelligent have plans; the wise have principles.” ― Raheel Farooq

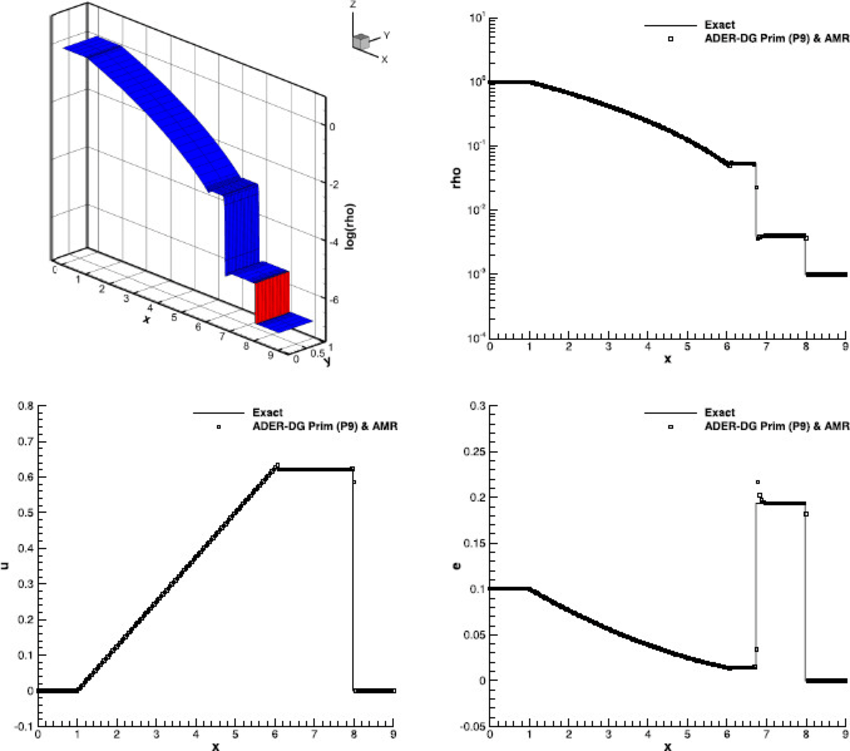

I went to abstract the problem to something classical. I would look at a classic problem of material expanding into void. This is just an extreme shock tube. The LeBlanc shock tube is like this. LeBlanc uses 1000:1 in density jumps, and a billion to one in pressure. It kicks the ass of codes. What I discovered is that as the density jump grows past 1000:1 to much larger, everything goes to shit. LeBlanc is hard, but larger density jumps are seemingly impossible. These calculations do not converge to the right answer with reasonable resolution. They are too difficult in 1-D. Modern codes are all about 3-D, so this is bad. The gauntlet was thrown down.

“The problems are solved, not by giving new information, but by arranging what we have known since long.” ― Ludwig Wittgenstein

Background on HLL

One of the things at ALEGRA is that it does not conserve energy. With the deletion of material, you have a code that doesn’t conserve mass or energy, and I know that that’s wrong. At least, you should. I’d also accepted some of this as a cost of working at Sandia. I created the test problems to approach vacuum step by step towards the ideal. I solved it in ALEGRA, CTH and codes I’d written. I also started solving it with codes that I had written that do conserve energy (and mass of course).

“Mental acuity of any kind comes from solving problems yourself, not from being told how to solve them.” ― Paul Lockhart

ALEGRA can solve the problem in Lagrangian mode. I also have a simple classical Lagrangian code I wrote too (back in X-Division at LANL). A Lagrangian code will work, but falls apart quite quickly. The reason is that the Lagrangian mass mesh is proportional to the mass, and the mass is going to zero. The mesh resolution is also becoming larger and larger. There is no hope of convergence there or resolution of the problem. In an Eulerian code, you do have resolution, and as I discovered, ALEGRA, CTH and all other codes were having problems. When I used my research-grade conservative codes based on things like the piecewise parabolic method (PPM), I found that the problems were not fixed. It had all kinds of issues, and so I had to go back to the drawing board.

My confidence was shaken as I tried the best method I could devise, which is a PPM with a high order approximations for the edge values and an exact Riemann solver. The code did not converge to the right answer, even using extreme resolution. I relayed this to others, and subsequently it has been discovered that if you apply enough mesh cells, on the order of 30,000 cells one does start to get better behavior. This class of problems appears to be a genuine challenge.

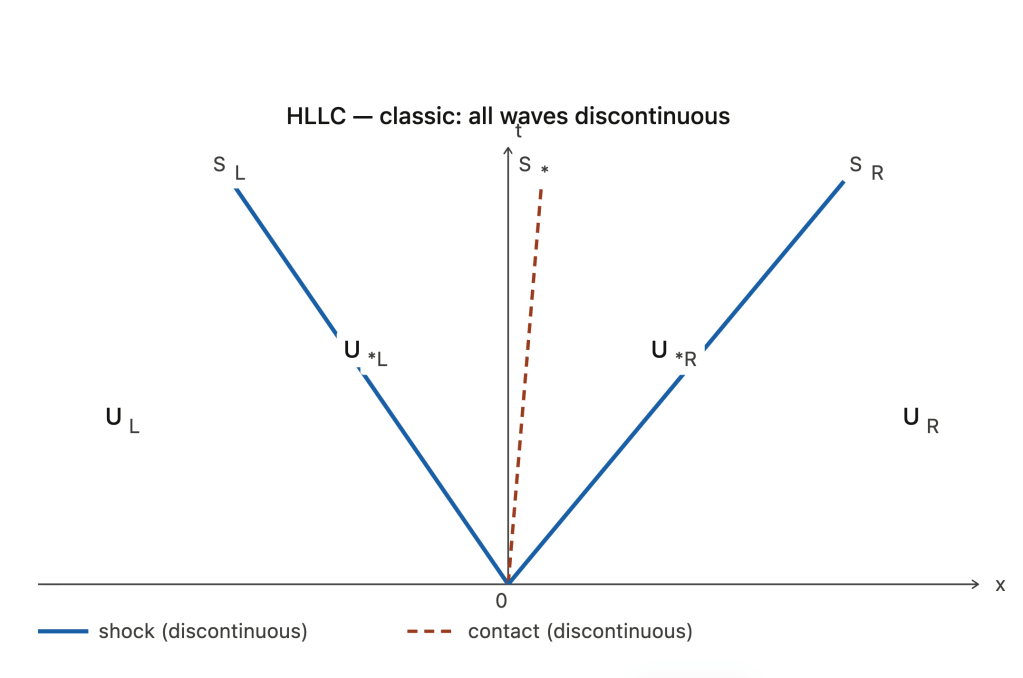

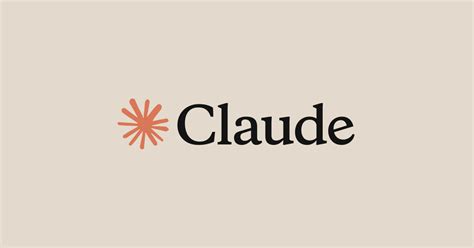

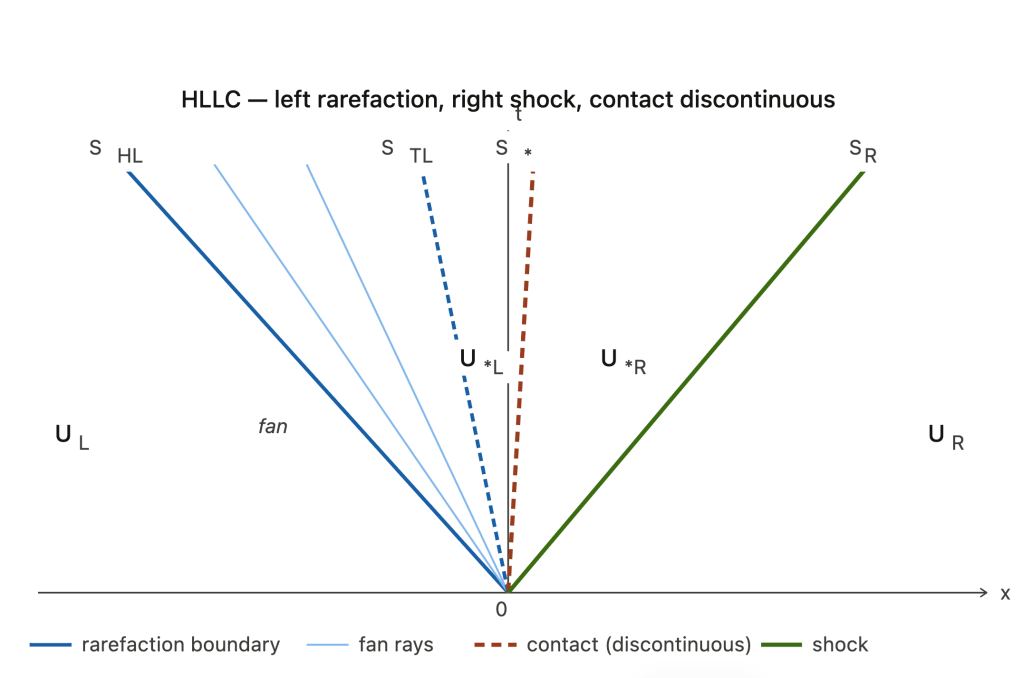

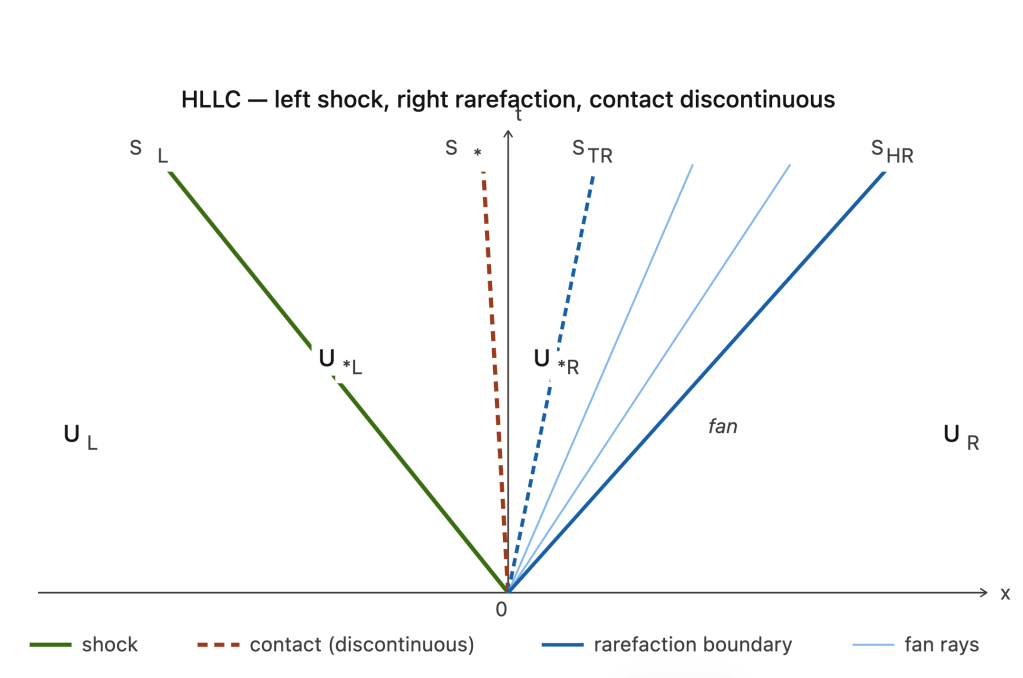

Now, one of the key methods used in this class of codes are approximate Riemann solvers based on the Harten-Lax-van Leer formulation. In the midst of this, I started to look at this formulation more deeply and saw a potentially key issue about making them work for strong expansion waves. This wouldn’t solve this problem that I had discovered, but rather would make them potentially more robust for expansion problems in general. Shocks and contacts are discontinuous waves and the conditions are algebraic. This is a source of simplicity. The solution jumps from one state to another.

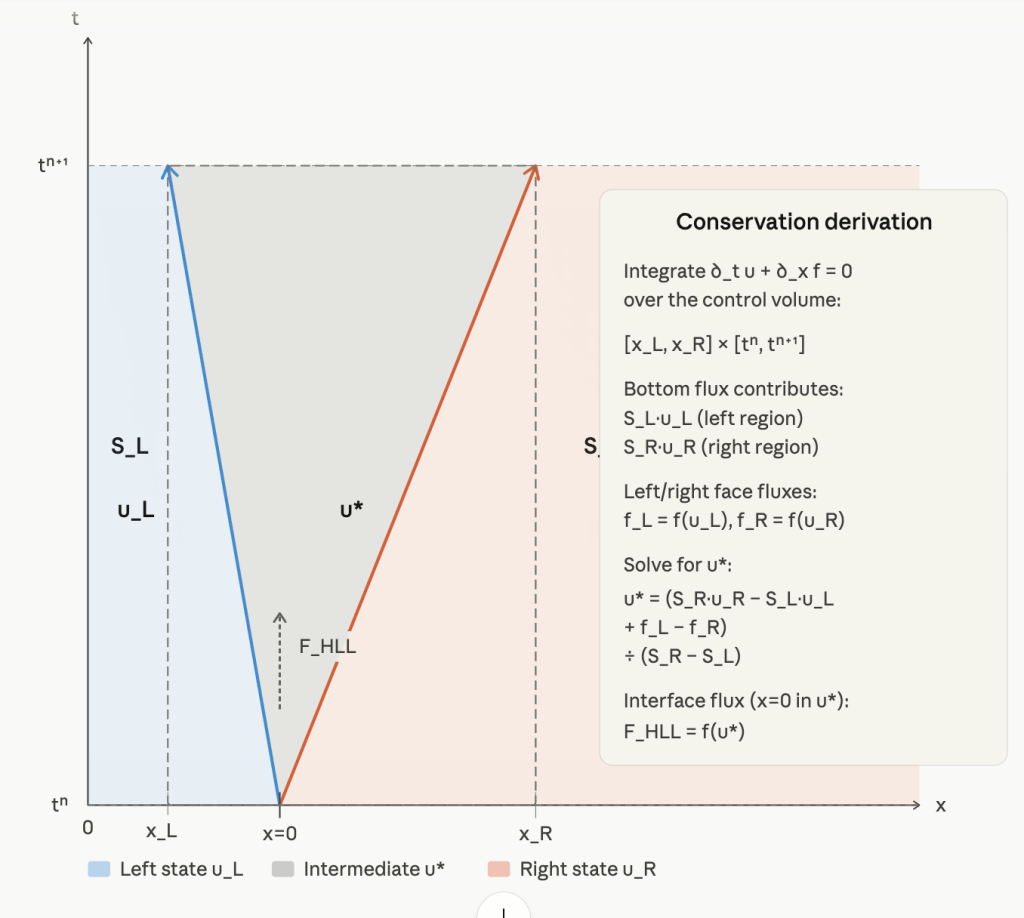

This is the idea I’m exploring here. The gist is that the HLL solvers all are based on simple waves, where you have a discontinuous jump across each wave in the material states. Now, if you look at an expansion, it’s a continuous wave where you have a continuous transition from one state to the other. I started to wonder: could you create an HLL solver with a continuous transition? I think the answer is yes. It doesn’t solve the original problem that I was dealing with, but it’s an interesting thing to explore. It might be a genuine improvement. That’s what I’ll discuss next.

The Details and Changes

“Every solution to every problem is simple. It’s the distance between the two where the mystery lies.” ― Derek Landy

I will go on a slight aside to say that, in posing this problem and trying to draw a diagram of the solution, I found the moment where the Claude AI actually pulled way ahead of its competitors. I had asked ChatGPT and Gemini to draw a wave diagram based on HLL that expressed my ideas. Both of these large language models failed miserably, even with a number of corrections to their initial response. Then I opened up Claude, still in free mode, and asked it the original question. On the very first try, Claude drew the right diagrams. These are given here. This convinced me that I would at least try to buy a pro license, a first for me, and was the single most impressive thing Claude had done for me to that point. Although I was already genuinely interested, as my last post on querying large language models showed. Claude produced exemplary results for fairly technical questions and much better than its competitors.

So let me outline the idea. This is something I worked on during the COVID lockdown, and I exchanged a lot with my friend, Tom, at work. The basic idea is to detect when you have a continuous wave in the remap fan and replace the discontinuous jump with a continuous jump. My sense is that just a linear profile would be a good start. Do the derivation and see how it works. This is something that I hope to actually complete. It’s a very simple idea. The detection of the structure is easy if you look at the wavespeeds. If they converge into the wave, its a shock. At a contact the wavespeed is the same. In an expanding wave the wavespeeds spread the wave. This spread defines the extent of the expanding wave. The math’s a little bit messy, but overall it’s quite doable, and I think would make an interesting study, particularly if you can get rid of things like expansion shocks and improve the behavior in problems like the LeBlanc shock tube.

There are a host of other issues with the original expansion into void problem that I posed that I’ll get to in the next section, but this, in a nutshell, is the gist of the idea. I’ll close just the basic statement of the idea with the comment that this kind of work is completely unsupportable at a place like Sandia. They don’t use codes like this. They don’t fund work like this, and it’s not related to anything I’m doing. Generally speaking, the HLL class of solvers is not used at Sandia at all (maybe in an aerospace code), so I shelved the idea. It went into the dustbin of all the other ideas I had at Sandia that I couldn’t follow because the environment is so non-conducive to research in this area.

“It is an acceptance of being uncomfortable that drives change.” ― Curtis L. Jenkins

Lots of Related Issues

“The possession of knowledge does not kill the sense of wonder and mystery. There is always more mystery.” ― Anais Nin

The response of Sandia to the issues around this mass deletion algorithm should have told me everything I needed to know. They don’t care. Issues that centered around energy conservation would be ignored too. Not just ignored; they would be defended tooth and nail. A Lab that tolerates and even celebrates mass deletion wouldn’t give a fuck about lesser variationsal crimes. The fact that there was a set of choices that led to a lack of convergence with thisa dismissal of convergence is a flashing red warning. For me, a technical concerns such as this, which I view as absolutely essential, is optional to them. It was foolhardy for me to try to stick up for technical correctness, or competence at Sandia. Ultimately, I stuck to my principles and paid for it.

“We are all in the gutter, but some of us are looking at the stars.” ― Oscar Wilde

As it would turn out, looking into the void problem ended up being a gateway to uncovering a whole host of issues! This shows the power of verification in computational science. An exact solution makes it hard to look away. It asks hard questions about correctness,. When that correctness is not found, it provides a source of research opportunities. Unfortunately, this scrambles the careful plans of managers. Thus, today, problems are more convenient to ignore. In this case, I found a host of these that should be explored, but were not. I will expand on these in a later post. I’ll just mention them here in brief.

One of the key aspects of this problem, in the expansion of material into a vacuum, is that the wave speeds that arise are fast. They can be much faster than initial conditions. The wave speed estimates typically used are linearized. These are then used to set things such as time step size and determine levels of dissipation in a calculation. If these are not properly physically bounded, there can be a loss of stability, and there’s also a loss of dissipation. This lack of stability could be related to the code blowup I observed. So, this needed to be explored. It is indeed a substantial problem for codes and methods to tackle. It is essential. At present, it is being ignored.

“Which is the greater sin? To care too much? Or too little?” ― K. Ritz

References

Harten, Amiram, Peter D. Lax, and Bram van Leer. “On upstream differencing and Godunov-type schemes for hyperbolic conservation laws.” SIAM review 25, no. 1 (1983): 35-61.

Einfeldt, Bernd. “On Godunov-type methods for gas dynamics.” SIAM Journal on numerical analysis 25, no. 2 (1988): 294-318.

Toro, Eleuterio F. Riemann solvers and numerical methods for fluid dynamics: a practical introduction. Springer Science & Business Media, 2013.

Toro, Eleuterio F., Michael Spruce, and William Speares. “Restoration of the contact surface in the HLL-Riemann solver.” Shock waves 4, no. 1 (1994): 25-34.

Toro, Eleuterio F. “The HLLC Riemann solver.” Shock waves 29, no. 8 (2019): 1065-1082.

Osher, Stanley, and Fred Solomon. “Upwind difference schemes for hyperbolic systems of conservation laws.” Mathematics of computation 38, no. 158 (1982): 339-374.

Dumbser, Michael, and Eleuterio F. Toro. “A simple extension of the Osher Riemann solver to non-conservative hyperbolic systems.” Journal of Scientific Computing 48, no. 1 (2011): 70-88.

Bill, do you check your LANL email? If so, shoot me an email as we’ve looked at such problems in depth recently. Tariq

I don’t get to the LANL email. Issues with the security software.

Not surprised you’d looked at this. Actually surprised that it hadn’t been looked at sooner by someone. Seems like an obvious thing to examine or think about.

Bill,

I have heard that you are now guest scientist at LANL.

Is it true?

Misha

I have an agreement with T-3. I am not sure how useful it actually is.

Then eventually you will have LANL e-mail, Tariq asking about?

Anyway, if you will be actually visit LANL I would like to have a meeting.

I do have a LANL e-mail, but the cyber security software won’t function on my personal computer. I can’t get it to work thus logging on is impossible. I can definitely visit in the not too distant future though

Ok. Let us communicate using this way. Or send me your private e-mail

by e-mail to shashkov@lanl.gov.

I am still interested in modified equation for Lagrangian and ALE=Lagrange+Remap – we have talked about this “long time”

ago.

Check